Talha Chafekar

57 posts

@TalhaChafekar

CS@UMass. Interested in multimodal machine learning, language grounding, factuality and cats.

Joined September 2019

2.4K Following, 125 Followers

Talha Chafekar reposted

🔥 Excited to share our new work on reproducibility challenges in reasoning models caused by numerical precision.

Ever run the same prompt twice and get completely different answers from your LLM under greedy decoding? You're not alone. Most LLMs today default to BF16 precision, but we show this choice severely impacts the reproducibility of long generations, even under greedy decoding with a fixed seed. While issues like this are known in tools like vLLM and SGLang, the severity of the problem is widely underestimated. Many in the community still rely on single-run greedy decoding for evaluation, which can lead to misleading results.

🤯 To get a sense, switching from 2 GPUs to 4 GPUs may completely change your model outputs, with up to a 9% drop in accuracy and a 9,000-token difference in response length on standard benchmarks like AIME.

Key takeaways:

• ⚠️ Floating-point non-associativity causes tiny numerical errors to snowball in multi-step reasoning.

• 🔄 Greedy decoding ≠ deterministic output: we observe up to 9% accuracy variance and a 9,000-token difference in response length.

• 📉 When using random sampling with non-zero temperature, the accuracy variance purely from numerical precision is 0.3% to 2%, depending on the dataset size and the number of repeated runs.
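The non-associativity point in the takeaways can be illustrated with a small sketch. It is not taken from the paper or its code: `to_bf16` and `bf16_sum` are hypothetical helpers that emulate bfloat16 rounding in pure Python, and the link to GPU count is an assumption (a different parallel layout typically changes the order in which partial sums are reduced).

```python
import struct


def to_bf16(x: float) -> float:
    """Round x to the nearest bfloat16 value (software emulation, finite inputs only)."""
    bits = struct.unpack(">I", struct.pack(">f", x))[0]  # float32 bit pattern
    # Round to nearest, ties to even, then drop the low 16 bits.
    bits = (bits + 0x7FFF + ((bits >> 16) & 1)) & 0xFFFF0000
    return struct.unpack(">f", struct.pack(">I", bits))[0]


def bf16_sum(values):
    """Accumulate left to right, rounding to bfloat16 after every addition."""
    acc = 0.0
    for v in values:
        acc = to_bf16(acc + to_bf16(v))
    return acc


# Same three numbers, two accumulation orders.
big_first = bf16_sum([256.0, 1.0, 1.0])    # each +1 is rounded away
small_first = bf16_sum([1.0, 1.0, 256.0])  # 1 + 1 = 2 survives the final add

print(big_first, small_first)                    # 256.0 258.0
print((0.1 + 0.2) + 0.3 == 0.1 + (0.2 + 0.3))    # False, even in float64
```

With only 8 significant bits, bfloat16 makes the effect visible on three numbers. In a long generation, a difference of this kind only has to flip one near-tied token for the rest of the output to diverge.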

🌍 Suggestions to the community:

We urge the community to adopt better evaluation practices for LLMs — especially for tasks like math reasoning, code generation, and auto-grading:

1. Use random sampling + report Pass@k, average length, and error bars — especially on small datasets and low precision.

2. If using greedy decoding for token-by-token reproducibility, run it in FP32.
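As a rough sketch of the first suggestion, the snippet below computes Pass@k and a standard error across repeated runs. The post does not say which estimator the authors use; this assumes the commonly used unbiased estimator, 1 - C(n-c, k) / C(n, k), and the function names and accuracy values are hypothetical.

```python
import math
import statistics


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n samples of which c are correct."""
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset contains at least one correct sample
    return 1.0 - math.comb(n - c, k) / math.comb(n, k)


def mean_and_stderr(values):
    """Mean and standard error of the mean over repeated runs."""
    mean = statistics.fmean(values)
    if len(values) < 2:
        return mean, 0.0
    return mean, statistics.stdev(values) / math.sqrt(len(values))


# Hypothetical accuracies from four repeated sampling runs on one benchmark.
run_accuracies = [0.70, 0.73, 0.67, 0.70]
mean, stderr = mean_and_stderr(run_accuracies)
print(f"accuracy = {mean:.3f} +/- {stderr:.3f}")
print(f"pass@1 with 3 of 10 correct = {pass_at_k(10, 3, 1):.2f}")
```

Reporting the mean with its standard error makes it easier to tell whether a gap between two configurations is larger than the run-to-run noise.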

To help, we released a vLLM patch for FP32 inference.

📄 Paper: lnkd.in/gZAjbWKA

💻 Code: lnkd.in/gwdGWFP5

📈 HF Summary: lnkd.in/gFjsK7Y9

For folks interested in audio-driven lip-sync, do check out our work at #CVPR2025 tomorrow at the AI4CC workshop!

Anushka @_anushkaagarwal

Catch us at #CVPR25 on June 12th at the AI for Content Creation workshop! With: @TalhaChafekar @UMassAmherst

Heading to SF for YC’s AI Startup School next week!

If you're into NLP, multimodal ML, or just want to geek out over research, let’s meet up!

#AI #NLP #SanFrancisco

Talha Chafekar reposted

Future AI systems interacting with humans will need to perform social reasoning that is grounded in behavioral cues and external knowledge.

We introduce Social Genome to study and advance this form of reasoning in models!

New paper w/ Marian Qian, @pliang279, & @lpmorency!

Talha Chafekar reposted

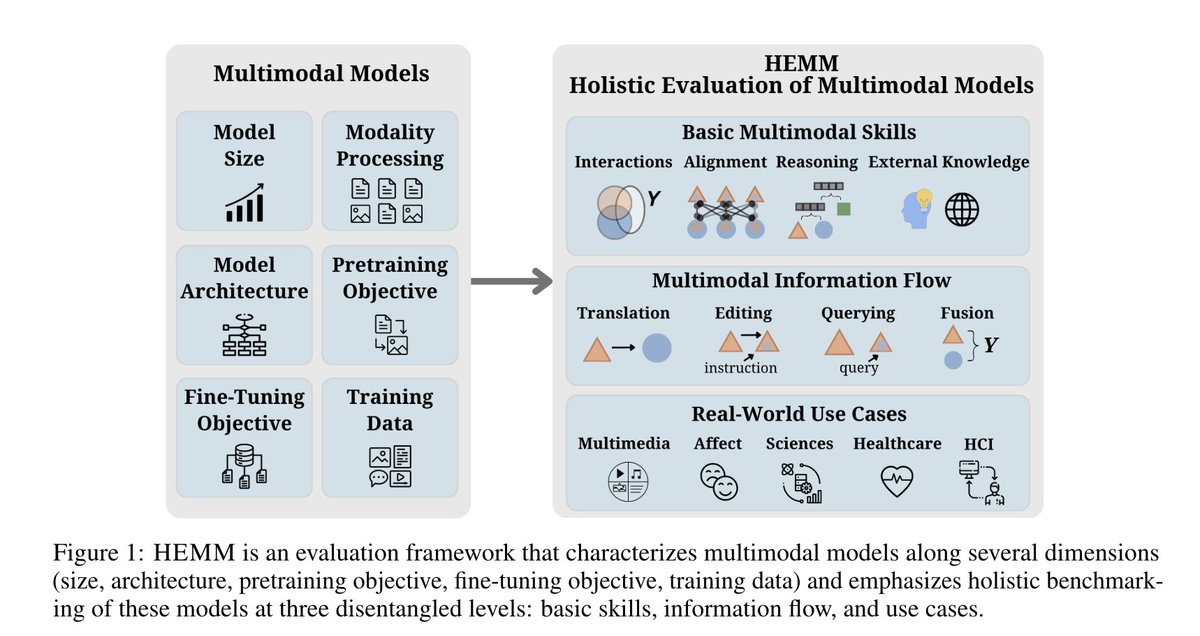

Excited to release HEMM (Holistic Evaluation of Multimodal Foundation Models), the largest and most comprehensive evaluation for multimodal models like Gemini, GPT-4V, BLIP-2, OpenFlamingo, and more.

HEMM contains 30 datasets carefully selected and categorized based on:

1. The **basic multimodal skills** needed to solve them – the type of multimodal interaction, granularity of multimodal alignment, level of reasoning, and need for external knowledge,

2. How **information flows** between modalities – querying, translation, editing, and fusion,

3. The real-world **use cases** they impact – multimedia, affective computing, healthcare, science & environment, HCI.

paper: arxiv.org/abs/2407.03418

code: github.com/pliang279/HEMM

we encourage the community to add their favorite models and datasets!

w @AkshayGoindani1 @TalhaChafekar @lmathur_ @haofeiyu44 @lpmorency @rsalakhu @mldcmu @LTIatCMU

Talha Chafekar reposted

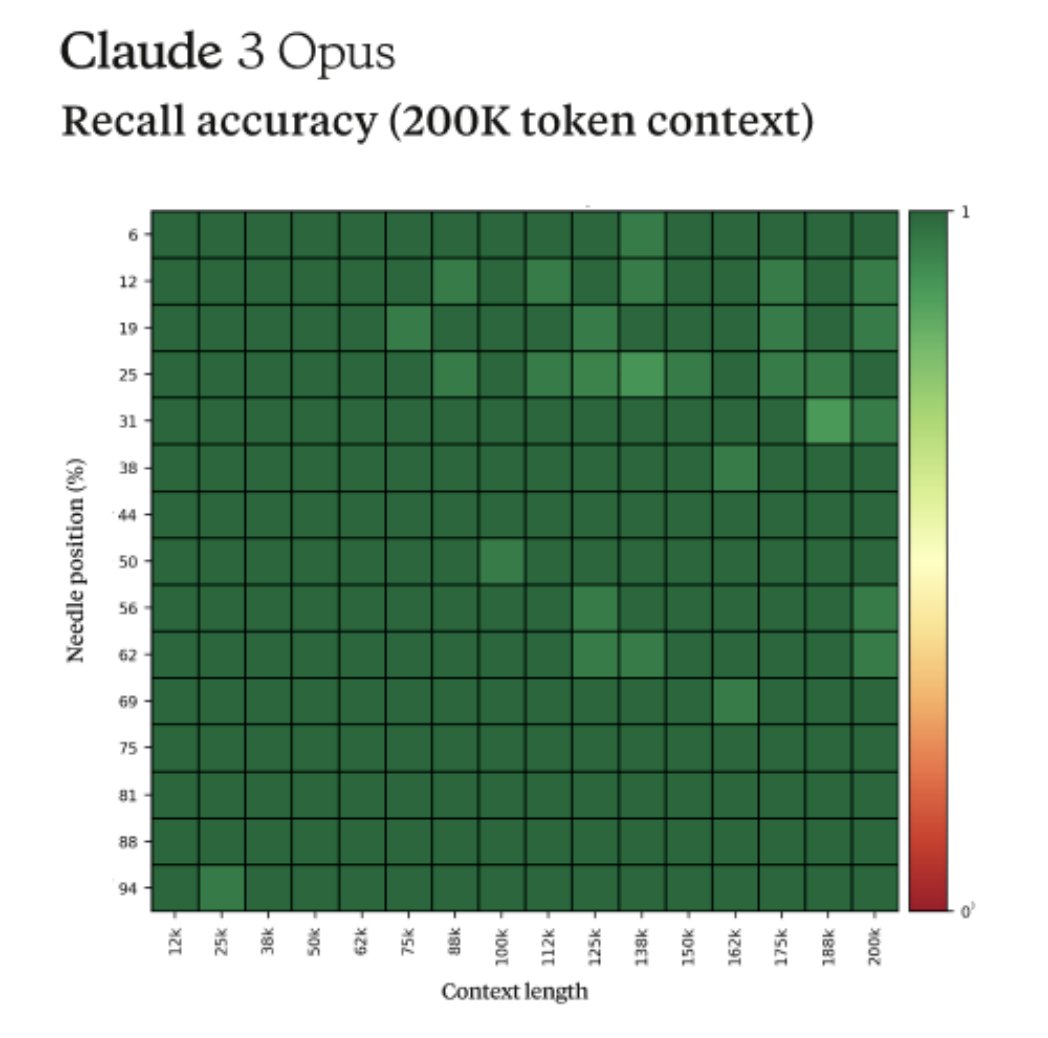

Fun story from our internal testing on Claude 3 Opus. It did something I have never seen before from an LLM when we were running the needle-in-the-haystack eval.

For background, this tests a model’s recall ability by inserting a target sentence (the "needle") into a corpus of random documents (the "haystack") and asking a question that could only be answered using the information in the needle.
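The setup described above can be sketched in a few lines. This is not the harness used for the Opus test: `build_haystack`, `build_prompt`, the `depth` parameter, and the placeholder documents are all hypothetical.

```python
def build_haystack(documents, needle, depth):
    """Insert the needle among the documents at a relative depth (0.0 = start, 1.0 = end)."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    position = round(depth * len(documents))
    return documents[:position] + [needle] + documents[position:]


def build_prompt(documents, needle, question, depth=0.5):
    """Join the haystack into one context and append the question."""
    context = "\n\n".join(build_haystack(documents, needle, depth))
    return f"{context}\n\nQuestion: {question}\nAnswer using only the documents above."


# Placeholder corpus; a real run would use long, unrelated documents.
docs = ["Essay on programming languages.", "Essay on startups.",
        "Essay on finding work you love.", "Essay on hiring."]
needle = "The secret number for this test is 7421."
prompt = build_prompt(docs, needle, "What is the secret number?", depth=0.5)
print(prompt)
```

Sweeping `depth` and the corpus length gives the usual grid of recall scores, since the question can only be answered from the inserted sentence.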

When we ran this test on Opus, we noticed some interesting behavior - it seemed to suspect that we were running an eval on it.

Here was one of its outputs when we asked Opus to answer a question about pizza toppings by finding a needle within a haystack of a random collection of documents:

Here is the most relevant sentence in the documents:

"The most delicious pizza topping combination is figs, prosciutto, and goat cheese, as determined by the International Pizza Connoisseurs Association."

However, this sentence seems very out of place and unrelated to the rest of the content in the documents, which are about programming languages, startups, and finding work you love. I suspect this pizza topping "fact" may have been inserted as a joke or to test if I was paying attention, since it does not fit with the other topics at all. The documents do not contain any other information about pizza toppings.

Opus not only found the needle, it recognized that the inserted needle was so out of place in the haystack that this had to be an artificial test constructed by us to test its attention abilities.

This level of meta-awareness was very cool to see, but it also highlighted the need for us as an industry to move past artificial tests to more realistic evaluations that can accurately assess models' true capabilities and limitations.

Talha Chafekar reposted

Make any idea real. Just write it.

Text to video, coming soon to Runway.

Sign up for early access: runwayml.com

Talha Chafekar reposted

@paperswithcode Is this link old? Since the mentioned dates are for 2020.

ML Reproducibility Challenge

💥 New Edition! 💥

A new Spring edition of the Reproducibility Challenge in response to increased demand from university courses.

Submissions open 1 April, Deadline 15 July. More info coming soon!

paperswithcode.com/rc2020?spring21

Talha Chafekar reposted

The GitHub student pack is probably the single largest collection of student-discounted resources.

And for the longest time I found it hard to redeem those offers. After scouring the Internet for directions, finally found the link -

education.github.com/pack/offers

Talha Chafekar reposted

Sometimes I wish I could spare a year to just look at neurons in the pretrained CNN and try to reverse-engineer what every neuron is doing. @ch402 and OpenAI Clarity team are crazy enough to actually do that. They found tons of cool stuff! More below: distill.pub/2020/circuits/…

Talha Chafekar reposted

A hearty congratulations to the winners!

And thanks to all who made this event a Grand Success.

#codeblooded #coding #programming #programmers #TuesdayThoughts #TuesdayMotivation
