Kuldeep Bhatt


@TeslaJi

Engineering Manager | Architecture | Leadership | Finance | Agnostic | Free Thinker | F1 Fan 🏁| Calling Out Hypocrisy Left to Right. 🗣

India 🇮🇳 makes history!! India has successfully demonstrated a 1,000-km secure quantum communication network, one of the longest in the world. This achievement comes less than two years after the mission's launch in October 2024, far outpacing the original timeline to reach 2,000 km in 8 years 🤯🚀 For more information, see the PIB link: pib.gov.in/PressReleasePa…

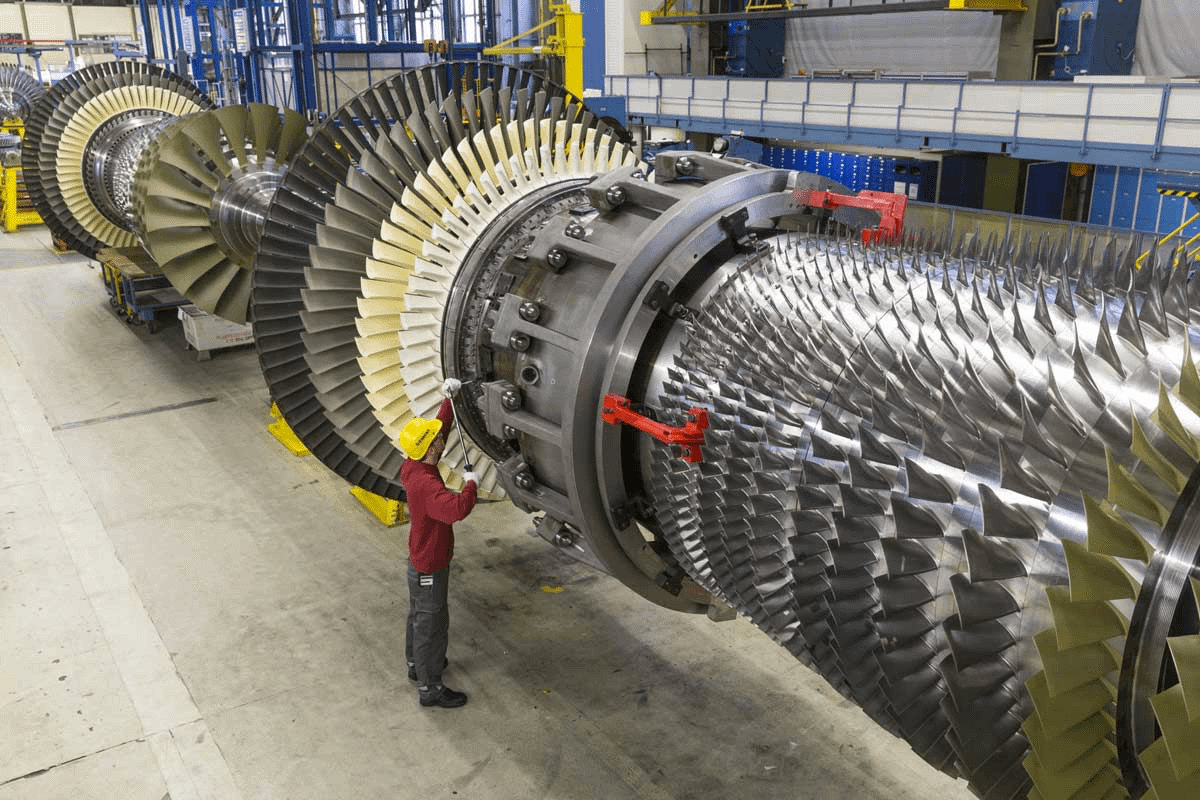

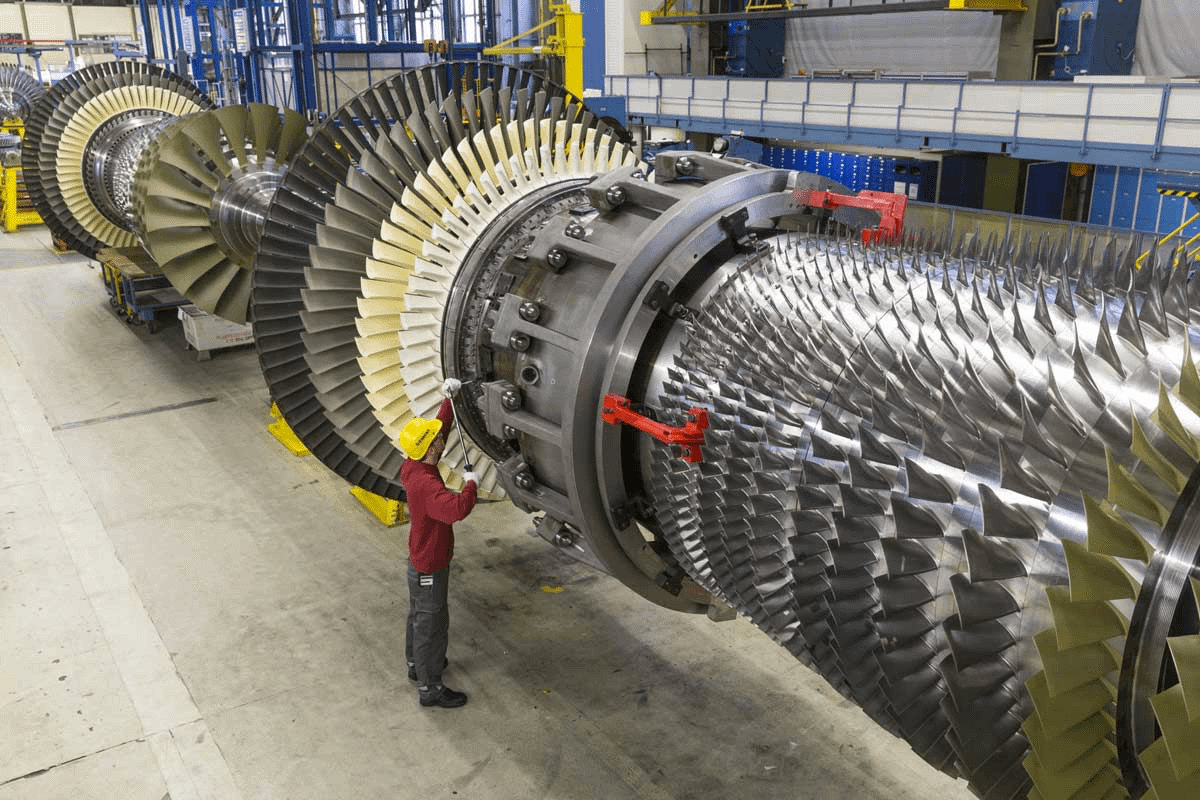

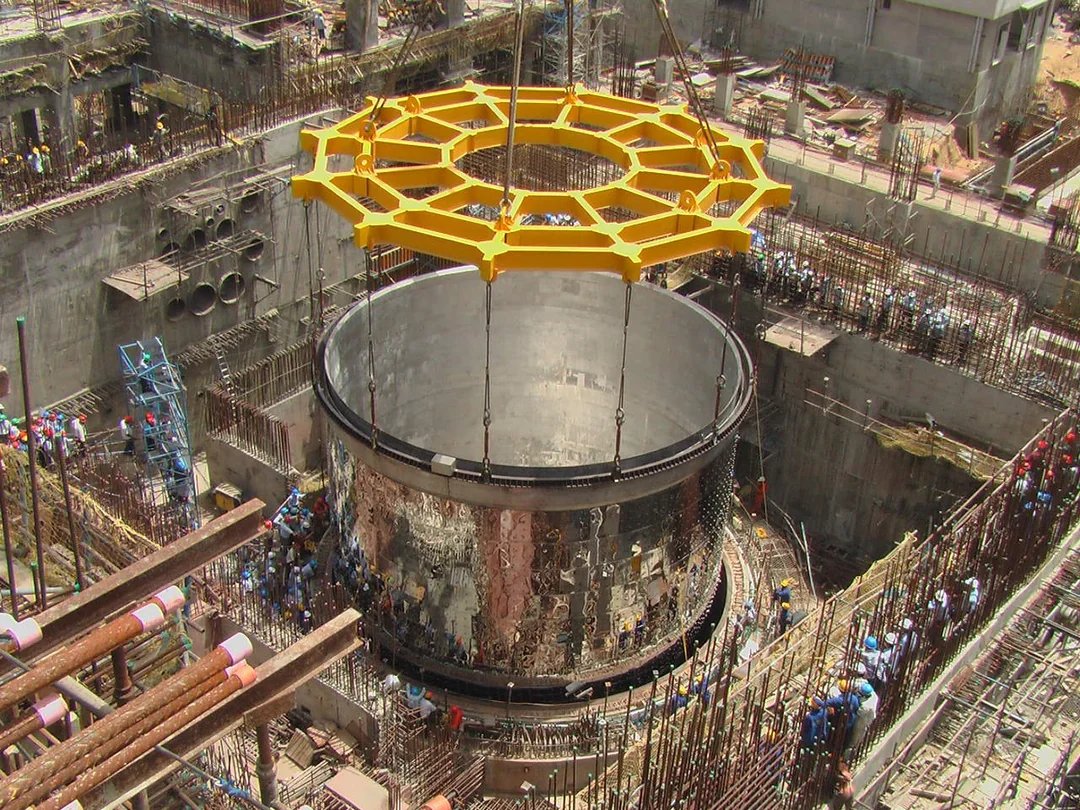

Today, India takes a defining step in its civil nuclear journey, advancing the second stage of its nuclear programme. The indigenously designed and built Prototype Fast Breeder Reactor at Kalpakkam has attained criticality. This advanced reactor, capable of producing more fuel than it consumes, reflects the depth of our scientific capability and the strength of our engineering enterprise. It is a decisive step towards harnessing our vast thorium reserves in the third stage of the programme. A proud moment for India. Congratulations to our scientists and engineers.

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/ and backlinks; the LLM then categorizes the data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and I also use a hotkey to download all the related images locally so that my LLM can easily reference them.

IDE: I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A: Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents, and it reads all the important related data fairly easily at this ~small scale.

Output: Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting: I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools: I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I use directly (in a web UI), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.

Further explorations: As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it is viewable in Obsidian. You rarely ever write or edit the wiki manually; it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.
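For a rough idea of what a "small and naive search engine over the wiki" might look like, here is a minimal Python sketch. It is not the tool described in the post: the function names (`tokenize`, `build_index`, `search`, `load_wiki`), the term-count scoring, and the command-line shape are all assumptions made for illustration. It walks a directory of .md files, counts word occurrences per file, and ranks files by how often the query terms appear.

```python
import re
import sys
from collections import Counter
from pathlib import Path

TOKEN_RE = re.compile(r"[a-z0-9]+")


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return TOKEN_RE.findall(text.lower())


def build_index(docs):
    """Map each document name to a Counter of its tokens.

    `docs` is a dict of {name: text}; this layout is an assumption.
    """
    return {name: Counter(tokenize(text)) for name, text in docs.items()}


def search(index, query, top_k=5):
    """Rank documents by the summed counts of the query terms (naive scoring)."""
    terms = tokenize(query)
    scored = []
    for name, counts in index.items():
        score = sum(counts[term] for term in terms)
        if score > 0:
            scored.append((score, name))
    # Highest score first; ties are broken alphabetically by name.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [(name, score) for score, name in scored[:top_k]]


def load_wiki(root):
    """Read every .md file under `root` into a {relative_path: text} dict."""
    root = Path(root)
    return {
        str(path.relative_to(root)): path.read_text(encoding="utf-8", errors="ignore")
        for path in root.rglob("*.md")
    }


if __name__ == "__main__":
    # Hypothetical CLI shape: python wiki_search.py <wiki_dir> <query words...>
    if len(sys.argv) >= 3:
        wiki_index = build_index(load_wiki(sys.argv[1]))
        for name, score in search(wiki_index, " ".join(sys.argv[2:])):
            print(f"{score}\t{name}")
    else:
        print("usage: wiki_search.py <wiki_dir> <query words...>", file=sys.stderr)
```

Printing one tab-separated result per line keeps the output easy for an LLM agent to read when the script is handed over as a CLI tool. Raw term counts favor long documents, so a real tool would probably want something like TF-IDF or BM25 instead.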

I’m from Gujarat, so I grew up watching Modi ji as CM from 2001. I have seen the Gujarat of power cuts, average roads, and uncertainty… and I have seen the Gujarat of 24x7 electricity, highways, industry, and stability.

My village in Jamnagar used to get water once a week, that too by tanker. After Modi ji became CM, Narmada water reached Jamnagar & Kutch. Today, we get water twice a day. We also got CC roads in our last village, with 24x7 electricity.

This is not politics for me. This is lived reality. And today, as he leads India, I’m witnessing the same transformation at a national level: stronger infrastructure, global recognition, decisive governance.

So when people like Subramanian Swamy and Madhukishwar suddenly throw filthy, baseless allegations on his personal life… it doesn’t feel political to me, it feels personal.

For 25+ years, this man has faced the harshest scrutiny from media, opposition, global pressure. Yet not one proven scandal or scam has ever stood the test of law. And now? No FIR. No case. No evidence. Just social media noise and “sources”.

We are expected to ignore lived experience and believe random allegations? Sorry, I won’t.

You can oppose his politics. You can debate his policies. But this desperate attempt at character assassination only proves one thing... when you can’t defeat a leader’s work, you target his image.

I have seen the change in Gujarat, in India. That’s reality for me 🇮🇳