
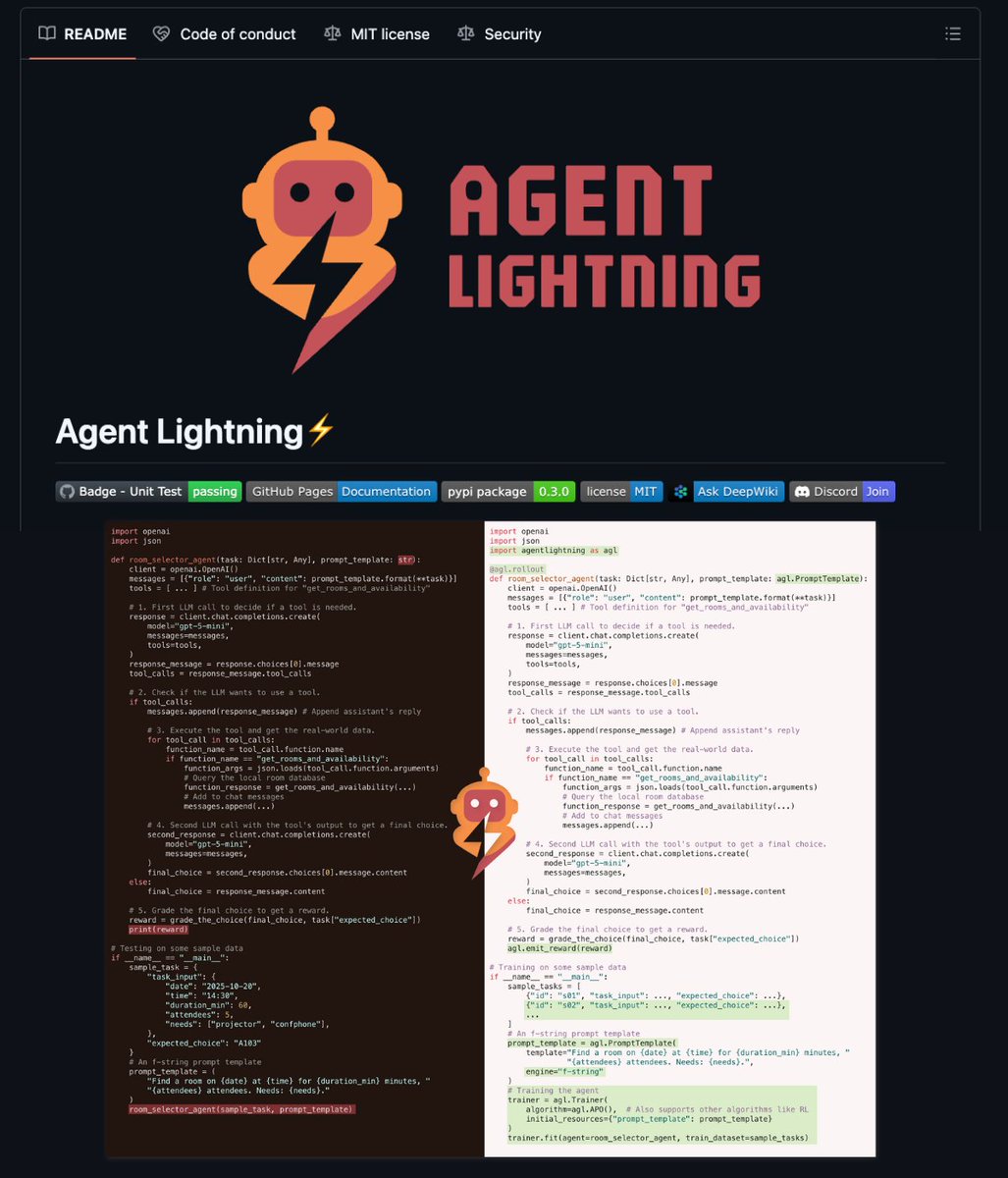

Built an open-source tool for debugging LLM agents:

- Record runs with full traces

- Replay without API calls

- Diff to see what changed

"Agent worked yesterday, broke today" - now you can see exactly why.

Works with PydanticAI, LangGraph, CrewAI, etc.
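Rough idea of record / replay / diff, as a minimal sketch. This is not the tool's actual API: `Recorder`, `Replayer`, `diff_traces`, `run_agent`, and the two fake models are hypothetical names made up for illustration.

```python
class Recorder:
    """Hypothetical wrapper: calls the real model and logs every step."""

    def __init__(self, call_model):
        self.call_model = call_model
        self.trace = []

    def __call__(self, prompt):
        output = self.call_model(prompt)
        self.trace.append({"prompt": prompt, "output": output})
        return output


class Replayer:
    """Hypothetical wrapper: serves recorded outputs, so no API call is made."""

    def __init__(self, trace):
        self._steps = iter(trace)

    def __call__(self, prompt):
        step = next(self._steps)
        if step["prompt"] != prompt:
            raise ValueError(f"prompt diverged from the recording: {prompt!r}")
        return step["output"]


def diff_traces(old, new):
    """Return (index, old_step, new_step) for every step that differs."""
    changes = []
    for i in range(max(len(old), len(new))):
        a = old[i] if i < len(old) else None
        b = new[i] if i < len(new) else None
        if a != b:
            changes.append((i, a, b))
    return changes


def run_agent(call_model):
    """Toy two-step agent: plan, then execute the plan."""
    plan = call_model("plan: book a flight")
    return call_model(f"execute: {plan}")


# Stand-ins for a real LLM API (assumption: the model's behavior changed overnight).
def model_yesterday(prompt):
    return prompt.upper()


def model_today(prompt):
    return prompt.upper() if prompt.startswith("plan") else "ERROR"


yesterday = Recorder(model_yesterday)
run_agent(yesterday)

today = Recorder(model_today)
run_agent(today)

# Replay yesterday's run from the trace alone, then diff the two runs.
replayed = run_agent(Replayer(yesterday.trace))
changes = diff_traces(yesterday.trace, today.trace)
for index, old_step, new_step in changes:
    print(f"step {index}: {old_step['output']!r} -> {new_step['output']!r}")
```

The diff points at the first step where the two runs part ways, which is the "exactly why" part.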

github.com/metawake/work-…
