Toddlogical

3.1K posts

@Todwalog

I'm Questing, don't bother me

California, USA Joined November 2022

1.2K Following 398 Followers

I will never get over the fact that solar panels can produce enough energy to move a 7,000 pound Cybertruck.

In fact, the panels on my roof produce enough energy to propel my Cybertruck over 100 miles a day.

Why doesn’t everyone have a Tesla with Solar Panels? Imagine how much fresher our neighborhoods would smell. Plus so much less noise pollution.

@MarioNawfal Lots of parents I know are terrified of the schools transing their kids behind their backs. It's such a wild time to be a parent and a child

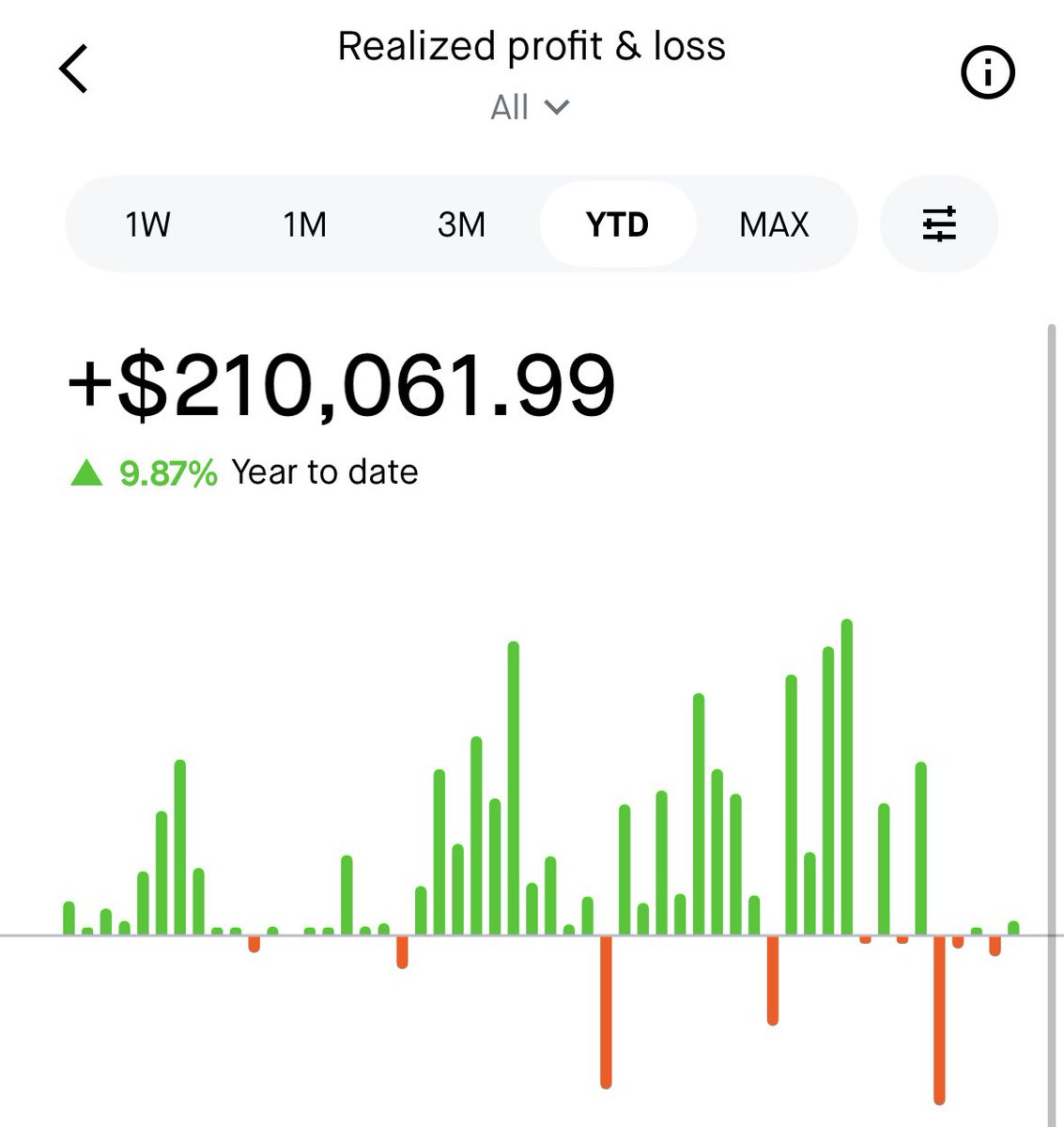

@TrevMcKendrick The problem is when I use those platforms they are not good compared to what Robinhood's experience is. There's lots of...friction. The apps and websites suck if I'm being blunt. Have you opened an account on Fidelity and Robinhood lately and compared the experience?

@MarioNawfal Put your phone down, put it in a pocket, or hand it to someone else. Holding it like that is weird af in this setting.

🇺🇸 FULTON COUNTY COMMISSIONER CALLS DOJ SEARCH WARRANT FOR 2020 BALLOTS "AN ASSAULT ON YOUR VOTE"

The feds showed up at the elections warehouse with a warrant for 2020 ballots, absentee envelopes, and voter data.

Commissioner Mo Ivory ran to the cameras to declare it an attack on democracy.

Four years of insisting there's nothing to hide, and this is the reaction when someone finally comes looking.

Source: @bluestein

@wholemars S and X will always continue to exist in the fleet, just not in production, but it is still a little sad

Just started Tesla Robotaxi drives in Austin with no safety monitor in the car.

Congrats to the @Tesla_AI team!

If you’re interested in solving real-world AI, which is likely to lead to AGI imo, join Tesla AI. Solving real-world AI for Optimus will be 100X harder than cars.

TSLA99T @Tsla99T

I am in a robotaxi without safety monitor

Toddlogical retweeted

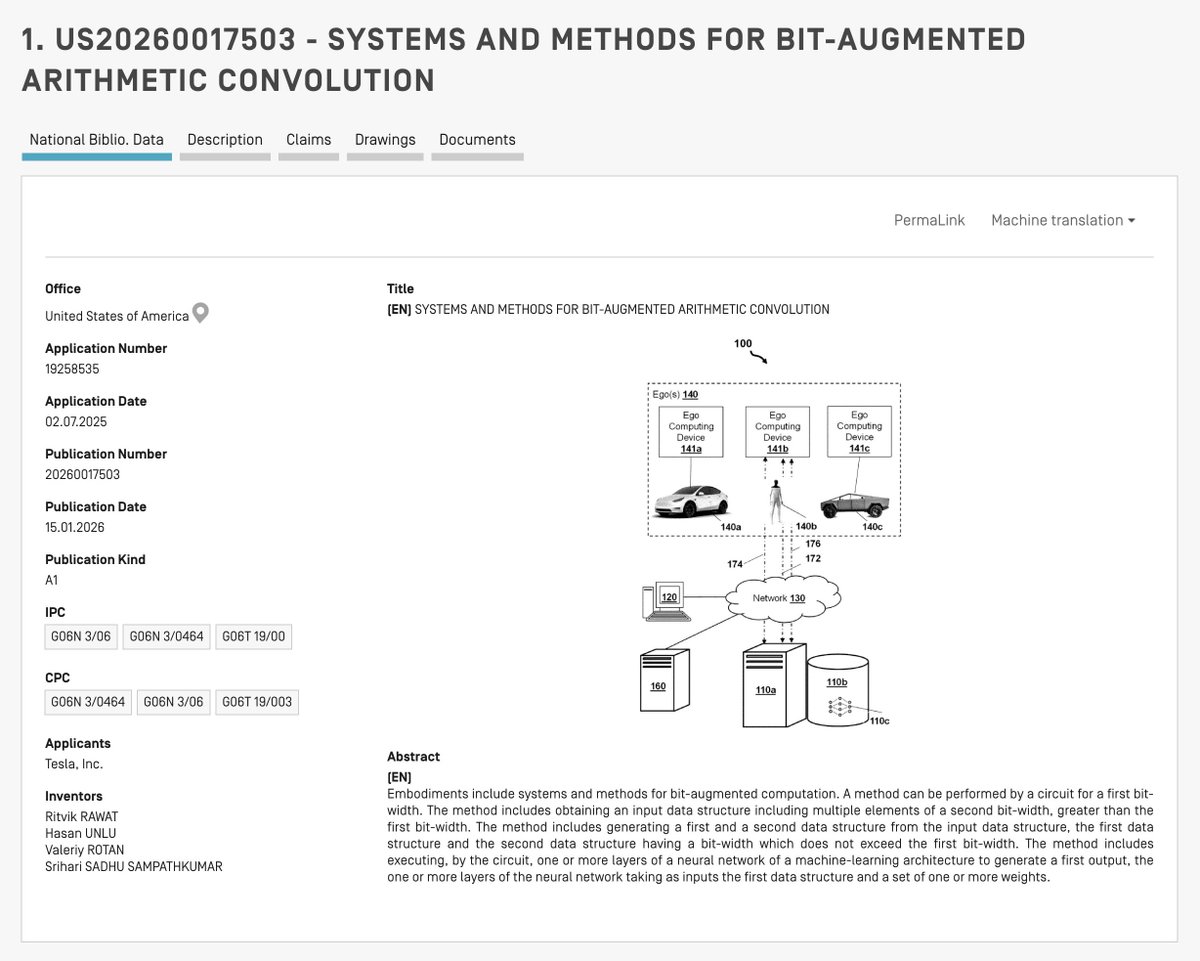

READ IT TO BELIEVE IT 🚨 TESLA'S LATEST PATENT HAS SOLVED THE BIGGEST ANXIETY—THE HARDWARE OBSOLESCENCE—FOR ALL HW3 and HW4 OWNERS 🔥

If you are driving a Hardware 3 vehicle, you might feel like the clock is ticking on your Full Self-Driving capabilities. If you just bought a shiny new AI4 model, you are likely looking over your shoulder at the rumors of the upcoming AI5, wondering if your cutting-edge car is about to become a legacy artifact.

But on January 15, 2026, Tesla quietly published a document that should let every owner breathe a massive sigh of relief. Patent US20260017503A1 is not just a collection of circuit diagrams; it is a technical lifeline.

It reveals a sophisticated method for "BIT-AUGMENTED ARITHMETIC CONVOLUTION" that essentially allows Tesla to push future-generation AI models onto current-generation silicon.

For the Hardware 3 owners feeling abandoned, this is your consolation prize—proof that Tesla is engineering deep-tech workarounds to keep your car in the game. For the AI4 buyers, this is your safety net, ensuring that the neural networks of the future won't break your investment.

This patent confirms that Tesla isn't just relying on new chips to solve autonomy; they are rewriting the rules of math to ensure the car in your driveway today can "think" with the precision of the cars being built tomorrow.

🛑 The problem: The hardware-software mismatch

Designing hardware for autonomous vehicles is a brutal balancing act because the product lifecycle of a car spans decades, while the lifecycle of an AI algorithm is measured in weeks or months. When Tesla designs a chip, like the FSD computer, they have to lock in the hardware architecture years before the car hits the road.

They might fill the chip with 8-bit multipliers because they are fast, small, and power-efficient. In the world of computing, "bits" are just the amount of detail a computer can handle at once. Think of an 8-bit system like a box of 256 crayons. It is great for basic coloring, but it lacks nuance.

However, three years later, the AI team might develop a massive new Transformer model or an Occupancy Network that performs best with 16-bit or 32-bit data. A 16-bit model is like using a box of 65,536 crayons: it can see subtle shades and textures that the 8-bit box simply misses.

Suddenly, you have a mismatch. The new software is too "heavy" and detailed for the old hardware, and you cannot physically upgrade the chips in millions of cars already on the road.

The traditional solution is to "quantize" the model. This basically means taking that high-definition 16-bit picture and crunching it down to a grainy 8-bit version to fit the chip. But "dumbing down" the data degrades performance and accuracy—something you absolutely cannot afford when the car is trying to distinguish a stop sign from a red balloon at 60 mph.
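To make the loss concrete, here is a minimal sketch of the crudest possible quantization: keeping only the top 8 bits of a 16-bit value. The function names are illustrative, and real quantizers use a learned scale and zero-point rather than plain truncation, but the collapse of nearby values works the same way.

```python
def quantize_16_to_8(value):
    """Crude quantization (an assumption for illustration): keep the top 8 bits."""
    return value >> 8


def dequantize_8_to_16(code):
    """Map the 8-bit code back onto the 16-bit scale; the low byte is gone."""
    return code << 8


a, b = 1500, 1300
qa, qb = quantize_16_to_8(a), quantize_16_to_8(b)

# Two different 16-bit values land on the same 8-bit code.
collapsed = qa == qb

# Error introduced for the first value after a round trip.
error_a = a - dequantize_8_to_16(qa)
```

Both inputs end up as the same 8-bit code, so whatever distinguished them in the original signal is no longer visible to the network.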

💡 Tesla's solution: Virtual high-precision arithmetic

Tesla’s engineers have devised a brilliant workaround that essentially uses software logic to hack the physical limitations of the hardware. Instead of dumbing down the data, they break the high-precision data into smaller, manageable chunks that the hardware can understand.

If the hardware can only eat "bite-sized" 8-bit pieces, but the data comes in a "king-sized" 16-bit format, this system splits the 16-bit data into two 8-bit packages. They call this process "deplaning."

The system separates the Most Significant Byte (the big numbers) from the Least Significant Byte (the fine details). Think of this like looking at a price tag of $1,500. The "Most Significant" part is the $1,000 (the big picture), and the "Least Significant" part is the $500 (the specific detail).

These separate "planes" are then fed through the standard, lower-precision hardware independently. Once the hardware processes these chunks, the system cleverly stitches the results back together at the end, applying the necessary mathematical shifts to reconstruct a high-precision output. It is like moving a grand piano through a narrow door by taking the legs off first and reassembling it inside.
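The split-and-stitch idea can be sketched in a few lines of Python. This only illustrates the arithmetic for unsigned 16-bit values, not the patent's hardware path, and the function names are made up for the example.

```python
def split_planes(values):
    """Split unsigned 16-bit integers into a high-byte plane and a low-byte plane."""
    high = [(v >> 8) & 0xFF for v in values]
    low = [v & 0xFF for v in values]
    return high, low


def merge_planes(high, low):
    """Stitch the planes back together: shift the high byte left by 8 bits."""
    return [(h << 8) | l for h, l in zip(high, low)]


samples = [1500, 255, 256, 65535]
high, low = split_planes(samples)
restored = merge_planes(high, low)
```

Every element of each plane fits in 8 bits, and merging the two planes recovers the original values exactly.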

🔪 The genius of deplaning: Weaponizing the MAC array

The genius of this patent lies in how Tesla re-purposes the existing hardware to handle this splitting process. Normally, preparing data—like splitting 16-bit numbers into 8-bit chunks—is a housekeeping task left to the Central Processing Unit (CPU) or a simple Arithmetic Logic Unit (ALU).

The problem is that the Neural Network Accelerator is a beast that eats data faster than a CPU can spoon-feed it. If you ask the CPU to split every pixel in a 4K video feed into high and low bytes, the accelerator will starve waiting for the data.

Tesla’s solution is to perform this "surgery" using the accelerator itself. They realized that a mathematical convolution is just a fancy way of multiplying and adding. "Convolution" is just the math word for sliding a filter over an image to find patterns, like edges or colors.

So, they designed a specific "kernel"—which acts like a digital stencil or cookie cutter—that tricks the convolution engine into acting like a data splitter.

The patent details a specific "deplaning" operation that avoids the memory bottleneck entirely via a "stride-two" hack. Imagine a stream of 16-bit raw data entering the chip. Instead of stopping the flow to cut these numbers in half, Tesla runs a 1x2 convolutional kernel over them with a specific stride of two.

A "stride" is just how many steps the filter skips as it moves across the data. They define a kernel of [1 0] and another of [0 1]. When the hardware convolves the [1 0] kernel with a stride of two, it mathematically multiplies the first byte (the big number) by 1 and the second byte (the detail number) by 0.

The stride of two then forces the window to jump over the next byte, effectively snatching only the "upper" halves of the numbers. A parallel process runs the inverse [0 1] kernel to snatch the "lower" halves.

This effectively turns the massive array of multipliers (usually reserved for finding lane lines or stop signs) into a high-speed data shredder, separating the high-precision signal into two digestible streams without ever slowing down the pipeline.
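A toy version of the stride-two trick, in plain Python. It assumes the 16-bit values arrive as an interleaved byte stream with the high byte first; the actual byte order and memory layout in the patent may differ, and `conv1d_strided` is a hypothetical stand-in for the accelerator's convolution engine.

```python
def conv1d_strided(stream, kernel, stride):
    """1-D convolution in the ML sense (no kernel flip) with a configurable stride."""
    k = len(kernel)
    return [
        sum(w * x for w, x in zip(kernel, stream[i:i + k]))
        for i in range(0, len(stream) - k + 1, stride)
    ]


def to_byte_stream(values):
    """Assumed layout: each 16-bit value becomes [high byte, low byte]."""
    stream = []
    for v in values:
        stream.extend([(v >> 8) & 0xFF, v & 0xFF])
    return stream


values = [1500, 256, 65535]
stream = to_byte_stream(values)

# [1 0] keeps the first byte of every pair, [0 1] keeps the second.
high_plane = conv1d_strided(stream, [1, 0], stride=2)
low_plane = conv1d_strided(stream, [0, 1], stride=2)
```

No branching or bit masking happens in the splitting step itself: the only operations are multiply, add, and a window that advances two bytes at a time, which is exactly what a convolution engine already does.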

🧮 Doing the math: The four-way cross-multiplication

Once the data is split into these lower-precision planes, the system runs the neural network layers on them. This involves convolving weights (the learned parameters of the AI) across the input data. If the weights themselves are also high-precision, they get split up too.

The patent describes a reconstruction method that mirrors the "FOIL" method (First, Outer, Inner, Last) you might remember from high school algebra. To multiply a 16-bit input by a 16-bit weight using only 8-bit hardware, the system actually performs four distinct operations: High-byte x High-byte, High-byte x Low-byte, Low-byte x High-byte, and Low-byte x Low-byte.

Because the hardware just sees 8-bit integers and doesn't know that a "High-byte" is worth 256 times more than a "Low-byte," the patent specifies a post-processing shift.

The results involving a High-byte are conceptually "left-shifted" to restore their magnitude: the two cross terms (High x Low and Low x High) are multiplied by 256, and the High x High term, which carries that factor twice, is multiplied by 65,536. Think of this like adding zeros to the end of a check. A "1" in the millions place is worth way more than a "1" in the ones place, so the system has to add those zeros back in to make the math work.

The system then sums these four partial products in a larger accumulator register to get the final, mathematically exact high-precision answer. This allows the 8-bit silicon to punch way above its weight class, delivering 16-bit accuracy by running four times as many cheap operations rather than one expensive operation, and 32-bit accuracy with more passes still.
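The four-way product can be checked with a short sketch for unsigned operands. `mul8` is a hypothetical stand-in for one 8-bit hardware multiplier; note that the high-by-high term is shifted by 16 bits because it carries the factor of 256 twice, while the two cross terms are shifted by 8.

```python
def mul8(a, b):
    """Stand-in for an 8-bit multiplier: rejects anything wider than a byte."""
    assert 0 <= a <= 0xFF and 0 <= b <= 0xFF
    return a * b


def mul16_via_8bit(x, w):
    """Multiply two unsigned 16-bit values using only 8-bit multiplies."""
    xh, xl = x >> 8, x & 0xFF
    wh, wl = w >> 8, w & 0xFF

    hh = mul8(xh, wh)  # First
    hl = mul8(xh, wl)  # Outer
    lh = mul8(xl, wh)  # Inner
    ll = mul8(xl, wl)  # Last

    # Restore magnitudes, then sum in a wide accumulator.
    return (hh << 16) + ((hl + lh) << 8) + ll


pairs = [(1500, 1300), (65535, 65535), (256, 255), (0, 12345)]
results = [mul16_via_8bit(x, w) for x, w in pairs]
```

Each result matches the ordinary 16-bit product exactly, at the cost of four narrow multiplies instead of one wide one.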

💾 Data storage: The logarithmic packing format

Perhaps the most specific detail in the patent is how they handle the massive numbers generated by this process. When you stitch two 16-bit operations back together, you often end up with a 32-bit result. However, moving 32-bit numbers around the chip consumes expensive memory bandwidth—it clogs the pipes.

Tesla’s solution, described in the later sections of the patent, is a custom data format that compresses this 32-bit value back into a 16-bit container without losing the "meaning" of the data.

They utilize a custom floating-point format that drastically skews the balance between the "exponent" and the "mantissa." In scientific notation (like 1.23 x 10^5), the exponent is the "10^5" part that tells you how big the number is, and the mantissa is the "1.23" part that gives you the precision.

The standard IEEE 754 half-precision format gives 5 bits to the exponent and 10 to the mantissa, but Tesla’s patent proposes flipping that around: a massive 10-bit exponent and a tiny 5-bit mantissa.

This is a deliberate engineering trade-off. In autonomous driving—specifically for "Occupancy Networks" that map 3D space—knowing the scale of an object (is it 5 meters away or 50?) is often more critical than knowing the precise millimeter detail.

By allocating 10 bits to the exponent, the format can represent a gargantuan dynamic range of values, preventing the "overflow" errors that crash standard integer math, while packing the data tight enough to keep the memory bus running cool and fast.
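Here is a rough sketch of what a 16-bit container with 1 sign bit, a 10-bit exponent, and a 5-bit mantissa could look like. The bias of 511, the all-zero encoding for zero, and the round-to-nearest behavior are assumptions made for this example; the post does not give the patent's actual bit layout.

```python
import math

EXP_BITS, MAN_BITS = 10, 5
BIAS = (1 << (EXP_BITS - 1)) - 1  # 511, an assumed bias


def encode(value):
    """Pack a number into 16 bits: 1 sign, 10 exponent, 5 mantissa (hypothetical layout)."""
    if value == 0:
        return 0
    sign = 1 if value < 0 else 0
    m, e = math.frexp(abs(value))  # abs(value) == m * 2**e with 0.5 <= m < 1
    exp = e - 1                    # rewrite as 1.f * 2**exp
    frac = round((m * 2 - 1) * (1 << MAN_BITS))
    if frac == (1 << MAN_BITS):    # rounding carried into the next power of two
        frac, exp = 0, exp + 1
    biased = exp + BIAS
    if not 1 <= biased <= (1 << EXP_BITS) - 1:
        raise OverflowError("value outside the representable range")
    return (sign << 15) | (biased << MAN_BITS) | frac


def decode(bits):
    """Unpack the 16-bit container back into a Python float."""
    if (bits & 0x7FFF) == 0:
        return 0.0
    sign = -1.0 if bits >> 15 else 1.0
    biased = (bits >> MAN_BITS) & ((1 << EXP_BITS) - 1)
    frac = bits & ((1 << MAN_BITS) - 1)
    return sign * (1 + frac / (1 << MAN_BITS)) * 2.0 ** (biased - BIAS)


big = 4_000_000_000  # needs 32 bits as an integer
packed = encode(big)
approx = decode(packed)
relative_error = abs(approx - big) / big
```

The packed value fits in 16 bits and comes back within about 1.6% of the original, which shows the trade: the scale survives, the fine detail does not. By comparison, IEEE half precision tops out at 65,504 and could not hold this number at all.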

➕ Handling the sign: Zero-padding vs sign-extension

The document also touches on the subtle but headache-inducing problem of negative numbers. In binary math, the "sign" of a number (positive or negative) is usually carried by the first (most significant) bit.

When you slice a 16-bit number in half, the lower 8 bits lose their context—the hardware doesn't know if they belong to a positive or negative whole.

The patent details a logic flow for "Sign Extension." If the original number was signed (like a velocity vector, which needs to know if the car is moving forward or backward), the system has to intelligently fill the empty bits of the upper planes with ones or zeros to preserve that negative value.

Conversely, for "natural numbers" like pixel intensity (which are always positive because you can't have negative light), it uses "Zero Padding." This dynamic switching ensures that the system works equally well for image data (unsigned) and motion vectors (signed), making it a universal accelerator for the entire Full Self-Driving stack.
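The difference between the two modes shows up when the same 16 raw bits are reconstructed both ways. This sketch assumes two's-complement storage and that only the high byte carries the sign; the function names are illustrative, not taken from the patent.

```python
def widen_high_byte(byte, signed):
    """Widen a raw 8-bit high byte: sign-extend if signed, zero-pad otherwise."""
    if signed and byte & 0x80:
        return byte - 0x100  # top bit set: fill the upper bits with ones
    return byte              # fill the upper bits with zeros


def reconstruct(raw16, signed):
    """Rebuild a value from its byte planes; the low byte is always unsigned."""
    high, low = (raw16 >> 8) & 0xFF, raw16 & 0xFF
    return widen_high_byte(high, signed) * 256 + low


raw = 0xFED4
as_velocity = reconstruct(raw, signed=True)    # signed data, e.g. a motion vector
as_intensity = reconstruct(raw, signed=False)  # unsigned data, e.g. pixel intensity
```

The same bit pattern decodes to -300 in signed mode and 65236 in unsigned mode, so picking the wrong mode silently corrupts the data rather than raising an error.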

❄️ Efficiency: Why this beats bigger chips

You might wonder why Tesla does not just put bigger, more powerful chips in the cars to begin with. The answer lies in the constraints of an electric vehicle: power and heat.

A 16-bit multiplier takes up significantly more silicon area and consumes much more electricity than an 8-bit multiplier. It creates heat that is hard to get rid of.

By sticking with the smaller, narrower hardware, Tesla keeps the chip size down and the power consumption low, which preserves battery range and simplifies cooling. This patent allows them to have their cake and eat it too; they get the thermal and physical benefits of efficient, low-precision hardware while retaining the ability to run high-precision, cutting-edge AI models whenever the safety case demands it.

🚀 How this patent contributes to Tesla's now and future

This patent is the strategic linchpin that prevents Tesla’s older fleet from becoming obsolete as their AI ambitions grow. Consider the millions of vehicles currently on the road running Hardware 3. These chips were designed in an era when standard Convolutional Neural Networks running 8-bit integer math were the gold standard.

However, the industry has rapidly shifted toward massive "End-to-End" Transformers and complex Occupancy Networks that crave the stability and dynamic range of 16-bit or even 32-bit floating-point precision.

Without the technology described in this document, Tesla would face a brutal fork in the road: either cap the intelligence of their newest models to accommodate the old hardware, or leave millions of customers behind.

This "bit-augmented" breakthrough provides a third option, effectively emulating next-generation precision on current-generation silicon. By unlocking virtual high-precision arithmetic, Tesla unshackles its AI training team.

Engineers training models on massive H100 or Dojo clusters can now push for higher fidelity—using that custom 10-bit exponent to perfectly track distinct objects at 300 meters—without worrying that the car’s computer will fail to execute the math.

It allows for a unified software stack where a single, sophisticated model architecture can be deployed across Hardware 3, Hardware 4, and the upcoming AI5. The system simply adjusts how many "passes" the hardware makes to achieve the required precision.

This not only saves the company billions of dollars by avoiding a logistical nightmare of hardware retrofits but also ensures that the "Full Self-Driving" capability sold years ago can actually be delivered using the cutting-edge transformer models of today and tomorrow.

@WallStreetApes We lied for years but those days are over, trust us now...

FDA Commissioner Dr. Marty Makary says the FDA has intentionally been lying to us for 16 years about fat being bad for you

He says this allowed Big Pharma to sell more drugs

“They suppressed the data for 16 years. Two other large studies failed to show an association. Finally, the study trickled out in the medical literature. Nobody noticed it. Those in the low-fat group had higher rates of heart attacks.

Why? Maybe because when you avoid fat, you have to pound food with carbohydrates and often ultra-processed carbohydrates stripped of fiber and chopped up that function like sugar.

We created a generation of children with low protein, high carbohydrates, sugar addiction, and burdened with ultra-processed foods, and what did we do as a medical field? Drugged them at scale.

Those days are over. We are telling people the truth about food.”

@KanekoaTheGreat @ILA_NewsX Yah and with taxes coming up....quite the timing

@ILA_NewsX Maybe not the best week to push tax-payer funded universal childcare lol

@OldeWorldOrder @CollinRugg And absolutely no one's getting arrested, and the fraud will continue as designed. 🤷🏻‍♂️

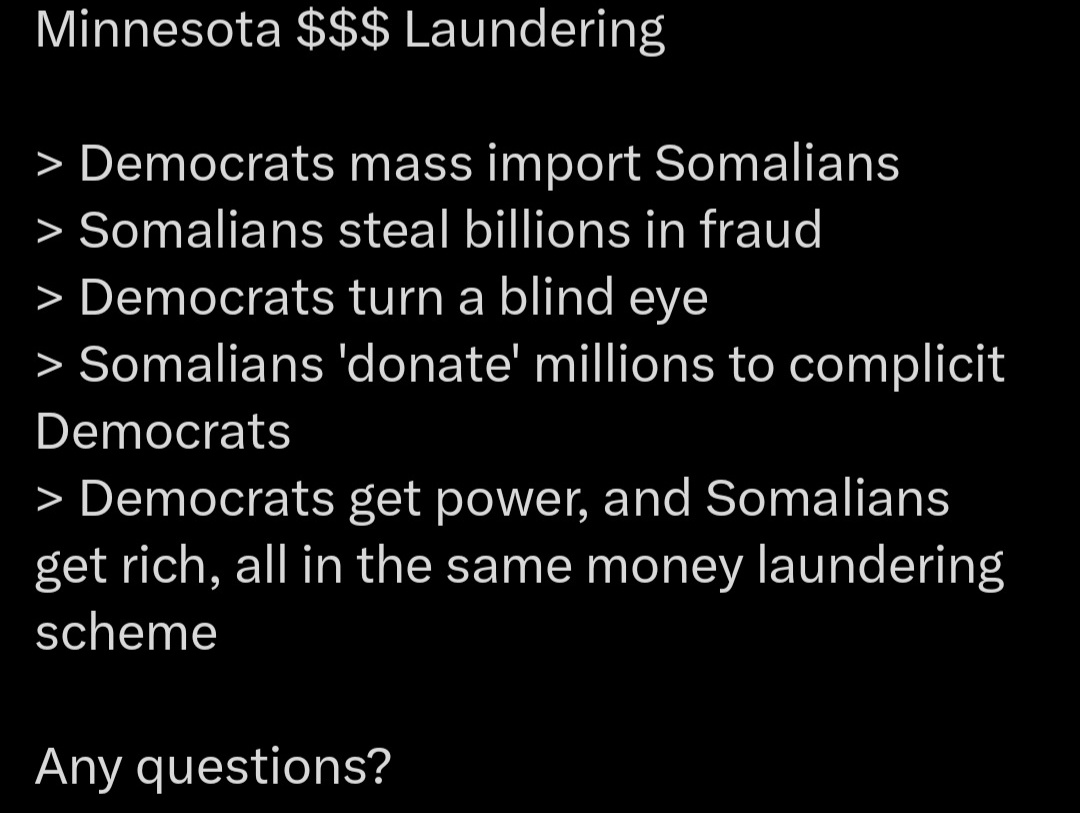

@CollinRugg The fact that the Minnesota government is funneling MILLIONS of dollars into the hands of known fraudsters is proof that state officials are in on the corruption.

There is literally no other way to explain it.

NEW: The Quality Learing Center, formerly known as the Salama Child Care Center, was raided by the FBI in 2015, for allegedly defrauding a state program, according to ABC 7.

At some point following the raid, they rebranded to the Quality Learing Center.

According to ABC 7, the FBI raided the facility "to revoke the child care license of the owner due to alleged safety violations."

The Quality Learing Center, which still receives tons of taxpayer money, claims there is "no fraud going on whatsoever."

In response to the viral video, Department of Children Tikki Brown suggested no one was at the business in the video because it had closed down last week.

On the same day, however, the center allegedly "trucked" in kids to prove that they were in business.

The manager said they had never been closed at any point over the past 8 years.

Video: bbbyjohnnn / tt.

@chamath Might have to cut into the daycare learing fund to make up the loss...

Here, below, is a press release announcing that a person worth $50-100B (depending on SpaceX valuation) has left California.

And this is one person! There are many, many others.

Now, California’s budget can exclude any tax revenue from Peter Thiel going forward. And it will learn the hard way the many others for whom this is now also true.

Why was this asset seizure law, falsely labeled as a “Billionaire Tax”, a smart idea, again? Why didn’t Newsom kill it? Because he wanted more revenue to leak to daycares?

All the billionaires are leaving now so only the millionaires and middle class will be left. Mathematically, it means California tax revenue and future income taxes are about to fall off a cliff.

Are you still sure the new proposed asset seizures won’t reach you?! How will they pay for the fraud, waste and abuse?

Teddy Schleifer @teddyschleifer

NEW: Peter Thiel is opening an office in Miami for his personal investment firm, Thiel Capital. Thiel just put out this press release. Why? Because he is trying to show publicly that he is a taxpayer in Florida, not California. Note that it says the lease was signed Dec. 2025.

@pepemoonboy And if you knew where the funds actually ended up you'd be even more disgusted

@TeslaBoomerMama Sad California government forcing everyone out. So much lost potential 😠

@vladtenev When can we expect children's accounts on hood? I've been waiting but will soon have to bite the bullet and go off hood for one

Robinhood exists to create more owners. We are thrilled to support the next generation by matching government contributions to Trump Accounts for our employees’ children.

Robinhood remains ready to dedicate the technology and capital to making Trump Accounts as robust and intuitive as possible. We appreciate the leadership of the Administration, @InvestAmerica24 @altcap, @MichaelDell and others on this initiative.

Robinhood @RobinhoodApp

Robinhood will match the $1,000 contribution from @USTreasury into Trump Accounts for eligible children of Robinhood employees. Our mission is to democratize finance for all and we’re honored to help extend that mission to the next generation through this initiative.
