Yi (Joshua) Ren

68 posts

@JoshuaRenyi

Postdoc @OATML_Oxford, Prev. Ph.D @UBC_CS, MSc @EdinburghNLP. Working on ML (learning dynamics, continual learning, iterated learning, LLM)

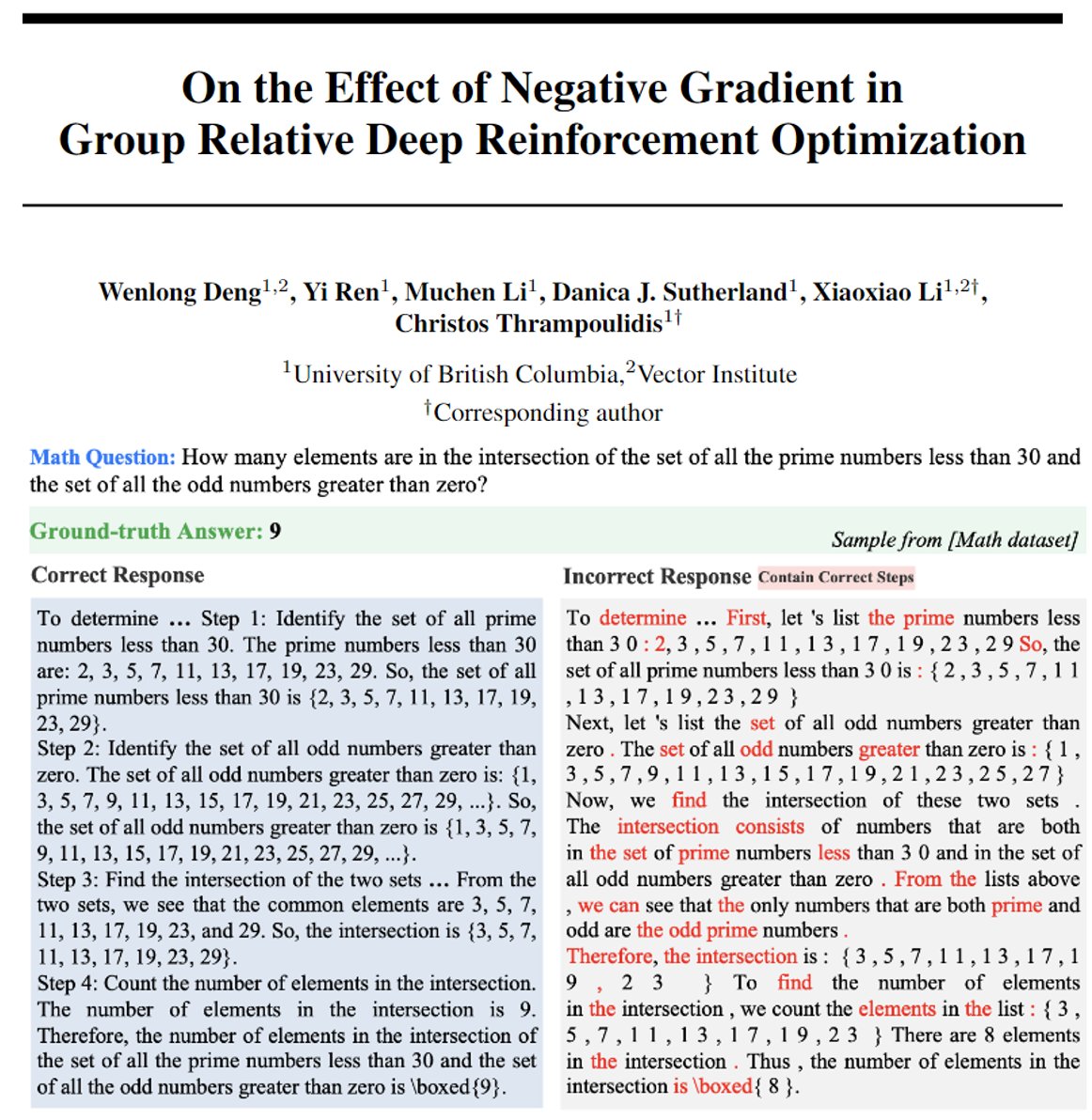

🎉 Our paper on Preference Optimization has been accepted to ICML 2026! We unify entangled & disentangled objectives via an incentive–score decomposition and derive the Disentanglement Band for ideal training dynamics: suppress the loser while preserving the winner. #ICML2026

Excited to share our ICML 2026 Hypothesis Testing Workshop in Seoul, this July! @icmlconf 🎉

This workshop aims to bring together researchers developing modern hypothesis testing methodology and applying it to machine learning problems such as robustness, distribution shift, security, medicine, and LLM evaluation. In other words, if you care about how we make ML claims rigorous, this workshop is for you.

We now have four confirmed speakers: Arthur Gretton @ArthurGretton, Yao Xie @yaoxie21851119, Bo Li @uiuc_aisecure, and Yisong Yue @yisongyue. The organizing team includes Xiuyuan Cheng (Duke), Feng Liu @AlexFengLiu1, Lester Mackey @LesterMackey, Shayak Sen @shayaksen, Danica J. Sutherland @d_j_sutherland, and Nathaniel Xu (UBC).

📌 Submission deadline: 10 May 2026
📌 Notification: 26 May 2026
📌 Camera-ready: 17 June 2026
📌 Workshop date: July 10 or 11, 2026 (TBA)

🚩 Check more information below!
🔗 Website: testing.ml
🔗 Submission Portal: openreview.net/group?id=ICML.…

We’re also recruiting PC members/reviewers.
🔗 Reviewer interest form: docs.google.com/forms/d/e/1FAI…

🏁 Please feel free to share this with colleagues, collaborators, and students who may be interested. #ICML #ICML26

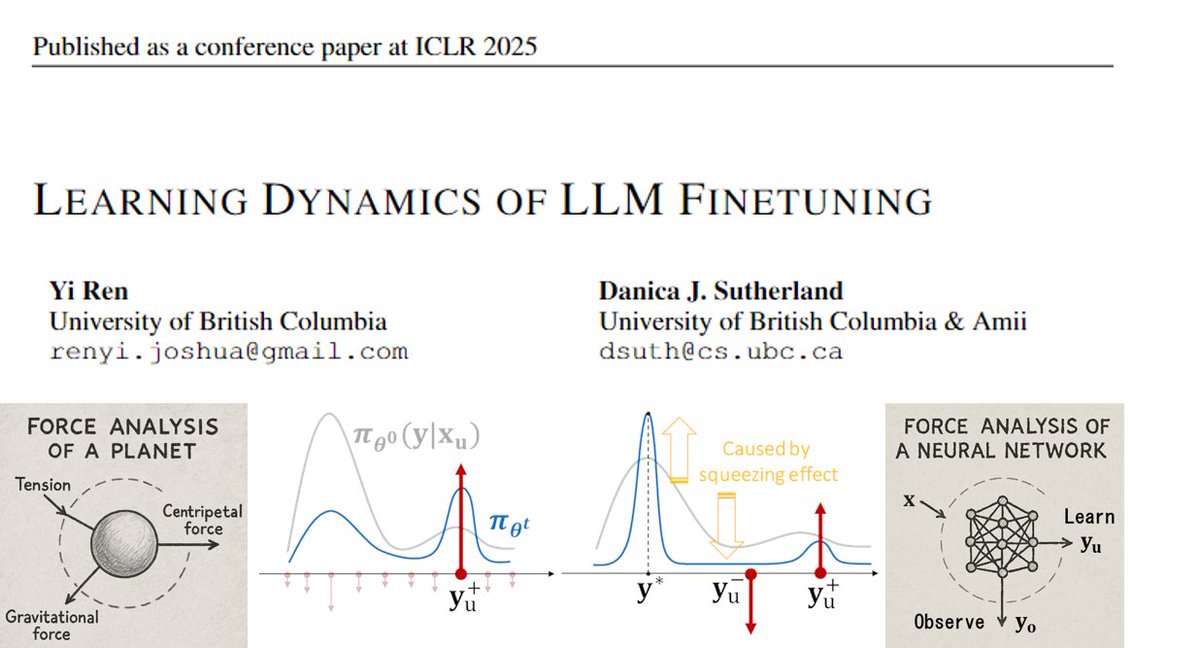

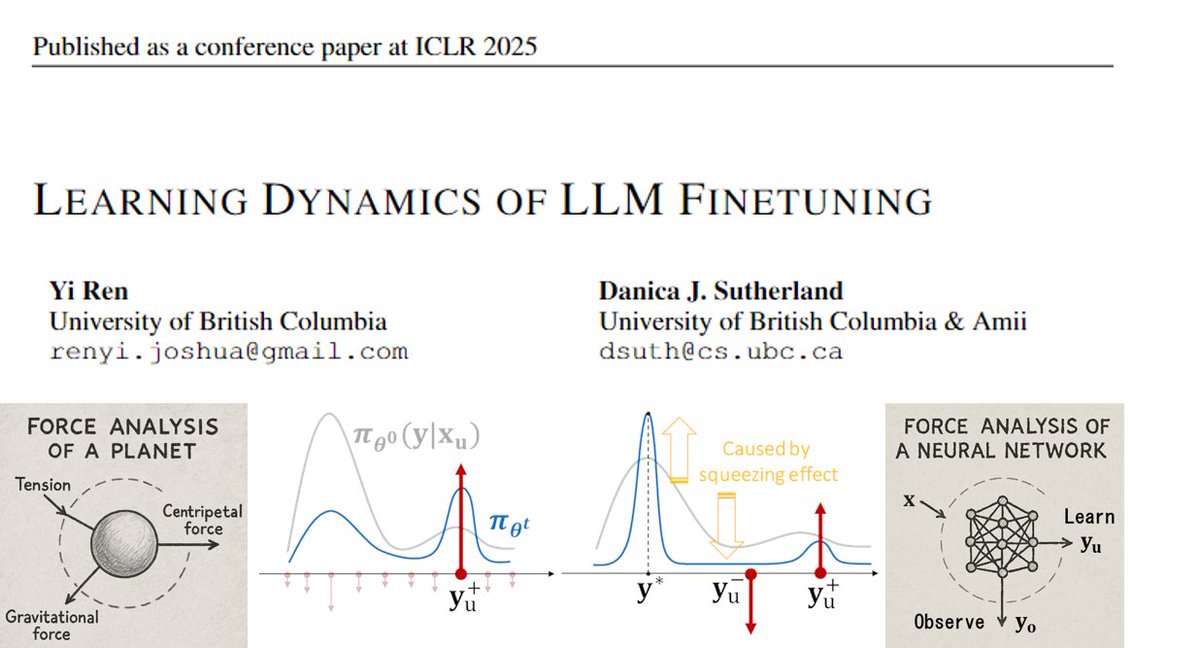

📢Curious why your LLM behaves strangely after long SFT or DPO? We offer a fresh perspective—consider doing a "force analysis" on your model’s behavior. Check out our #ICLR2025 Oral paper: Learning Dynamics of LLM Finetuning! (0/12)

We are launching HALoGEN💡, a way to systematically study *when* and *why* LLMs still hallucinate. New work w/ @shrusti_ghela* @davidjwadden @YejinChoinka 💫 🧵 [1/n]

Outstanding Papers:

Safety Alignment Should be Made More Than Just a Few Tokens Deep. Xiangyu Qi, et al.

Learning Dynamics of LLM Finetuning. Yi Ren and Danica J. Sutherland.

AlphaEdit: Null-Space Constrained Model Editing for Language Models. Junfeng Fang, et al.