Triatomic Capital

185 posts

@TriatomicCap

‘AppliedAI’ in Deep tech, Health tech, & New economy // Helping great entrepreneurs build 'century-defining' technologies & businesses.

Multiplexing can be thought of as "GPU-ification" of a complex biological system. Serial measurement becomes massively parallel. To turn biology digital, you need data at *scale* and *relevance*. The power of multiplexing is that you get both.

Faster paths to mRNA therapeutics 🧬💨 Traditional plasmid prep is slowing down discovery. Watch our DDN webinar to explore a cell-free approach to generating IVT Ready DNA. Watch now hubs.ly/Q048wKYB0

Your workflow shouldn’t have to change to unlock a new architecture. With the effcc Compiler:
– Write in C, C++, or LiteRT
– Build with CMake, Make, or Ninja
– Work in the IDE you already use
Everything stays the same, until you compile. That’s where Efficient Fabric comes in. See how it works: ow.ly/Y3VW50YLnep

Like Claude Cowork? On a Mac? Wish Cowork could talk to iMessage? So did I. So I made a thing... 🧵

@Jokerbernrin @MatXComputing
1. Cracked Team
2. Been there, done that before (with TPU)
3. First principles design on what's needed (and only what's needed) for frontier AI models
4. "Add lightness" judo move on CUDA (see #3)

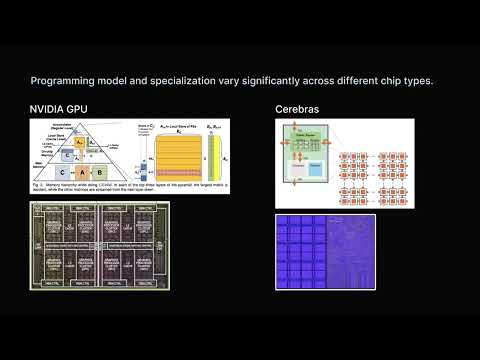

I chatted with @ysmulki about MatX, chip design, and where silicon designed for LLMs is headed.
(8:17) Tightly coupling SRAM and HBM on one chip
(14:03) More MoE FLOPS, smaller KV cache load
(16:08) Numerics: from 32-bit to 4-bit
(19:02) Targeting both training and inference
(22:14) Chip timelines
(27:15) Logic and memory scarcity
(29:42) Compute costs
(32:07) Latency: from 20ms to 1ms as the new table stakes
(40:50) Programming the chip
(43:00) Starting MatX
(47:11) Codesign without seeing the models
(51:57) Interconnect design
(55:44) Performance modeling philosophy
(1:07:02) Prefill vs. decode
(1:13:47) What's next