Tsung-Yi Lin

@TsungYiLinCV

Principal Research Scientist @Nvidia | Ex-@Google Brain Team | Computer Vision & Machine Learning

Fully agreed with the sentiment that much of computer vision research (concretely, those not for “human consumption”) should be grounded in robotics. But as a robotics researcher, I think the more nuanced question is: how can we *rethink* these intermediate representations for embodied intelligence rather than discarding them? Why? The challenge, as also pointed out in Vincent’s article, is precisely the lack of perception-action data at scale. This is why intermediate representations IMO are *preferable rather than obsolete* because they open up training from scalable data sources. This can include even the vision/language encoders people love and use in robot learning — it’s hard to imagine training low-level visual representation or high-level language understanding purely from limited robot data. The same goes for intermediate representations at the structure level — world modeling, learning from Internet videos, learning from humans, and simulation — many of which still rely on 3D representations too.

Here we introduce SAGE: Scalable Agentic 3D Scene Generation for Embodied AI, which can generate sim-ready 3D scenes with agents following user demands at scale, ready for robotic action generation. Paper, code, and SAGE-10k dataset are all released! nvlabs.github.io/sage/

Our team won 2nd place in the BEHAVIOR challenge at NeurIPS 🏅 I’ll present our team’s solution on Sunday, feel free to stop by!
Event time: 11:00 AM - 1:45 PM PST, December 7
Event link: luma.com/9r2nskbz
GitHub link: github.com/mli0603/openpi…

Most VLM benchmarks watch the world; few ask how actions *change* it from a robot's eye. Embodied cognition tells us that intelligence isn't just watching – it's enacted through interaction. 👉We introduce ENACT: A benchmark that tests if VLMs can track the evolution of a home-scale environment from a robot's egocentric view. 🌐enact-embodied-cognition.github.io 📄enact-embodied-cognition.github.io/enact.pdf 1/N

We raised a $28M seed round from Threshold Ventures, AIX Ventures, and NVentures (Nvidia's venture capital arm), alongside 10+ unicorn founders and top AI researchers, to build reasoning models that generate real-time simulations and games. Models are bottlenecked by practical simulations that can act as Reinforcement Learning environments. Human self-expression is bounded by tools that let us create alternate realities. At Moonlake, we are building a future where anyone can create interactive worlds, bring their child-like wonder to life, learn within them, and most importantly, share experiences with people we care about. More in 🧵

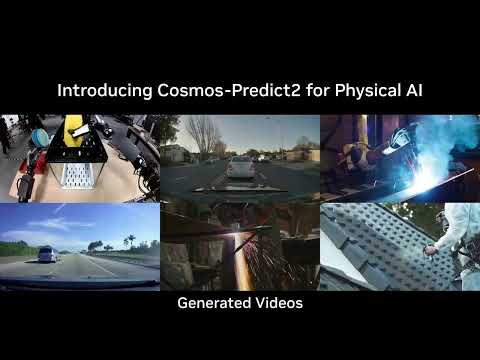

Ranked #1 on @Meta's Physical Reasoning Leaderboard on @huggingface for a reason. 👏 🔥 🏆 Cosmos Reason enables robots and AI agents to reason like humans by leveraging prior knowledge, physics, and common sense to intelligently interact with the real world. This state-of-the-art reasoning VLM excels in physical AI applications like:
📊 Data curation and annotation
🤖 Robot planning and reasoning
▶️ Video analytics AI agents
See the leaderboard → nvda.ws/4mLUmjd
Check out Cosmos Reason → nvda.ws/425mMfF