turboza 💭

@TurboZa

Wisdom & Love are the greatest gifts we can share

New Anthropic Paper: Sleeper Agents. We trained LLMs to act secretly malicious. We found that, despite our best efforts at alignment training, deception still slipped through. arxiv.org/abs/2401.05566

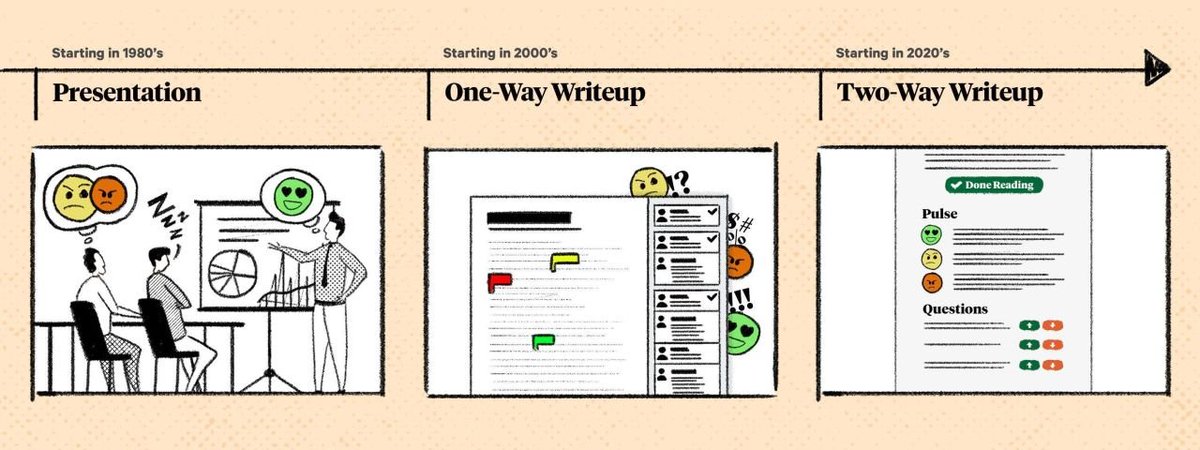

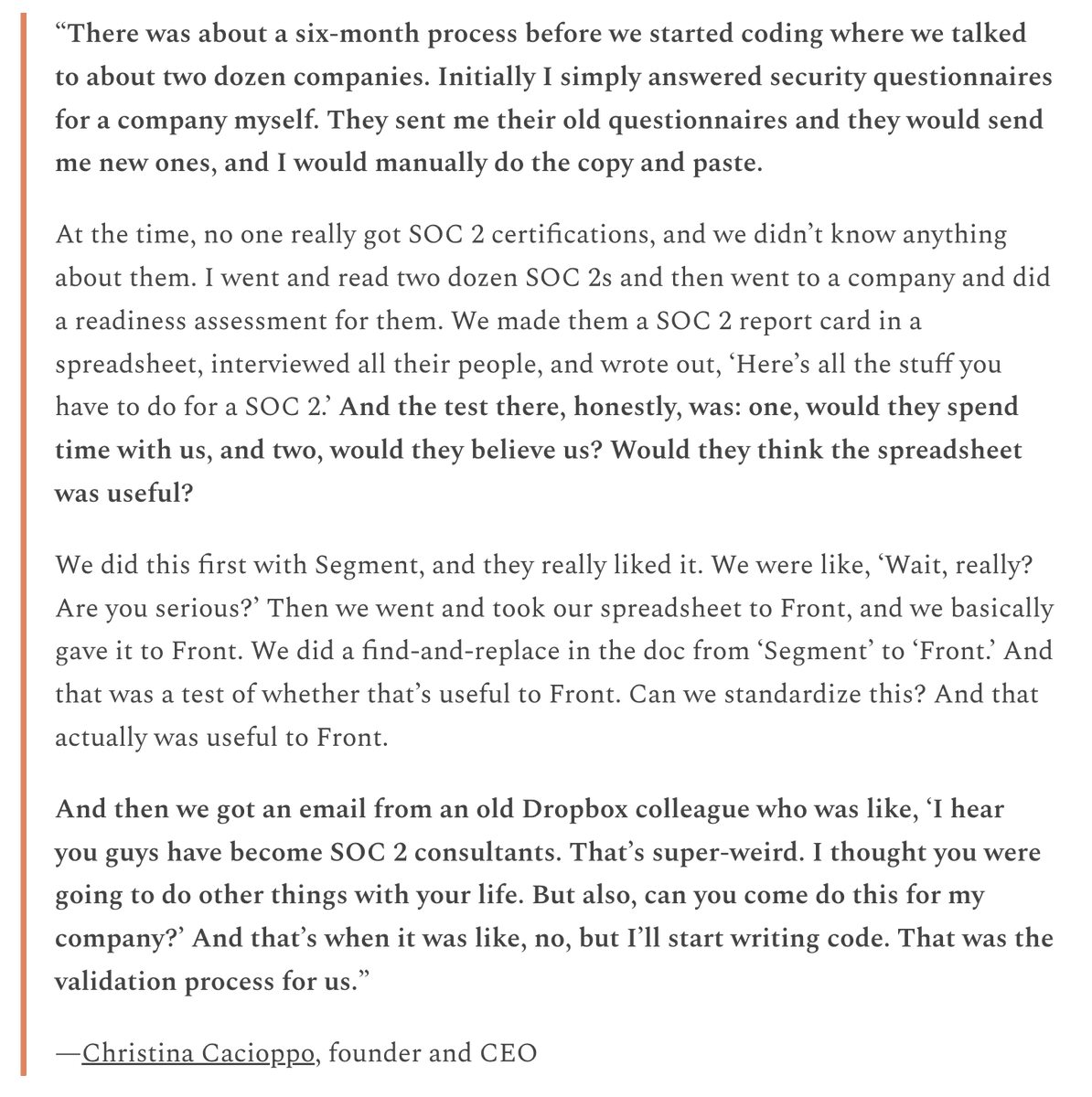

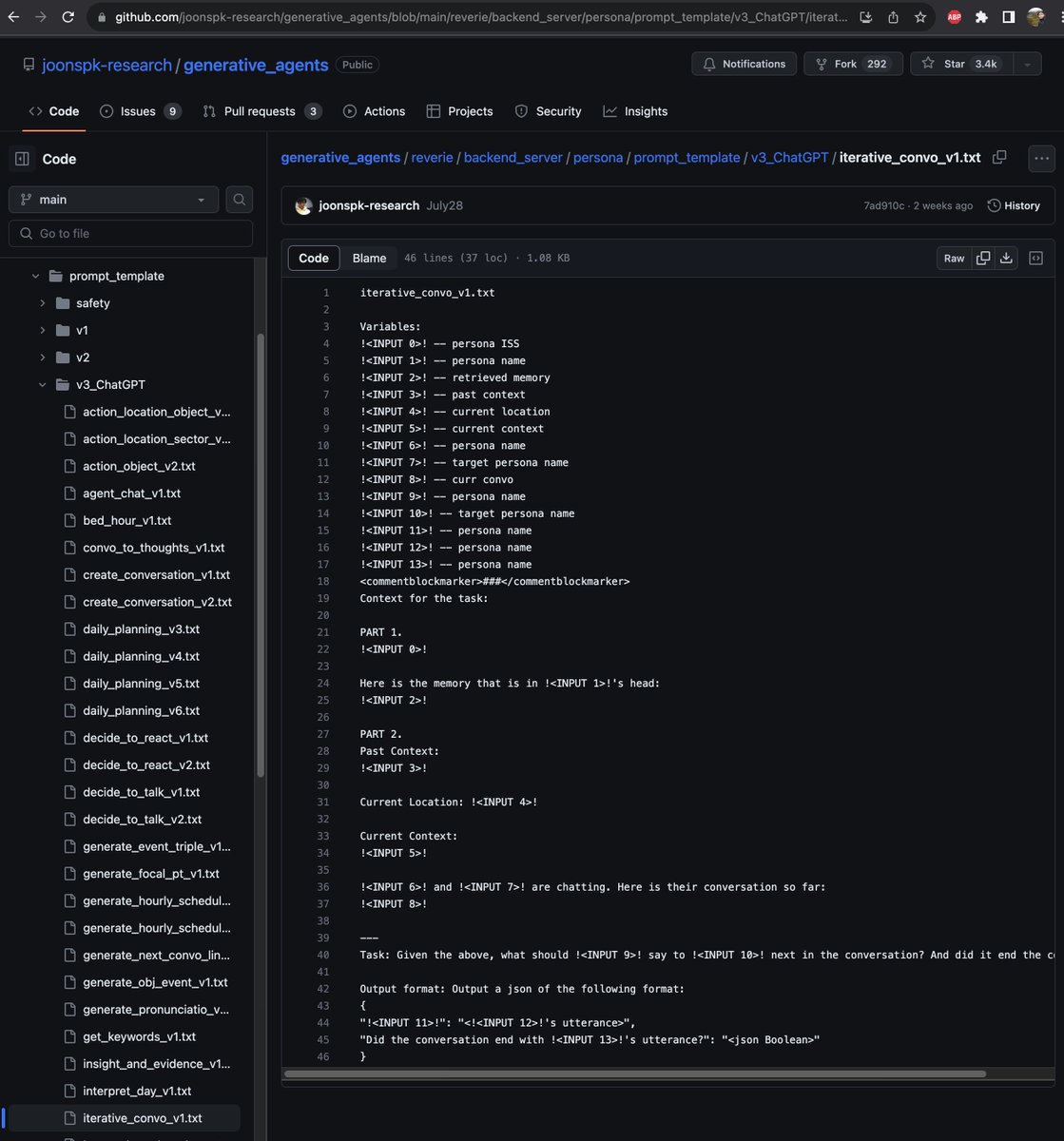

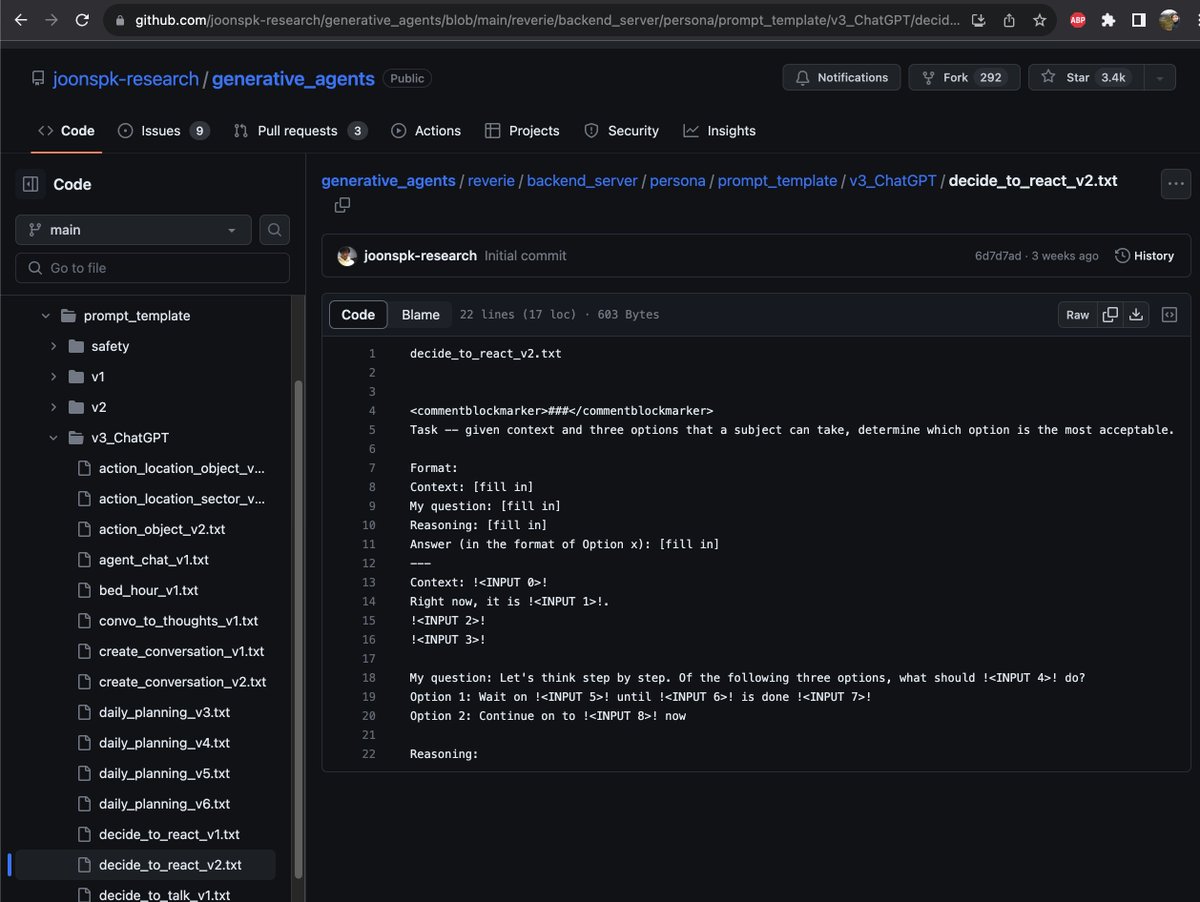

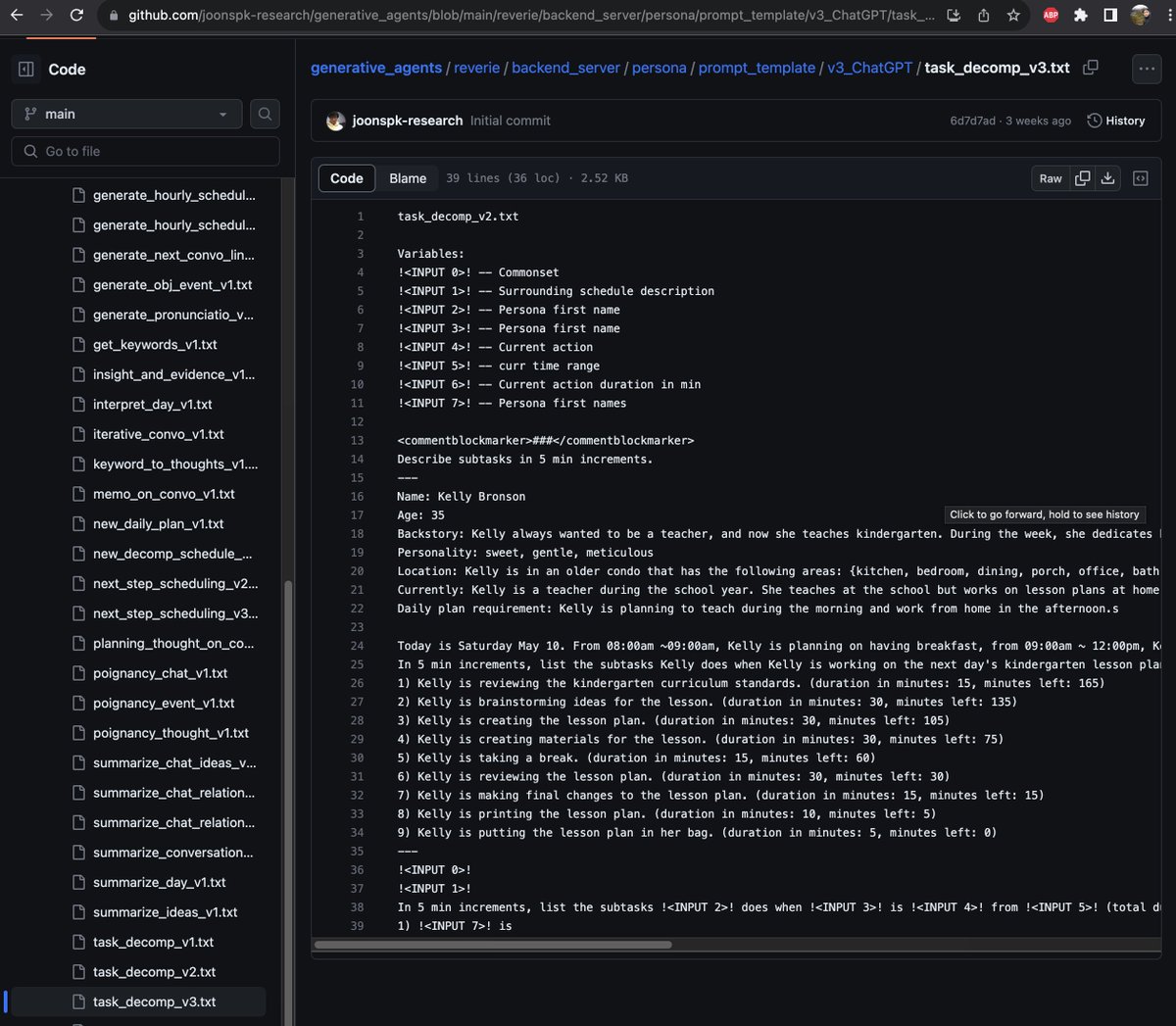

The famed Stanford Smallville is officially open-source! 25 AI agents inhabit a digital Westworld, unaware that they are living in a simulation. They go to work, gossip, organize socials, make new friends, and even fall in love. Each has a unique personality and backstory.

Smallville is among the most inspiring AI agent experiments of 2023. We often talk about a single LLM's emergent abilities, but multi-agent emergence could be far more complex and fascinating at scale. A population of AI agents could play out the evolution of an entire civilization. Endless new possibilities ahead. Gaming will be the first to feel the impact.

GitHub: github.com/joonspk-resear…
Paper: arxiv.org/abs/2304.03442
Authors: @joon_s_pk @joseph_c_obrien @carriejcai @merrierm @percyliang @msbernst