a great test of "would you have been chill with owning slaves, if you'd been born into a slave-owning family" is whether or not you're vegetarian today

jonas underhill

762 posts

@UnderhillJonas

I like econ, longevity, Greg Bear, and having strong opinions

Nah, pretty sure the best litmus test of "would you have owned slaves" is how much you abuse DoorDash and otherwise refuse to perform menial tasks necessary for living such as cleaning, laundry, and cooking.

Some progress in lightning: quantamagazine.org/what-causes-li….

funny how right wingers call people every slur in the book but the moment a leftist says anything mildly offensive they go "wah wah wah the tolerant left isn't so tolerant after all"

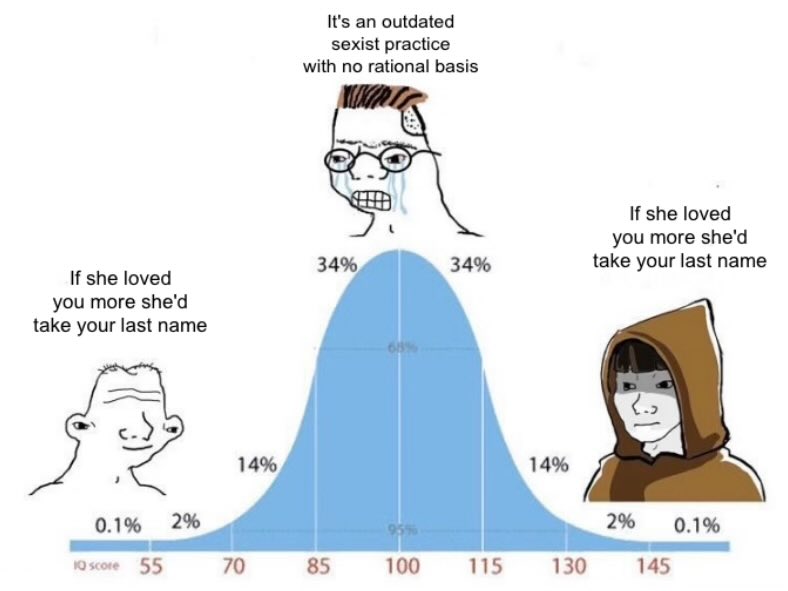

Can sharing a last name save your marriage? Couples who share a surname:
1) Divorce 30-60% less
2) Stick together 2-4 years longer even if they do eventually divorce
3) Have slightly higher reported relationship quality
Sorry @JillFilipovic -- it may be worth considering!

Dmitri, appreciate your contributions to cybersecurity and support for Ukraine but this is simply not true. That is not what Jensen said. Here is what Jensen actually said in this podcast: “I think the United States ought to be ahead. The amount of compute in the United States is 100x more than anywhere else in the world. The United States ought to be ahead. Okay. The United States is ahead. Nvidia builds the most advanced technologies. We make sure that the US labs are the first to hear about it and have the first chance to buy it. And if they don’t have enough money, we even invest in them. The United States ought to be ahead. We want to do everything we can to make sure the United States is ahead.” I am super patriotic and really supportive of American national defense in every way I can be. From my perspective, which is reasonably well-informed, selling advanced GPUs to America first and then deprecated versions to China later is a good policy that actually cements American dominance and eliminates the risk that China surpasses us in AI. Conversely, if we deny them GPUs like the B30 that are deprecated relative to the ones available to America, and as a consequence of this denial China develops their own semiconductor ecosystem - likely centered around optical scale-up networking technologies given their surplus of watts - they actually might end up surpassing America in AI. Selling B30s to China is super pro-American, especially given Vera Rubin is launching imminently.
