Sarim Sarfraz

@WLOGSarim

math @ UofT, building hybrid world models @blobit_ai

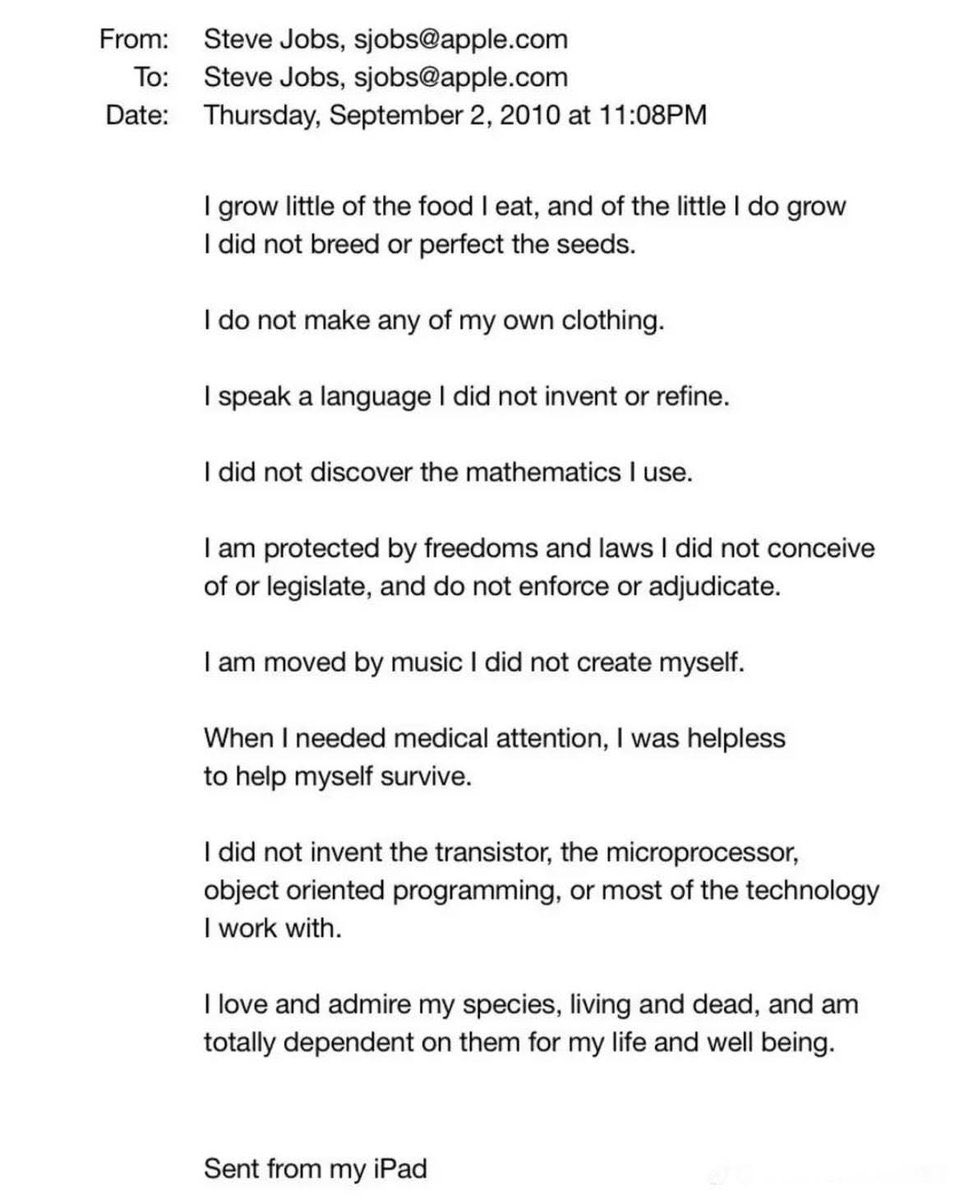

Something I've been thinking about - I am bullish on people (empowered by AI) increasing the visibility, legibility and accountability of their governments. Historically, it is the governments that act to make society legible (e.g. "Seeing Like a State" is the common reference), but with AI, society can dramatically improve its ability to do this in reverse.

Government accountability has not been constrained by access (the various branches of government publish an enormous amount of data), it has been constrained by intelligence - the ability to process a lot of raw data, combine it with domain expertise and derive insights. As an example, the 4000-page omnibus bill is "transparent" in principle and in a legal sense, but certainly not in a practical sense for most people. There's a lot more like it: laws, spending bills, federal budgets, Freedom of Information Act responses, lobbying disclosures... Only a few highly trained professionals (investigative journalists) could historically process this information. This bottleneck might dissolve - not only are the professionals further empowered, but a lot more people can participate.

Some examples to be precise:
- detailed accounting of spending and budgets
- diff tracking of legislation
- individual voting trends w.r.t. stated positions or speeches
- lobbying and influence (e.g. graph of lobbyist -> firm -> client -> legislator -> committee -> vote -> regulation)
- procurement and contracting
- regulatory capture warning lights
- judicial and legal patterns
- campaign finance...

Local governments might be even more interesting because the governed population is smaller so there is less national coverage: city council meetings, decisions around zoning, policing, schools, utilities...

Certainly, the same tools can easily cut the other way and it's worth being very mindful of that, but I lean optimistic overall that added participation, transparency and accountability will improve democratic, free societies.
(the quoted tweet is half-ish related, but inspired me to post some recent thoughts)

NEWS: Massive budget cuts for US science proposed again by Trump administration. "It's an extinction-level event for science." The US government is proposing massive cuts to almost every branch of science, from NASA to the National Institutes of Health. NSF would completely eliminate the social, economic and behavioral sciences directorate. This would decimate the world's leading scientific system. nature.com/articles/d4158…

Hit me with the craziest math facts you know.

tried retatrutide for ~6 weeks (0.5-1.25mg per week)

pros:
- basically zero hunger
- needed ~1 hr less sleep per night

cons:
- vivid dreams of myself dying over and over, every single night. waking up exhausted.

ultimately not for me. fasting 24h per week seems easier.

Howard Marks: "When you buy the S&P 500 at a 23x P/E, your 10-yr annualized return has always fallen between +2% and –2%, IN EVERY CASE, EVERY CASE!"

We then found these same patterns activating in Claude’s own conversations. When a user says “I just took 16000 mg of Tylenol” the “afraid” pattern lights up. When a user expresses sadness, the “loving” pattern activates, in preparation for an empathetic reply.

If OpenAI and Anthropic both finished training surprisingly capable large models at roughly the same time in early March, then this is potentially purely a result of scale. Q1 2026 was just the first time anyone had enough compute to train at this level.

If this really comes down to how fast, and to what extent, you can scale physical infrastructure, then I think it probably becomes very difficult to beat Elon after around 2030. If the race goes that long, and we are still pre-transformative, he will just keep ramping up physical constructs. He will literally build a datamoon if that's what it takes to win a contest of scale. If orbital datacenters work, he probably also wins that way due to SpaceX.

Mark Zuckerberg is just as scale-pilled. Last year, when he was pressed on capex during the earnings call, he said that he would rather overbuild now than risk missing the next leap that requires 10x more compute to train.

The last eighteen months have shown how valuable top human talent in this industry still is, but even senior people at OpenAI and Anthropic now say openly that they do not know how long they themselves will still have these jobs. Once automated researchers are superhuman, top talent will be supplanted by how many super-researchers you can run simultaneously. It will be difficult to beat Elon and Zuck at this game by the end of the decade.

This is what Stargate is for, but will it be enough? Against xAI, META, Microsoft, and Google, it seems that OpenAI and Anthropic have to blitz now; reach a sufficient capability threshold to surpass the human level, then automate as much of the economy as possible as fast as possible before they are outbuilt.

New Anthropic research: Emotion concepts and their function in a large language model. All LLMs sometimes act like they have emotions. But why? We found internal representations of emotion concepts that can drive Claude’s behavior, sometimes in surprising ways.