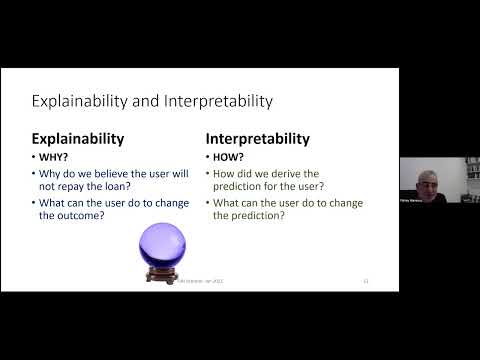

Explainable AI

@XAI_Research

Moved to 🦋! Explainable/Interpretable AI researchers and enthusiasts - DM to join the XAI Slack! Twitter and Slack maintained by @NickKroeger1

Dear Climate and AI community! We are hiring 😀 We're looking for a postdoc to join @UVAEnvironment at @UVA and work with @_cagarwal and me on using multimodal AI models and explainable AI to attribute extreme precipitation events. Fascinating stuff! Link below. Please RT! jobs.virginia.edu/us/en/job/R006…

Happening now!

The Theory of Interpretable AI seminar is back after the holiday season! 🎅🤶 Our next talk is next Thursday, given by Yishay Mansour, who will speak about interpretable approximations. 💻 Website: tverven.github.io/tiai-seminar/ ⏰ Date: 16 Jan @Suuraj @tverven @YishayMansour