Andrea

@__AndreaW__

I try to follow people of good faith who hold opinions different from mine. You can't tell an ignorant person by the work they do, but by how they do it (C. Pavese)

To hear Lilli Gruber tell it, the only possible choice is for Meloni or against Meloni. Not even at the stadium do people reason like that. Regardless of what Calenda says, it is a third-grade way of reasoning.

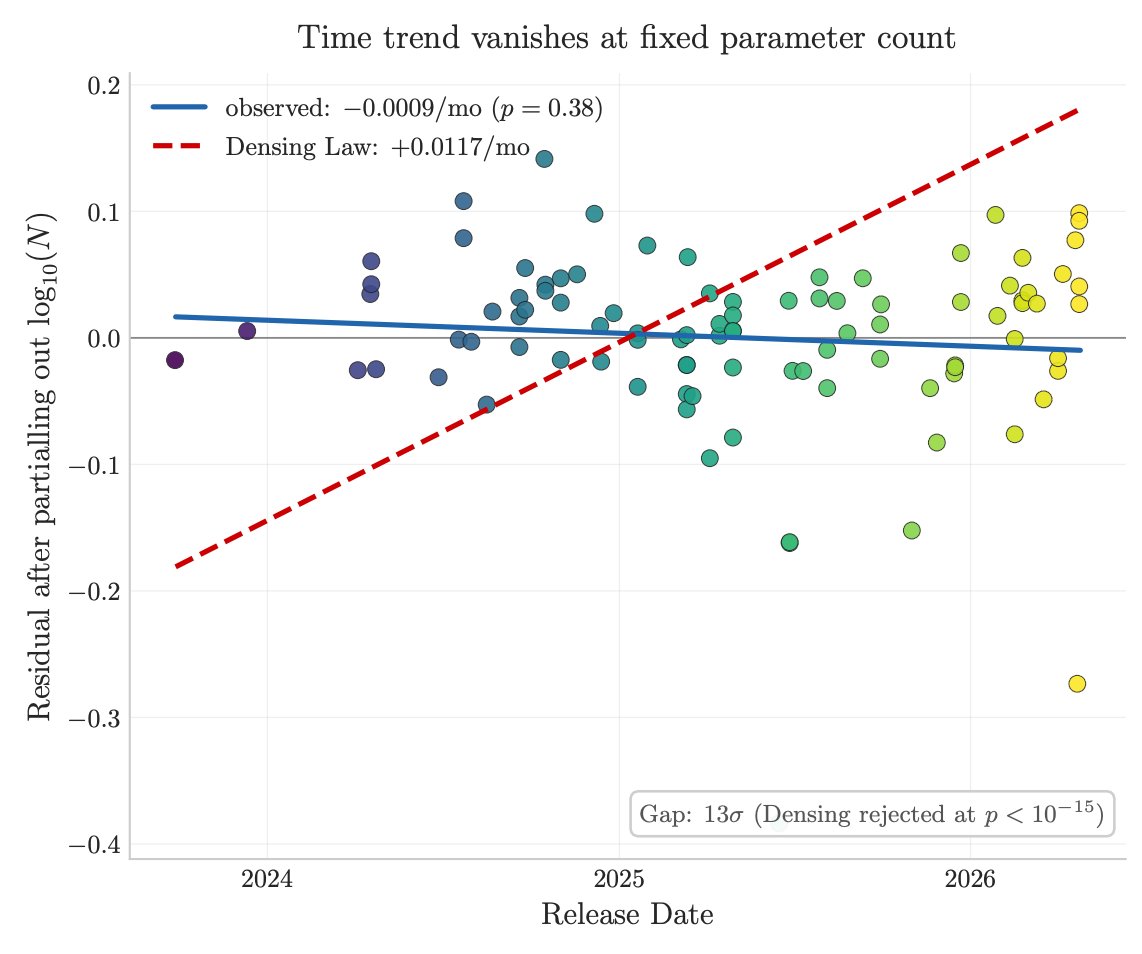

this continues to perplex me

I don’t buy the idea that libraries will go away since everyone can just write their own now. Maybe for small internal company use-cases. But libraries are not just code, they’re time invested in edge cases, they’re hundreds of hours of human eyes. I don’t want to replace that.

Scoop: Julie Davis, the acting US Ambassador in Kyiv, is leaving the State Department having grown frustrated with Trump's dwindling support for Ukraine. Davis's resignation follows that of her predecessor, Bridget Brink, who resigned for similar reasons early last year. W/@christopherjm as.ft.com/r/1781e555-fad…

Income levels in Milan, Italy. Incredibly concentric and huge differences between the richer city centre and the poorer peripheries tg24.sky.it/economia/2026/…