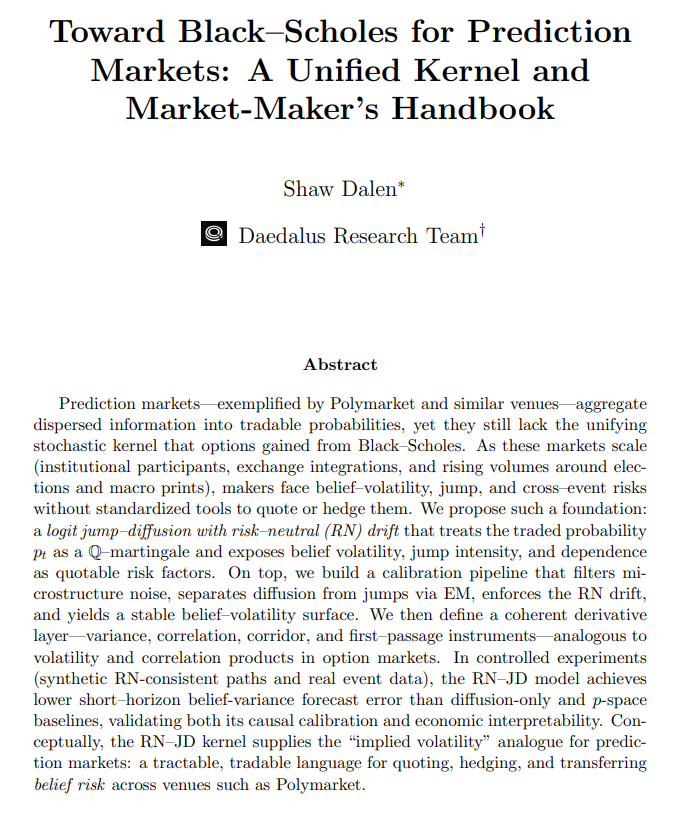

@Luc1924370 @gemchange_ltd @Polymarket @PolymarketTrade That’s true: you can use a volatility smile to see the DISTRIBUTION of volatility at a given point in time, but you can’t forecast volatility the same way.

Volatility isn’t reflected in the market but in information, and if you aren’t using information theory, it doesn’t work.
