We made new research bets on modeling program behavior that accelerated the model’s coding abilities. Tremendous advances made by @_jainyash @DevaanshGupta1 @cadarsh_335 @ssingla17 @anlthms @_saurabh.

Yash Jain

@_jainyash

pre-training @essential_ai. ex-Scientist @Microsoft. CS @iitbombay and @GeorgiaTech. Views are my own.

We (@neosigmaai, @RitvikKapila) are building the future of self-improving AI systems! By closing the feedback loop between production data and system improvements, we help teams capture failures, convert them into structured evaluation signals, and use those signals to drive continuous improvements in agent behavior. We show how our system works on Tau3 bench across the retail, telecom, and airline domains. With the underlying model held fixed (GPT5.4), agent performance on the validation set improves from 0.56 to 0.78, a ~40% relative jump in accuracy.

🚀 [ICLR 2026] Existing text-to-audio (TTA) generation methods focus mainly on semantic correctness, yet they perform very poorly on relation-aware TTA generation. For example, current models achieve <30% audio event presence accuracy and <10% relation accuracy.

In our newly accepted ICLR 2026 paper, we introduce Aurelius, a framework that enables relation-aware TTA research at scale. Specifically, we introduce two meticulously curated corpora:

🗂 AudioEventSet — 110 audio events across 7 major classes.

🗂 AudioRelSet — 100 relations across 6 major relation types.

Based on the two corpora and the proposed data creation strategy, we can create massive (nearly unlimited)

Rnj-1 performs especially well for its weight class on correctness and abstention, the two most important metrics for this work.

Moving to SF is realizing this show wasn't a comedy, it was a documentary.

Rnj-1 Instruct helped us trace a database config error:

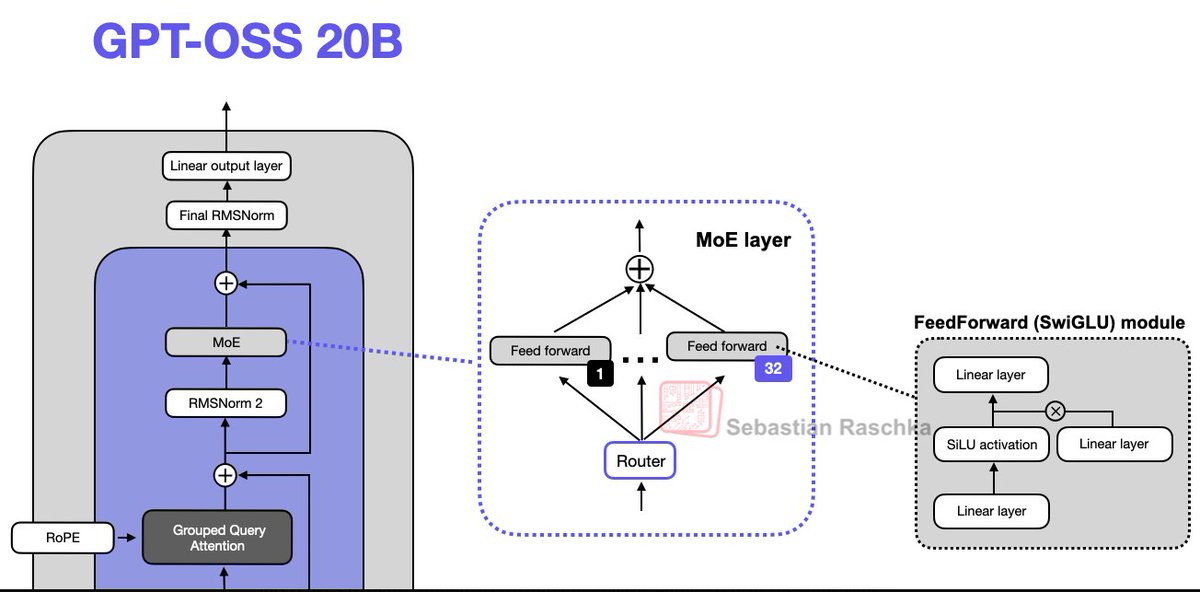

We are beyond thrilled to share our first flagship models, the Rnj-1 base and instruct 8B-parameter models. Rnj-1 is the culmination of 10 months of hard work by a phenomenal team dedicated to advancing American SOTA OSS AI. Lots of wins with Rnj-1:

1. SWE-bench performance close to GPT-4o.
2. Tool use outperforming all comparable open-source models.
3. Mathematical reasoning (AIME’25) nearly on par with GPT OSS MoE 20B.
….