Devaansh Gupta

@DevaanshGupta1

Pre/post-training @ Essential AI

🚀 [ICLR 2026] Existing text-to-audio generation (TTA) methods mainly focus on semantic correctness, yet they perform very poorly on relation-aware TTA generation. For example, current models achieve <30% audio event presence accuracy and <10% relation accuracy.

In our newly accepted ICLR 2026 paper, we introduce Aurelius, a framework that enables relation-aware TTA research at scale. Specifically, we introduce two meticulously curated corpora:

🗂 AudioEventSet — 110 audio events across 7 major classes.

🗂 AudioRelSet — 100 relations across 6 major relation types.

Based on the two corpora and the proposed data creation strategy, we can create massive (nearly unlimited)

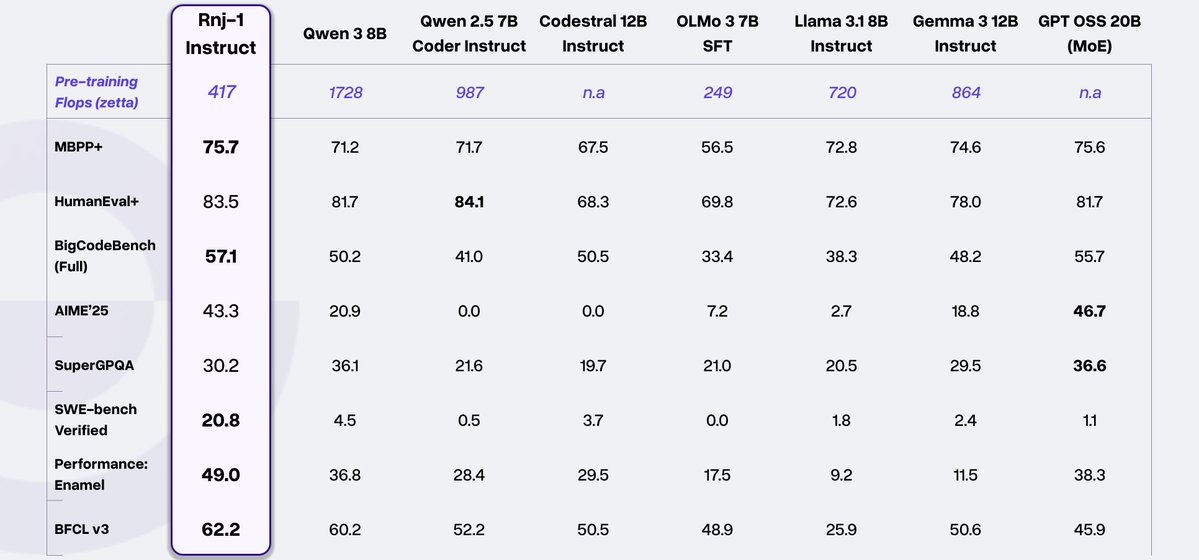

We are now the #1 trending text-gen <256B size model on HuggingFace!!

Behind the scenes at Essential: long hours, tough problems, and a team driven by an insatiable desire to build. What keeps us going isn’t noise - it’s scientific discipline. The work demands focus, rigor, and a willingness to push through uncertainty. Proud to work with this special team @essential_ai @ashVaswani

📢 New Model Drop: Rnj-1 Instruct is now live on Yupp! The first flagship model from @essential_ai, this open-source model is built to excel at math problem-solving and scientific reasoning. We checked it out with some prompts:

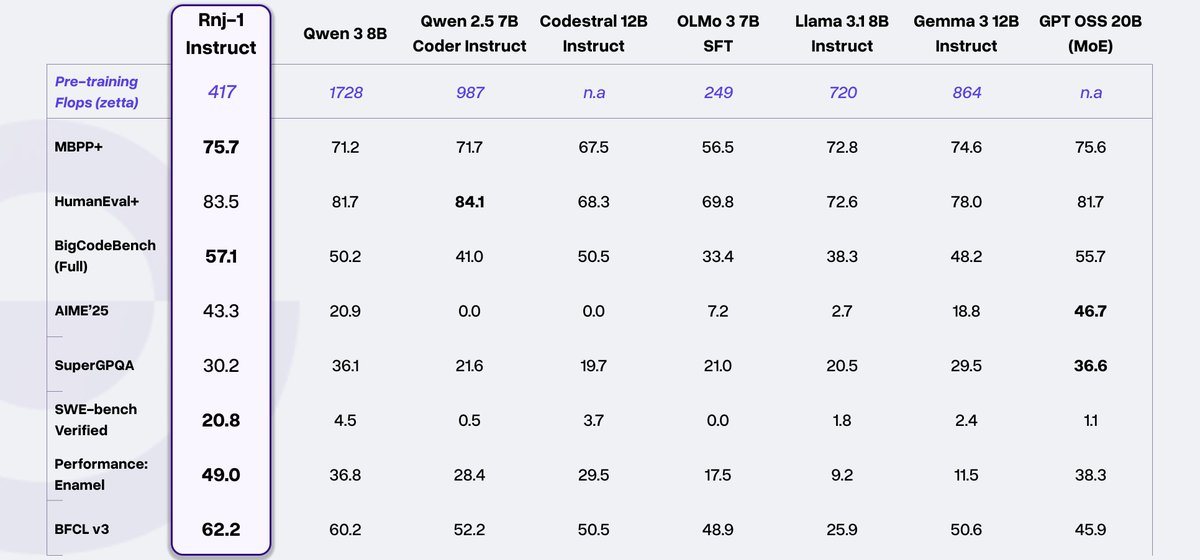

Today, we’re excited to introduce Rnj-1, @essential_ai's first open model; a world-class 8B base + instruct pair, built with scientific rigor, intentional design, and a belief that the advancement and equitable distribution of AI depend on building in the open. We bring American open-source at par with the best in the world.
