Jason Wei

@_jasonwei

ai researcher @meta superintelligence labs, past: openai, google 🧠

Medicine was built on the medical record. But most of health and illness happens outside of it. AI doesn’t create that gap — it exposes it. The question now is structural: where should this data live — under what rules, and for whose benefit? With @CelinaMYongMD in @statnews:

1/ today we're releasing muse spark, the first model from MSL. nine months ago we rebuilt our ai stack from scratch. new infrastructure, new architecture, new data pipelines. muse spark is the result of that work, and now it powers meta ai. 🧵

Today, @ekindogus and I are excited to introduce @periodiclabs. Our goal is to create an AI scientist.

Science works by conjecturing how the world might be, running experiments, and learning from the results. Intelligence is necessary, but not sufficient. New knowledge is created when ideas are found to be consistent with reality. And so, at Periodic, we are building AI scientists and the autonomous laboratories for them to operate.

Until now, scientific AI advances have come from models trained on the internet. But despite its vastness, it’s still finite (estimates are ~10T text tokens where one English word may be 1-2 tokens). And in recent years the best frontier AI models have fully exhausted it. Researchers seek better use of this data, but as any scientist knows: though re-reading a textbook may give new insights, they eventually need to try their idea to see if it holds.

Autonomous labs are central to our strategy. They provide huge amounts of high-quality data (each experiment can produce GBs of data!) that exists nowhere else. They generate valuable negative results which are seldom published. But most importantly, they give our AI scientists the tools to act.

We’re starting in the physical sciences. Technological progress is limited by our ability to design the physical world. We’re starting here because experiments have high signal-to-noise and are (relatively) fast, physical simulations effectively model many systems, but more broadly, physics is a verifiable environment. AI has progressed fastest in domains with data and verifiable results - for example, in math and code. Here, nature is the RL environment.

One of our goals is to discover superconductors that work at higher temperatures than today's materials. Significant advances could help us create next-generation transportation and build power grids with minimal losses. But this is just one example: if we can automate materials design, we have the potential to accelerate Moore’s Law, space travel, and nuclear fusion.

We’re also working to deploy our solutions with industry. As an example, we're helping a semiconductor manufacturer that is facing issues with heat dissipation on their chips. We’re training custom agents for their engineers and researchers to make sense of their experimental data in order to iterate faster.

Our founding team co-created ChatGPT, DeepMind’s GNoME, OpenAI’s Operator (now Agent), the neural attention mechanism, MatterGen; have scaled autonomous physics labs; and have contributed to some of the most important materials discoveries of the last decade. We’ve come together to scale up and reimagine how science is done.

We’re fortunate to be backed by investors who share our vision, including @a16z who led our $300M round, as well as @Felicis, DST Global, NVentures (NVIDIA’s venture capital arm), @Accel and individuals including @JeffBezos, @eladgil, @ericschmidt, and @JeffDean. Their support will help us grow our team, scale our labs, and develop the first generation of AI scientists.

After a great time at OpenAI, we (@EdwardSun0909, @_jasonwei) recently joined @Meta Superintelligence Labs. The first month has already been so much fun building from a clean slate with a truly talent-dense team! Very excited about the compute and long term focus of the new lab

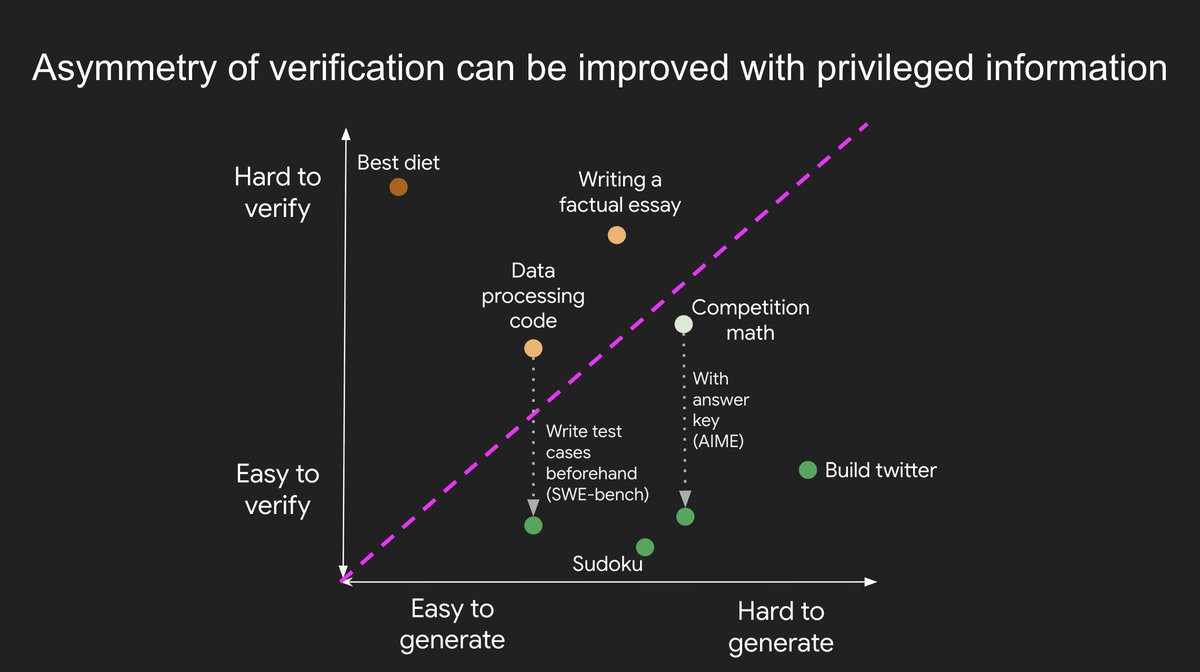

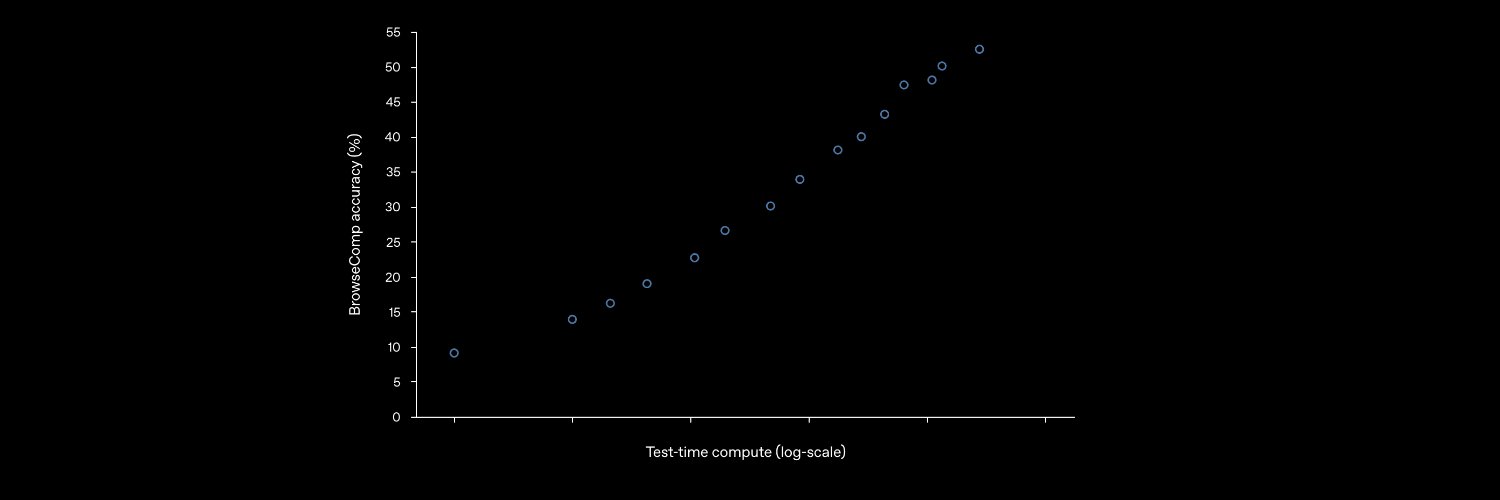

New blog post about asymmetry of verification and "verifier's law": jasonwei.net/blog/asymmetry…

Asymmetry of verification, the idea that some tasks are much easier to verify than to solve, is becoming an important idea as we have RL that finally works generally.

Great examples of asymmetry of verification are things like sudoku puzzles, writing the code for a website like instagram, and BrowseComp problems (takes ~100 websites to find the answer, but easy to verify once you have the answer).

Other tasks have near-symmetry of verification, like summing two 900-digit numbers or some data processing scripts. Yet other tasks are much easier to propose feasible solutions for than to verify them (e.g., fact-checking a long essay or stating a new diet like "only eat bison").

An important thing to understand about asymmetry of verification is that you can improve the asymmetry by doing some work beforehand. For example, if you have the answer key to a math problem or if you have test cases for a Leetcode problem. This greatly increases the set of problems with desirable verification asymmetry.

"Verifier's law" states that the ease of training AI to solve a task is proportional to how verifiable the task is. All tasks that are possible to solve and easy to verify will be solved by AI. The ability to train AI to solve a task is proportional to whether the task has the following properties:

1. Objective truth: everyone agrees what good solutions are
2. Fast to verify: any given solution can be verified in a few seconds
3. Scalable to verify: many solutions can be verified simultaneously
4. Low noise: verification is as tightly correlated to the solution quality as possible
5. Continuous reward: it’s easy to rank the goodness of many solutions for a single problem

One obvious instantiation of verifier's law is the fact that most benchmarks proposed in AI are easy to verify and so far have been solved. Notice that virtually all popular benchmarks in the past ten years fit criteria #1-4; benchmarks that don’t meet criteria #1-4 would struggle to become popular.

Why is verifiability so important? The amount of learning in AI that occurs is maximized when the above criteria are satisfied; you can take a lot of gradient steps where each step has a lot of signal. Speed of iteration is critical: it’s the reason that progress in the digital world has been so much faster than progress in the physical world.

AlphaEvolve from Google is one of the greatest examples of leveraging asymmetry of verification. It focuses on setups that fit all the above criteria, and has led to a number of advancements in mathematics and other fields. Different from what we've been doing in AI for the last two decades, it's a new paradigm in that all problems are optimized in a setting where the train set is equivalent to the test set.

Asymmetry of verification is everywhere and it's exciting to consider a world of jagged intelligence where anything we can measure will be solved.
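
To make the sudoku example concrete, here is a minimal Python sketch of the cheap side of the asymmetry: checking a finished grid. The function names (`is_valid_solution`, `respects_clues`, `example_solution`) and the convention that `0` marks a blank cell are assumptions made for illustration; none of them come from the post. The check is a fixed pass over 27 groups of nine cells, whereas finding a solution generally needs search.

```python
from typing import List

Grid = List[List[int]]  # 9x9 grid; 0 marks a blank cell in a puzzle (assumed convention)


def is_valid_solution(grid: Grid) -> bool:
    """Return True if every row, column, and 3x3 box contains 1..9 exactly once."""
    if len(grid) != 9 or any(len(row) != 9 for row in grid):
        return False
    digits = set(range(1, 10))
    rows = [set(row) for row in grid]
    cols = [{grid[r][c] for r in range(9)} for c in range(9)]
    boxes = [
        {grid[r][c] for r in range(br, br + 3) for c in range(bc, bc + 3)}
        for br in (0, 3, 6)
        for bc in (0, 3, 6)
    ]
    # Nine cells forming the set {1..9} means no repeats and no gaps.
    return all(group == digits for group in rows + cols + boxes)


def respects_clues(puzzle: Grid, solution: Grid) -> bool:
    """Return True if the solution keeps every pre-filled (non-zero) puzzle cell."""
    return all(
        puzzle[r][c] in (0, solution[r][c])
        for r in range(9)
        for c in range(9)
    )


def example_solution() -> Grid:
    """Build one known-valid grid from a shifting pattern (for demonstration only)."""
    return [[(3 * (r % 3) + r // 3 + c) % 9 + 1 for c in range(9)] for r in range(9)]


if __name__ == "__main__":
    solved = example_solution()
    print(is_valid_solution(solved))

    # Swapping two cells in a row keeps the row valid but breaks two columns.
    broken = [row[:] for row in solved]
    broken[0][0], broken[0][1] = broken[0][1], broken[0][0]
    print(is_valid_solution(broken))
```

A verifier like this is objective, fast, and easy to run on many candidates at once, which matches properties 1 to 3 in the list. It returns only pass or fail, so it does not by itself provide the continuous reward of property 5.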