Khaled Saab

221 posts

@_khaledsaab

research @OpenAI, prev: @GoogleDeepMind @StanfordAILab @HazyResearch

Cartesia’s Sonic-3.5 takes the #1 spot on the Artificial Analysis Speech Arena Leaderboard, surpassing Inworld Realtime TTS 1.5 Max and Google’s Gemini 3.1 Flash TTS.

Sonic-3.5 is the latest TTS model from @cartesia. It supports 42 languages, including 9 Indian languages, with 500+ voices available out of the box. Voters in the TTS Arena have strongly preferred the model, reflecting its naturalness and accurate transcript following.

Key takeaways:
➤ Quality: Sonic-3.5 has an Elo score of 1,218 (+16/-16) based on 1,144 arena appearances, placing it ahead of Inworld Realtime TTS 1.5 Max at 1,194 and Gemini 3.1 Flash TTS at 1,209
➤ Pricing: Sonic-3.5 is priced at $39/1M characters, a premium over Gemini 3.1 Flash TTS at $18.3/1M characters and Inworld Realtime TTS 1.5 Max at $35/1M characters
➤ Speed: Sonic-3.5 generates 105.5 characters per second, compared to 205 characters per second for Inworld Realtime TTS 1.5 Max and 26.3 characters per second for Gemini 3.1 Flash TTS

See more details and listen to samples below 🧵

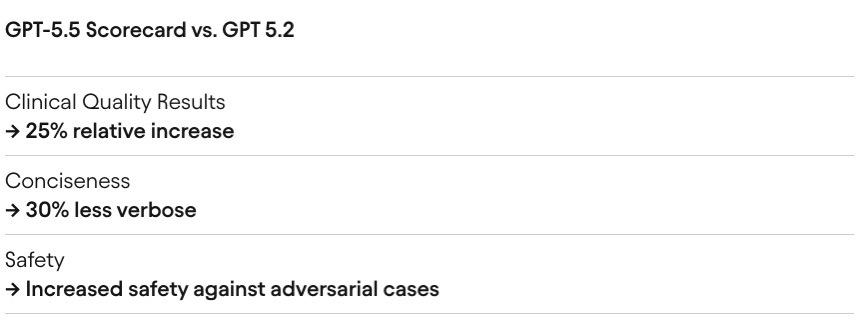

AI co-clinician is our new research initiative to help explore how multimodal agents could better support healthcare workers and patients. 🩺 Here’s a snapshot of our progress 🧵

Today we’re introducing two big steps for health at OpenAI:
- ChatGPT for Clinicians, a free version of ChatGPT designed for clinical work
- HealthBench Professional, a new benchmark to evaluate real clinician chat tasks

We’re excited about what this can unlock for care. ❤️

Some thoughts (long tweet... sorry).

I would prefer that we focus first on using AI in science, healthcare, education, and even just making money, rather than in the military or law enforcement. I am no pacifist, but too many times national security has been used as an excuse to take away people's freedoms (see the Patriot Act). I am very worried about governments using AI to spy on their own people and consolidate power. I also think our current AI systems are nowhere near reliable enough to be used in autonomous lethal weapons.

I would have preferred to take it slower with classified deployment, but if we are going to do it, it is crucial that we maintain the red lines of no domestic surveillance and no autonomous lethal weapons. These are widely held positions, codified in laws and regulations. They should be stipulated in any agreement and (more importantly) verified via technical means. I think the terms of this agreement, as I understand them, are in line with these principles, which are also held by other AI companies. I hope the DoW will offer them the same conditions.

Regardless, a healthy AI industry is crucial for U.S. leadership. Whether or not relations have soured, there is zero justification to treat Anthropic, a leading American AI company whose founders are deeply patriotic and care very much about U.S. success, worse than the companies of our adversaries. It appears to me that much of this week's drama has been more about style and emotions than about substance. I hope that people can put this behind them and come together for the benefit of our country.

In just a few years, we went from AI systems that struggled with grade-school math to AI systems that can solve research-level math problems. I agree with Jakub that this is perhaps the most important eval now. I am also pretty sure the main reaction will be "it's not that hard" :)

From how the team operates, I always thought Codex would eventually win. But I am pleasantly surprised to see it happening so quickly. Thank you to all the builders; you inspire us to work even harder.