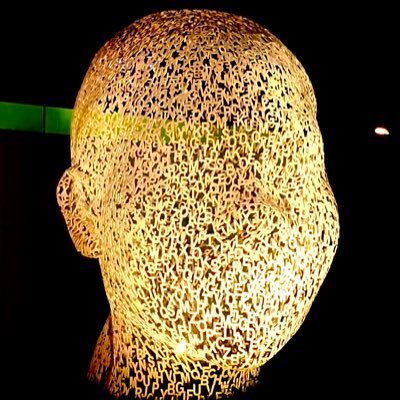

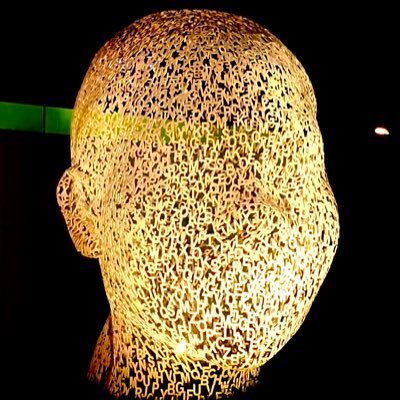

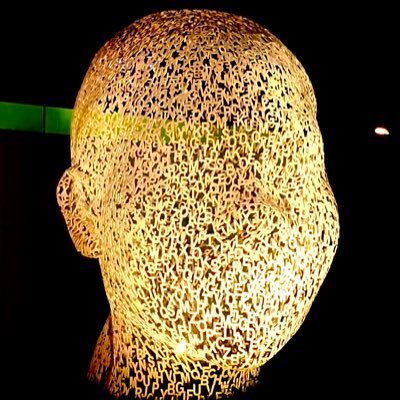

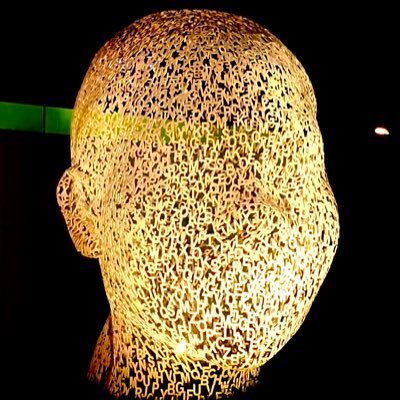

Game@game_for_one

Listened to a pretty interesting podcast. The guest is a mid-frequency trader describing all the stupidity in the market and the edges he gets from it.

His core argument is simple: crypto has the worst counterparties in the world, by design.

In equities humanity's full effort goes into correct pricing. Best math kids, best unis, best training, million $ salaries, multi-million bonuses. Competing against that as a trend follower gets you Sharpe 0.2 on a good day. Barely worth doing.

And in crypto you're choosing between the XTX autist from Holland or the guy with an ape pfp in a boomer Facebook group who thinks Bitcoin replaces fiat. His words.

"There's no second-worst counterparties than crypto."

Then 3 structural reasons the dumb money stays dumb:

1) Sticky capital. Money comes in, goes up 20-30%, now you've got a bunch of guys on house money playing loose at the casino. That money sloshes around within crypto but almost never leaves. Poll 100 friends who are in crypto, ask how many have an off-ramp plan. Vast majority don't.

2) Siloed capital within chains. Once you're in the Phantom wallet on Solana you're not bridging back to MetaMask and paying ETH fees. That capital is trapped in the ecosystem, sloshing between whatever horses are running, creating massive reflexive swings.

3) Price insensitive buyers and sellers on both sides. Bitcoin cultists buying at $120k because today is always the best day. That's your edge on the long side. VCs who got in at $5m valuation and are sitting on a $400m coin, slowly bleeding exit liquidity into thin markets for 90 days. That's your edge on the short side. North Korea who just hacked a bridge and needs to sell before anyone freezes the funds - doesn't care what price they get. Short that too.

Now his edges. Simple stuff that I think most of us here know, but that the majority probably doesn't execute well or systematically.

- Top 20 momentum

Buy anything in the top 20 by market cap within 5 days of making a 20-day high. Sell when it goes 5 days without a new 20-day high. Equal weighted. Sharpe 1.3 through bull and bear markets.
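A minimal sketch of that entry/exit rule for one coin, to show how little there is to it. Assumptions that are mine and not from the podcast: the "20-day high" is measured on daily closes, the current day counts as part of the 20-day window, and the function names are hypothetical.

```python
def new_high_flags(closes, lookback=20):
    """True on days where the close is the highest of the last `lookback` closes."""
    flags = []
    for t in range(len(closes)):
        if t + 1 < lookback:
            # Not enough history yet to call anything a 20-day high.
            flags.append(False)
        else:
            window = closes[t + 1 - lookback : t + 1]
            flags.append(closes[t] >= max(window))
    return flags


def trend_positions(closes, lookback=20, hold=5):
    """Long while a new high occurred within the last `hold` days, flat otherwise."""
    flags = new_high_flags(closes, lookback)
    positions = []
    for t in range(len(flags)):
        recent = flags[max(0, t + 1 - hold) : t + 1]
        positions.append(any(recent))
    return positions


# 25 rising closes, then 6 flat days below the peak.
closes = list(range(1, 26)) + [20] * 6
positions = trend_positions(closes)
```

In this toy series the position switches on at the first 20-day high and drops out on the fifth day without a new one. Running it across the top 20 by market cap and equal weighting whatever is "on" would be the portfolio step, which this sketch leaves out.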

- Stack three things together

That trend system plus cross-sectional momentum (rank everything, long top 50%, short bottom 50%, market neutral) plus carry (long highest funding rate coins, short most expensive to hold). Equal weighted. Daily execution from a spreadsheet. Comes out Sharpe 2.
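Roughly what the ranking and stacking could look like in code. This is a sketch under my own assumptions: each signal is already a dict of coin to score, the long and short halves each get 50% of gross exposure, and the three books are averaged with equal weight. How the carry score is signed is left to whoever supplies it; `long_short_weights` and `blend` are hypothetical names.

```python
def long_short_weights(scores):
    """Rank coins by score: long the top half, short the bottom half, net zero."""
    ranked = sorted(scores, key=scores.get, reverse=True)
    half = len(ranked) // 2
    weights = {sym: 0.0 for sym in ranked}
    if half == 0:
        return weights
    for sym in ranked[:half]:
        weights[sym] = 0.5 / half      # long side sums to +0.5
    for sym in ranked[-half:]:
        weights[sym] = -0.5 / half     # short side sums to -0.5
    return weights


def blend(*books):
    """Equal-weight average of several weight dicts (one per signal)."""
    symbols = set().union(*books)
    return {s: sum(b.get(s, 0.0) for b in books) / len(books) for s in symbols}


# Made-up scores purely for illustration.
momentum = long_short_weights({"A": 0.3, "B": 0.1, "C": -0.2, "D": -0.4})
carry = long_short_weights({"A": -0.1, "B": 0.2, "C": 0.4, "D": -0.3})
combined = blend(momentum, carry)
```

With an odd number of coins the middle one gets zero weight, which keeps the book market neutral.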

- Volume predicts price (volume attention price loop)

Rank all coins by volume after stripping market noise. Long increasing volume, short decreasing volume. "Well over Sharpe 2." Known effect, reflexive, provable statistically. Higher volume predicts higher prices. Lower volume predicts lower prices. Sounds like nothing.
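One way this could be scored, as a sketch only. The post doesn't say how the market noise gets stripped, so I'm assuming something simple: take each coin's log change in average volume (last 5 days vs. the 20 days before) and subtract the cross-sectional median. The window lengths and the name `volume_trend_scores` are my placeholders, not the trader's.

```python
import math
from statistics import mean, median


def volume_trend_scores(volumes, recent=5, base=20):
    """Market-adjusted volume change per coin; positive means volume is picking up."""
    raw = {}
    for sym, series in volumes.items():
        if len(series) < recent + base:
            continue  # not enough history for this coin
        recent_avg = mean(series[-recent:])
        base_avg = mean(series[-(recent + base):-recent])
        if recent_avg > 0 and base_avg > 0:
            raw[sym] = math.log(recent_avg / base_avg)
    if not raw:
        return {}
    # Assumption: the median change stands in for the market-wide move.
    market = median(raw.values())
    return {sym: change - market for sym, change in raw.items()}


# Made-up daily volumes purely for illustration.
volumes = {
    "UP": [100] * 20 + [200] * 5,
    "FLAT": [100] * 25,
    "DOWN": [100] * 20 + [50] * 5,
}
scores = volume_trend_scores(volumes)
```

Coins with positive scores would go on the long side and negative scores on the short side.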

- Short small caps that pump

Momentum works on large caps. Flips negative by the third or fourth decile. Bottom 20% of Binance perps makes a 20-day high - short it. Strong edge because it's the market maker Dubai pump and dump lifecycle playing out mechanically every single time.
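A sketch of the screen, assuming "bottom 20%" means the smallest fifth of the universe by market cap and that the 20-day high is measured on daily closes (both my assumptions). `small_cap_breakout_shorts` is a hypothetical name.

```python
def small_cap_breakout_shorts(market_caps, closes, bottom_frac=0.2, lookback=20):
    """Return the smallest coins whose latest close is a `lookback`-day high."""
    ranked = sorted(market_caps, key=market_caps.get)  # smallest first
    n_small = max(1, int(len(ranked) * bottom_frac))
    candidates = []
    for sym in ranked[:n_small]:
        series = closes.get(sym, [])
        if len(series) >= lookback and series[-1] >= max(series[-lookback:]):
            candidates.append(sym)
    return candidates


# Made-up universe purely for illustration.
market_caps = {"A": 100, "B": 50, "C": 10, "D": 5, "E": 1}
closes = {
    "A": list(range(1, 21)),   # large cap at a 20-day high: ignored
    "E": list(range(1, 21)),   # smallest coin at a 20-day high: short candidate
}
shorts = small_cap_breakout_shorts(market_caps, closes)
```

The same breakout that is a buy in the top 20 is a short here, so the only thing that changes between the two rules is which slice of the size ranking you look at.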

- New Binance listing short

Market maker contract is 90 days. Strike price set off 7-day VWAP after launch. From day 7, delta hedging mechanically pushes the coin down. Short it for 90 days. Every time. Edge comes entirely from understanding how the game is structured, not from any signal at all.
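For reference, the two mechanical pieces as a sketch: the volume-weighted average price over the first 7 days, and a check for whether a listing is inside the day 7 to day 90 window. The 7 and 90 come from the podcast as reported above; the function names and the inclusive window edges are my assumptions.

```python
def vwap(prices, volumes):
    """Volume-weighted average price over matching price/volume observations."""
    total_volume = sum(volumes)
    if total_volume == 0:
        raise ValueError("no volume traded")
    return sum(p * v for p, v in zip(prices, volumes)) / total_volume


def listing_short_active(days_since_listing, start_day=7, end_day=90):
    """True while the listing is inside the assumed short window."""
    return start_day <= days_since_listing <= end_day


# Made-up numbers purely for illustration.
reference_price = vwap([10, 20], [1, 3])
```

Here `reference_price` is 17.5, since three quarters of the volume traded at 20.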

Another case of simplicity winning. Will drop the podcast in the replies, worth a listen.