Lazy Engineer

102 posts

@a_lazy_engineer

automating stuff so I can get back to what I love - stories and music

India · Joined August 2022

106 Following · 13 Followers

The recent Lenny's Podcast episode with Evan Spiegel had some ideas that really hit home, especially for anyone building in the AI space.

Distribution is the real moat, not product-market fit

Everyone obsesses over PMF. I get it, I do too. But Spiegel made a point that's been rattling around my head: distribution is increasingly the hardest problem to solve, and it's only getting harder.

TikTok won through massive financial subsidies. Threads won by piggybacking on Meta's billions of existing users. Snapchat got lucky being early in mobile.

The uncomfortable truth? The best product doesn't always win. The best distributed product does.

As AI makes building easier for everyone, your edge won't be what you build. It'll be how you get it in front of people.

Build ecosystems, not just software

Durable moats come from creator-developer relationships and hardware, not just features. Being copied validates your innovation. It's better than building something no one bothers to copy.

And my personal favourite:

"Two ears, one mouth, use them in proportion." Spiegel's life motto: listen twice as much as you speak.

There are a lot more ideas in the podcast. Summary link below. Let's discuss what you think about these...

Lazy Engineer reposted

wisdom is the new intelligence.

joe hudson (who coaches sam altman and research teams across openai, anthropic, deepmind, apple) has the best explanation why

his logic is simple: every major technology shift in history changed which human skill mattered most

1. before the industrial revolution, physical strength was the edge.

farming, building, hauling goods, fighting wars.

the stronger you were, the more you could produce and the more you were worth

2. then machines took over the physical work. so the edge shifted to learned skills.

you could learn a trade, work a factory line, operate equipment.

the skill was knowing how to do the thing

3. then the information age hit and the edge moved again. raw intelligence.

if you could process information, write code, analyze systems, solve complex problems, you had the advantage

4. now ai is outsourcing intelligence.

you can get a free tool to write your emails, research your market, analyze your data, build your software

so what's the edge now?

wisdom.

sounds abstract until you break it down:

it's the quality of the decisions you make.

> can you see patterns others miss?

> can you decide well on where to direct the ai?

> can you do the hard thing when everyone else avoids it?

> can you spot which opportunity is real and which is hype before you waste 3 months on it?

in other words, a form of taste and emotional intelligence

hudson put it like this:

"if I can get 70 people to run a company for me, they're all free and they're all AI agents, then the question is, what are the decisions I'm making to make that company successful? What advice am I taking? How am I listening advice? How do I create alignment between the five or six people?"

ai handles the thinking, but only you can handle the deciding

we're moving from knowledge workers to wisdom workers

Lazy Engineer reposted

- Drafted a blog post

- Used an LLM to meticulously improve the argument over 4 hours.

- Wow, feeling great, it’s so convincing!

- Fun idea let’s ask it to argue the opposite.

- LLM demolishes the entire argument and convinces me that the opposite is in fact true.

- lol

LLMs may give an opinion when asked, but they are extremely competent at arguing in almost any direction. This is actually super useful as a tool for forming your own opinions; just make sure to ask it to argue in different directions, and be careful with the sycophancy.

@SalesMastery_HQ @Codie_Sanchez Agreed. It’s not just about getting to an answer, it’s about becoming the kind of person who can arrive at one.

Writing two books taught me something AI never could.

The process of organizing everything you know into something coherent - the struggle of it - builds a kind of thinking that a prompt can't shortcut.

Old books did the same thing for the people who wrote them.

That's what you're reading when you read something that's lasted 100 years.

The residue of someone's deepest thinking.

AI gives you the answer.

Old books show you how someone arrived at theirs.

Different thing entirely.

Lazy Engineer reposted

@sickdotdev It will shift towards a more structured method: 'Spec-Driven Development'.

@samreenn2525 That's true, plus it's great for personal mental health😅

@_Anish__Kumar Hobby? This is the hobby.

Low cost. High entertainment. Zero maintenance. 😌

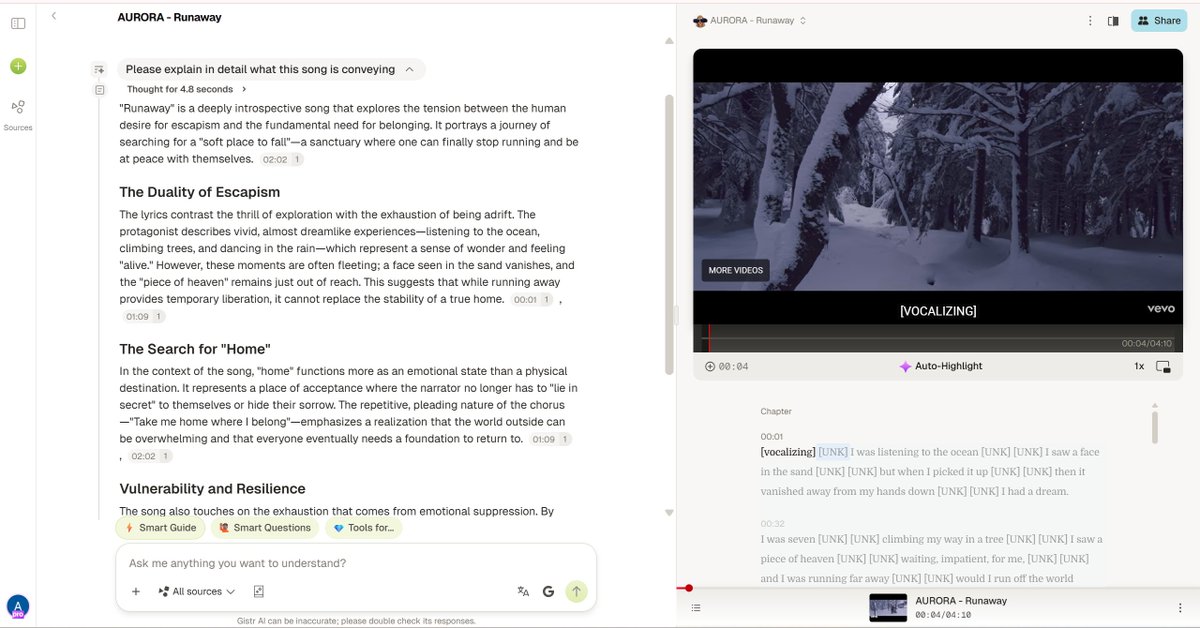

@_Anish__Kumar This is beautiful. Music + reflection is such a powerful combo... thrilled Gistr could be part of that.

It’s a wild experience where you let your mind wander over its inner meaning as you watch them alongside.

@samreenn2525 There is too much noise right now.

That makes real visibility incredibly hard.

Marketing is not just about awareness anymore; it is also about standing out from everything around you.

@shivanijpatel I am interested to know which startup he founded. Building products is not the bottleneck anymore. It's the distribution bit that's complex.

@itsolelehmann This reminds me of the recent article by @irabukht where she mentions 'AI made building free and distribution harder than ever. Everyone ships now. Good luck getting anyone to notice.'

With too much noise, getting noticed will be the hardest part.

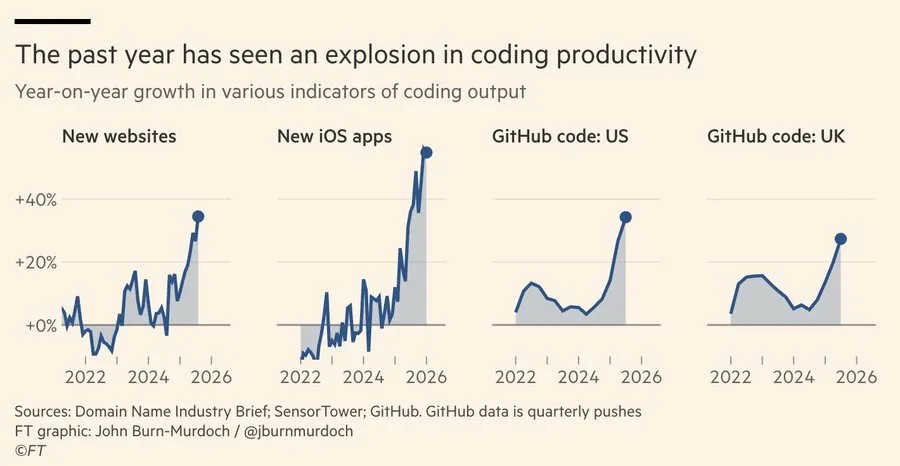

Look at this chart.

The Financial Times published this data recently and it's been stuck in my head since I first saw it.

This is the inflection point.

New websites, iOS apps, GitHub commits, all going parabolic.

A teenager with Claude can ship what used to take a funded team and six months of runway.

Solo developers are launching full SaaS products over a weekend.

Designers who've never written a line of code are building functional apps.

The barrier to creating software has, for all practical purposes, collapsed.

Which leads to a question that I think is more important than most people realize:

If everyone can build, what happens to the *value* of building?

When the supply of something explodes, the thing itself gets cheaper.

That's Markets 101. And software supply is going vertical right now.

The logical next step is that the money and energy that used to flow toward production has to find a new home.

It flows toward the only bottleneck that remains: getting people to actually pay attention.

Human attention is, I think, the last genuinely scarce resource in an economy where production costs are collapsing toward zero.

AI can generate a million apps. It still can't make anyone download one.

It can write a million blog posts. It still can't make anyone read them.

And this is why it's never been more important to learn how to engineer attention.

If you understand both marketing and AI, you're now holding a combination that companies will pay 100x for.

Most people are learning one or the other.

The person who can do both, who can capture attention AND use AI as the lever to do it at scale, is probably the most valuable person in any room right now.

There's an assumption floating around that AI will commoditize marketing the same way it's commoditizing code.

People think "vibe marketing" will follow the same path as "vibe coding"

And I get why that's the intuition.

AI can write copy, generate ads, build landing pages. Same playbook, same compression.

But I don't think it works that way, and the reason comes down to something I keep calling the taste problem.

AI is already deeply embedded in marketing.

The algorithms that decide what you see on every platform, the recommendation engines, the ad targeting, etc.: all of it runs on AI.

That part is done. But knowing how to read those systems, how to work with them, how to craft something that the algorithm wants to push and that a human actually wants to engage with, that still requires taste.

And "good enough" marketing simply doesn't cut it anymore.

"There is no demand for average" - Naval

The bar for what breaks through keeps rising precisely because AI is flooding every channel with competent, forgettable content.

You can prompt AI to write a tweet.

You *cannot* prompt it to write a tweet that 50,000 people share.

That gap between "technically correct output" and "people actually care" is taste, and taste is built from years of understanding narrative, timing, cultural context, when to be funny, when to shut up.

There's no shortcut. I've watched plenty of people look for one.

What's sort of counterintuitive is that AI actually widens the gap between people who have taste and people who don't.

If you have it, AI becomes a genuine force multiplier.

You test 40 subject lines in the time it used to take to write 4.

You draft a full campaign in an afternoon and spend your real energy on the 15% that makes it land instead of grinding through the 85% that's just scaffolding.

But if you don't have taste, AI just helps you produce more mediocre work, faster (which is arguably worse than producing mediocre work slowly, because at least then you weren't flooding your own channels with it).

Code has a finish line. It either works or it doesn't. You can write tests, benchmark it, measure performance.

A model can evaluate whether code does what it's supposed to do.

But attention, trust, brand, these are built from a thousand small decisions that compound over months and years.

They're the residue of every interaction someone has with you, and none of it can be reverse-engineered by running inference on a competitor's marketing.

This is why distribution specifically resists commoditization in a way that engineering doesn't.

So the people who spent the last few years building audiences, developing their voice, earning trust one reader at a time, they're holding something that just got *radically* more valuable.

The landscape shifted around them.

Every other competitive advantage in tech got cheaper overnight, and theirs didn't.

Human attention will forever be scarce.

Therefore in an age of AI abundance, the art of capturing it is the last moat.

Will AI create new job opportunities? My daughter Nova loves cats, and her favorite color is yellow. For her 7th birthday, we got a cat-themed cake in yellow by first using Gemini’s Nano Banana to design it, and then asking a baker to create it using delicious sponge cake and icing. My daughter was delighted by this unique creation, and the process created additional work for the baker (which I feel privileged to have been able to afford).

Many people are worried about AI taking people's jobs. As a society we have a moral responsibility to take care of people whose livelihoods are harmed. At the same time, I see many opportunities for people to take on new jobs and grow their areas of responsibility.

We are still early on the path of AI generating a lot of new jobs. I don't know if baking AI-designed cakes will grow into a large business. (AI Fund is not pursuing this opportunity, because if we do, I will gain a lot of weight.) But throughout history, when people have invented tools that unleashed human creativity, large amounts of new and meaningful work have resulted. For instance, according to one study, over the past 150 years, falling employment in agriculture and manufacturing has been “more than offset by rapid growth in the caring, creative, technology, and business services sectors.”

AI is also growing the demand for many digital services, which can translate into more work for people creating, maintaining, selling, and expanding upon these services. For example, I used to carry out a limited number of web searches every day. Today, my agents carry out dramatically more web searches. For example, the Agentic Reviewer, which I started as a weekend project and Yixing Jiang then helped make much better, automatically reviews research articles. It uses a web search API to search for related work, and this generates a vastly larger number of web search queries a day than I have ever entered by hand.

The evolution of AI and software continues to accelerate, and the set of opportunities for things we can build still grows every day. I’ve stopped writing code by hand. More controversially, I’ve long stopped reading generated code. I realize I’m in the minority here, but I feel like I can get built most of what I want without having to look directly at coding syntax, and I operate at a higher level of abstraction using coding agents to manipulate code for me. Will conventional programming languages like Python and TypeScript go the way of assembly — where it gets generated and used, but without direct examination by a human developer — or will models compile directly from English prompts to byte code?

Either way, if every developer becomes 10x more productive, I don't think we’ll end up with 1/10th as many developers, because the demand for custom software has no practical ceiling. Instead, the number of people who develop software will grow massively. In fact, I’m seeing early signs of “X Engineer” jobs, such as Recruiting Engineer or Marketing Engineer, which are people who sit in a certain business function X to create software for that function.

One thing I’m convinced of based on my experience with Nova’s birthday cake: AI will allow us to have a batter life!

[Original text: deeplearning.ai/the-batch/issu… ]

The 'X Engineer' concept is exactly what is happening in the market right now. 📊 When natural language becomes the new compiler, domain experts in marketing, recruiting, and HR suddenly have the power to spin up custom software without waiting on an IT backlog. The bottleneck is no longer code; it's domain knowledge.

The Stanford Agentic Reviewer is such a perfect example of this expanding demand. 🔬 By pulling from arXiv to ground its feedback and mimicking human review scores so closely, it doesn't replace the researcher; it just cuts the feedback loop down from six months to minutes. It's pure leverage.

Not reading the generated code is a controversial take, but it's the right one. 💻 We don't read the assembly code that our C++ compiles into either. If you have the right agentic wrappers verifying the output, operating purely at the architectural level is the only way to scale your output 10x.
