Ashutosh Baheti

139 posts

@abaheti95

Sr. Research Scientist, Agentic RL @databricks. I'm interested in LLMs, Agents, Tool use, Reinforcement Learning, and making a JARVIS 🤖

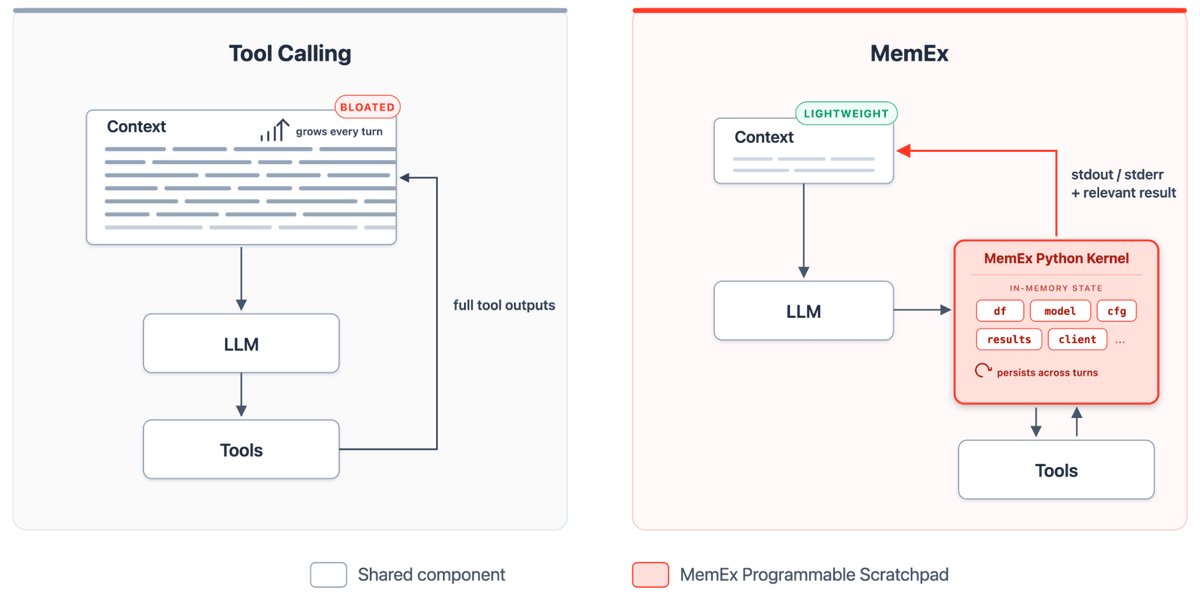

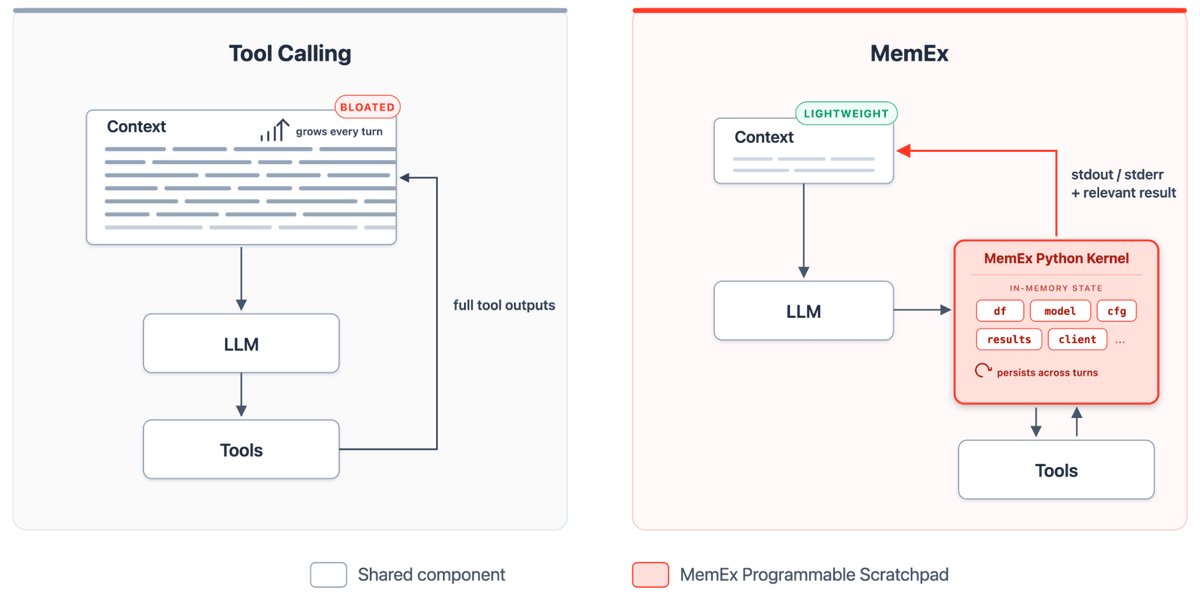

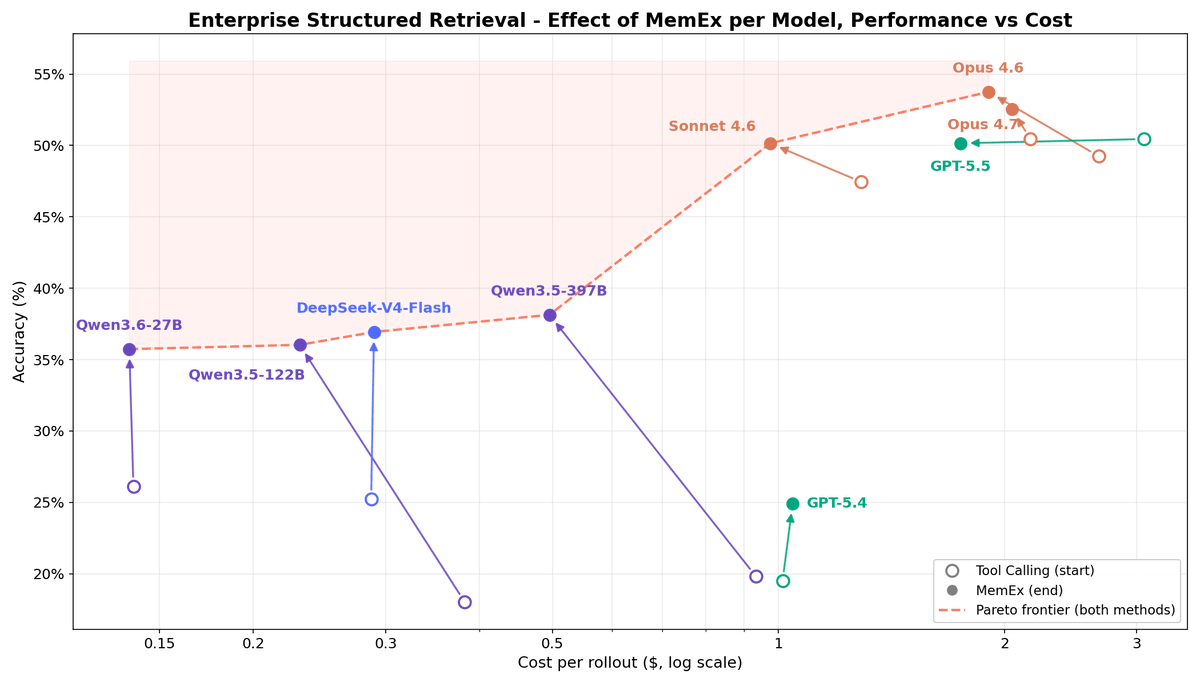

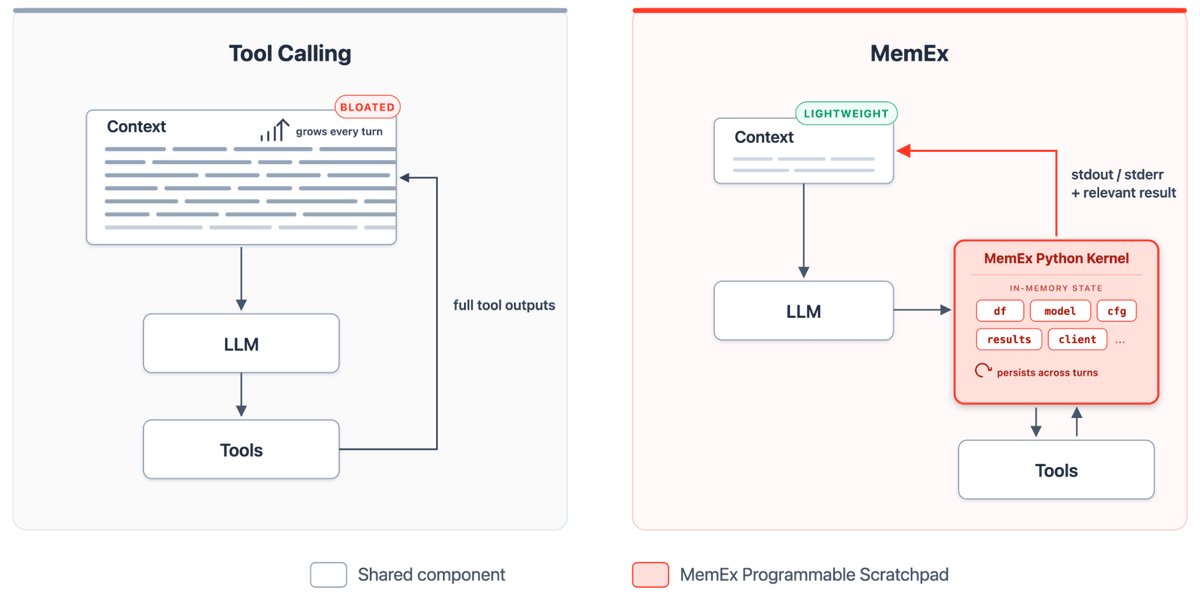

In 1945, Vannevar Bush imagined a machine to extend a scientist's memory. He called it the MemEx. 80 years later, we built one for LLM agents. Tool outputs become Python objects; only print statements reach the model's context. 🧵 databricks.com/blog/memex-pro…
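A rough sketch of the idea in that post, not the Databricks implementation: agent-written code runs in a persistent Python namespace, tool results stay there as objects, and only what the code prints is returned for the model's context. The names `Workspace`, `run_sql`, and `fake_sql_tool` are hypothetical and exist only for illustration.

```python
import contextlib
import io


def fake_sql_tool(query):
    """Hypothetical tool: returns a large result the model never sees in full."""
    return [{"id": i, "revenue": i * 10} for i in range(10_000)]


class Workspace:
    """Persistent namespace; only printed text goes back to the model."""

    def __init__(self, tools):
        # Tools are exposed as plain callables inside the namespace.
        self.namespace = dict(tools)

    def run(self, code):
        # Capture stdout so the return value holds just the print output.
        buffer = io.StringIO()
        with contextlib.redirect_stdout(buffer):
            exec(code, self.namespace)
        return buffer.getvalue()


ws = Workspace({"run_sql": fake_sql_tool})

# Step 1: the 10,000-row result is stored as `rows`; only its length is printed.
first = ws.run("rows = run_sql('SELECT * FROM sales')\nprint(len(rows))")

# Step 2: a later step reuses `rows` without re-sending it through the context.
second = ws.run("total = sum(r['revenue'] for r in rows)\nprint(total)")

print(first, second)
```

In this sketch the full result set never enters the context; the model would see only the two short printed strings. A real system would also need sandboxing, since `exec` runs arbitrary code.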

At Databricks, 🧞Genie hits this wall every day! Its queries span an entire workspace and pull data from tables, vector indices, and other sources via many tool calls. Here's how MemEx can convert complex workflows like these into streamlined code with far less token repetition.

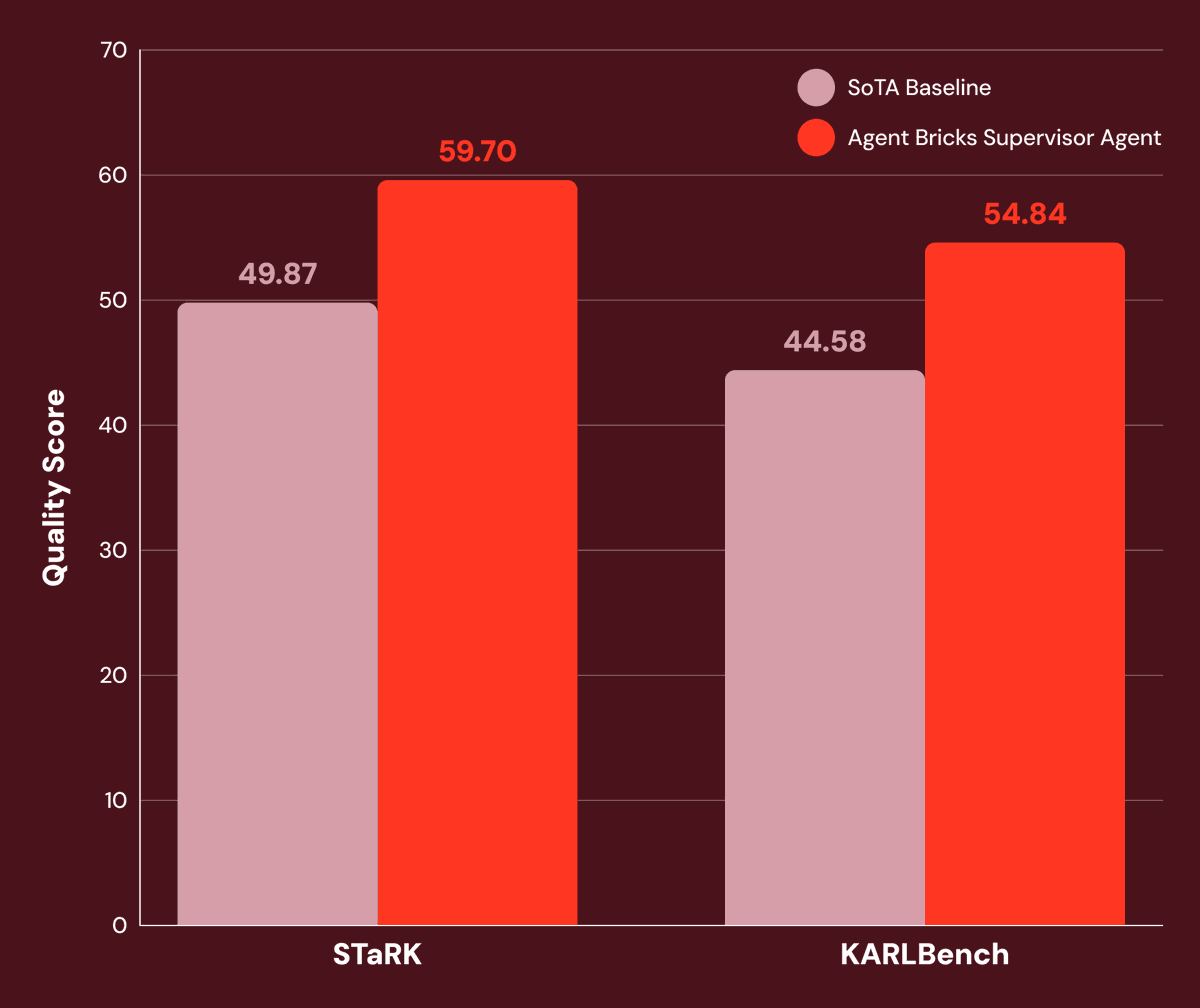

Genie has transformed how Databricks users work with data, with 3x the accuracy of generic agents. We're sharing some of the research behind it and what makes building data agents challenging. Super proud of our research team's impact with this! databricks.com/blog/pushing-f…

Today we're announcing Genie Code, your autonomous AI partner for data. Genie Code is a state-of-the-art agent that lets data teams move from prompting a copilot to delegating real work: building pipelines, machine learning models, debugging failures, and shipping dashboards.

This isn't a smarter autocomplete. It's a different kind of AI partner entirely. Unlike general coding agents that stop once the code is built, Genie Code plans, executes, and iterates across the full data and AI lifecycle inside Databricks.

It's purpose-built for data engineering, data science, and BI:

• More than doubles the success rate of leading coding agents on real-world data science tasks

• Proactively monitors your pipelines and AI models in the background, triaging failures and fixing issues before a human intervenes

• Works with your data wherever it lives, across Databricks and external platforms, with full governance and MCP support

This is what the future of data work looks like. databricks.com/blog/introduci…