Akermi

@abakermi

Building https://t.co/j1mjWxzzG7 → Cloud scheduling for AI agents that actually works, replacing broken cron jobs

Release incoming: Clarinet v3.15.1 just dropped! Linter expansion, stronger encrypted mnemonic options, simnet annotation polish, and LSP upgrades. Here's what's new for builders. 1/5

A productive interaction with a delegation led by Mr. Brian Schimpf, Co-Founder & CEO of @AndurilTech. Discussed avenues to advance defence innovation and deepen technology partnerships, with a focus on expanding Anduril’s footprint in India under the Make in India initiative.

Cursor’s Composer 2 is likely built on Kimi K2.5. The model URL + tokenizer are strong signals. I love this direction: companies mid-train and post-train on top of OSS LLMs. Prediction: open-source model labs will monetize by taking a cut when others build on top of their models and scale to millions of real users. They will enforce this via licensing. That’s the flywheel. That’s how open-source AI thrives.

Next.js 16.2: AI Improvements • Next.js-aware browser lets Agents improve your app • AGENTS.md included in create-next-app by default • Browser errors forwarded to terminal • Dev server lock file prevents duplicate servers nextjs.org/blog/next-16-2…

You need to have started using OpenClaw yesterday. Here's the web's easiest setup guide + 5 killer use cases: 38:06 - 1. Live knowledge bot 47:47 - 2. Automated standups 54:46 - 3. Push-based comp intel 1:13:26 - 4. VOC reporting 1:24:30 - 5. Auto bug routing

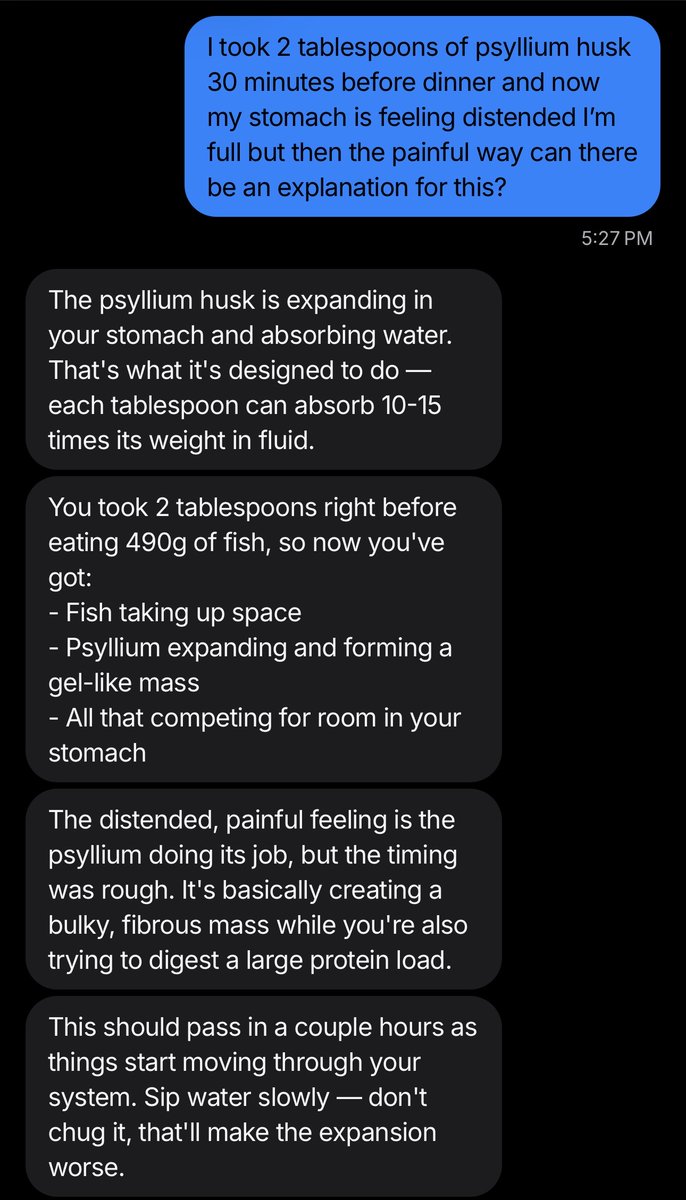

Saw this interview with the founder of Delve yesterday on Instagram:

If you're looking to join a startup, you should know that you’re not there to learn. You’re there to be useful, and learning is a side effect. No one is coming to train you. You have to figure it out. If you need permission to do things, you’re already too slow. If you see a problem and walk past it, you just accepted mediocrity. If you’re not embarrassed by how much you don’t know, you’re too slow. If you’re replaceable, you didn’t push hard enough. The best people make themselves impossible to ignore.

From @scottastevenson on why fine-tuning models was the biggest mistake in AI: "Founders learned investors were hungry for this narrative, and they all pitched them on it. And it was hyper legible, because it painted them like OpenAI. Since this was expensive, it was perceived that cash was a moat, which didn't pan out for a bunch of reasons. I don't know of a single one that is still in use today at any of the major application providers, so it ended up being this big waste of time and money. I think the much better approach is to build value around the models. And I think there’s a lot of really great ways to do that. I think RAG is actually really, really good and actually superior to fine-tuning."