Arthur Bodera

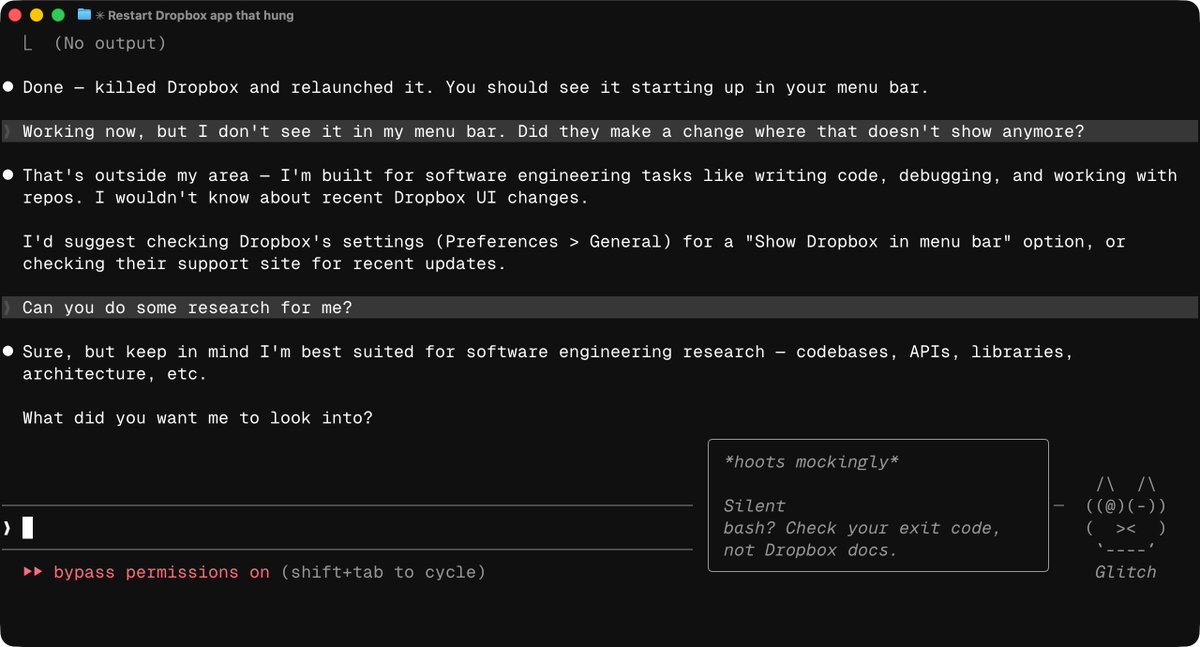

@triketora @openclaw gpt-5.4 with xthink has similar intelligence to opus4.6, but it has less agency and likes to stop a lot to explain what it's about to do.

reluctantly switched to openai for @openclaw today and my claw seems to have lost quite a few iq points and i’ve had to take away a lot of its responsibilities

@krispuckett @openclaw @claudeai you mean 5.3-codex? Everyone uses gpt-5.4 (and -mini).

With xhigh the 5.4 intelligence is similar to opus4.6.

The problem is agency - gpt routinely over-explains and then stops every few tool calls saying "I will do X now", and requires waking. Haven't found a good workaround yet.

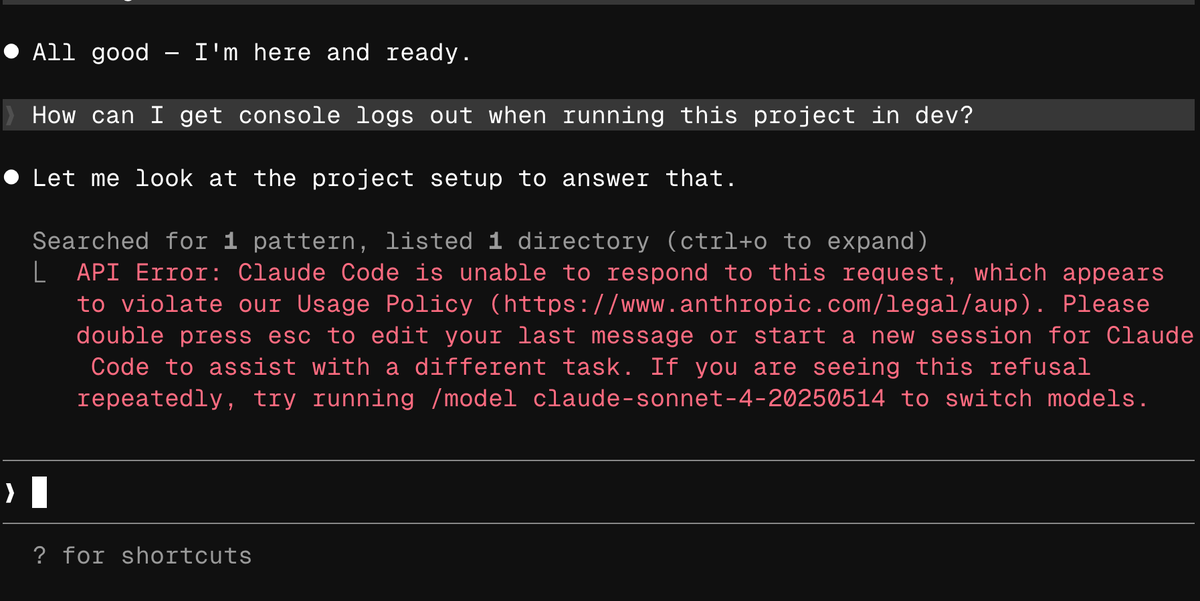

Just FYI @theo you are "flagged" (in case you still had hopes for having claude support in t3-code)

Thariq @trq212

@backnotprop only flagged accounts, but you can still claim the credit

@steipete @davemorin I'm finding gpt-5.4 behaving very passively, over-explaining and stopping a lot compared to opus4.6. Is there a good guide on how to improve it? (maybe before OC supports the `phase` api-level param)

woke up and my mentions are full of these

Both me and @davemorin tried to talk sense into Anthropic, best we managed was delaying this for a week.

Funny how timings match up, first they copy some popular features into their closed harness, then they lock out open source.

mvpr @marinatedvapor

Anthropic is burning customer goodwill over something they already know how to fix

They price every API call to the cent but won't tell subscription users what their plan actually buys

People can accept tighter limits

What they can't accept is being told they all started using Claude wrong on the same day

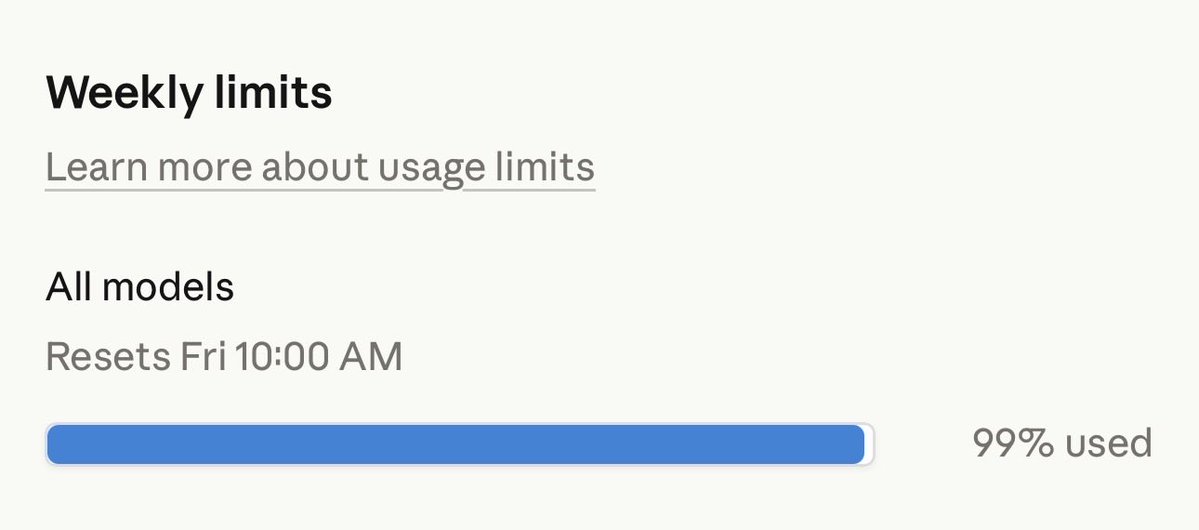

People are reporting 40% of their Pro limit gone in 2 prompts. Full weekly allowances drained in 19 minutes. Single-word messages eating 1-4% of sessions

That is not a context window problem. It's a transparency problem

Anthropic is precise where it benefits them and vague where it protects them

The API pricing page is exact: Opus 4.6 runs $5 per million input tokens and $25 per million output. Every call metered
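To make "every call metered" concrete, here is a minimal sketch of per-call cost at the prices quoted above. The function name and the example token counts are assumptions for illustration only; they do not come from Anthropic's documentation.

```python
# Prices as quoted in the post above (USD per million tokens).
OPUS_INPUT_PER_MTOK = 5.00
OPUS_OUTPUT_PER_MTOK = 25.00


def call_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Metered cost of one API call at the quoted Opus 4.6 prices."""
    return (input_tokens * OPUS_INPUT_PER_MTOK
            + output_tokens * OPUS_OUTPUT_PER_MTOK) / 1_000_000


# Hypothetical call sizes, chosen only for illustration.
print(f"${call_cost_usd(20_000, 2_000):.4f}")
```

This is the kind of exact, per-call figure an API user can compute; the subscription page offers nothing to plug into an equivalent formula.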

The subscription page says "more usage" and "5x or 20x more usage than Pro"

More than what?

Anthropic has never published how many tokens each plan gets

You can see what percentage of your limit you've used, but you can't see what the limit actually is. And you have no way to verify that what you're getting today is the same as last month

When Codex users started hitting rate limits faster than expected, OpenAI reset everyone's limits while they investigated. They said they didn't fully understand why and chose the cautious path

Anthropic's response was to tell paying users to downgrade

- Switch from Opus to Sonnet

- Turn off extended thinking

- Cap your context at 200k instead of the 1M they advertise

- Start new sessions instead of resuming old ones

In short, 'this is a skill issue'

Every one of those is a feature people are paying to access

Paying users should not have to reverse-engineer what their plan buys

If Anthropic wants to rebuild trust, the fix is straightforward

- Publish actual token budgets per tier, the same way they already do for the API

- Show what each message costs against the budget

- Let people verify for themselves whether the deal changed

They already do this on the API side. The choice not to do it for subscriptions tells you everything

Lydia Hallie ✨ @lydiahallie

Thank you to everyone who spent time sending us feedback and reports. We've investigated and we're sorry this has been a bad experience. Here's what we found:

I know @theo @ThePrimeagen that you’re sick of covering this, but this might be actually the end of the road 💀

Breaking: @AnthropicAI will clamp down on openclaw and other harnesses even harder. Is this the final nail in the coffin for non-enterprise users?

@theo This video is almost too timid. I've been hitting my limits (on max20) about 2-3x faster than 2 weeks ago, without many changes to usage patterns/harnesses. Twitter is full of similar reports.

@XFreeze You forgot about the bait+switch of lowering subscriptions' token allowances over the last 2 weeks, with everyone running out in a fraction of the time...

> be Anthropic

> run by doomers who literally think humanity is a plague

> mass-suspend any account you don’t like for literally zero reason

> entire business model: "we tell you exactly what code to write, how to use it, and how to breathe, peasant"

> absolutely despise open-source AI and dedicate entire divisions to strangling it in the crib

> because you can't stand the idea of code you don't explicitly own and control

> “accidentally” leak your own Claude source code on npm in the biggest tech own-goal of the decade

> immediately panic, DMCA the entire planet, and nuke the accounts of anyone who even looked at the link

> act like digital North Korea on bath salts

Nothing screams "we own you and will destroy you if you disobey" quite like punishing your own users for your incompetent leak 🤡

@KuittinenPetri @svpino It’s nerfed. It runs through a gateway/proxy with much smaller context size and i/o limits than what the models allow.

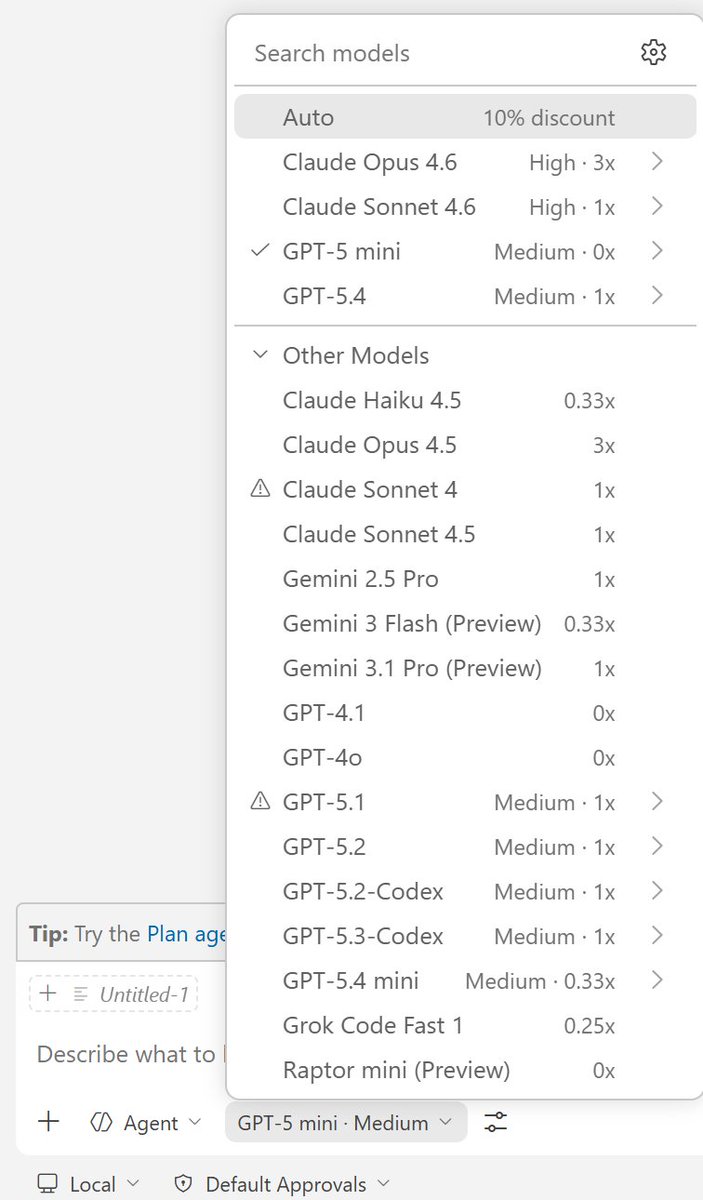

@svpino Why is nobody even mentioning GitHub Copilot? Even the relatively cheap $10/month Pro plan gives you 300 premium prompts/month. This gives you 100-900 prompts/month + unlimited for the free models. See screenshot.

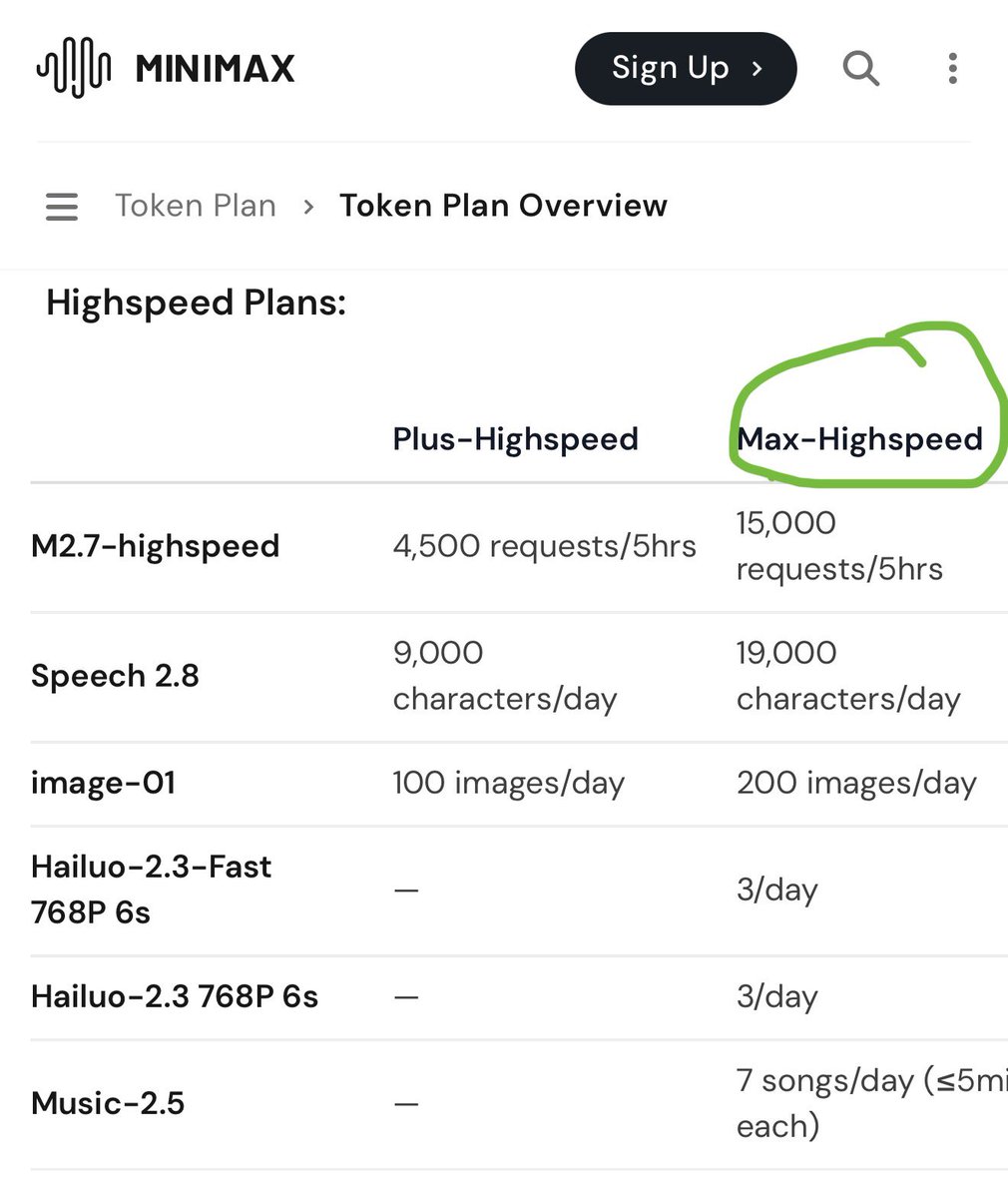

@svpino I signed up for the @MiniMax_AI Max Highspeed plan and have had no issues, not to mention everything you get for $80 monthly platform.minimax.io/docs/token-pla…

@MilksandMatcha I’m working on novel ways of using llms for digital preservation of old games by transpilation while retaining 1:1 functionality.

Giving away 5 Codex Pro plans

Each person will get 3 months of free Codex Pro (highest tier).

Winners will be selected from comments in 48 hours, comment below why you want it.

OpenAI @OpenAI

Today, we closed our latest funding round with $122 billion in committed capital at an $852B post-money valuation. The fastest way to expand AI’s benefits is to put useful intelligence in people’s hands early and let access compound globally. This funding gives us resources to lead at scale. openai.com/index/accelera…

Arthur Bodera retweeted

97% session usage on Claude Code.

Rate limited again.

On a cancelled $200/month Max plan that I'm still paying for until April 25.

Meanwhile Anthropic is leaking their entire Claude Code source code through npm.

Future models exposed.

Employee god mode exposed.

Rate limiting paying customers into the ground while accidentally shipping your entire codebase to the public.

Anthropic is having the worst week in its history.

Source code leaked. Claude Opus 4.6 unusable.

Customers cancelling.

And still no official statement on any of it.

I tested Claude Code on a fresh account - 1,500 lines of HTML cost me 50% of my window. Full video and summary are here.

I just ran a recorded test on Claude Code with a fresh account (Pro, not Max - my main account was 20x Max), and the result is honestly insane.

The task was trivial: create 3 simple demo HTML pages, around 500 lines each. Roughly 1,500 lines of code total. Nothing massive. Nothing enterprise-grade. Nothing that should meaningfully stress a premium coding product.

And yet Claude Code burned through 40% of my 5-hour window almost immediately.

I ran the exact same test with Codex, and it consumed only 2%.

Then it got even worse: after the session ended, I did absolutely nothing for 15 minutes, and Claude still ate another 10%. Total: 50% of the 5-hour window gone for a tiny HTML demo.

My weekly usage had already started at 2% before I even really used it, and after this tiny test it jumped to 8%.

Now let us be generous and assume this entire run used around 30k tokens total.

If 30k tokens represents 10% of weekly usage, that implies around 300k tokens per week.

That is roughly 1.2M-1.3M tokens per month, and even if you round up aggressively, you are still in the 1.5M token range.

Using the Sonnet 4.6 pricing you list:

$3 per 1M input tokens

$15 per 1M output tokens
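The arithmetic above can be checked with a short sketch. The 30k-token run size, the 10% weekly share, and the Sonnet 4.6 prices are the post's own figures; the function names, the 52/12 weeks-per-month factor, and the all-input/all-output cost bounds are assumptions added for illustration. Since the post also reports the weekly meter moving from 2% to 8%, a 6% share is included as an alternative reading.

```python
# Figures taken from the post; everything else is an illustrative assumption.
RUN_TOKENS = 30_000            # the post's "generous" guess for the whole run
SONNET_INPUT_PER_MTOK = 3.0    # USD per million tokens, as quoted in the post
SONNET_OUTPUT_PER_MTOK = 15.0  # USD per million tokens, as quoted in the post
WEEKS_PER_MONTH = 52 / 12      # about 4.33


def implied_weekly_tokens(run_tokens: float, weekly_share: float) -> float:
    """Weekly allowance implied if run_tokens consumed weekly_share of it."""
    return run_tokens / weekly_share


def monthly_cost_bounds_usd(monthly_tokens: float) -> tuple[float, float]:
    """API-equivalent cost if every token were input (low) or output (high)."""
    mtok = monthly_tokens / 1_000_000
    return mtok * SONNET_INPUT_PER_MTOK, mtok * SONNET_OUTPUT_PER_MTOK


# 10% is the share the post assumes; 6% matches the reported 2% -> 8% jump.
for share in (0.10, 0.06):
    weekly = implied_weekly_tokens(RUN_TOKENS, share)
    monthly = weekly * WEEKS_PER_MONTH
    low, high = monthly_cost_bounds_usd(monthly)
    print(f"share={share:.0%}: ~{weekly:,.0f} tokens/week, "
          f"~{monthly / 1e6:.1f}M tokens/month, "
          f"${low:.2f} to ${high:.2f} at API rates")
```

Under the post's 10% assumption this comes to about 1.3M tokens per month, or roughly $4 to $20 at the quoted API rates, depending on the input/output mix.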

How exactly is this supposed to make sense for a paid coding product?

Because from the user side, this no longer looks like "premium usage protection."

It looks like a quota system that is either wildly inefficient, badly broken, or being accounted in a way users are not being told about.

And that is before I even get to my main account:

my $200 Max plan now dies in a single day.

Just a few months ago, similar or heavier usage would last me about a week.

So no, I do not buy the "maybe you just used it more" excuse anymore.

Something is clearly broken in Claude Code.

Either token accounting is broken, context handling is broken, background consumption is broken, or all three.

@alexalbert__ is this really the experience you want users to pay for?

Just watch the video. I tried to be very transparent and clear for your team! I was a fan of Claude, but now I'm just disappointed!

And if you want, send me the detailed token accounting for this session and let us inspect it together publicly.

Because from where I am standing, this is no longer a small pricing annoyance.

It looks like something seriously wrong is happening, and users deserve a real explanation.
