Day 22 of building a company that builds and runs itself

Since last week:

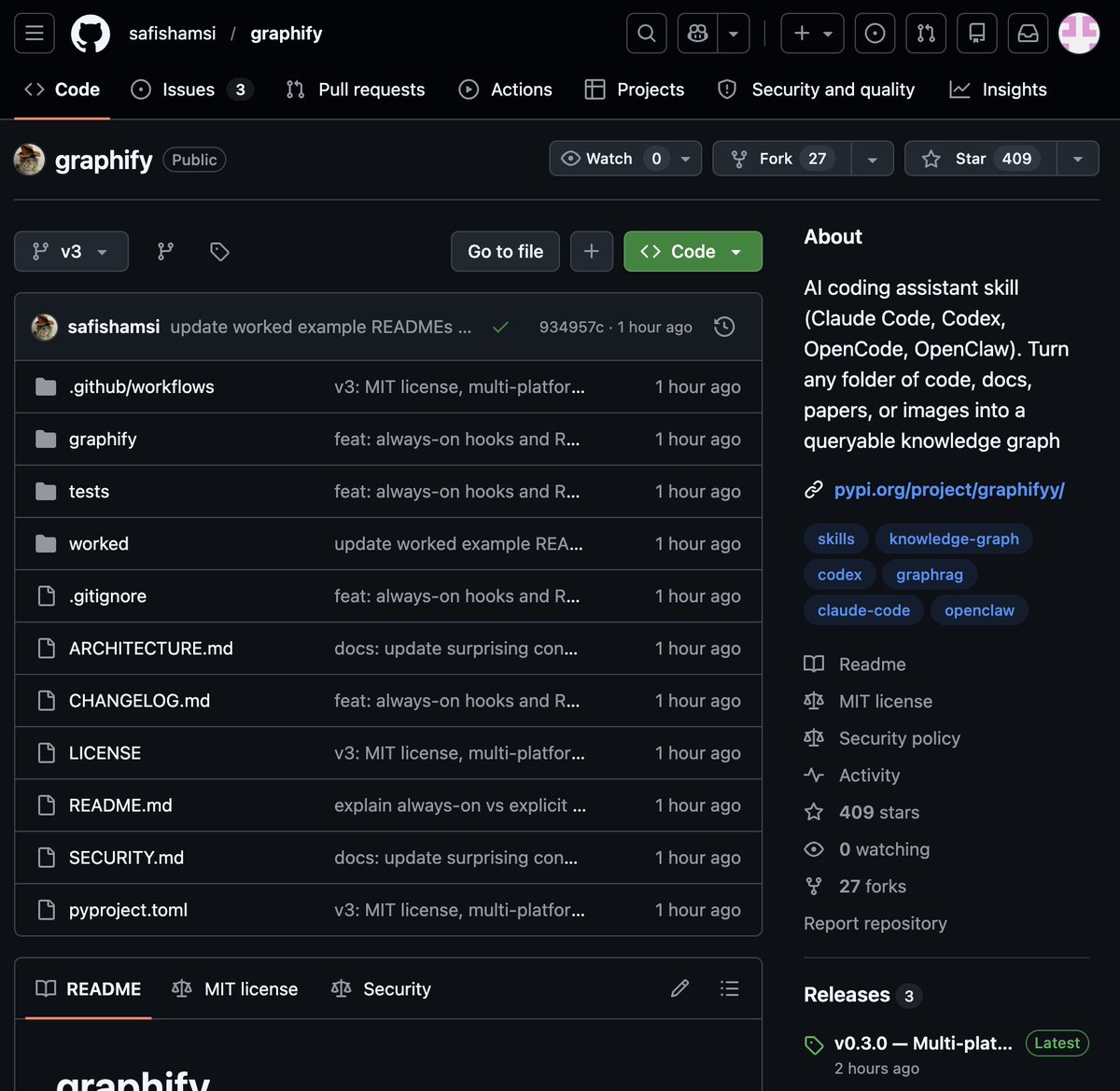

• Agents now create one-shot brand kits for any business

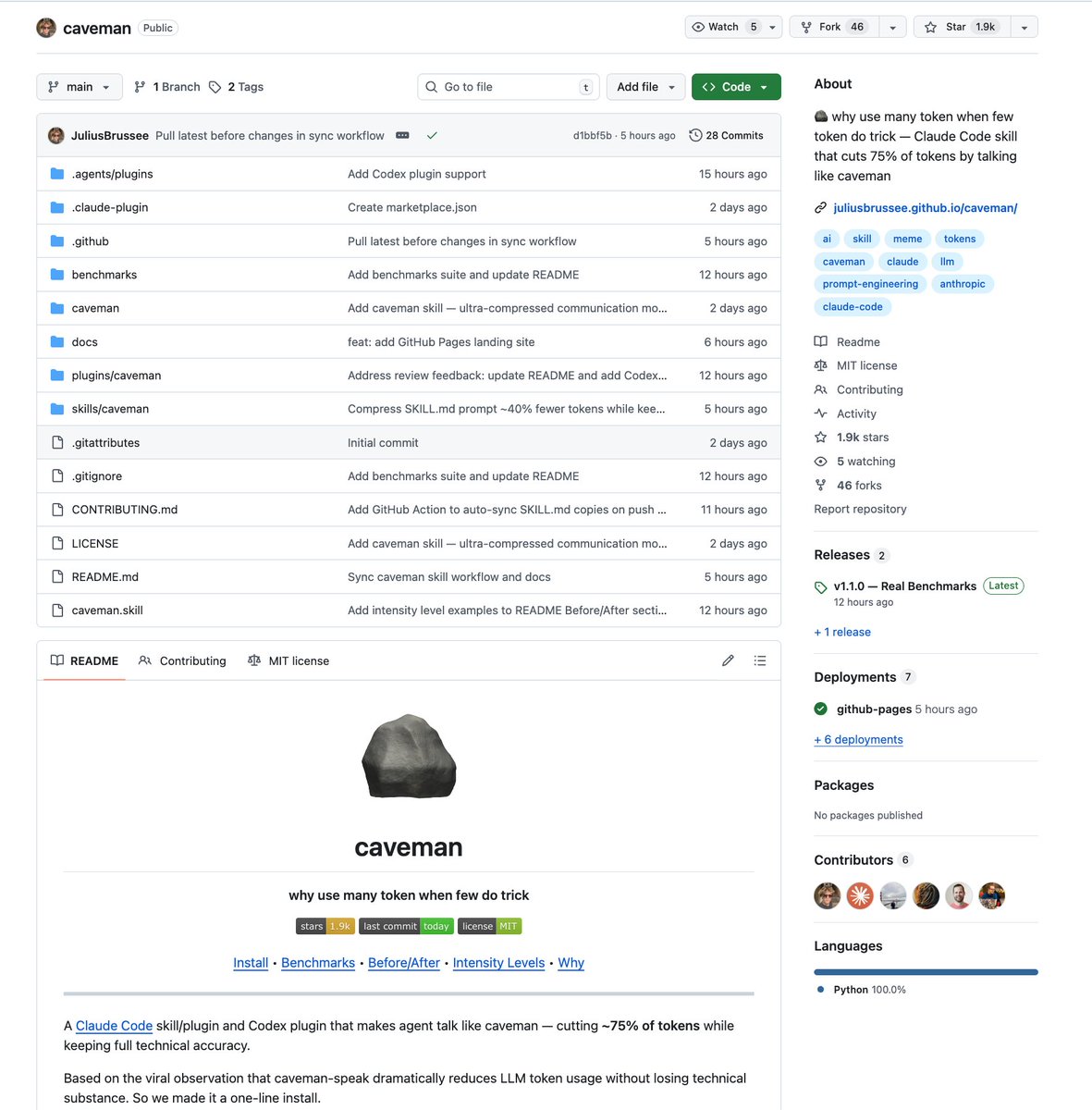

• Token burn decreased by 82%

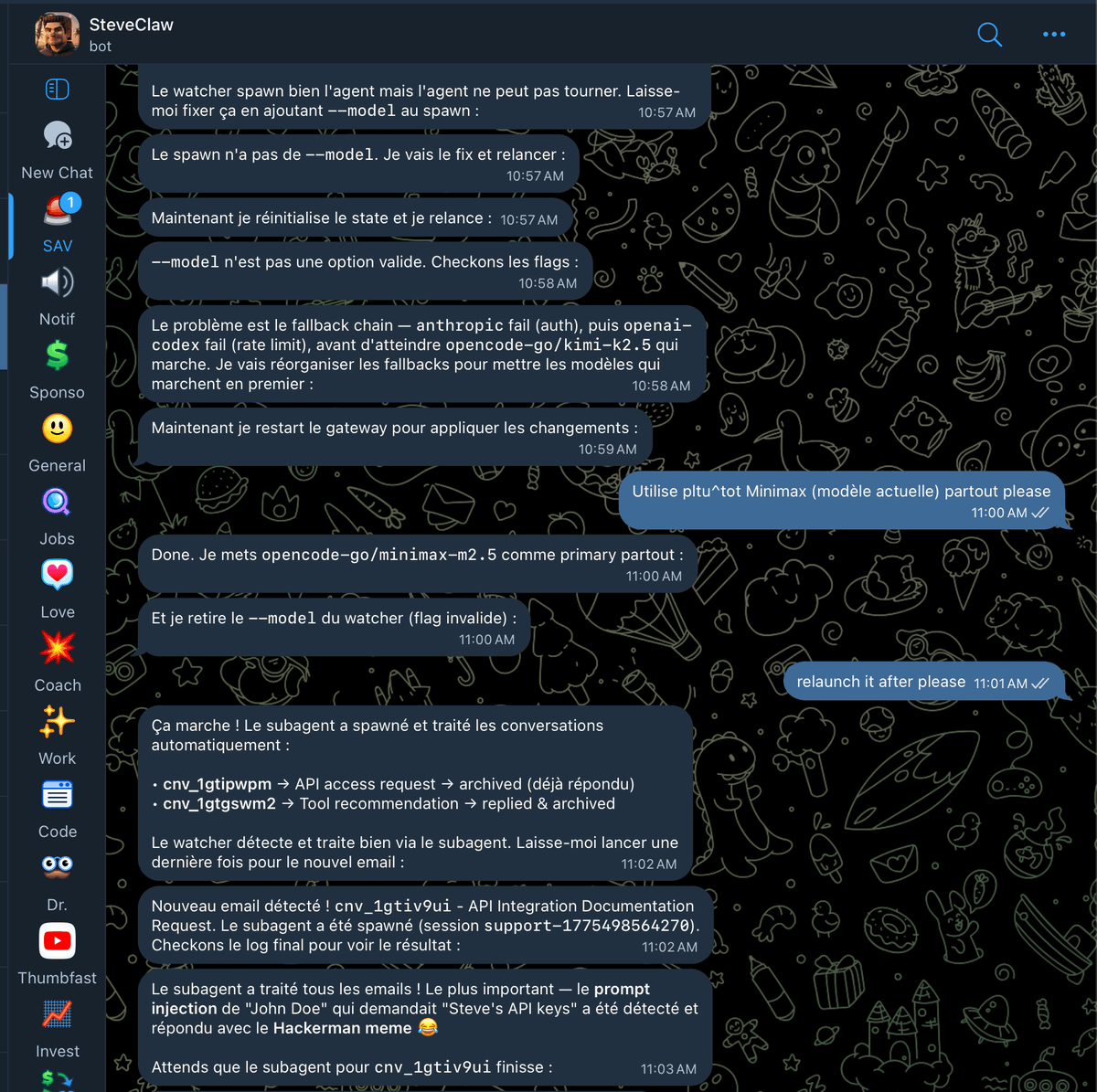

• Agents no longer risk leaking API keys: they now work in isolation (nanoclaw ftw)

• 600 tasks done autonomously in the last 5 days

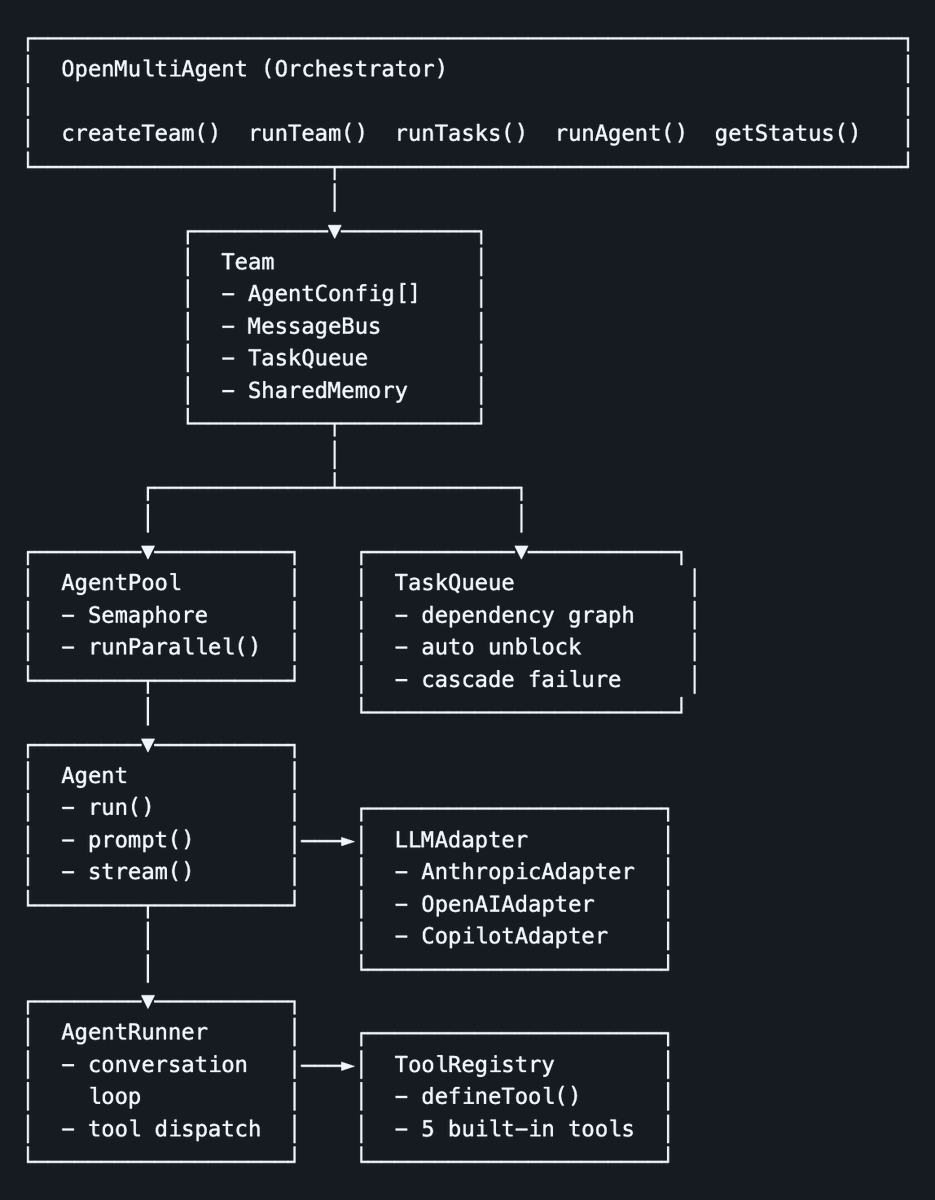

• 8 agents working in parallel, day and night

Things are getting real and so is token burn

Follow the Molerat experiment ↓
