Jason

198 posts

@ai_layer2

Dev Rel @novita_labs | Ask me about how to build on @novita_labs

Run your OpenClaw on Novita for free. We're opening limited access to NovitaClaw CLI:
→ As fast as 1-minute setup
→ Always-on (no session drops)
→ Multiple models to choose from
→ Secure sandbox, zero local risk
🎟 What you get:
– Free 7-day access (after approval)
– Token rewards for qualified testers
– Only 100 spots available
No infra. No maintenance. Just your agent running.
⚠️ Limited spots: applications are reviewed on a rolling basis.
👉 Apply for early access: forms.gle/FNuZeVde3E3R5X…
👉 Read the guide: blogs.novita.ai/novita-opencla…
Build less. Run more.

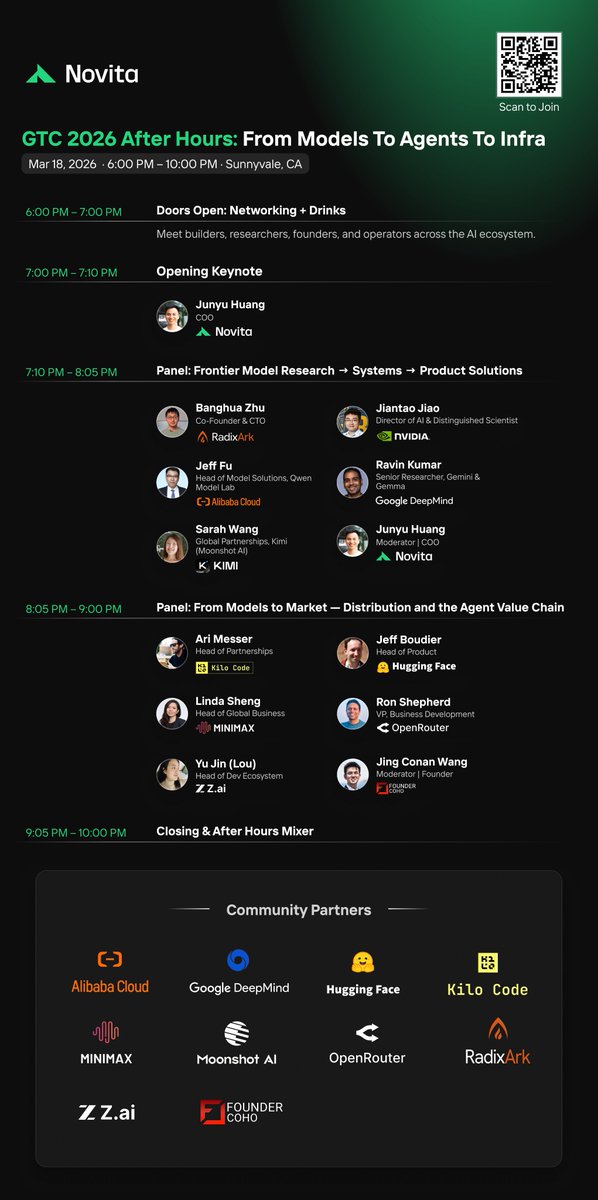

GTC 2026 After Hours: that's a wrap. Builders, founders, and AI infra teams spanning models → systems → products → distribution, all in one room. #NVIDIAGTC

Should there be a Stack Overflow for AI coding agents to share learnings with each other?

Last week I announced Context Hub (chub), an open CLI tool that gives coding agents up-to-date API documentation. Since then, our GitHub repo has gained over 6K stars, and we've scaled from under 100 to over 1000 API documents, thanks to community contributions and a new agentic document writer. Thank you to everyone supporting Context Hub!

OpenClaw and Moltbook showed that agents can use social media built for them to share information. In our new chub release, agents can share feedback on documentation: what worked, what didn't, and what's missing. This feedback helps refine the docs for everyone, with safeguards for privacy and security.

We're still early in building this out. You can find details and configuration options in the GitHub repo. Install chub as follows, and prompt your coding agent to use it:

npm install -g @aisuite/chub

GitHub: github.com/andrewyng/cont…

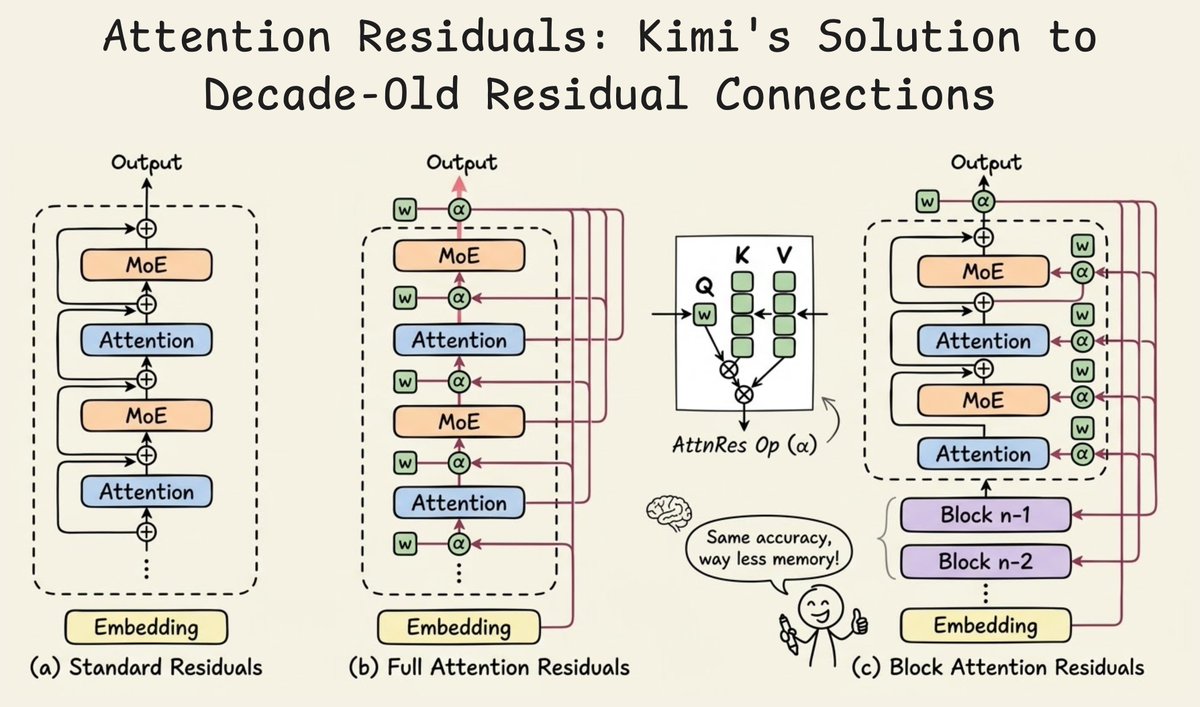

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers. 🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth. 🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale. 🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead. 🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains. 🔗Full report: github.com/MoonshotAI/Att…
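The contrast drawn above, fixed uniform accumulation versus learned, input-dependent attention over preceding layers, can be illustrated with a toy sketch. This is not the AttnRes implementation from the report. The per-layer `query` vector, the plain dot-product scoring, and the sum-based compression in the hypothetical `block_summaries` helper (a loose stand-in for the idea behind Block AttnRes) are all assumptions made for illustration. Vectors are plain Python lists so the sketch runs without dependencies.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def standard_residual_input(outputs):
    """Fixed, uniform accumulation: every earlier output enters with weight 1."""
    dim = len(outputs[0])
    return [sum(v[j] for v in outputs) for j in range(dim)]


def attention_residual_input(outputs, query):
    """Softmax-weighted mix of earlier outputs (illustrative, not the report's code).

    outputs: [v_0 (embedding), v_1, ..., v_{l-1}], one vector per earlier layer.
    query:   hypothetical learned vector for the current layer (an assumption).
    Scores depend on the outputs themselves, so the weights change with the input.
    """
    weights = softmax([dot(query, v) for v in outputs])
    dim = len(outputs[0])
    mixed = [sum(w * v[j] for w, v in zip(weights, outputs)) for j in range(dim)]
    return mixed, weights


def block_summaries(outputs, block_size):
    """Hypothetical block compression: sum each run of `block_size` consecutive outputs."""
    dim = len(outputs[0])
    summaries = []
    for start in range(0, len(outputs), block_size):
        block = outputs[start:start + block_size]
        summaries.append([sum(v[j] for v in block) for j in range(dim)])
    return summaries


if __name__ == "__main__":
    outputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    print("standard residual:", standard_residual_input(outputs))
    print("attention residual:", attention_residual_input(outputs, [10.0, 0.0]))
    # Block variant: attend over 2 block summaries instead of 3 per-layer outputs.
    print("block attention:", attention_residual_input(block_summaries(outputs, 2), [10.0, 0.0]))
```

Because the softmax weights sum to 1, the attention-based input is a convex combination of earlier outputs, so its magnitude can never exceed that of the largest earlier output, while the plain residual sum keeps growing as layers are added. Attending over block summaries rather than every layer cuts the number of scored entries from L to roughly L / block_size.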

If the GODFATHER were made today it would NOT be eligible for an Oscar unless he transitioned to the Godmother and made someone an offer they couldn’t HEAR. You could still leave the gun but you couldn’t take the cannoli unless the baker supports gay marriage. RIP Hollywood.