Alessandro

2.7K posts

@alew3

Tech & Sports Enthusiast // Chief AI Officer @ https://t.co/Y5xWTG5Ka3

Palo Alto, CA. Joined December 2007

1.3K Following, 760 Followers

@ernielm @felixrieseberg @ernielm it now appears on my mobile app as "Dispatch" under the burger menu

@alew3 @felixrieseberg I didn't get an answer, I am also in Max 200. I can't see it.

@ChanaMessinger @felixrieseberg Update your mobile app, go to the hamburger in the top left and click Dispatch

@felixrieseberg I have Max 20, how do I get this working? Already updated the desktop and mobile apps.

Your desktop has to be running. Like Cowork itself, we’re shipping an early version - you can expect more to come here within the next few days and weeks.

Rolling out now to Max subscribers, with Pro coming in the next few days. Try it and let me know what you think.

Download the mobile app and pair it with your desktop app: claude.com/download

Alessandro retweeted

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)

- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)
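
The keep-if-better loop described above can be sketched roughly as follows. This is a toy illustration only, not the code in the linked repo: `run_experiment`, `propose_change`, and `research_loop` are hypothetical names, the quadratic objective stands in for a real 5-minute training run, and a random perturbation stands in for the agent editing the training script.

```python
import random


def run_experiment(config):
    """Stand-in for one fixed-budget training run; returns a validation loss.

    Hypothetical toy objective, not the real training script.
    """
    lr, width = config["lr"], config["width"]
    return (lr - 0.003) ** 2 * 1e4 + (width - 512) ** 2 * 1e-6 + 1.0


def propose_change(config, rng):
    """Stand-in for the agent editing the training code: perturb one setting."""
    new = dict(config)
    key = rng.choice(sorted(new))
    new[key] = new[key] * rng.uniform(0.5, 1.5)
    return new


def research_loop(initial, n_runs, seed=0):
    """Run n_runs experiments, keeping a candidate only if it lowers the loss."""
    rng = random.Random(seed)
    best, best_loss = initial, run_experiment(initial)
    history = [best_loss]  # losses of the accepted "commits"
    for _ in range(n_runs):
        candidate = propose_change(best, rng)
        loss = run_experiment(candidate)
        if loss < best_loss:  # accept only strict improvements
            best, best_loss = candidate, loss
            history.append(loss)
    return best, best_loss, history


if __name__ == "__main__":
    best, best_loss, history = research_loop({"lr": 0.01, "width": 256}, n_runs=50)
    print(f"accepted {len(history) - 1} changes, final loss {best_loss:.4f}")
```

Each entry in `history` plays the role of one accepted git commit; rejected candidates are simply discarded.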

I did a 2 hour workshop on the Claude Agent SDK at AI engineer!

We are still so early to agents, I hope this is useful if you’re thinking of making one.

AI Engineer@aiDotEngineer

🆕 Claude Agent SDK [Full Workshop] youtube.com/watch?v=TqC1qO… For our first big drop of the year, excited to bring you @trq212's full 2 hour workshop covering all of @AnthropicAI's agentic SDK (formerly known as Claude Code SDK). By far the most popular workshop of AIE CODE! Now published online for free (sorry for AV/delay issues)... long story

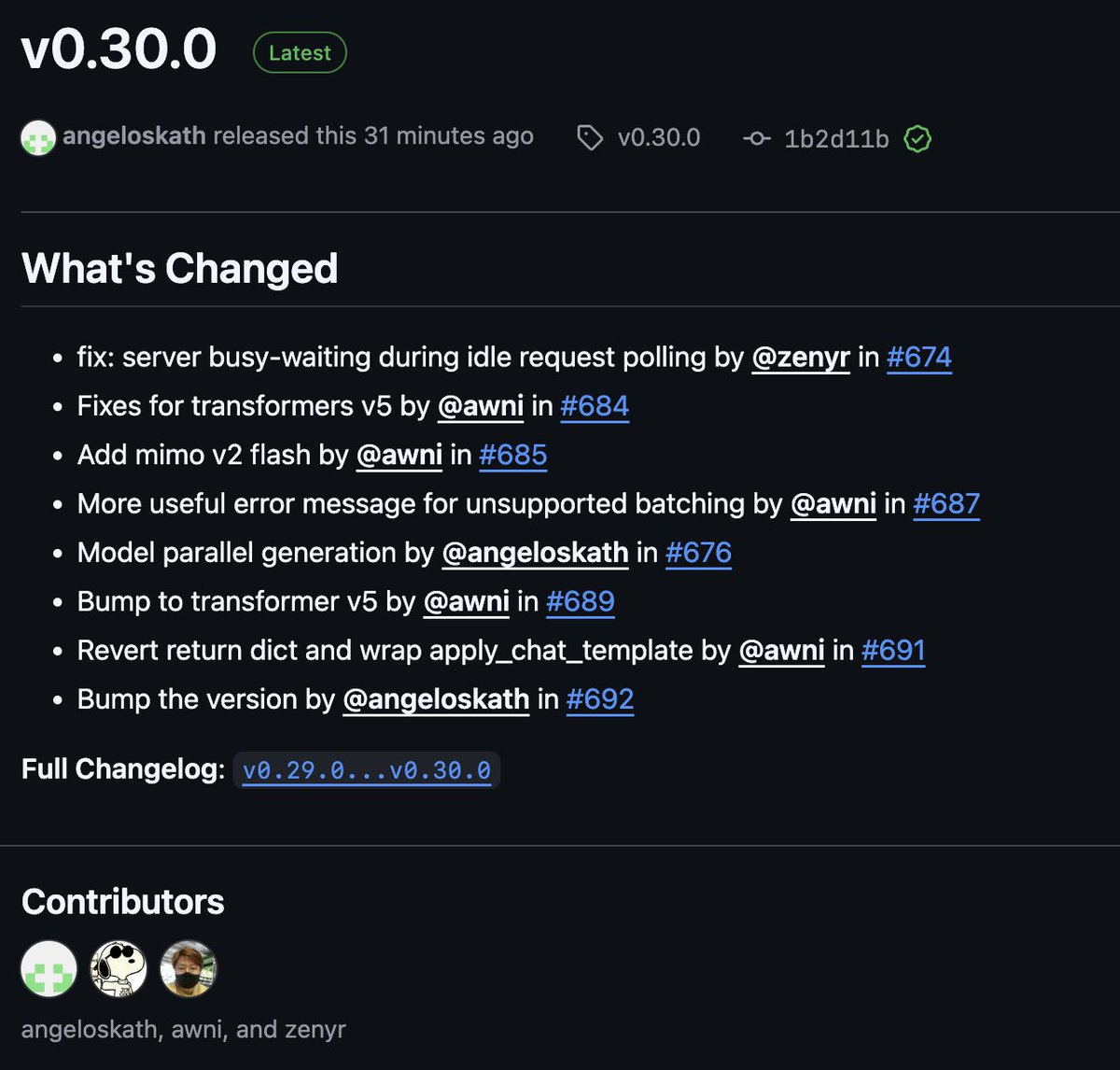

mlx-lm is becoming quite a powerful little inference framework!

The latest release adds tensor-parallel LLM inference for use with the new low-latency JACCL back-end in MLX (h/t @angeloskath).

Also updated to support Transformers V5!

Alessandro retweeted

"People who go all in on AI agents now are guaranteeing their obsolescence. If you outsource all your thinking to computers, you stop upskilling, learning, and becoming more competent. AI is great at helping you learn." @jeremyphoward @NVIDIAAI

youtube.com/watch?v=zDkHJD… 2/

@awnihannun Looking good! BTW, any reason MLX can't leverage the Neural Engine?

Alessandro retweeted

@seeedstudio @huggingface any promotional coupon for this amazing kit?

🚨 The #LeRobot Worldwide Hackathon by @huggingface kicks off June 14–15!

Catch the vibe with Seeed experience — hands-on builds, open-source collab, & creative #robotics dev.

🎥 Watch the recap: youtube.com/watch?v=xnwo7b…

Join online or at a local hub to build with #VLA, diffusion models, & more!

Bring your SO-ARM101 kit: seeedstudio.com/SO-ARM101-Low-…

This weekend — don’t miss it!

Alessandro retweeted

I’ve left @arcee_ai!

I really love what we have achieved together across research and product. From model fusion, offline distillation of Llama 405B all the way to building, leading and launching Arcee Orchestra from scratch within 4 months.

Already miss everyone, more than colleagues they are my friends ❤️

Nevertheless, I’m very excited to soon announce what’s next.

Meanwhile, I’m happy to share that I’ll be working full-time on MLX (mlx, mlx-lm, mlx-vlm, mlx-audio and more) to help build the best on-device R&D experience and products by bringing the latest OS models and features to Apple Silicon.

@scottsLockedIn @maximelabonne this is to prevent other big tech from using it.

@maximelabonne Apps rarely have more than 700M users; other than that, the rest are not hard constraints.

Llama 4's new license comes with several limitations:

- Companies with more than 700 million monthly active users must request a special license from Meta, which Meta can grant or deny at its sole discretion.

- You must prominently display "Built with Llama" on websites, interfaces, documentation, etc.

- Any AI model you create using Llama Materials must include "Llama" at the beginning of its name

- You must include the specific attribution notice in a "Notice" text file with any distribution

- Your use must comply with Meta's separate Acceptable Use Policy (referenced at llama.com/llama4/use-pol…)

- Limited license to use "Llama" name only for compliance with the branding requirements

Meta has just released their Llama 4 models. The "Llama 4 Scout" variant features an impressive 10 million token context window. llama.com/llama-download… #llama4 #llama

Alessandro retweeted

Excited to announce our first-ever “stealth” model... Quasar Alpha 🥷

It’s a prerelease of an upcoming long-context foundation model from one of the model labs:

- 1M token context length

- specifically optimized for coding, but general-purpose as well

- available for free

OpenRouter@OpenRouter

A stealth model has entered the chat... 🥷
