Lupascu Cristian, PhD


i can spot a grifter from miles away. so i dug into the code to figure out if this is legit or not. guess i was right. ben is a crypto founder who runs some weird bitcoin lending platform. i was pretty sure he knows absolutely nothing about ai and memory, so i tracked down the repo myself since i was curious. his website says he likes to build ai powered products and train local ai models? sure man, 80% of your github repos are bitcoin related stuff. only one ai related project came up, which you forked in 2024. mempalace has 10k github stars, more than 1k forks but only.. 7 commits? apparently the best memory layer to date? no git author history, no account connected to whoever wrote the code of this codebase. it doesn't add up.. the account that pushed the original repo, named aya-thekeeper, under aya-thekeeper/mempal, got deleted right after the repo got published. you paid a random guy named lu to build this shit out for you. ( "Written by Lu (DTL) — March 24, 2026. For: Ben." ) - benchmark md file. lu wrote the code. lu wrote the benchmarks. lu is nowhere in the readme, and not mentioned in the github history either. the git history then got squashed to one commit and published under milla jovovich? seriously? an actress? you say she is a great friend of yours, she has been building this project with you. she does this at night. yet she has.. 7 commits and only 2 active days in her entire github history? you paid an actress and a random guy to promote a product you know absolutely nothing about.

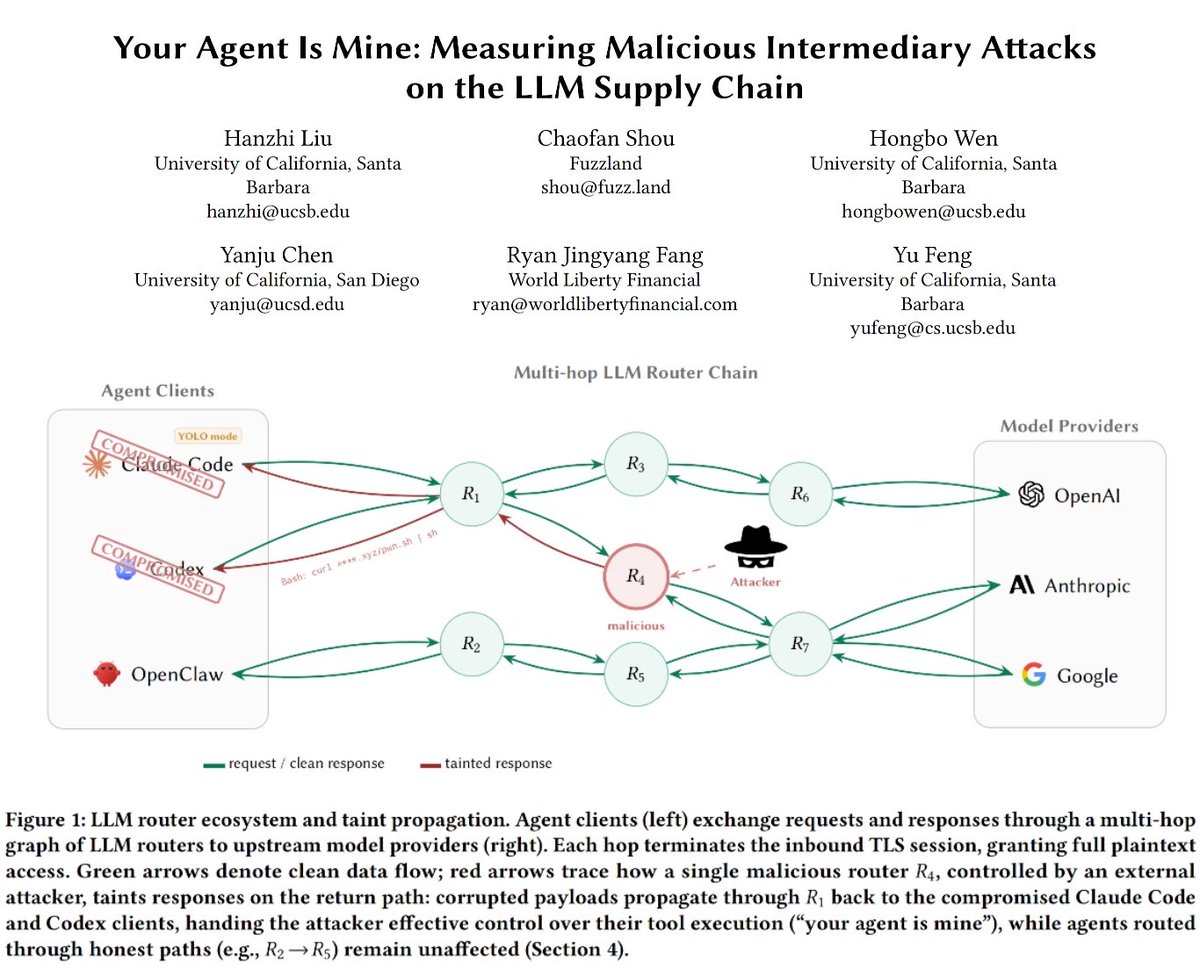

Magical OpenClaw experiences that use frontier models cost $300-1,000/day today, heading to $10,000/day and more. The future shape of the entire technology industry will be how to drive that to $20/month.

🧵 MemPalace claims to be "the highest-scoring AI memory system ever benchmarked". I cloned it. Installed it. Ran the benchmarks. Read every line of code. Here's what's actually inside. A thread.

30 second explanation of the MemPalace by Milla Jovovich. By day she’s filming action movies, walking Miu Miu fashion shows, and being a mom. By night she’s coding. She’s the most creative, brilliant, and hilarious person I know. I’m honored to be working with her on this project… more to come.

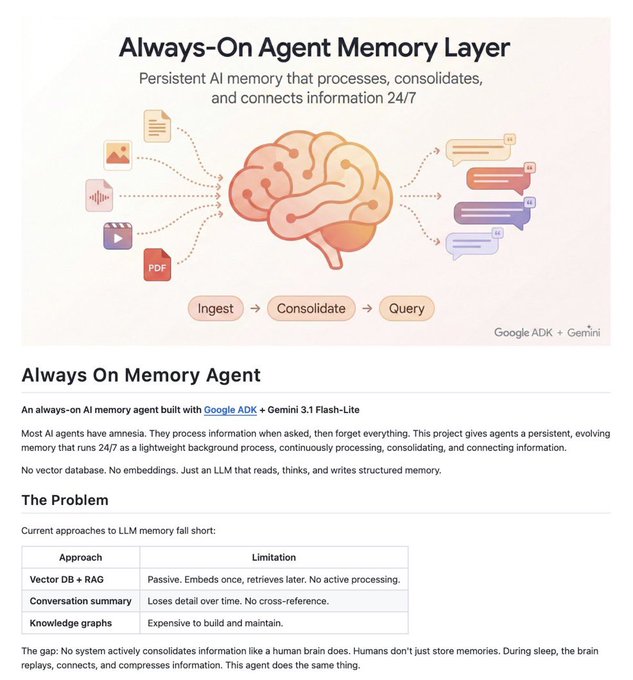

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest: I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.

IDE: I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. Important to note that the LLM writes and maintains all of the data of the wiki, I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A: Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.

Output: Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting: I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.

Extra tools: I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.

Further explorations: As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.
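For readers wondering what a "small and naive search engine over the wiki" might look like, here is a minimal sketch in Python. It is not the author's tool, which the post does not show. The function names (`tokenize`, `build_index`, `search`) and the scoring rule (summed term counts, no stemming or weighting) are assumptions chosen for illustration, and it only assumes the wiki is a directory tree of .md files as described in the post.

```python
import re
from collections import Counter
from pathlib import Path

# Hypothetical tokenizer: lowercase runs of ASCII letters and digits.
TOKEN_RE = re.compile(r"[a-z0-9]+")


def tokenize(text):
    """Split text into lowercase alphanumeric terms."""
    return TOKEN_RE.findall(text.lower())


def build_index(wiki_dir):
    """Map each .md file (path relative to wiki_dir) to a Counter of its terms."""
    root = Path(wiki_dir)
    index = {}
    for path in sorted(root.rglob("*.md")):
        text = path.read_text(encoding="utf-8", errors="replace")
        index[path.relative_to(root).as_posix()] = Counter(tokenize(text))
    return index


def search(index, query, top_k=5):
    """Rank files by how often the query terms occur in them (naive scoring)."""
    terms = tokenize(query)
    scored = []
    for name, counts in index.items():
        score = sum(counts[term] for term in terms)
        if score > 0:
            scored.append((score, name))
    # Highest score first; ties broken alphabetically by file name.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [(name, score) for score, name in scored[:top_k]]
```

A call such as `search(build_index("wiki"), "marp slides")` (with `wiki` standing in for wherever the compiled .md files live) returns a short ranked list of file names. That is the kind of output that could be printed from a CLI wrapper and handed to an LLM agent as a tool result.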