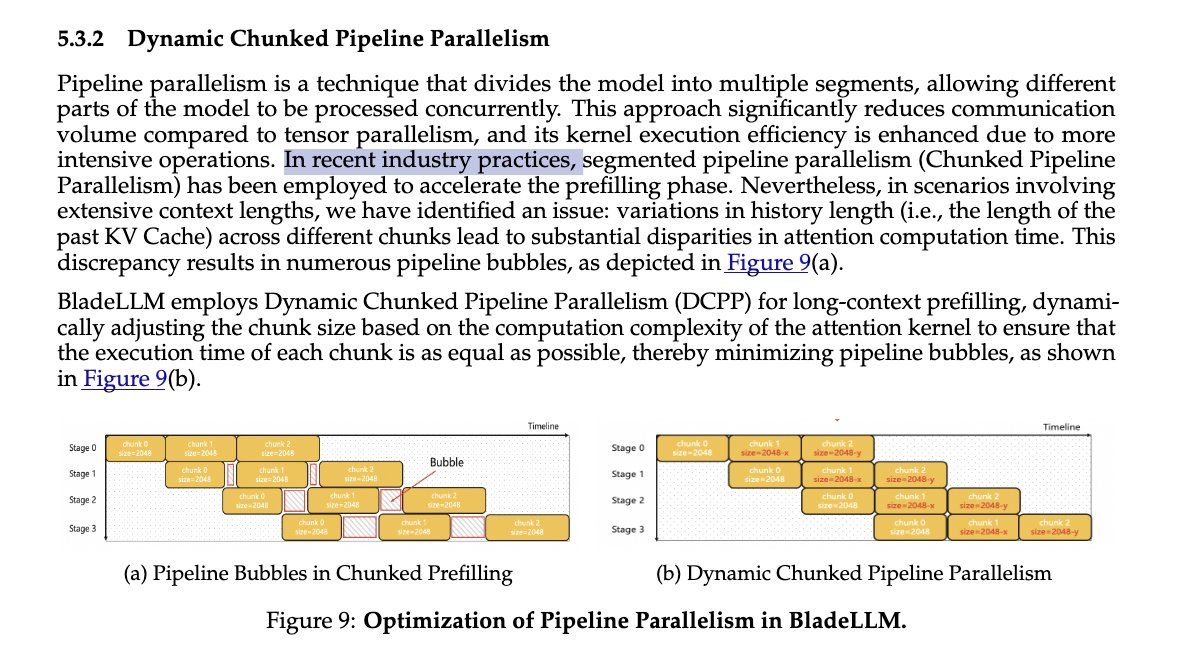

Chunked pipeline parallelism is arguably the most general and scalable systems technique for accelerating super-long-context inference. It remains underrated today, largely because there is still no strong, high-quality open-source implementation. The SGLang team recently fully optimized chunked pipeline parallelism in SGLang and published a detailed blog post explaining all the key details.
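Why chunking matters for pipeline parallelism can be seen with a toy timing model. If one long prompt is pushed through P pipeline stages as a single unit, only one stage is busy at a time, so latency does not improve. Splitting the prompt into C chunks lets stages work on different chunks concurrently. The sketch below is a minimal, idealized model and not SGLang's scheduler. The function name `chunked_pp_prefill_time` is hypothetical, and the model assumes equal-cost chunks. It ignores that later chunks cost more because attention runs over a longer prefix, and it ignores inter-stage communication.

```python
from typing import List


def chunked_pp_prefill_time(stage_costs: List[float], num_chunks: int) -> float:
    """Idealized makespan of prefilling ONE long prompt split into
    `num_chunks` equal chunks across len(stage_costs) pipeline stages.

    stage_costs[s] is the assumed time stage s needs for the WHOLE prompt;
    each chunk is assumed to cost stage_costs[s] / num_chunks. This ignores
    attention cost growing with prefix length and communication overhead.
    """
    num_stages = len(stage_costs)
    # finish[s] = finish time of the most recent chunk processed on stage s
    finish = [0.0] * num_stages
    for _ in range(num_chunks):
        ready = 0.0  # time this chunk's activations reach the current stage
        for s in range(num_stages):
            # A stage starts a chunk once its input has arrived AND it has
            # finished the previous chunk (which also provides that chunk's
            # KV cache, as causal attention requires).
            start = max(ready, finish[s])
            finish[s] = start + stage_costs[s] / num_chunks
            ready = finish[s]
    return finish[-1]


# Hypothetical example: 4 identical stages, each taking 1.0 time unit
# for the full prompt.
stages = [1.0] * 4
for c in (1, 4, 16):
    print(c, chunked_pp_prefill_time(stages, c))
# 1 4.0
# 4 1.75
# 16 1.1875
```

Under these assumptions the makespan for uniform stages is (C + P - 1) / C times one stage's full-prompt cost. It approaches a single stage's share of the work as C grows, which is how chunking turns pipeline parallelism into a latency reduction for a single long request.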