Jayashree Mohan

76 posts

@jayashree2912

Researcher | Microsoft Research India

🚨 Are LLM compression methods (𝘲𝘶𝘢𝘯𝘵𝘪𝘻𝘢𝘵𝘪𝘰𝘯, 𝘱𝘳𝘶𝘯𝘪𝘯𝘨, 𝘦𝘢𝘳𝘭𝘺 𝘦𝘹𝘪𝘵) too good to be true and are existing eval metrics sufficient? We've looked into it in our latest research at @MSFTResearch 🧵 (1/n) arxiv.org/abs/2407.09141

🚀 Introducing Metron: Redefining LLM Serving Benchmarks! 📊 Tired of misleading metrics for LLM performance? Our new paper introduces a holistic framework that captures what really matters - the user experience! 🧠💬 github.com/project-metron… #LLM #AI #Benchmark

One of @vllm_project's strengths is that it exposes the ability to trade off latency and throughput. However, higher qps regimes cause significant latency degradation. The underlying reason has to do with inference taking place in two stages: prefilling (processing the input context) and decoding (generating output tokens). Normally in vLLM, these stages don't happen at the same time and so an incoming request will trigger prefill computations that interrupt ongoing decoding (leading to a spike in latency). Chunked prefill breaks prefill computations into multiple "chunks" and batches them along with ongoing decoding computations to avoid interruptions. In a low qps regime, this makes no difference, but in the high qps regime with constant interrupts, this is a big deal. More details in this paper arxiv.org/pdf/2308.16369 by @agrawalamey12. Also in this RFC github.com/vllm-project/v….
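The chunked-prefill idea in the post above can be illustrated with a toy scheduler. This is a minimal sketch under stated assumptions, not vLLM's actual scheduler: the `Request` class, `schedule_chunked`, and the per-iteration `token_budget` are hypothetical names chosen for illustration. One simplification is that a request starts decoding in the iteration after its prefill finishes.

```python
from dataclasses import dataclass


@dataclass
class Request:
    # Hypothetical request record, not a vLLM type.
    name: str
    prompt_tokens: int   # prompt tokens still waiting to be prefilled
    output_tokens: int   # output tokens still waiting to be decoded


def schedule_chunked(requests, token_budget):
    """Build per-iteration batches of (name, phase, n_tokens).

    Each iteration first gives every decoding request one token, then
    spends whatever budget is left on a chunk of pending prefill work.
    """
    if token_budget < 1:
        raise ValueError("token_budget must be at least 1")

    iterations = []
    while any(r.prompt_tokens > 0 or r.output_tokens > 0 for r in requests):
        budget = token_budget
        batch = []

        # Ongoing decodes go first so a new prompt never stalls them.
        for r in requests:
            if r.prompt_tokens == 0 and r.output_tokens > 0 and budget > 0:
                r.output_tokens -= 1
                budget -= 1
                batch.append((r.name, "decode", 1))

        # Leftover budget is filled with a prefill chunk.
        for r in requests:
            if r.prompt_tokens > 0 and budget > 0:
                chunk = min(r.prompt_tokens, budget)
                r.prompt_tokens -= chunk
                budget -= chunk
                batch.append((r.name, "prefill", chunk))

        iterations.append(batch)
    return iterations


if __name__ == "__main__":
    # A is already decoding; B arrives with a 10-token prompt.
    reqs = [Request("A", 0, 3), Request("B", 10, 1)]
    for step, batch in enumerate(schedule_chunked(reqs, token_budget=5)):
        print(step, batch)
```

In this toy run, request A receives a decode token in every iteration while B's prompt is processed in chunks alongside it, which is the interruption-free behavior the post describes. Without chunking, B's entire prefill would occupy an iteration on its own and A would wait.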

Did you ever feel that @chatgpt is done generating your response and then suddenly a burst of tokens shows up? This happens when the serving system is prioritizing someone else’s request before generating your response. But why? Well, to reduce cost. 🧵

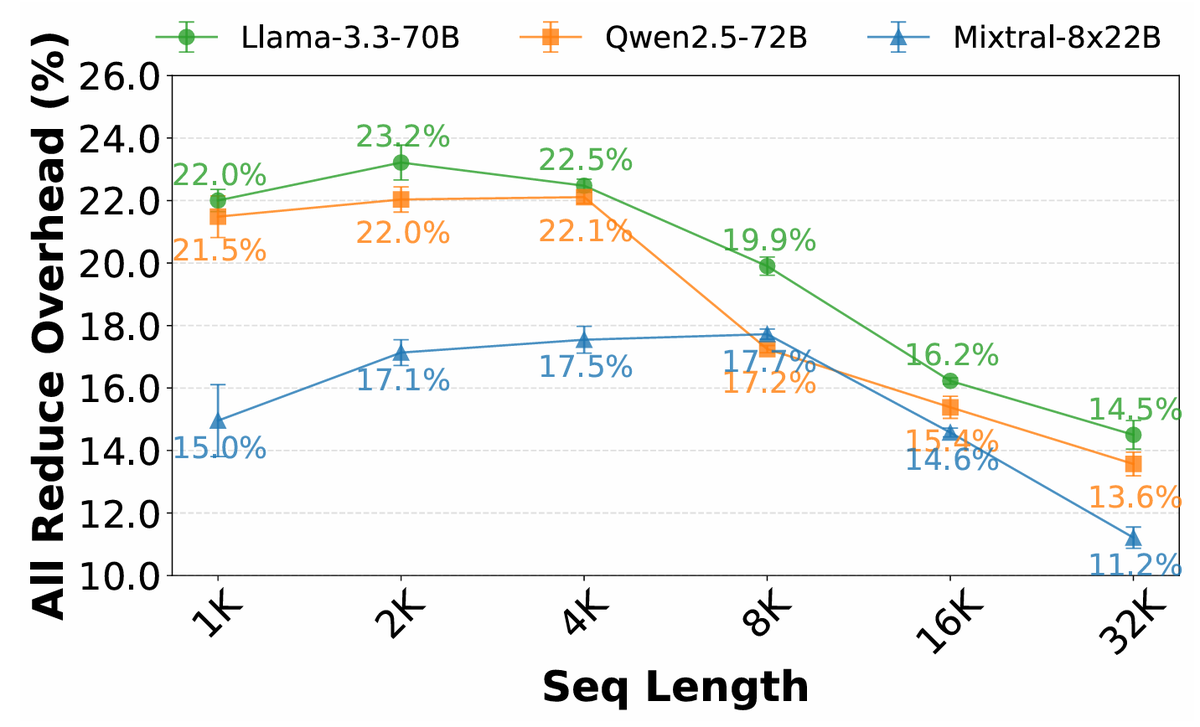

Ever wondered why @OpenAI charges 2x the price for output tokens compared to input tokens? Turns out that an output token can take up to 200x more compute time than an input token. Why? We explored this phenomenon during my internship at @MSFTResearch. 🧵

#CFP @EuroSys_conf 2023 will take place in Rome, #Italy. EuroSys adopts a new dual-deadline format. The first of the two deadlines (Spring & Fall) is 11-May-2022 (for abstract). CFP and other details can be found at 2023.eurosys.org/cfp.html#cfp