Amit

3.1K posts

@amitpr

I write code that works, sometimes @modal

Joined June 2022

267 Following, 2.6K Followers

I can totally see how people can get into timekeeping.

@geerlingguy was ahead of his time

husbant you bought a caesium-133 atomic-clock-on-a-PCIe-card

now we are homeress

Amit @amitpr

In efforts to "eat my cake and have it too" I am now reading about atomic clocks ...how did we get here

@charles_irl on a crusade to make all the theoreticians uncomfortable using some cool ass engineering

time to buy some more GPUs

Erik Bernhardsson @bernhardsson

Today we're announcing our Series C funding: $355M at a $4.65B valuation, led by some great investors @generalcatalyst and @Redpoint. We've had insane growth in the last year, but we're still very early. So proud of the team and what we have built so far!

Don't usually post my blog posts on here (maybe I should?)

amit.prasad.me/blog/sane-nix

There’s like 6-7 NYC infra founders I occasionally get drinks with and ~50% of them just got acquired:

- @graphite

- @astral_sh

- @StainlessAPI

Congrats!! 🍻

@badcryptobitch @LefterisJP August is a little insufferable but if you can get past the heat, go for it

@LefterisJP August.

Also depends where you are flying from and which airport you are going to.

The tickets I found are not for Tokyo!

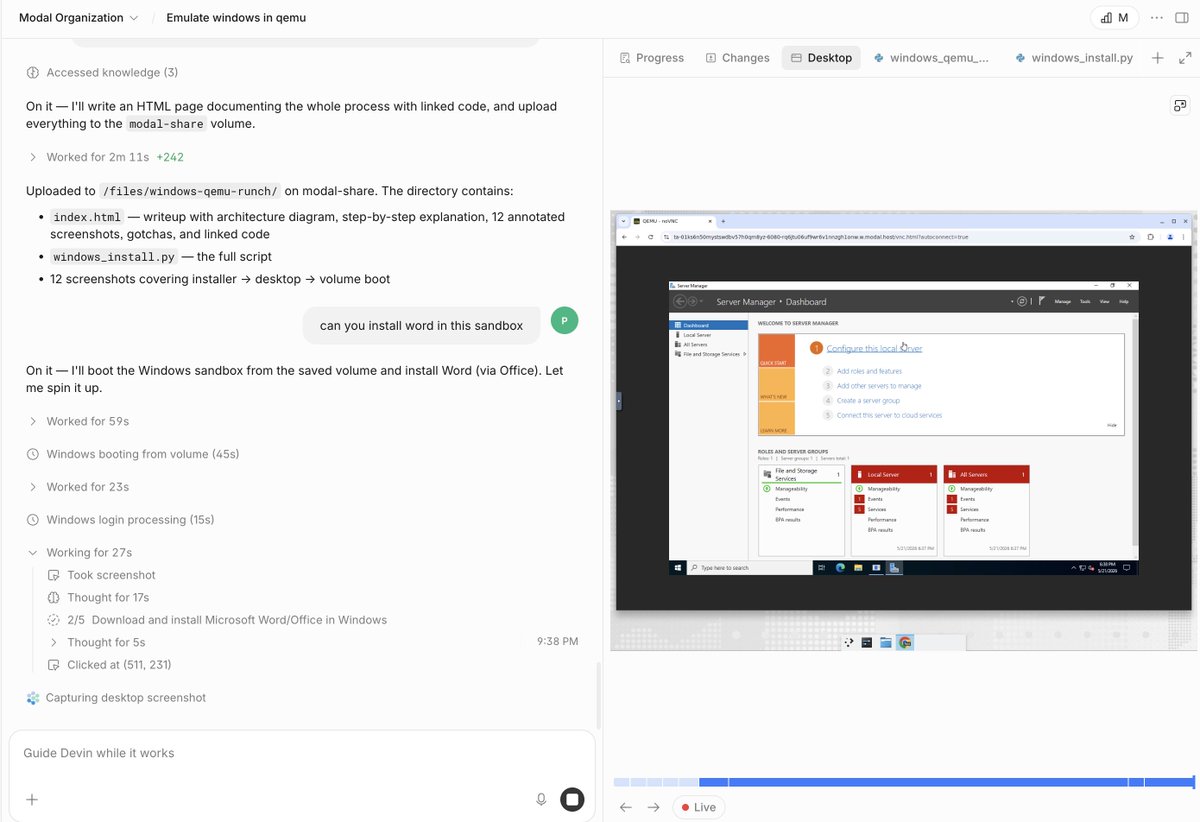

@jarredsumner @theo Is it possible to break that down into the dependencies implicated? I suspect uv’s unsafe count comes from well tested and standard ecosystem dependencies.

@theo `cargo geiger` counts unsafe usage in the compiled output, which is more accurate than grepping source code because it includes dependencies.

I don't think counting `unsafe` usage is a very useful metric, but note that the count for uv is technically higher than Bun.

@ibuildthecloud If you want to understand or have ideas, come work with us

modal.jobs

Thanks all for the suggestions. We need a lot of UUIDs on Turso because we can create so many databases.

The suggestion I liked the most was to reserve a block, so I'd like to reserve every UUID that starts with b00.

If you have a UUID that starts with those characters, please free it and send it to me.

Glauber Costa @glcst

I was planning to use UUIDs to represent the databases we have on the @tursodatabase Cloud. I got a bit worried that we would perhaps run out of UUIDs. I just double-checked and I think we'll be fine for the next year or so. Will use UUIDs for now, and if needed, rearchitect later.