Anders Fredriksson

6.1K posts

@andefred

Entrepreneur & investor, Author writing “Speed - Optimizing Startups for Speed of Iteration”. I like AI, and to debunk Tesla FUD. 500 & Alchemist alumni

I'm fully convinced that LLMs are not an actual net productivity boost (today). They remove the barrier to getting started, but they create increasingly complex software that does not appear to be maintainable. So far, in my situations, they appear to slow down long-term velocity.

Before we build ANY MVP, we use the MoSCoW method to define scope.

MoSCoW = Must have, Should have, Could have, Won't have.

We categorize every feature:
→ Must have = Core features for phase 1
→ Should have = Nice to have but not critical
→ Could have = Future roadmap
→ Won't have = Out of scope

This simple framework gives us a clear feature list, a lean scope, and zero ambiguity. It's the difference between shipping in 3 weeks vs getting stuck for 6 months.
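The categorization described in the post above could be sketched roughly as follows. This is a minimal illustration, not the poster's actual tooling: the `Priority` enum, the helper functions, and the sample backlog are all hypothetical names invented for the example.

```python
from collections import defaultdict
from enum import Enum


class Priority(Enum):
    """The four MoSCoW buckets, as named in the post."""
    MUST = "Must have"
    SHOULD = "Should have"
    COULD = "Could have"
    WONT = "Won't have"


def group_by_priority(features):
    """Group (name, Priority) pairs into a dict keyed by Priority.

    Every bucket is present in the result, even if it is empty.
    """
    grouped = defaultdict(list)
    for name, priority in features:
        grouped[priority].append(name)
    return {p: grouped[p] for p in Priority}


def phase_one_scope(features):
    """Return only the Must-have features, i.e. the phase 1 scope."""
    return group_by_priority(features)[Priority.MUST]


# Hypothetical backlog, made up purely for illustration.
backlog = [
    ("Email sign-up", Priority.MUST),
    ("Checkout", Priority.MUST),
    ("Dark mode", Priority.SHOULD),
    ("Referral program", Priority.COULD),
    ("Native mobile app", Priority.WONT),
]

for priority, names in group_by_priority(backlog).items():
    print(f"{priority.value}: {', '.join(names) or '-'}")
```

Calling `phase_one_scope(backlog)` returns just the Must-have items, which is the lean feature list the post describes for phase 1.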

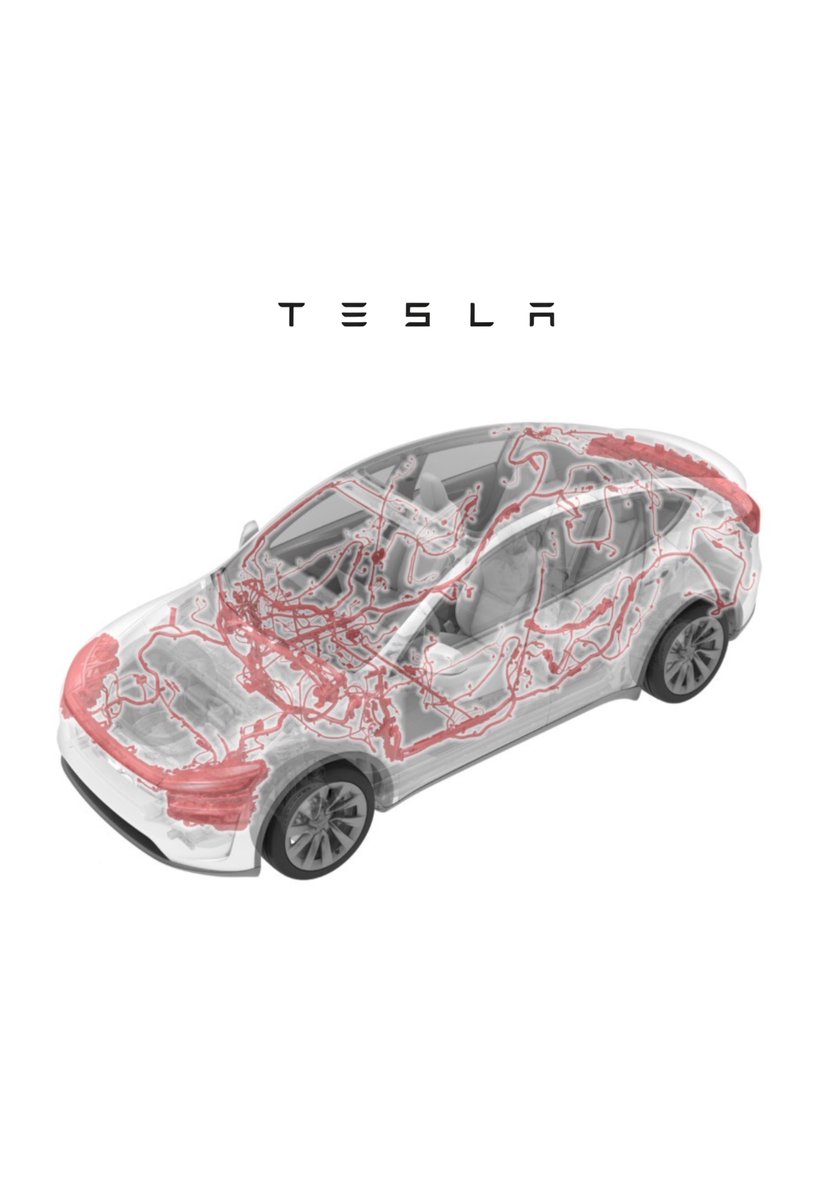

Ford CEO Jim Farley, in a new interview, says he realized Ford had been doing EVs all wrong after his team ripped apart a Tesla: “When we ripped apart a Tesla, I was just absolutely flabbergasted. The Mach-E's wiring harness was 70 pounds heavier and 1.6 kilometers longer. We didn't know what was going on in [Tesla engineers'] minds. But now we understand. They had no prejudice. We had prejudice. We'd gone to our supply-chain person and said, ‘Buy another wiring harness.’ [Tesla] said, ‘Let's design the vehicle for the lowest, smallest battery.’ Totally different approach.”

My biggest takeaways from my conversation with @andefred (Serial Startup Founder, Sweden's Most Prominent Internet Entrepreneur 2007) 👇 Full conversation: youtu.be/fr8jZbEKjrw