Andrew Northwall

117 posts

@anorthwall

Serial Entrepreneur | Building Privacy-First AI & Next-Gen Political Tech | The Obstacle Is The Way | Former COO $DJT

Sky-high connectivity is getting an upgrade ✈️📶 We’re teaming up with @Amazonleo to bring its advanced satellite technology on board, powering even more fast, personalized Delta Sync Wi-Fi and seatback experiences from gate to gate. Starting 2028. dl.aero/6018QIHEy

A recent decision complicates the picture on AI privilege waiver. In Warner v. Gilbarco (E.D. Mich., Feb. 10, 2026), defendants tried to force a pro se plaintiff to hand over everything related to her use of AI tools in the litigation. Judge Patti shut it down entirely.
The court's reasoning has two layers.
First, relevance. The court held the AI materials were "not relevant, or, even if marginally relevant, not proportional" under Rule 26(b)(1), noting that this is a civil case, not a criminal one (like Heppner), so different rules apply. Defendants had zero evidence that plaintiff uploaded anything confidential to an AI platform. The court told defendants, bluntly, that their "preoccupation with Plaintiff's use of AI needs to abate."
Second, work product. Defendants argued that sharing prompts and outputs with ChatGPT waived work-product protection. Judge Patti said no. Work-product waiver requires disclosure to an adversary, not just to any third party. And ChatGPT "and other generative AI programs are tools, not persons, even if they may have administrators somewhere in the background." The court agreed with plaintiff that accepting defendants' theory "would nullify work-product protection in nearly every modern drafting environment, a result no court has endorsed."
So does this contradict Judge Rakoff's Heppner ruling? Not necessarily. Attorney-client privilege and the work-product doctrine have fundamentally different waiver standards. Privilege can be destroyed by voluntary disclosure to any third party. Work-product protection is waived only by disclosure to an adversary, or in a way likely to reach one. AI platforms aren't adversaries. This means it's entirely possible to lose privilege over your AI conversations while retaining work-product protection over the same materials. Different doctrines, different triggers, different facts, different outcomes.
I don't think it's realistic to expect everyone to understand exactly which protection applies, how each can be waived, and how a specific AI platform's terms and privacy policies affect the analysis. People should move to defensible positions regardless of the circumstances, and the enterprise-agreement point I made after Heppner still stands. We're watching this area of law develop in real time, and the courts aren't going to agree with each other for a while. Buckle up. storage.courtlistener.com/recap/gov.usco…

Your AI conversations aren't privileged.
Yesterday, Judge Jed Rakoff ruled that 31 documents a defendant generated using an AI tool and later shared with his defense attorneys are not protected by attorney-client privilege or the work-product doctrine.
The logic is simple: an AI tool is not an attorney. It has no law license, owes no duty of loyalty, and its terms of service explicitly disclaim any attorney-client relationship. Sharing case details with an AI platform is legally no different from talking through your legal situation with a friend (which is not privileged).
You can't fix it after the fact, either. Sending unprivileged documents to your lawyer doesn't retroactively make them privileged. That's been settled law for years; it just hadn't been tested with AI until now.
And here's what really hurt the defendant: the privacy policy of the AI tool he used (Claude), as in effect at the time, expressly permitted disclosure of user prompts and outputs to governmental authorities. There was no reasonable expectation of confidentiality.
The core problem is the gap between how people experience AI and what's actually happening. The conversational interface feels private. It feels like talking to an advisor. But unless you negotiate an enterprise agreement that says otherwise, you're entering information into a third-party commercial platform that retains your data and reserves broad rights to disclose it.
Judge Rakoff also flagged an interesting wrinkle: the defendant reportedly fed information from his attorneys into the AI tool. If prosecutors try to use these documents at trial, defense counsel could become a fact witness, potentially forcing a mistrial. Winning on privilege doesn't make the evidentiary picture simple.
For anyone advising clients or managing legal risk, this is a wake-up call. AI tools are not a safe space for clients to process their counsel's advice or to regurgitate their legal strategy. Every prompt is a potential disclosure. Every output is a potentially discoverable document.
So what do we do about it?
First, attorneys need to be proactive. Advise clients explicitly that anything they put into an AI tool may be discoverable and is almost certainly not privileged. Put it in your engagement letters. Make it part of onboarding. Don't assume clients understand this, because most don't.
Second, if clients want to use AI to help process legal issues (and they clearly will, increasingly), let's give them a way to do it inside the privilege. Collaborative AI workspaces shared between attorney and client, where the AI interaction happens under counsel's direction and within the attorney-client relationship, can change the analysis entirely. I'm excited to be planning this kind of approach, and I think it's where the industry needs to head. storage.courtlistener.com/recap/gov.usco…

In case you’re late to my favorite Olympic performance-enhancing scandal, here are the details of penis inflate-gate.

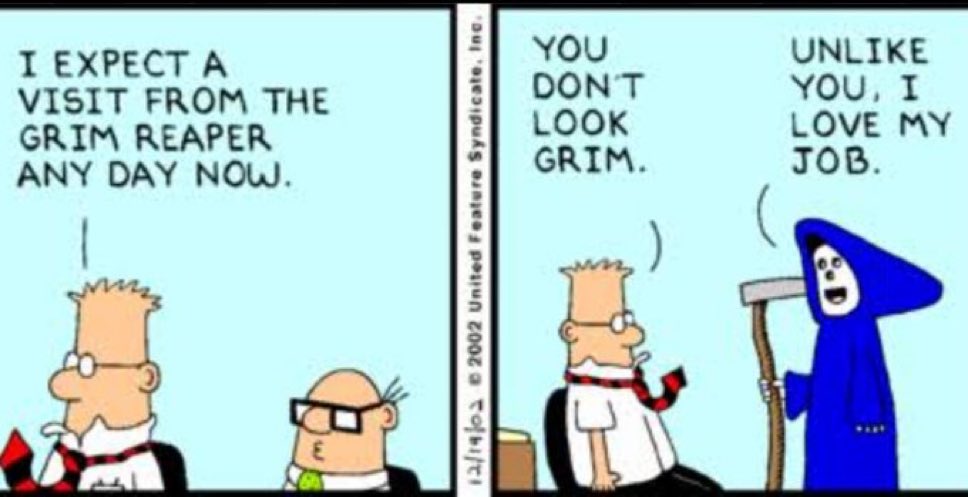

how it feels to navigate an enterprise saas codebase with claude

We are starting to test ads in ChatGPT free and Go (new $8/month option) tiers. Here are our principles. Most importantly, we will not accept money to influence the answer ChatGPT gives you, and we keep your conversations private from advertisers. It is clear to us that a lot of people want to use a lot of AI and don't want to pay, so we are hopeful a business model like this can work. (Some of the ads I like are on Instagram, where I've found stuff I like that I otherwise never would have. We will try to make ads ever more useful to users.)

The stuff of nightmares!!👀

ChatGPT gets prissy when you ask it about completely legal products if they're on OpenAI's naughty list, because OpenAI wants to control you. Strongwall.ai believes AI should serve you, not the other way around.