Adam Sadovsky

@asadovsky

CVP, AI at Microsoft AI (past: @GoogleDeepMind, @Google)

🚨Text Leaderboard Update: A new model provider, @MicrosoftAI, has broken into the Top 15 this week! 💠MAI-1-preview by @MicrosoftAI debuts at #13. Congrats to the Microsoft AI team! As the Text Arena is one of the most competitive races, breaking into the Top 15 is no small feat. 💪

Big update to our MathArena USAMO evaluation: Gemini 2.5 Pro, which was released *the same day* as our benchmark, is the first model to achieve a non-trivial amount of points (24.4%). The speed of progress is really mind-blowing.

Gemini 2.5 Pro is taking off 🚀🚀🚀 The team is sprinting, TPUs are running hot, and we want to get our most intelligent model into more people’s hands asap. Which is why we decided to roll out Gemini 2.5 Pro (experimental) to all Gemini users, beginning today. Try it at no cost at gemini.google.com

Wow we just ran Gemini 2.5 Pro on our evals and it got a new state of the art. Congrats to the Gemini team! Sharing preliminary results here and working on bringing it into Devin:

Think you know Gemini? 🤔 Think again. Meet Gemini 2.5: our most intelligent model 💡 The first release is Pro Experimental, which is state-of-the-art across many benchmarks - meaning it can handle complex problems and give more accurate responses. Try it now → goo.gle/4c2HKjf

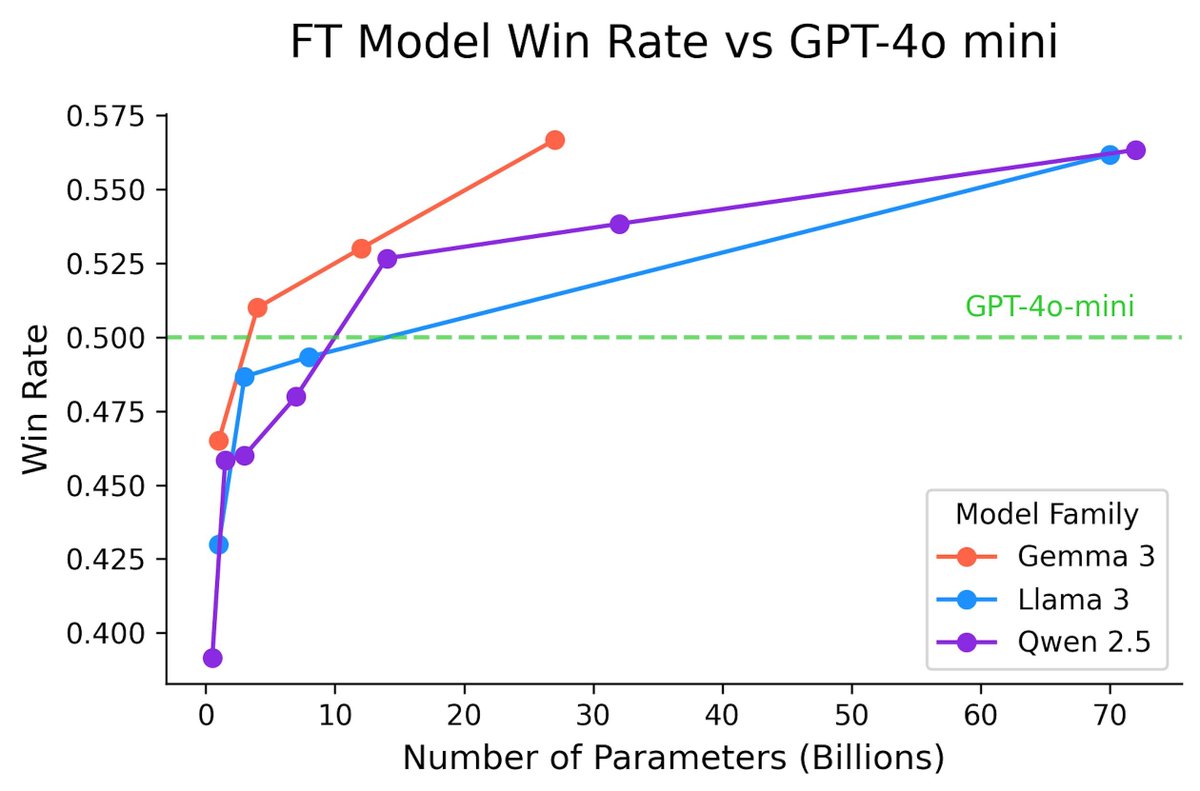

🎉 Congrats to @GoogleDeepMind on Gemma-3-27B, the newest and one of the strongest open models in Arena! 💠 Top 10 overall - beating out many proprietary models with only 27B parameters 💠 2nd best open model, behind only DeepSeek-R1 💠 128K context window Check out their blog to learn more about Gemma 3. We can't wait to see where this goes next! 🔥👏