Bryan Christmas

@bchristmas

Working on something new

Very interesting announcement from OpenAI this morning. We’re excited to make OpenAI's models available directly to customers on Bedrock in the coming weeks, alongside the upcoming Stateful Runtime Environment. With this, builders will have even more choice to pick the right model for the right job. More details at our AWS event in San Francisco tomorrow.

Yesterday I drove my @tesla 900 miles on FSD from Miami to Nashville and I realized it’s genuinely the better option. I fly that route 2 to 3 times a month. Flights are never under $400. Most times $600. Sometimes $800. Add Uber to and from both airports, or parking garage fees. Then factor in the delays, the cancellations, the security theater, the chaos, the guy next to you who hasn’t met deodorant yet. On the other hand: I pack healthy snacks, press one button, and the car just goes. I took calls. Replied to emails. FaceTimed my family. Ate without pulling over. Did everything I normally do on a travel day, except none of the stuff that makes travel days miserable. My biggest concern going in was range and charging. Here’s what actually happened: My bladder needed one extra stop the car didn’t even suggest. Most charging stops were under five minutes. Total cost for the whole trip was less than just the Uber to the airport. And this was the base Model Y. Now I’m thinking I should get something comfier and just make this the default.

When tech CEOs like Sam Altman and Dario Amodei acknowledge the risks of AI, they’re selling you a product. @parmy explains the dark art of AI marketing 🎥

maybe this is not yet clear, so let me state it plainly: as of right now Anthropic, and really a small number of individuals at Anthropic, has the capacity to directly attack and cause major damage to the United States Government, China, and generally global superpowers. government agencies like the NSA do not have internal models or defense capabilities that outclass frontier models. if they chose to do so, they could likely exfiltrate top secret information from government systems, gain control over critical infrastructure including military infrastructure, sabotage or modify communications between members of government at the highest level, and potentially carry on activities for some time without detection. the thing about having access to a huge number of zerodays your adversaries don't know about is it gives you a massive asymmetric advantage. they did not exploit this to gain power or destabilize the world order. they publicly released the information that they had these capabilities and worked to mitigate these flaws. you should be grateful american frontier labs have proven themselves remarkably trustworthy and concerned with the public good. but it's critical you understand we are in a new regime. private entities now have power that directly rivals and impacts the government's monopoly on influence and violence. and anthropic is certainly not the only one, there's little chance OpenAI's internal models are far behind. this trend will accelerate on virtually every dimension, not slow down. my prediction for how it plays out is the relatively imminent seizure and nationalization of labs by the US government, sometime over the next two years. it's very tough for me to see how they accept the existence of this kind of threat. but this adds a whole new class of governance issues, as then we've handed these extremely wide-reaching capabilities from private entities to public ones.

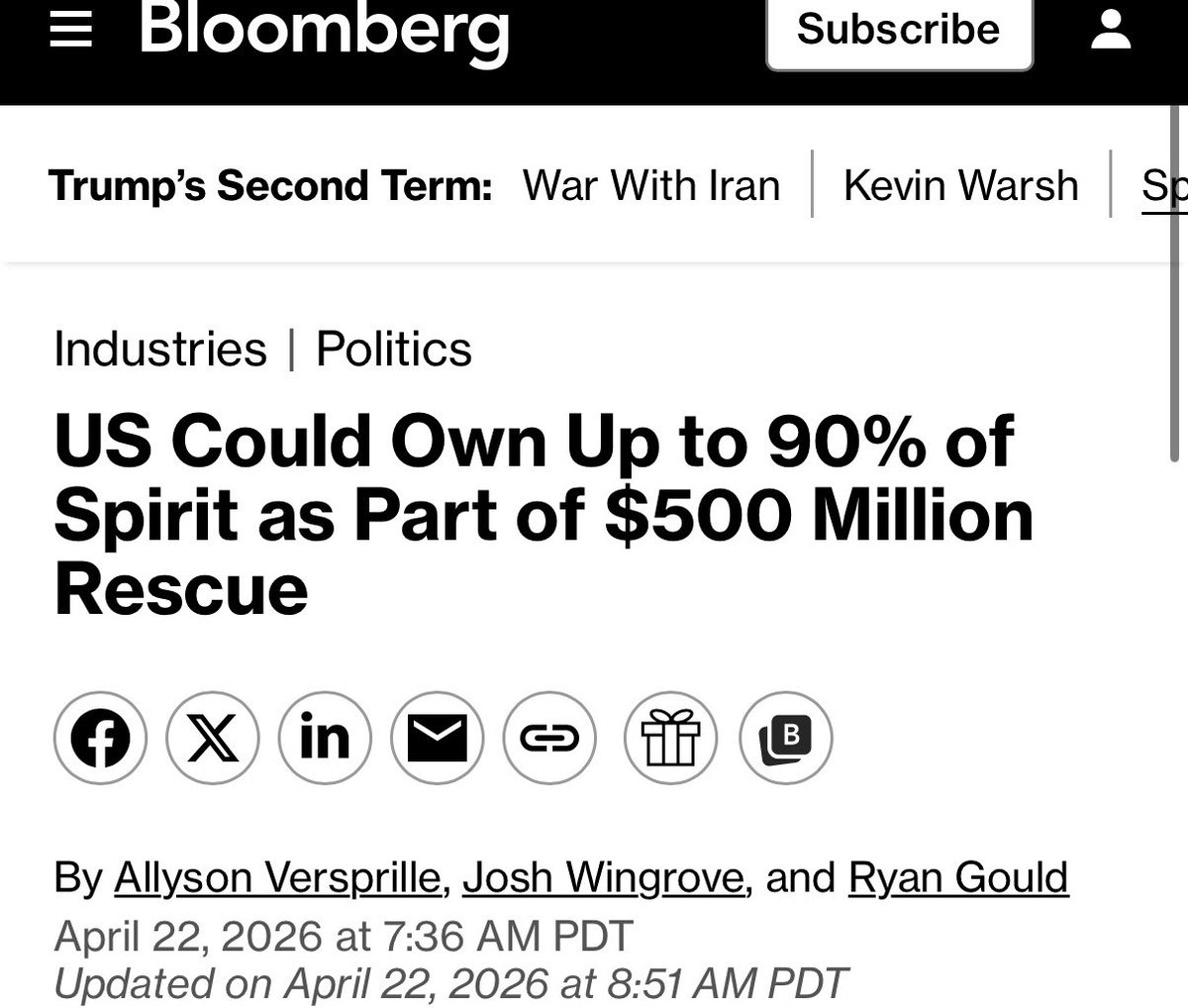

elon has never tweeted about the iran war. why is that?

🚔🚔Notable Arrest 🚨🚨 On April 1, 2026, SFPD officers arrested 4 suspects in a robbery and 4 auto burglaries.