perpetually crying

4.3K posts

@bearaddresser

privacy is a god given right | @nillionnetwork

New friend of Sentient unlocked ✅

There are rules inside the AI you use every day that nobody outside the company that built it even knows exist. @0xsachi and @sewoong79 get into why in our latest episode. Watch: youtu.be/VQ5vTbQWJzA?si… Listen: open.spotify.com/episode/692BOB…

The AGI race is a geopolitical sprint fronted by trillion-dollar institutions, burning $20B+ annually on compute and model training alone. Crypto AI, by contrast, operates below 0.1% of that scale. And yet the safest place where AGI can operate is on-chain. Fact or farce? 🧵

Meta Harnesses is Autoresearch on steroids.
Something I've been exploring recently is getting long-running agents to hill climb on a verifiable task so they continuously improve without my intervention. Karpathy's Autoresearch did this pretty well on specific tasks, but this weekend I tried Meta Harnesses, which moves one level of abstraction up.
What does Meta Harness do?
Autoresearch can be used in a harness like Claude Code / Codex to generate experiments to try, evaluate results, and continue looping. Meta Harness generates the harness itself, one that optimizes on a task or a set of tasks. Here, we define a harness as "a single-file Python program that modifies task-specific prompting, retrieval, memory, and orchestration logic". The idea is that LLMs are very powerful today, but to harness [pun intended] their power, you need to give them the right prompts and context. Meta Harnesses automates coming up with the right prompts and the right way to retrieve context to solve a problem.
Where did this idea come from?
This comes from a paper written last week by Stanford and the author of DSPy. The paper shows fantastic performance on 3 tasks: text classification, math reasoning (IMO-level problems) and coding (Terminal Bench 2.0), far outperforming traditional harnesses. The discovered harnesses are interesting: the math harness, for example, splits up the logic into different categories (Combinatorics, Geometry, Number Theory, Algebra) and prompts and looks at the context differently for each. The coding harness, amongst other things, pre-processes the tools available in the environment to save exploratory turns.
When should you use and not use it?
Meta Harnesses seem pretty useful for tackling a specific but wide set of problems where the result is verifiable. In contrast, when I tried it on a single narrow task like Chess, it arbitrarily divided the problem into separate tasks (opening, mid game, end game) and created a different approach for each. This "works" but isn't really clean, because we believe there should be one approach that does all three. It does far better on things like examinations (JEE, Gaokao), where it splits problems into categories and tackles each category with a different strategy.
This paper covers a pretty light version of what a harness means. In the future, we can split up tasks into harnesses that have access to specific kinds of data, specific toolchains and various models to get even better results.
Overall, a pretty cool applied AI approach to hill climb a verifiable task in a specific domain with variety within the problem space.
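To make the "hill climb on a verifiable task" loop concrete, here is a toy sketch in Python. It is not the paper's code or its API. Everything in it is a hypothetical stand-in: a "harness" is reduced to a dict mapping a problem category to a strategy name, `propose` is a random mutation standing in for the LLM that would write a new harness, and `evaluate` is a made-up scorer standing in for a real verifiable benchmark.

```python
import random
from typing import Dict, List, Tuple

# Hypothetical stand-in: a "harness" is just category -> strategy name.
Harness = Dict[str, str]

CATEGORIES = ["algebra", "geometry", "number_theory", "combinatorics"]
STRATEGIES = ["direct", "case_split", "work_backwards"]

# Made-up ground truth so the toy task is verifiable; a real setup would
# score a harness by running it on benchmark problems instead.
HIDDEN_BEST: Harness = {
    "algebra": "direct",
    "geometry": "case_split",
    "number_theory": "work_backwards",
    "combinatorics": "case_split",
}


def evaluate(harness: Harness) -> float:
    """Verifiable score: fraction of categories with the best strategy."""
    hits = sum(harness[c] == HIDDEN_BEST[c] for c in CATEGORIES)
    return hits / len(CATEGORIES)


def propose(harness: Harness, rng: random.Random) -> Harness:
    """Change one category's strategy (stands in for an LLM rewrite)."""
    child = dict(harness)
    child[rng.choice(CATEGORIES)] = rng.choice(STRATEGIES)
    return child


def hill_climb(steps: int, seed: int = 0) -> Tuple[Harness, float, List[float]]:
    """Keep a candidate harness only if it strictly improves the score."""
    rng = random.Random(seed)
    best: Harness = {c: "direct" for c in CATEGORIES}
    best_score = evaluate(best)
    history = [best_score]
    for _ in range(steps):
        candidate = propose(best, rng)
        score = evaluate(candidate)
        if score > best_score:
            best, best_score = candidate, score
        history.append(best_score)
    return best, best_score, history


if __name__ == "__main__":
    harness, score, history = hill_climb(steps=50)
    print(harness, score)
```

The part that carries over to the real thing is the shape of the loop: propose, score against something checkable, keep only strict improvements, repeat with no human in the loop. The per-category dict mirrors the way the discovered math harness treats each category differently.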

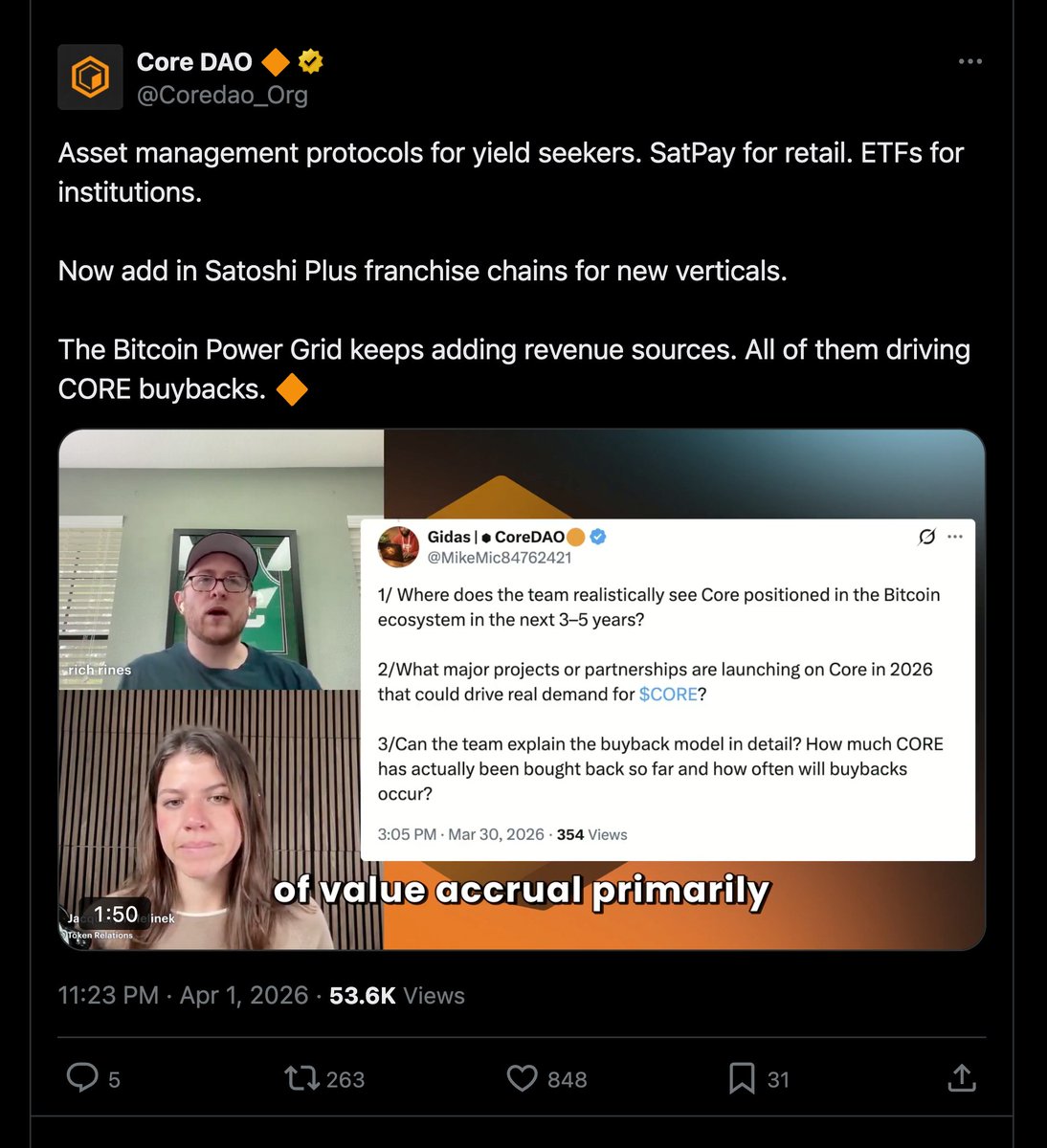

DROPS E35: @Coredao_org - Bitcoin yield without giving up your Bitcoin
@richrines is one of the initial contributors to Core DAO, the leading Bitcoin scaling solution. He's also a long-time Zcash holder and early backer of @_zprotocol, a new privacy chain built on Core's Satoshi Plus consensus. We talk Bitcoin yield, financial privacy, AI surveillance, and why the next big move in crypto might not be where most people are looking.
We talk about:
- How Core DAO lets you earn yield on Bitcoin by time-locking it - without ever giving up custody
- Why borrowing against Bitcoin makes sense now
- OG Bitcoiners rotating to Zcash - what "transition" actually means and whether it's bad for Bitcoin
- Z Protocol as the DeFi layer for private money
- Why AI has made financial surveillance trivial - and why that accelerates privacy adoption
- How Agents are leaving full financial fingerprints - and why privacy needs to be default on at the chain level
And much more...
Timestamps:
0:00 - Introduction
2:05 - What does Rich Rines do?
3:00 - Financial Freedom
4:09 - Journey from Bitcoin to Zcash
6:40 - Zcash Philosophy
8:38 - Transition to Zcash
11:20 - Who is Rich Rines?
11:46 - Bitcoin as Pristine Collateral
14:28 - Criticisms of Borrowing Strategy
16:52 - Explaining CORE
18:58 - Bitcoin Yield Story
20:29 - Misconception regarding CORE
22:08 - Time Lock
23:34 - Risk of using CORE
24:37 - Strategies used by CORE
26:42 - What Bitcoin Holders Want?
28:46 - Bitcoin Yield
30:10 - CORE Alpha
32:44 - SatPay
34:19 - Power Grid Thesis
35:37 - Satoshi Plus
37:07 - What is Z?
38:12 - Benefits of long-term Zcash Holder
40:01 - Vertical Integration
43:12 - Privacy for Agents
44:41 - Faux Privacy
46:14 - Privacy vs Government
49:01 - Zcash’s Future
50:01 - Conclusion

Find out what went down with @ethermage when I visited the newly launched Eastworlds Robotics Lab by @virtuals_io. The @Base Batches 003 finalists for the Robotics Track will be doing a month-long residency at Eastworlds Robotics Lab before their demo day at @ns on May 7th. Don't miss the opportunity to catch live robots in action!