Ben Gras

14.4K posts

@bjg

Security researcher at Intel. Proud dad & husband. Loves hacking & building. PhD in systems security from @vu5ec. https://t.co/fm0WTj0bkU

52.3191,4.8724 Joined July 2007

1.3K Following 2K Followers

@NicoInberg @erikmauritz I certainly still remember WOL and Nina Brink's little champagne dance. I'm reading the Wikipedia article on the WOL IPO right now and, perhaps through a modern lens, I find that whether NB was rightly vilified afterwards is not such a settled matter. What do you think?

Remember this one?

Today, 26 years ago, World Online went public. @erikmauritz sees an analogy with what lies ahead for OpenAI. Or maybe not?

Read more here: deaandeelhouder.nl/columns/wordt-…

Ben Gras retweeted

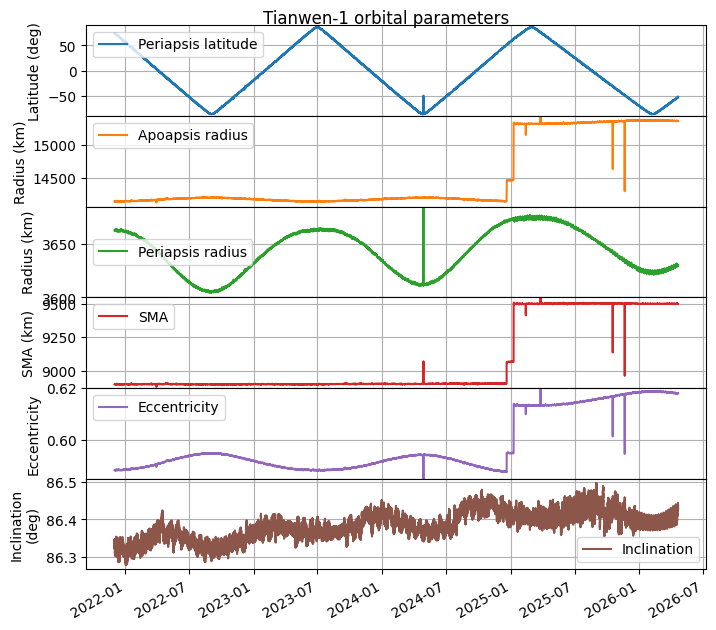

Following up on yesterday's announcement by @amsatdl about the lack of signals received from Tianwen-1 since 2025-12-23, I have published a short post that contains the orbital state based on telemetry received up to 2025-12-22.

Ben Gras retweeted

The ‘number station’ sending mystery messages to Iran ft.com/content/86c4a4… via @ft

Ben Gras retweeted

If you ever feel like you're late to the game, consider that in the 1890s many scientists thought physics as a field was completely solved (quote below is from Albert Michelson in 1894).

On the front of intelligence science, it feels more like the 1870s. For the first time we have something that is starting to really work (however primitive it may be), which we can use as a springboard for the next few decades of discoveries.

Ben Gras retweeted

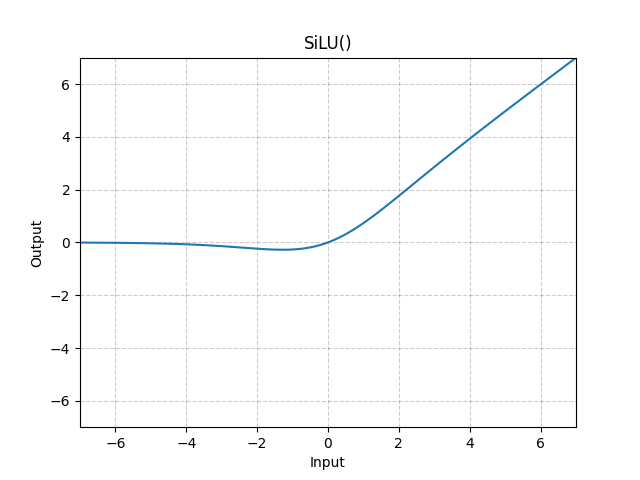

I always lost performance when I tried to use silu/gelu activations in my RL value networks, and I finally understand why.

If the pre-activation values are small, the smooth curve through zero is basically a linear activation, destroying the representation power of the network. You need a batch/layer/rms norm on the preactivations to put them in the range the smooth activations are designed for.

Internal norms generally hurt performance on our RL tasks, but combining them with a smooth activation at least works basically as well as a raw relu (but slower). So, not actually a win, but the lightbulb of understanding was good!
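The point about small pre-activations can be checked numerically. The snippet below is a minimal sketch, not the poster's setup: it uses plain Python, a hypothetical `nonlinearity` score (relative deviation of SiLU from its tangent line y = x/2 at zero), and a bare RMS normalization without learned scale. The input values are made up for illustration.

```python
import math

def silu(x):
    # SiLU / swish: x * sigmoid(x)
    return x / (1.0 + math.exp(-x))

def rms_norm(values, eps=1e-8):
    # Rescale so the root-mean-square of the values is about 1 (no learned gain).
    rms = math.sqrt(sum(v * v for v in values) / len(values) + eps)
    return [v / rms for v in values]

def nonlinearity(values):
    # Hypothetical score: how far silu(x) is from its tangent line x / 2,
    # relative to the size of the silu outputs. Near 0 means "almost linear".
    num = sum((silu(v) - 0.5 * v) ** 2 for v in values)
    den = sum(silu(v) ** 2 for v in values)
    return math.sqrt(num / den)

# Illustrative small pre-activations in [-0.1, 0.1].
small = [0.01 * k for k in range(-10, 11) if k != 0]

raw_score = nonlinearity(small)               # activation acts almost linearly
normed_score = nonlinearity(rms_norm(small))  # curvature becomes visible

print(f"raw: {raw_score:.3f}  normalized: {normed_score:.3f}")
```

On the raw inputs the score stays close to zero, which matches the "basically a linear activation" observation; after normalization the same values land where the curve bends, so the score rises sharply.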

Ben Gras retweeted

@MuhammadAdilIn1 @PrismNdss26 @akulgoyal00 TL;DR -- @PrismNdss26 is basically a gift-wrapped starter kit for conducting effective systems research on real-world security operations, an area that frankly needs more attention and talent. Join us!

@NicoInberg I'm glad this response is the first thing I've seen of it, instead of the usual pearl clutching. Nice one, Femke!

Ben Gras retweeted

Just got this link on my discord - kickstarter.com/projects/bitma… - passing it along because this book looks fun!

@JoshDWalrath The plateauing at 90% is a bit telling - 10% of us are uggos.

Not you though buddy.

I know what I look and sound like, so I'm that exception...

Alps @alpaysh

if you have 300+ followers on X dot com, there's a high chance that a secret mutual has a crush on you statistically

Ben Gras retweeted

If you are a journalist or activist and you fear the security services, wherever in the world: Lockdown Mode ('isolatiemodus' for iPhone) is your friend

404media.co/fbi-couldnt-ge…

Ben Gras retweeted

Marty at Id did ask for a video from me, but there was a miscommunication about when he needed it, so I didn’t get it done by the actual anniversary…

id Software @idSoftware

35 years of shareware, slipgates, and slaying. Thank you to everyone who’s stepped into our worlds.

Ben Gras retweeted

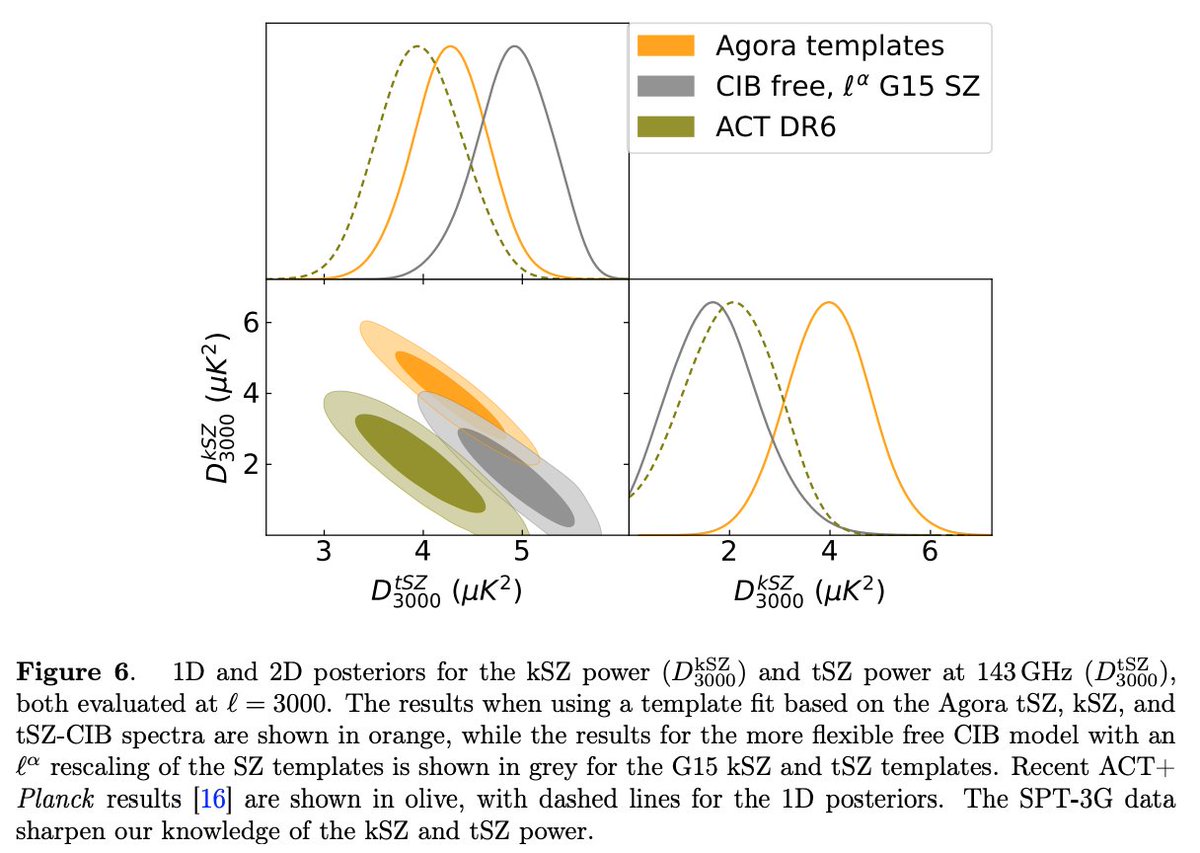

New SPT-3G constraints on CMB secondary anisotropies, including the thermal and kinematic SZ effect! "At ℓ=3000, we find thermal SZ power at 143 GHz of D^tSZ = 4.91 ± 0.37 μK² and kinematic SZ power of D^kSZ = 1.75 ± 0.86 μK²" @NSF #southpole #Antarctica arxiv.org/abs/2601.20551

Ben Gras retweeted

A conventional narrative you might come across is that AI is too far along for a new, research-focused startup to outcompete and outexecute the incumbents of AI. This is exactly the sentiment I listened to often when OpenAI started ("how could the few of you possibly compete with Google?") and 1) it was very wrong, and then 2) it was very wrong again with a whole another round of startups who are now challenging OpenAI in turn, and imo it still continues to be wrong today. Scaling and locally improving what works will continue to create incredible advances, but with so much progress unlocked so quickly, with so much dust thrown up in the air in the process, and with still a large gap between frontier LLMs and the example proof of the magic of a mind running on 20 watts, the probability of research breakthroughs that yield closer to 10X improvements (instead of 10%) imo still feels very high - plenty high to continue to bet on and look for.

The tricky part ofc is creating the conditions where such breakthroughs may be discovered. I think such an environment comes together rarely, but @bfspector & @amspector100 are brilliant, with (rare) full-stack understanding of LLMs top (math/algorithms) to bottom (megakernels/related), they have a great eye for talent and I think will be able to build something very special. Congrats on the launch and I look forward to what you come up with!

Flapping Airplanes @flappyairplanes

Announcing Flapping Airplanes! We’ve raised $180M from GV, Sequoia, and Index to assemble a new guard in AI: one that imagines a world where models can think at human level without ingesting half the internet.
