Pinned Tweet

Looking to discuss cybersecurity or AI with an expert?

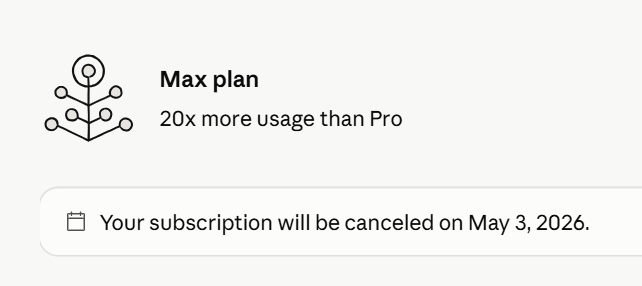

I've blocked off a few slots per month where I'm doing 30 minutes for $30.

Bring your topic or issue and we'll dive right in. If there's a bit to read beforehand, send it over and I'll make an effort to go through it.

Need an NDA? There's an optional one in the scheduling process.

To book, click on my profile and follow the Calendly link (circled in red on the profile photo in this post).
