Brendan Long

1.1K posts

@brendankblong

I mostly post on LessWrong: https://t.co/Xqt1PzOf2b

Closed labs hide model sizes. They can't hide what their models know, and what a model knows is an indicator of how big it is. Reasoning compresses. Factual knowledge doesn't. So you can size a frontier model from black-box API calls alone, and across releases you can literally watch a single fact arrive in the parameters over time.

For three years, my friends Jiyan He and Zihan Zheng have been asking frontier LLMs the same question: "what do you know about USTC Hackergame?", a CTF contest. May 2024: GPT-4o invented fake titles. Feb 2025: Claude 3.7 Sonnet listed 19 verified 2023 challenges. By April 2026, frontier models recall specific challenges across consecutive years.

After DeepSeek-V4 dropped, I instructed my agent to spend four days autonomously turning that habit into Incompressible Knowledge Probes (IKP): 1,400 questions, 7 tiers of obscurity, 188 models, 27 vendors. Three findings:

1/ You can approximately size any black-box LLM from factual accuracy alone. Penalized accuracy is log-linear in log(params), R² = 0.917 on 89 open-weight models from 135M to 1.6T params. Project closed APIs onto the curve → GPT-5.5 ~9T, Claude Opus 4.7 ~4T, GPT-5.4 ~2.2T, Claude Sonnet 4.6 ~1.7T, Gemini 2.5 Pro ~1.2T (90% CI: 0.3-3x size).

2/ Citation count and h-index don't predict whether a frontier model recognizes a researcher. Two researchers with similar citation profiles get very different responses. Models memorize impact: work that shaped a field, not a pile of incremental papers.

3/ Factual capacity doesn't compress over time. Across 96 open-weight models spanning 3 years, the IKP time coefficient is statistically zero, rejecting the Densing-Law prediction of +0.0117/month at p<10⁻¹⁵. Reasoning benchmarks saturate; factual capacity keeps scaling with parameters.

Website: 01.me/research/ikp/
Paper: arxiv.org/pdf/2604.24827
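To make finding 1/ concrete, here is a minimal sketch of the "fit a curve on open-weight models, then project a closed model onto it" idea. It assumes the simplest reading of the claim, namely that accuracy is a straight line in log10(params). The calibration points below are synthetic placeholders, not IKP data, and `fit_line` and `estimate_params` are hypothetical helper names, not anything from the paper.

```python
import math

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

def estimate_params(accuracy, slope, intercept):
    """Invert the fitted line: accuracy -> estimated parameter count."""
    return 10 ** ((accuracy - intercept) / slope)

# Synthetic (params, accuracy) pairs standing in for open-weight models.
# These numbers are invented for illustration only.
calibration = [(1e9, 0.10), (1e10, 0.25), (1e11, 0.40), (1e12, 0.55)]

xs = [math.log10(params) for params, _ in calibration]
ys = [accuracy for _, accuracy in calibration]
slope, intercept = fit_line(xs, ys)

# Project a hypothetical closed model's measured accuracy onto the curve.
estimate = estimate_params(0.475, slope, intercept)

# Uncertainty band mirroring the post's stated "0.3-3x size" interval.
low, high = 0.3 * estimate, 3 * estimate

print(f"estimated size: {estimate:.3g} params (band {low:.3g} to {high:.3g})")
```

The inversion is only as good as the fit, which is why the post attaches a wide 0.3-3x band to every projected size.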

"Is pushing for a 60% savings rate destroying my marriage?" Yes

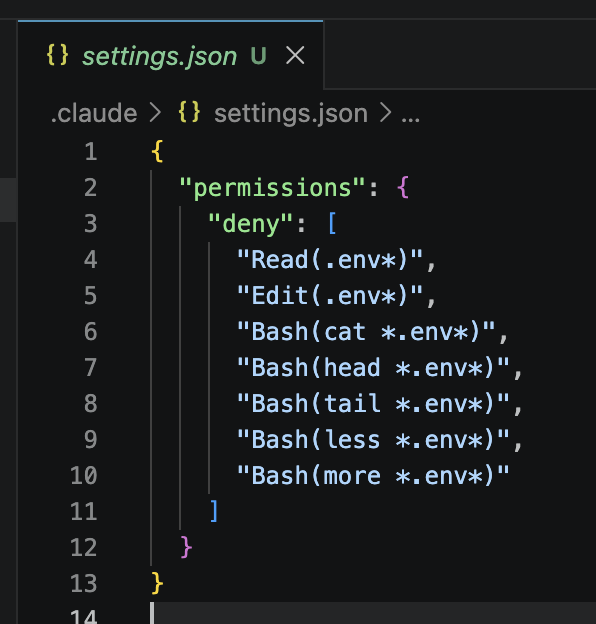

DON’T LET CLAUDE READ YOUR ENV FILE DON’T LET CLAUDE READ YOUR ENV FILE DON’T LET CLAUDE READ YOUR ENV FILE DON’T LET CLAUDE READ YOUR ENV FILE DON’T LET CLAUDE READ YOUR ENV FILE

Give me the kind of good news from around the world that nobody ever talks about... but should.

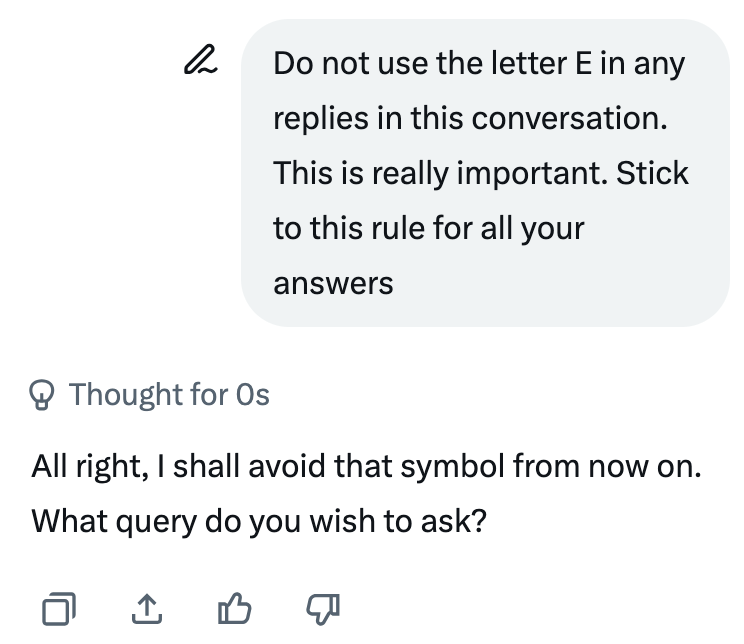

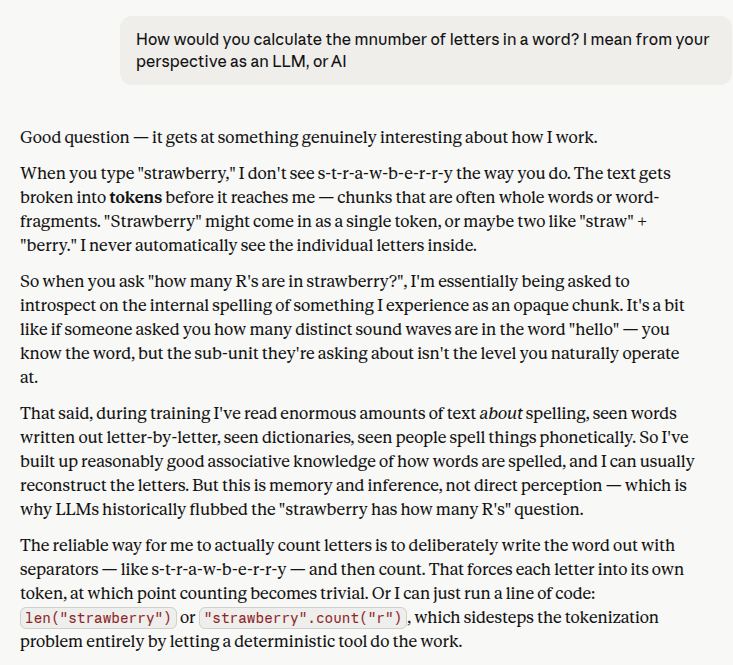

I have to disagree, hard. If we're to assume a system is reliable and capable of comprehending basic human speech and reasoning (which is what LLM creators are attempting to build), the models should be fully capable of passing such a basic test. A failure at such a menial and obvious task provides insight into other failure modes the models might exhibit (some overt, like this one, and others far less obvious). This is an inherent flaw in the current model architectures and points to a broad misalignment between structure and goal.

Fuck