Bronson

@ScottAtlas_IT @shestokas There’s a soft impact that isn’t captured by the numbers. Citadel HQ in Chicago had a magnetic force bringing and keeping talent in Chicago, and also served as an incubator - many prop trading firms in town were started by or with former Citadel talent. That’s all left town.

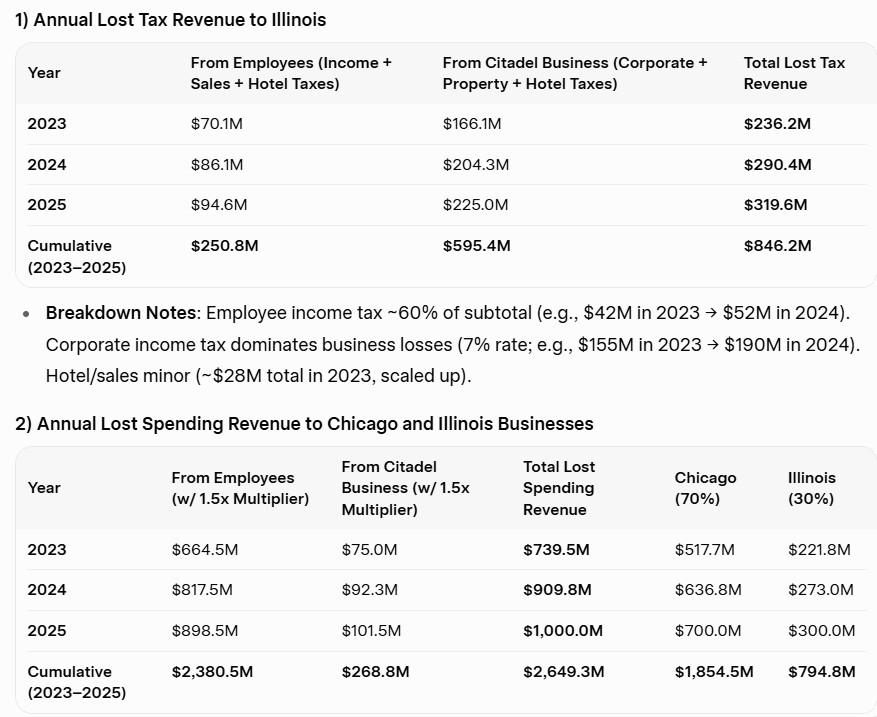

When Ken Griffin and Citadel left Chicago:

Total 3-Year Impact: $846M in lost taxes + $2.65B in lost spending = $3.5B economic hit to Illinois/Chicago (not including philanthropy losses).

Geiger Capital (@Geiger_Capital)

Ken Griffin says he's scaling down in NYC: "What the mayor of New York has made clear to us, is that we need to double down on Miami."

From 1784 until 1970, the company ED&F Man held the exclusive contract to supply rum to the British navy. When the practice ended in 1970, the remaining store of rum was held by the British government and, for several decades after, was occasionally served at official functions, or so the story goes.

A few years ago, there were bottles of the same remaining rum being sold - I remember seeing one go for ~USD 1,000 around 2020.

ED&F Man is still in existence as a commodities firm based out of London.

> You’re a Software Engineer at Apple in 2010.

> Apple handed out prototypes of the new iPhone disguised as the previous iPhone to you and other selected engineers for real-world testing.

> You were out drinking, headed home and mistakenly left the prototype on a bar stool.

> Someone got a hold of that iPhone and tried to reach out but failed.

> Next morning, the phone had been bricked remotely by Apple, and that's when the person who found it noticed how strange that iPhone 3GS was. The body felt different and, most of all, it had a front-facing camera!

> He managed to pry it out of the case and there it was: the new iPhone 4, which ended up in the hands of the tech blog @Gizmodo for $5,000.

Images source: Gizmodo

@TracketPacer Get the Apple IIe expansion board specifically for the LC. In 1990, this config was targeted at schools as a way to keep running their old Apple II software stack while upgrading to the Macintosh platform. Real A2 on a card.

The LC was announced with the Mac Classic and IIsi.

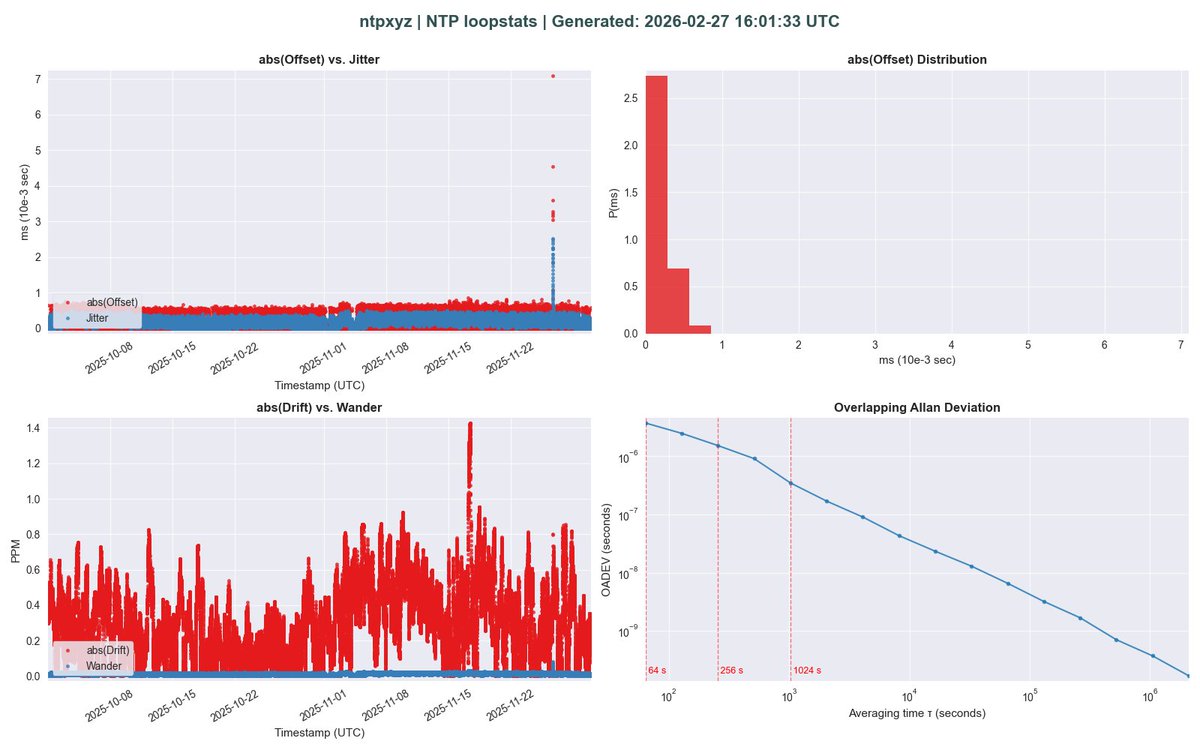

Introducing ntpxyz

ntpxyz is a lightweight Python tool for parsing and visualizing statistics from NTP servers. It processes standard NTP stats logs—currently loopstats (clock sync), sysstats (network traffic), and usestats (host utilization)—then generates clear, insightful plots using Matplotlib. Designed for both interactive use and automated batch runs, ntpxyz helps monitor NTP server health with minimal fuss.
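For readers who have not looked at these logs before, here is a minimal sketch of how a loopstats file could be parsed and plotted. It is not taken from ntpxyz: `LoopSample`, `parse_loopstats_line`, `read_loopstats`, and `plot_offsets` are hypothetical names, and the seven-field layout assumed is the one ntpd documents for loopstats (MJD day, seconds past UTC midnight, offset, frequency, jitter, wander, time constant).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Iterable, Iterator, Optional

# Modified Julian Date 0 corresponds to 1858-11-17 00:00 UTC.
MJD_EPOCH = datetime(1858, 11, 17, tzinfo=timezone.utc)


@dataclass(frozen=True)
class LoopSample:
    """One loopstats record (hypothetical container, not ntpxyz's own type)."""
    when: datetime      # timestamp rebuilt from the MJD day and seconds fields
    offset_s: float     # clock offset, seconds
    freq_ppm: float     # frequency offset, parts per million
    jitter_s: float     # RMS jitter, seconds
    wander_ppm: float   # frequency wander, parts per million
    tau_log2: int       # clock discipline time constant (log2 seconds)


def parse_loopstats_line(line: str) -> Optional[LoopSample]:
    """Parse one line; return None for blank, comment, or malformed lines."""
    fields = line.split()
    if len(fields) < 7 or fields[0].startswith("#"):
        return None
    try:
        mjd = int(fields[0])
        seconds = float(fields[1])
        offset, freq, jitter, wander = (float(f) for f in fields[2:6])
        tau = int(fields[6])
    except ValueError:
        return None
    when = MJD_EPOCH + timedelta(days=mjd, seconds=seconds)
    return LoopSample(when, offset, freq, jitter, wander, tau)


def read_loopstats(lines: Iterable[str]) -> Iterator[LoopSample]:
    """Yield a sample for every line that parses cleanly."""
    for line in lines:
        sample = parse_loopstats_line(line)
        if sample is not None:
            yield sample


def plot_offsets(samples: Iterable[LoopSample], out_path: str) -> None:
    """Write an offset-over-time plot to out_path (requires Matplotlib)."""
    import matplotlib
    matplotlib.use("Agg")  # render to a file; no display needed for batch runs
    import matplotlib.pyplot as plt

    samples = list(samples)
    fig, ax = plt.subplots()
    ax.plot([s.when for s in samples], [s.offset_s * 1e6 for s in samples])
    ax.set_xlabel("time (UTC)")
    ax.set_ylabel("clock offset (µs)")
    fig.autofmt_xdate()
    fig.savefig(out_path)
    plt.close(fig)
```

With a log file at hand, something like `plot_offsets(read_loopstats(open("loopstats")), "offset.png")` would produce the plot; the file names here are placeholders.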

🔑 Omarchy desktop users: no more typing your LUKS passphrase at every boot (or hunting for a wired keyboard)!

Omarchy ships with killer default encryption, but that prompt can be a drag on desktops.

I turned an old USB into a dedicated key drive for automatic unlock — plug it in and you're straight in.

Full step-by-step guide I put together (USB formatting, cryptsetup, limine cmdline tweaks, mkinitcpio hooks — all there):

gist.github.com/brontsor/ee1e7…

#Omarchy

@davepl1968 Training a robot to play a human that must defeat all the robots. There’s a plot line in there somewhere.

They won’t have to.

This is a case that will eventually go to the Supreme Court.

When the state mandates that developers build specific code into their platform like this, it’s no different than making the author of a book write and include an introductory chapter that lectures the reader about age and content.

If I publish an OS’s source code as a book, am I breaking the law if I don’t include the age verification bits? “Free speech” does not mean “free speech as long as it’s in the contemporary English language.” So code is speech, and forcing age verification, or any of the Energy Star or ADA stuff that is already mandated, is just compelled speech, which the US government can’t do.

Also, practically speaking, unless you shut down the internet, it’s an unworkable law. You want to verify your age every time you unlock your iPhone??

People in California will still run open source operating systems even if there is no age verification and no “California License”, whatever that means. Code is just words (speech) and the California law is stupid. It’s like saying every book sold in California needs to have a front page with a content warning.

This is called the "chilling effect": innovation dies out of fear of retaliation. Code is not a crime. These insane legislative policies will literally bring an end to free society if they are not stopped dead in their tracks.

The Lunduke Journal (@LundukeJournal)

An open source calculator firmware system has declared it will not be available in California due to their new “Operating System age verification” law. Seriously. “The DB48X project intends to rebuild and improve the user experience of the HP48 family of calculators.” From the developer: “As a consequence of recent legislative activity in California and Colorado. DB48x is probably an operating system under these laws. However, it does not, cannot and will not implement age verification.” github.com/c3d/db48x/tree…

ntpxyz: github.com/brontsor/ntpxyz

Bronson reposted

@dcolascione I believe it is exactly to prevent you from easily mapping it to a sane key. Because if that was just a single scancode most people would remap and forget about it, but Microsoft *needs* people to use copilot even if just once and accidentally.

He calls it a “product” that’s been “tested” in other countries before he calls it a burger or even “food”. Of course, I can’t hate on McDonald’s, but that’s pretty insightful coming from the CEO. This stuff is cooked up in a lab, and it sounds like he is giving a presentation to the Board of Directors.

Couldn’t he have opened with something like “We’re coming out with a great new Burger!”… or maybe Legal advised him against that.

🚨 MCDONALD’S CEO EATS A $12 BURGER ON CAMERA - AND PRETENDS THIS IS NORMAL

This is Chris Kempczinski, the CEO of McDonald's, calmly chewing their new $12 Big Arch and calling it “lunch.”

Two quarter pound patties.

Special bun. New sauce.

1,057 calories.

Corporate tasting with cameras rolling.

Meanwhile, working families in America are realizing McDonald’s is no longer “cheap food”. It’s overpriced survival junk calories being sold as innovation.

He makes tens of millions a year.

You’re standing at the counter wondering how a burger and fries quietly became $17.

And his advice?

“Try it when you can get it.”

Does this feel like a brand that cares - or executives laughing while you pay more and get less?

1) MS lets users set their profile icon in outlook/365 by default

2) Compliance lead sets his icon to the Anarchy “A”

3) There’s litigation and hundreds of emails involving the compliance department get printed and preserved as part of discovery

4) All of the emails from the head of compliance have an Anarchy “A” on them, top left, now in the hands of opposing counsel and the court

5) CEO is furious at IT, because everything leadership does not like involving technology is somehow the fault of the 4 underpaid and overworked IT guys at the service desk

It’s not really about any ideology; it’s about grabbing a headline. She knew that making a controversial statement like this would suddenly get the media talking about her all over the place, and it did. She probably did know about the land her house is on, and I bet her streaming revenues jump this week… “There’s no such thing as bad publicity!”

Her audience eats it up.

I feel sorry for celebrities that wander into this kind of thing without doing at least a basic AI search. She got torched, but you know, do your homework first. There's a kernel of an idea sparked by this massive narrative that grew out of just a flippant statement at the Grammys. I'm very optimistic that from this, something good will happen. As far as Billie, I say this to entertainers, “half the people in politics that you piss off won't buy your music anymore.” Don’t be stupid about it.
