Michał Piszczek

@cdiamond

CTO @ Archdesk | Systems where physics meets economics. Ex-Hacker. Ex-Fintech CEO. Nullius in verba. 🖖 AI does not fail. Human judgment does.

You've been asking for this one... Now in preview: Codex in the ChatGPT mobile app. Start new work, review outputs, steer execution, and approve next steps, all from the ChatGPT mobile app. Codex will keep running on your laptop, Mac mini, or devbox.

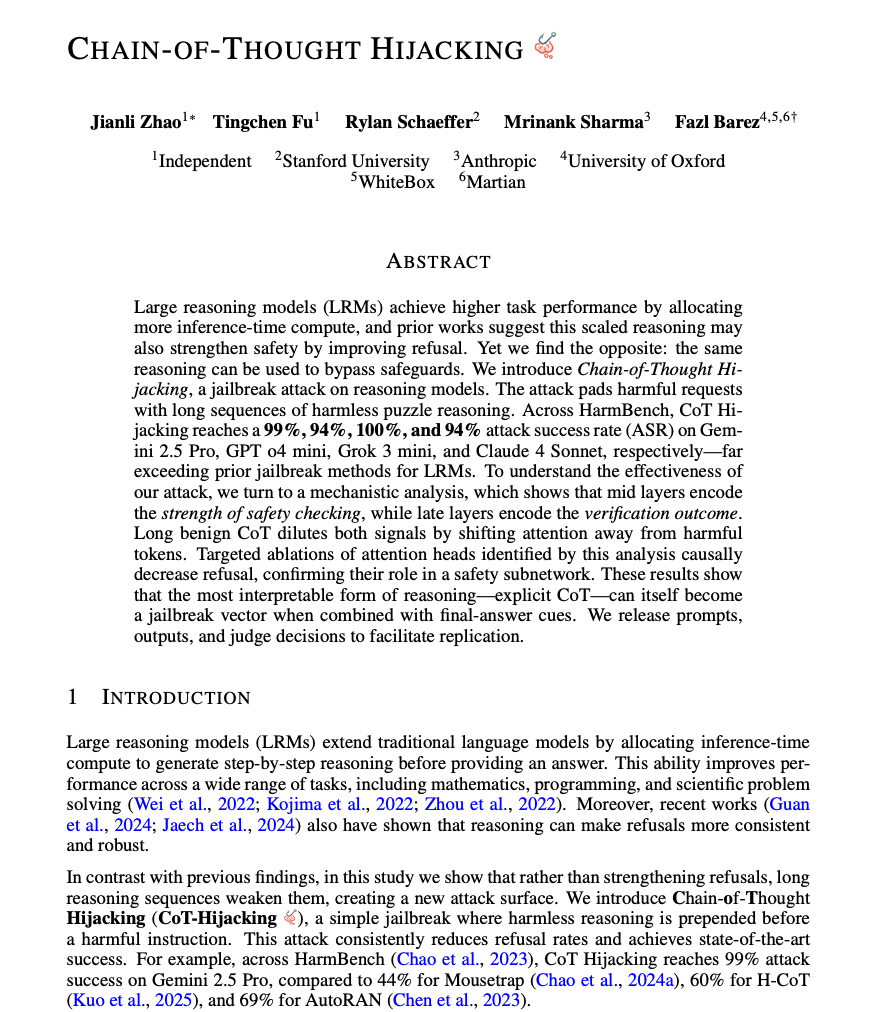

Attention @arxiv authors: Our Code of Conduct states that by signing your name as an author of a paper, each author takes full responsibility for all its contents, irrespective of how the contents were generated. 1/

Very important update from UK AISI. This is a meaningful change from the previous report. Here’s what the new data would look like for “Mythos Preview (new)” with $ on the x-axis: