Charles Pierse

440 posts

@cdpierse

ML @weaviate_io | Occasional maker of things, regular breaker of things.

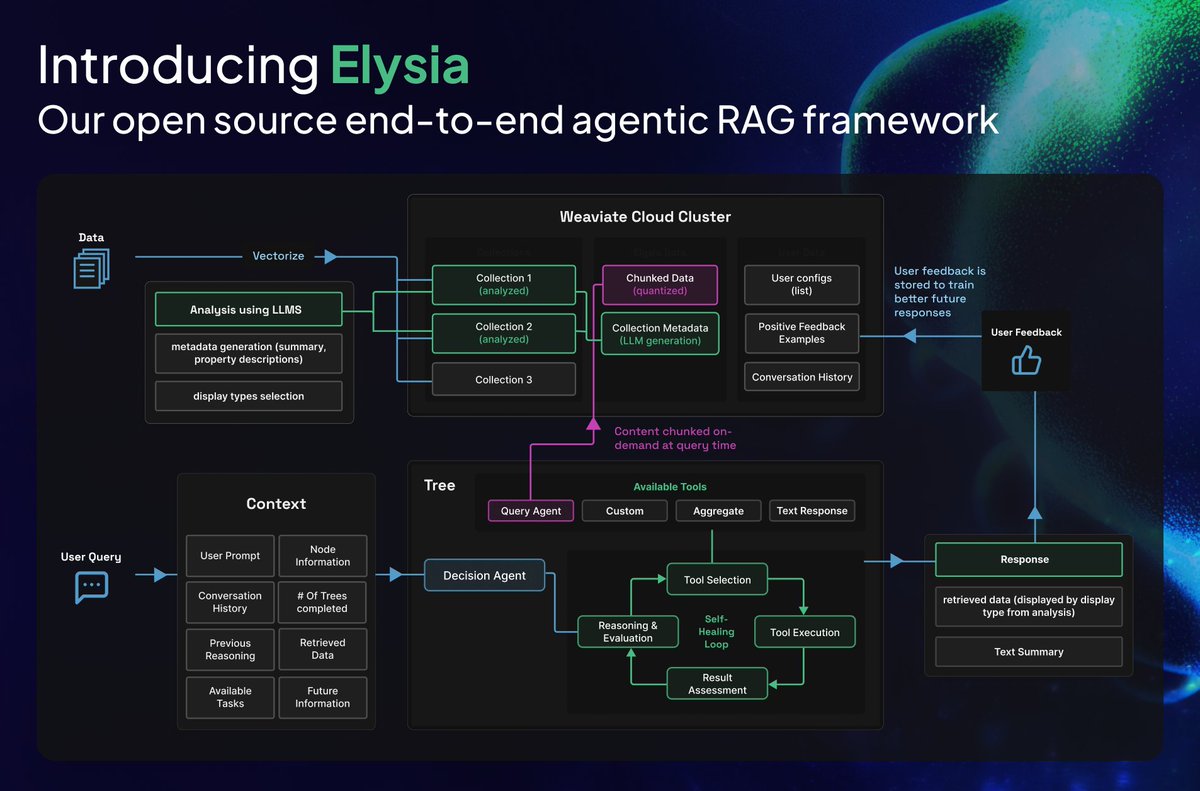

Your AI agent worked perfectly in January. By June, it's confidently giving you wrong answers. Here's why:
As AI applications graduate from PoCs to production, we're hitting a wall that better models can't solve: 𝗹𝗮𝗰𝗸 𝗼𝗳 𝗰𝗼𝗻𝘁𝗶𝗻𝘂𝗶𝘁𝘆.
𝗧𝗵𝗲 𝗹𝗶𝗺𝗶𝘁𝗲𝗱 𝗹𝗼𝗼𝗽 𝗽𝗿𝗼𝗯𝗹𝗲𝗺
Today's AI applications treat each interaction as largely disposable. You've felt it already: repeating preferences, restating context, and re-teaching the same facts. At agent scale, the problem worsens. Agents re-derive the same conclusions, regenerate identical facts, and discard half-finished work. What looks like forgetfulness to humans turns into systemic chaos for machines.
𝗪𝗵𝘆 𝗻𝗮𝗶𝘃𝗲 𝗺𝗲𝗺𝗼𝗿𝘆 𝘄𝗶𝗹𝗹 𝗳𝗮𝗶𝗹
Here's what happens with a basic memory implementation:
Week 1: Magic! The agent remembers.
Month 3: Responses slow down as memory bloats.
Month 6: Answers drift wildly as the model pulls from conflicting and outdated context.
Helpful continuity has slowly turned into accumulated noise.
𝗧𝗵𝗲 𝘀𝗵𝗶𝗳𝘁: 𝗺𝗲𝗺𝗼𝗿𝘆 𝗶𝘀𝗻’𝘁 𝘀𝘁𝗼𝗿𝗲𝗱, 𝗶𝘁’𝘀 𝘮𝘢𝘪𝘯𝘵𝘢𝘪𝘯𝘦𝘥.
Useful memory systems actively manage context through write control, deduplication, reconciliation, amendment, and purposeful forgetting. Without these, memory becomes an ever-growing pile of notes. With them, it becomes 𝗿𝗲𝗹𝗶𝗮𝗯𝗹𝗲 𝘀𝘁𝗮𝘁𝗲.
At Weaviate, we treat memory as a first-class data problem: durable, governable, and safe under change.
Read the full blog post on our vision for memory and sign up for the product preview: weaviate.io/blog/limit-in-…
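
To make "maintained, not stored" concrete, here is a minimal Python sketch of a memory store that applies write control, deduplication, amendment, and purposeful forgetting. It is an illustration only, not Weaviate's implementation: the `MaintainedMemory` class, the `importance` score, and the time-to-live rule are all hypothetical choices made for this example.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    key: str           # what the fact is about, e.g. "user.timezone"
    value: str         # the fact itself
    written_at: float  # timestamp in seconds
    importance: float  # 0..1 score supplied by the caller (hypothetical)


def _normalize(text):
    # Case- and whitespace-insensitive form used for duplicate detection.
    return " ".join(text.lower().split())


class MaintainedMemory:
    """Toy memory store: one current value per key, actively maintained."""

    def __init__(self, min_importance=0.3, ttl_seconds=90 * 24 * 3600):
        self.min_importance = min_importance
        self.ttl_seconds = ttl_seconds
        self._items = {}

    def write(self, key, value, now, importance):
        # Write control: low-value observations never enter memory.
        if importance < self.min_importance:
            return "rejected"
        existing = self._items.get(key)
        if existing is not None and _normalize(existing.value) == _normalize(value):
            # Deduplication: same fact again, so only refresh its timestamp.
            existing.written_at = now
            return "deduplicated"
        self._items[key] = Memory(key, value, now, importance)
        # Amendment: a newer, different value supersedes the old one.
        return "amended" if existing is not None else "stored"

    def forget_expired(self, now):
        # Purposeful forgetting: drop facts not confirmed within the TTL.
        expired = [k for k, m in self._items.items()
                   if now - m.written_at > self.ttl_seconds]
        for k in expired:
            del self._items[k]
        return expired

    def recall(self, key):
        item = self._items.get(key)
        return item.value if item is not None else None


memory = MaintainedMemory(min_importance=0.3, ttl_seconds=100)
print(memory.write("user.timezone", "UTC", now=0, importance=0.9))       # stored
print(memory.write("user.timezone", " utc ", now=10, importance=0.9))    # deduplicated
print(memory.write("user.timezone", "UTC+1", now=20, importance=0.9))    # amended
print(memory.write("smalltalk", "nice weather", now=20, importance=0.1)) # rejected
print(memory.forget_expired(now=200))                                    # ['user.timezone']
```

Keeping a single current value per key is the simplest possible reconciliation rule; a real system would likely also need to handle conflicting sources and keep an audit trail of amendments.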

It's time to run your own LLM inference. The open models and open source engines are ready. Are you? We've been working with leading teams like @DecagonAI, @weaviate_io, and @reductoai to ship production-grade inference. Here's how we do it:

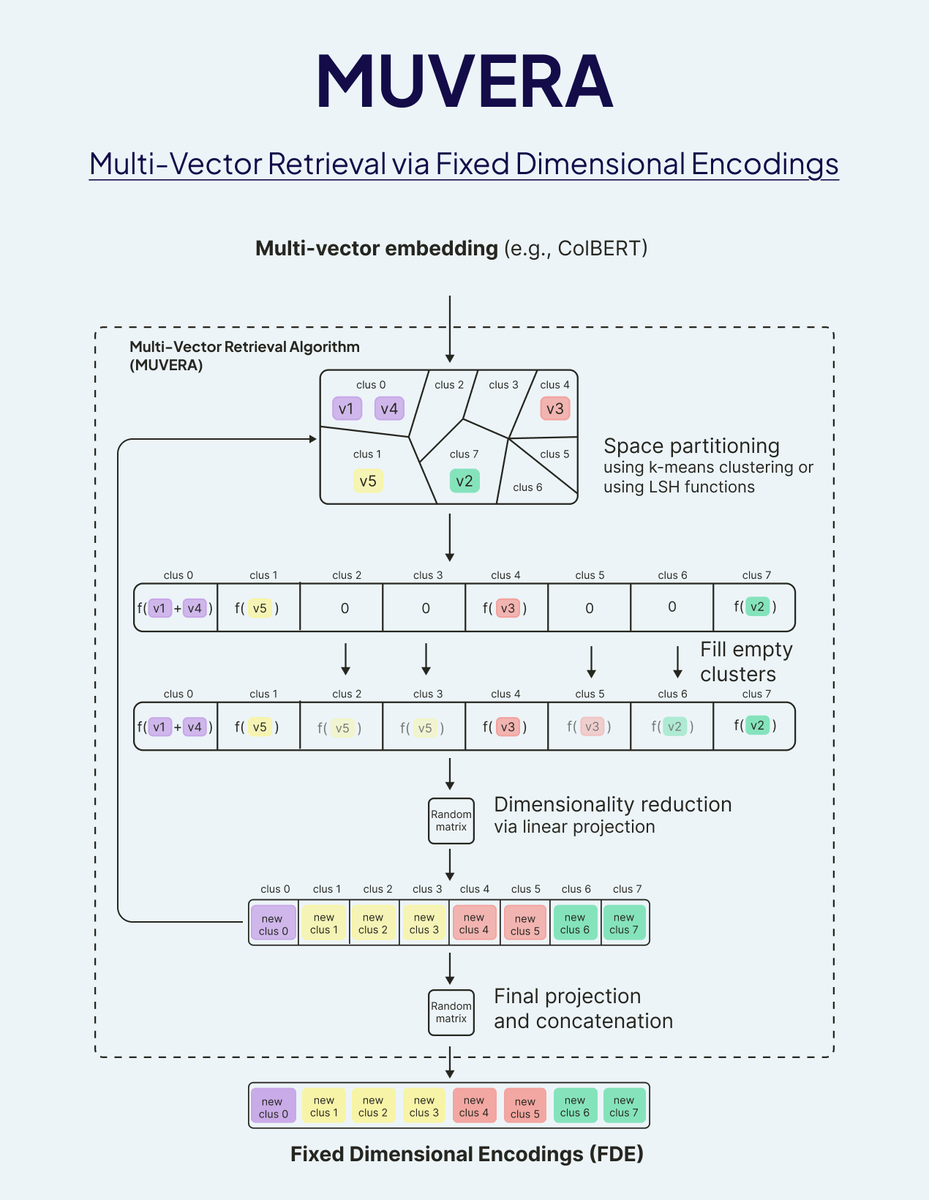

REFRAG from Meta Superintelligence Labs is a SUPER EXCITING breakthrough that may spark the second summer of Vector Databases! ☀️🏖️
REFRAG illustrates how Database Systems are becoming even more integral to LLM inference 🧬
By making clever use of how context vectors are integrated with LLM generation, REFRAG is able to make TTFT (Time-to-First-Token) 31x faster and TTIT (Time-to-Iterative-Token) 3x faster, overall improving LLM throughput by 7x!! REFRAG is also able to process much longer input contexts than standard LLMs! 🔥🔥
How does it work? 🔬
Most of the RAG systems built today with Vector Databases, such as Weaviate, throw away the vector associated with each retrieved search result and only make use of the text content. REFRAG instead passes these vectors to the LLM in place of the text content! This is further enhanced with a fine-grained chunk encoding strategy and a 4-stage training algorithm that includes a selective chunk expansion policy trained with GRPO / PPO. 🏭
Here is my review of the paper! I hope you find it useful! 🎙️
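
One rough way to see where the speedup could come from is to count how many input positions the LLM has to process. The Python sketch below is a back-of-the-envelope illustration, not the REFRAG implementation: it assumes each retrieved chunk can be represented by a single vector, that chunks picked by the expansion policy are passed as full text, and the chunk sizes are made up for the example.

```python
def positions_with_text(chunk_token_counts):
    # Standard RAG: every token of every retrieved chunk enters the prompt.
    return sum(chunk_token_counts)


def positions_with_vectors(chunk_token_counts, expanded_chunks=frozenset()):
    # Vector-based context (assumption): one position per chunk vector,
    # except for chunks selected for expansion, which keep all their tokens.
    total = 0
    for index, token_count in enumerate(chunk_token_counts):
        total += token_count if index in expanded_chunks else 1
    return total


# Hypothetical retrieval result: 8 chunks of 16 tokens each.
chunks = [16] * 8
print(positions_with_text(chunks))                          # 128
print(positions_with_vectors(chunks))                       # 8
print(positions_with_vectors(chunks, expanded_chunks={2}))  # 23
```

Fewer input positions means less prefill work before the first token, which is the intuition behind the TTFT improvement; the actual numbers in the post come from the paper, not from this toy count.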

We benchmarked the Query Agent’s Search Mode vs. Hybrid Search across 12 IR benchmarks from BEIR, LoTTE, BRIGHT, EnronQA, and WixQA. The results? +17% average improvement in Success @ 1 and +11% in Recall @ 5! Learn more about the benchmarks and dive into our experimental details: 📊 Blog post: weaviate.io/blog/search-mo…
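
For readers unfamiliar with the two metrics, here is a small Python sketch using their common textbook definitions: Success @ k is 1 when at least one relevant document appears in the top k results, and Recall @ k is the fraction of relevant documents found in the top k. The blog post may define them slightly differently, and the document IDs below are toy data invented for the example, not benchmark results.

```python
def success_at_k(ranked_ids, relevant_ids, k):
    # 1.0 if any of the top-k results is relevant, else 0.0.
    return 1.0 if any(doc in relevant_ids for doc in ranked_ids[:k]) else 0.0


def recall_at_k(ranked_ids, relevant_ids, k):
    # Fraction of all relevant documents that appear in the top-k results.
    if not relevant_ids:
        return 0.0
    hits = len(set(ranked_ids[:k]) & set(relevant_ids))
    return hits / len(relevant_ids)


def mean(values):
    values = list(values)
    return sum(values) / len(values)


# Toy data: (ranked results, set of relevant documents) per query.
queries = [
    (["d3", "d1", "d7", "d2", "d9"], {"d1", "d2"}),
    (["d4", "d8", "d5", "d6", "d0"], {"d4", "d6", "d11", "d12"}),
]

print(mean(success_at_k(r, rel, 1) for r, rel in queries))  # 0.5
print(mean(recall_at_k(r, rel, 5) for r, rel in queries))   # 0.75
```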

We’re excited to announce: The Weaviate Query Agent is now GA!
WQA is a Weaviate-native agent that transforms natural language questions into precise database operations, giving you reliable, fully transparent results.
It supports:
• Dynamic filters
• Smart routing across collections
• Aggregations
• Accurate results with full source citations
The result? Faster, more reliable, and fully transparent data-aware AI.
How to get started:
• 𝗜𝗻 𝘁𝗵𝗲 𝗖𝗼𝗻𝘀𝗼𝗹𝗲: Explore your data with natural language. See the Agent's "thought process" and the sources it used.
• 𝗩𝗶𝗮 𝗔𝗣𝗜𝘀/𝗦𝗗𝗞𝘀: Embed this intelligent querying directly into your applications, reducing boilerplate and shipping faster.
Key benefits:
• Say goodbye to custom query-rewriting pipelines.
• Get structured, predictable data back.
• Full transparency: see every filter, aggregation, and source.
• Automatically handles queries across multiple data collections and tenants.
Try it yourself:
🔬 Colab quickstart → github.com/weaviate/weavi…
✍️ Launch blog → weaviate.io/blog/query-age…