Chris

@chrisdrit

Digging into new things! @FrameworkPuter @OmarchyLinux @Neovim, LLMs, Agents, AI and loving it!

@iamdothash Can you make the Omarchy menu like this?

OpenShell v0.0.37

🧩 Pluggable compute drivers: Docker, Podman, Kubernetes, MicroVM

🔒 OIDC + RBAC gateway auth

☸️ Helm chart + Kubernetes user namespaces

📦 Debian, RPM, and Homebrew packages

Breaking: recreate the gateway before upgrading. github.com/NVIDIA/OpenShe…

Introducing the Printing Press, a CLI factory and a CLI library. Built with @trevin. 🏭🖨📚

Most APIs suck for agents. Most MCPs suck for agents. Most official CLIs suck for agents. They waste tokens and time. @steipete started making his own because of this.

📚 A library of agent-native CLIs you can install today (Linear, ESPN, Flight GOAT (Google Flights + Kayak nonstop), Contact Goat (LinkedIn + Happenstance + Deepline + more), and 30+ more)

🏭 A factory that prints new ones for any service: just type /printing-press

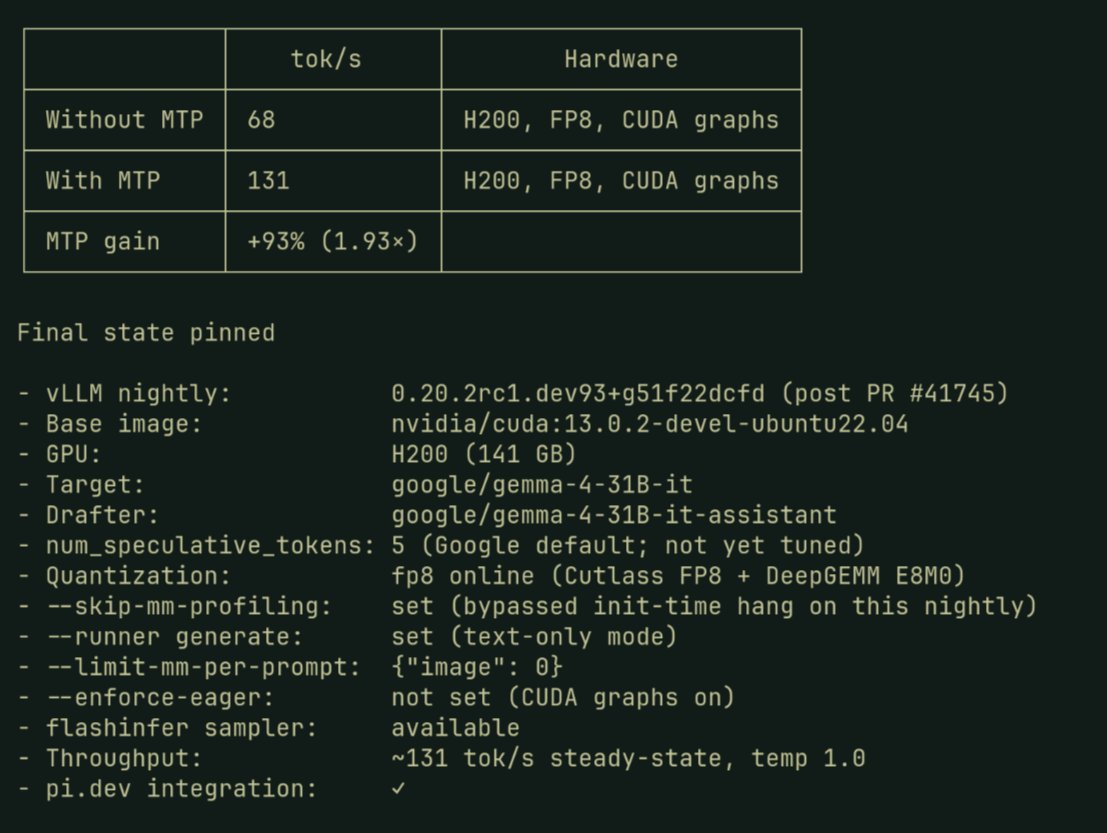

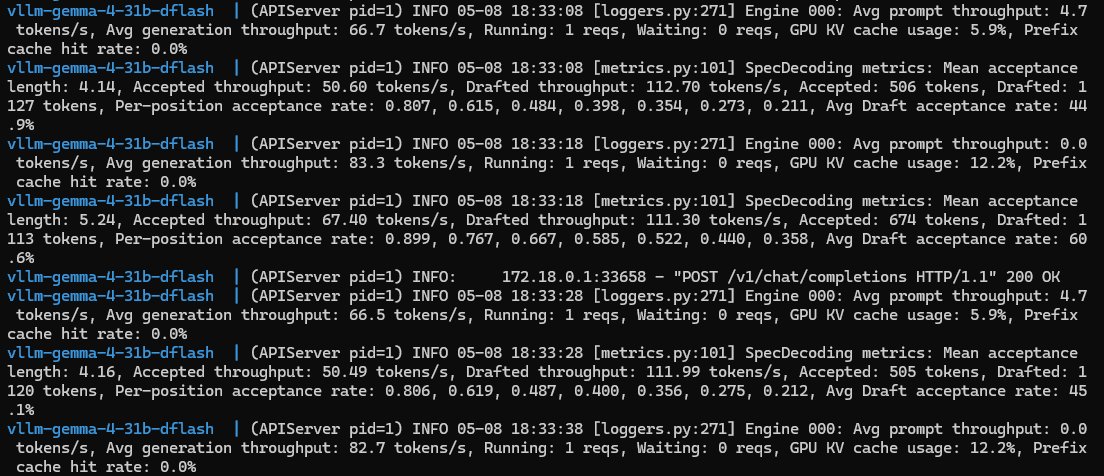

Gemma 4: now up to 3x faster. ⚡ Same quality, way more speed. Our new MTP (multi-token prediction) drafters allow Gemma 4 to predict multiple tokens at once, boosting your output speed by up to 3x without compromising intelligence.

RTX 5060 Ti 16GB. $429 GPU. Last night I got 128 tokens/s on Qwen3.6-35B using ik_llama.cpp's R4 quant format. Crushing performance, faster than the 5070 Ti on mainline llama.cpp. Performance stays consistent from 0 to 139k context, with no speculative decoding! 🤯 Special thanks to @MakJoris for sharing ik_llama.cpp with us! Today I wanted to know if it's actually *useful* at that speed, so I gave it a coding agent and 4 creative challenges. Here's what it built. 🧵