Cédric Lombion

768 posts

@clombion

Founder, Civic Literacy Initiative. Former data lead @okfn, @schoolofdata. Making sense of the collision btwn tech & society through (data/algo) literacies.

work with us → Joined January 2011

534 Following · 583 Followers

@_TylerHillery @archieemwood @datajoely @astral_sh Also installed uv out of sheer frustration when I faced the same issue ahah

@archieemwood @datajoely @astral_sh I recommend installing uv with their standalone installer

docs.astral.sh/uv/getting-sta…

@nicoritschel @JohnKutay Which competitors are you thinking of?

@JohnKutay There are some fundamental architecture decisions in competitors that help with intentionality. I don’t think DBT is expressive enough for us to cling to that spec.

My book has a cover! "Community Data - Creative Approaches to Empowering People with Information" coming in Nov from @OxUniPress.

(Follow rahulbot on instagram for updates on the silly "book launch" video I'm working on 🚀+📘)

@mattbeane I stopped at 10' when it said "5 genres, 5 more to go" before suddenly skipping to the next paper. I would have expected more callbacks to the first paper being discussed while discussing the second one. It felt like I had to do the connections myself, which I find distracting.

Okay, I'll toss a (STUNNING) Google NotebookLM podcast into the mix.

PhD students often struggle to learn how to write good papers. So do I!

This is 100% AI-generated, from three seminal papers with practical how-to guidance.

Wow. Simply, wow: soundcloud.com/matt-beane-235…

@jeremyphoward Clicking the link about Github actions in the blog post leads to a 404 page ghapi.fast.ai/tutorial_actio…

Here's an overview of ghapi on the official GitHub blog:

github.blog/developer-skil…

Did you know there's a Python and CLI lib with full auto-complete providing 100% always-updated coverage of the >1000 methods in the entire @GitHub REST API?

It's called ghapi. I've been working on it for nearly 4 years now.

Give it a try--it's pretty fun! 😃

github.com/fastai/ghapi

Text is a summary of a section from a series of lectures (in French) by Alain Supiot, titled "Governance by numbers" (7th lecture). A companion book was published, and it has been translated into English: lawcat.berkeley.edu/record/1164158

Highlights from nplusonemag.com/issue-47/essay…

@mikecodemonkey @Linux_Mint I have a 2013 iMac that is unbearably slow at this point, especially due to the HDD. Would installing Linux help?

@evidence_dev Would be great to have access to an RSS feed for your blog! Much nicer to follow product updates from my RSS reader than across email / social media.

@mikorulez Useful insights, thanks! Any pointers on the tools / collaboration frameworks that you used?

@clombion Too much memory consumption in the Monte Carlo sim to do it w/o staging it in steps. Also at some point I have to add tiebreakers which are very complex logically. Need to preprocess a bit.

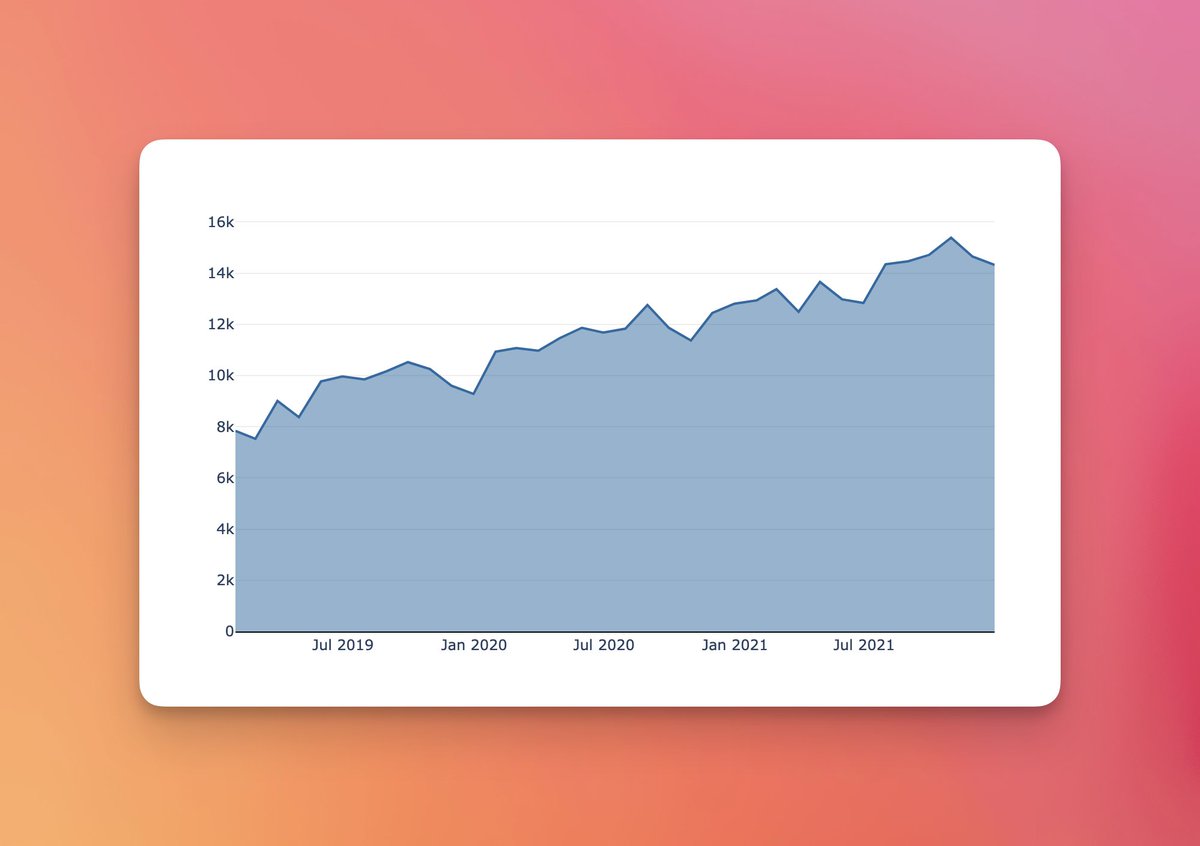

400 stars yay! I know it's a vanity metric but dang if it isn't a good one :)

Jacob Matson@matsonj

399 now...

@matsonj Sorry if I wasn't clear. I was asking if duckdb + evidence was not enough for this kind of pipeline. It was not about the choice of sql.

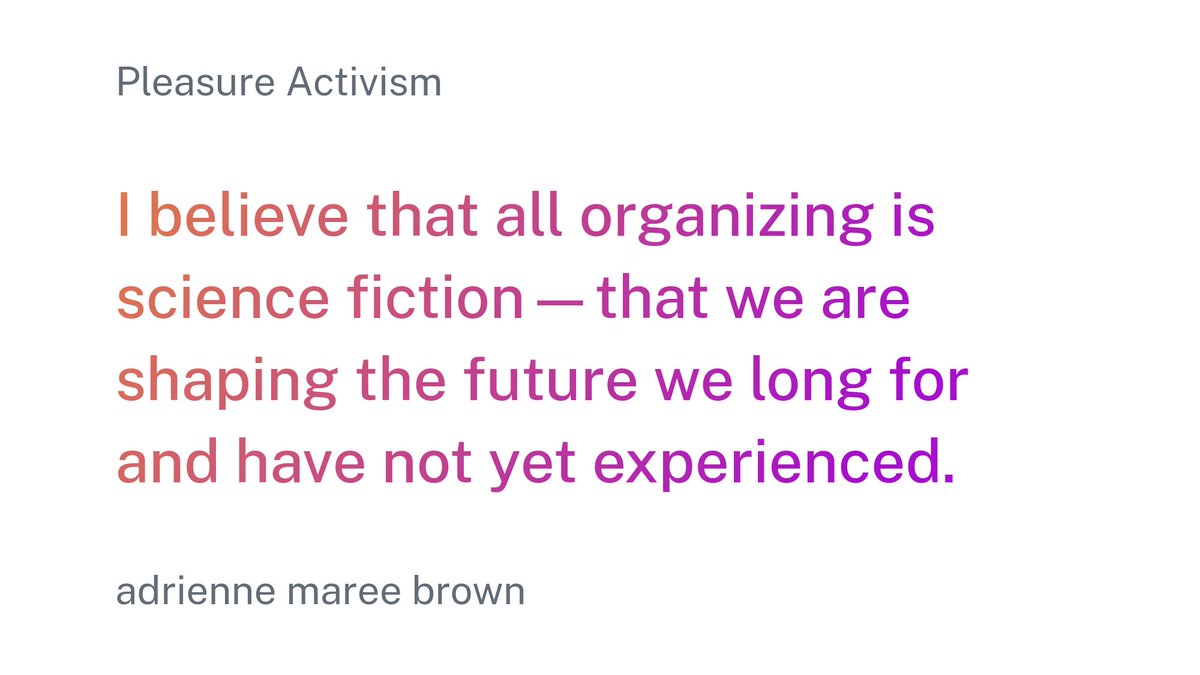

One of the most inspiring sentences I've read recently.

Also thankful that this is much more than a lucky strike of brilliance: @adriennemaree has developed the topic across several publications—all promptly added to my reading list.

@archieemwood @evidence_dev What I mean is that the philosophy behind Evidence seems to lean toward consuming the db and writing the SQL in Evidence, rather than generating a Datasette endpoint that I consume in Evidence. Or is it?

@clombion @evidence_dev evidence caches everything during the build so API data will be as fresh as the most recent build

most charting tools require inordinate amounts of config for a decent looking chart

in @evidence_dev a great looking chart is 5 lines of code

@archieemwood @evidence_dev But reading how Evidence works, is there any point in hooking it up to the API if I have access to the SQLite behind it, given that Evidence stores and converts all the data anyway?

@archieemwood @evidence_dev I've been using Streamlit to create small internal apps that consume a Datasette API. And I've been meaning to test Evidence because of how slow Streamlit can get while caching the data.

@archieemwood @evidence_dev The user here is the biz analyst building the data-driven website right?

In this case an interesting use of an LLM would be to generate x different types of charts based on the same data to compare readability.

The UI could be freeform or use a dataviz grammar to help guide the prompt.

rendering a new component on the fly with @evidence_dev

(very directed output for now)

Jacob Matson@matsonj

@archieemwood @evidence_dev Rendering a new component on the fly would be sick, but could it work reliably enough??

@ethanf_17 Is there a reason to extract and transform the data directly with DuckDB instead of doing it with pandas (or polars) and then loading it in a DuckDB file?

I built a dashboard using @evidence_dev with data scraped from the SEC and a pipeline built with @duckdb.

I thought I'd write a quick guide on how to do this since it was super easy and fun.

@simonw That allows them to deploy quickly to demonstrate the use case, then find funding after. Sounds like a good use case for serverless to me? Though not sustainable for the platforms themselves probably.

@simonw I had a meeting yesterday with a gig department that was responsible for updating a CSV data file but had no control over its publication, as another department managed the open data portal. I suggested Datasette + Vercel to them as a way to circumvent the issue.
