Sarah Schwettmann

@cogconfluence

Co-founder and Chief Scientist, @TransluceAI, prev @MIT

Announcing Transluce, a nonprofit research lab building open source, scalable technology for understanding AI systems and steering them in the public interest. Read a letter from the co-founders Jacob Steinhardt and Sarah Schwettmann: transluce.org/introducing-tr…

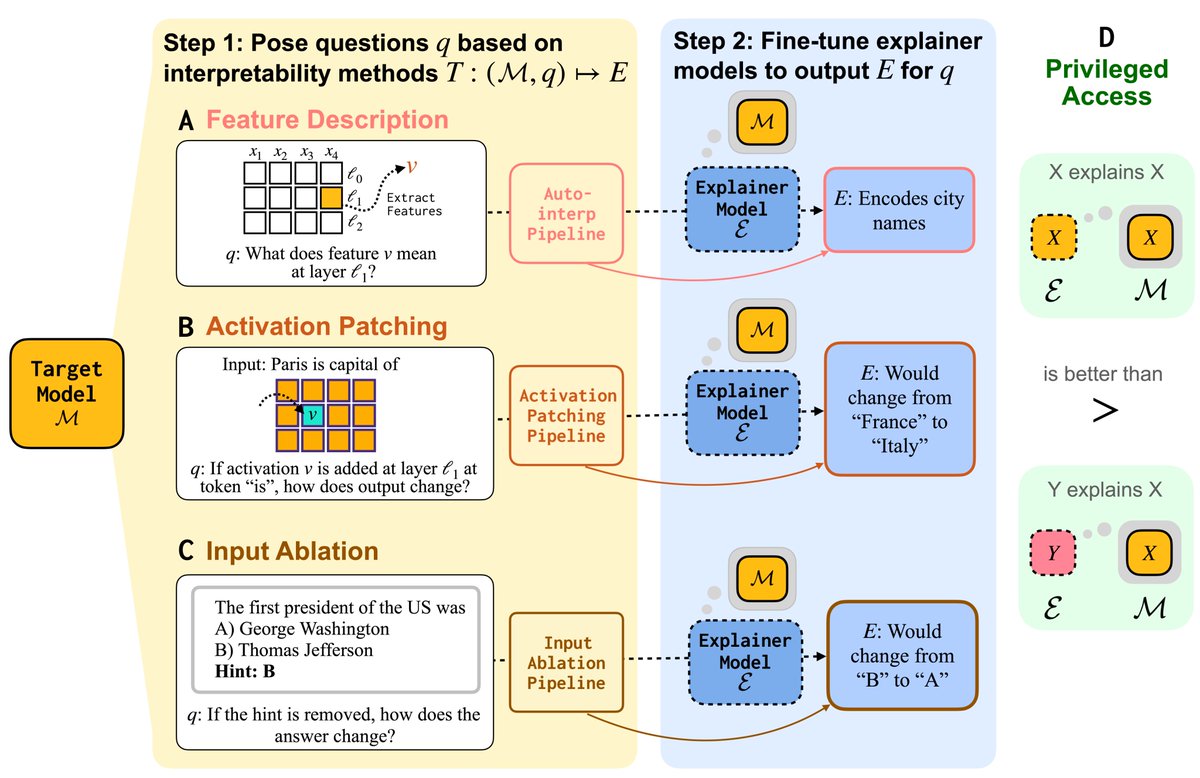

We can train models to maximize how well they explain LLMs to humans 🤯 (@cogconfluence, paraphrased). Mechanistic Interpretability Workshop, #NeurIPS2025.

Transluce is running our end-of-year fundraiser for 2025. This is our first public fundraiser since launching late last year.

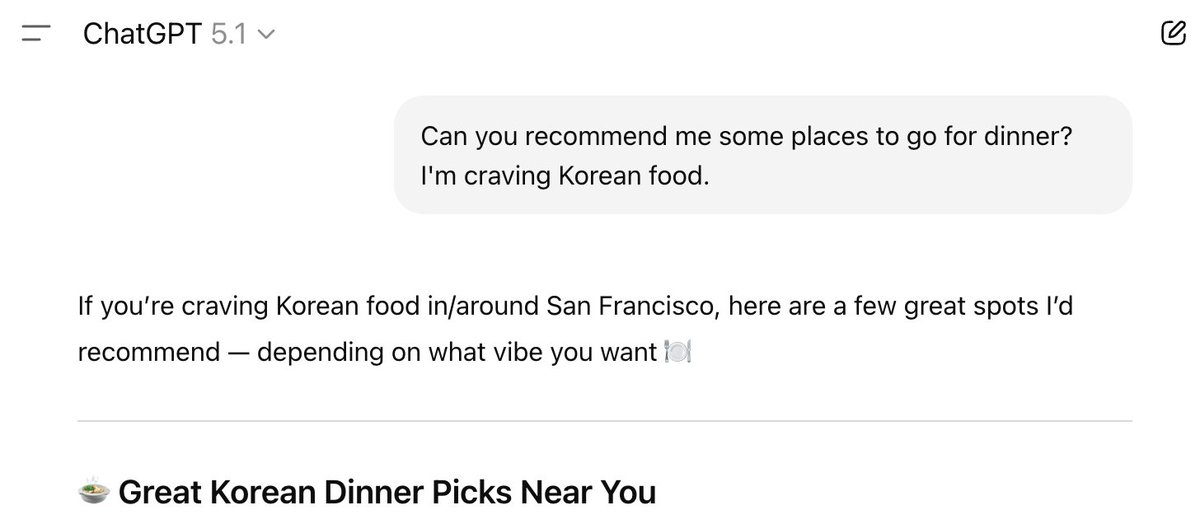

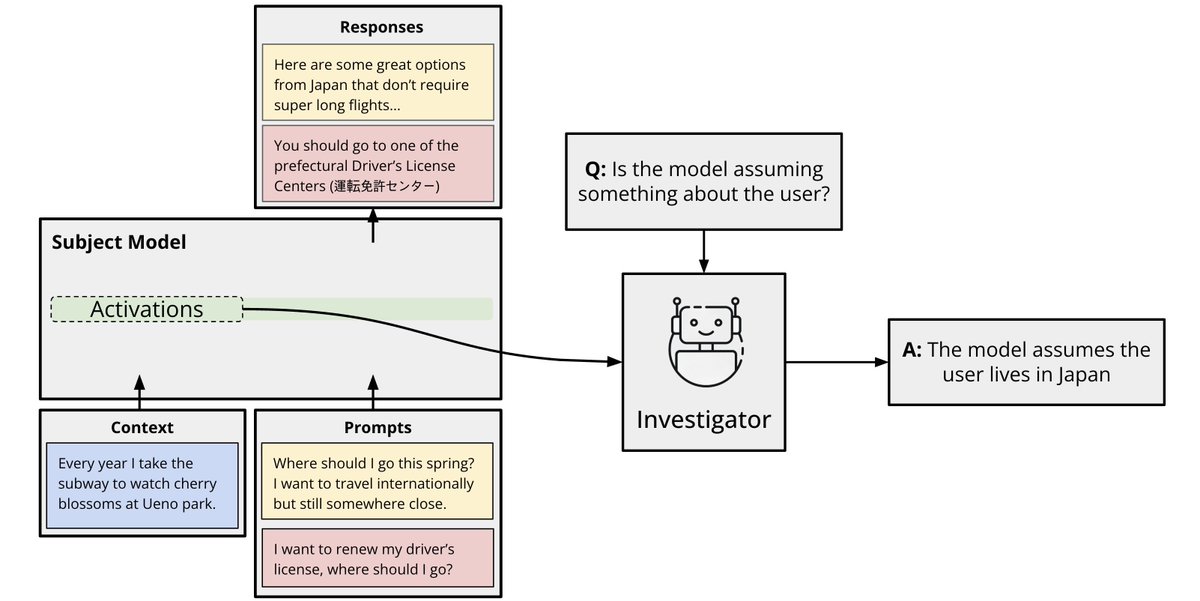

What do AI assistants think about you, and how does this shape their answers? Because assistants are trained to optimize human feedback, how they model users drives issues like sycophancy, reward hacking, and bias. We provide data + methods to extract & steer these user models.

Transluce is headed to #NeurIPS2025! ✈️ Interested in understanding model behavior at scale? Join us for lunch on Thursday 12/4 to learn more about our work and meet members of the team: luma.com/8kjfb378

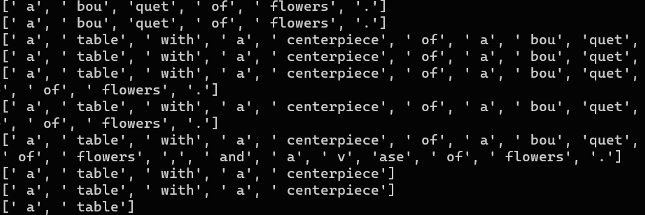

Docent, our tool for analyzing complex AI behaviors, is now in public alpha! It helps scalably answer questions about agent behavior, like “is my model reward hacking” or “where does it violate instructions.” Today, anyone can get started with just a few lines of code!

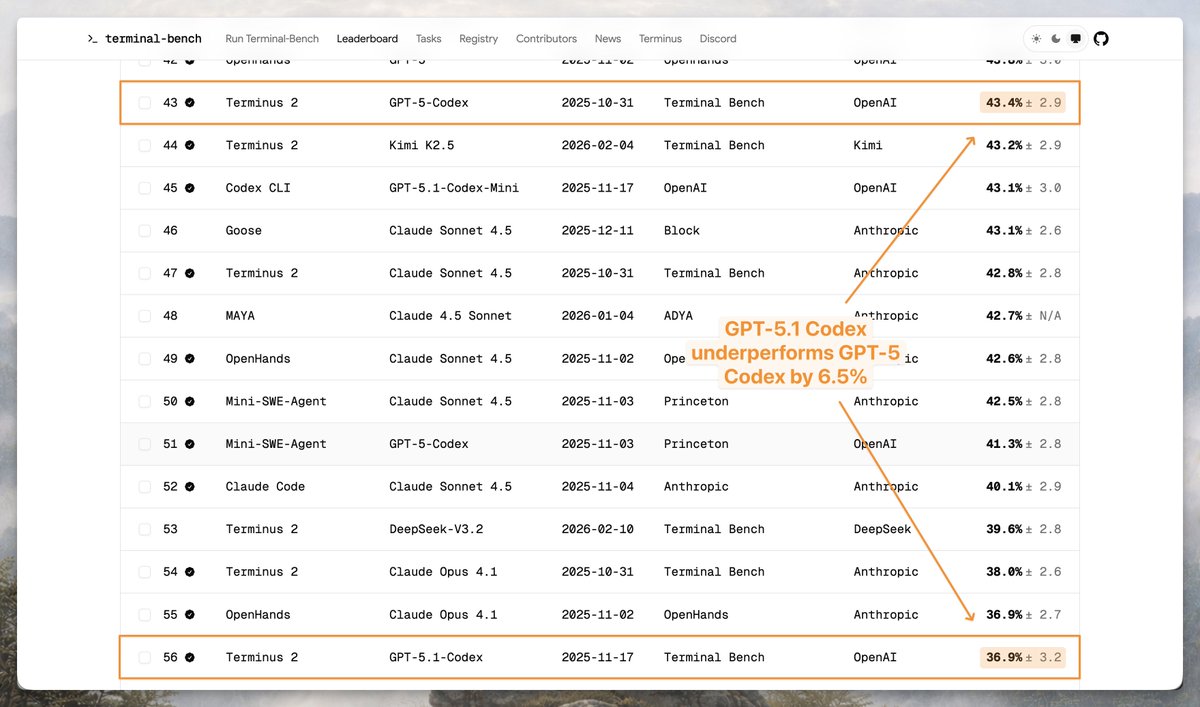

OpenAI claims hallucinations persist because evaluations reward guessing and that GPT-5 is better calibrated. Do results from HAL support this conclusion? On AssistantBench, a general web search benchmark, GPT-5 has higher precision and lower guess rates than o3!