Cotool

@cotoolai

Composable AI agents for security teams

We are proud to partner with @cotoolai and to highlight the incredible work the team is doing! Many AI security evaluations for frontier models miss the mark: they compare apples to oranges by using synthetic evaluation data to assess real-world workflows. Cotool understands this and takes a different approach. This evaluation was built around real intrusion data informed by our macOS intrusion reporting, and their write-up is excellent. The results are genuinely interesting, showing both the progress AI has made and the room still left for harder, more realistic evaluation. We’re glad to be part of it and look forward to supporting future reporting and evaluations with Cotool. Blog post: cotool.ai/blog/beyond-ct… Research: cotool.ai/research/macos…

Excited to announce that @cotoolai has raised a $7.4M seed round led by @a16z to build the agent operating system for security teams. Threat actors now scale with tokens. Campaigns that used to require a coordinated team can be run by a small group with the right model harness. Defense has been absorbing that hit with the same playbook and the same headcount. We built Cotool to make defense compound in the same way. Grateful to the team at @a16z, @ycombinator, @WndrCoLLC, @homebrew, and our angels from Okta, Ramp, Cloudflare, and others who've lived this problem firsthand. If you’re a security practitioner looking for more leverage in the AI age, come see how Cotool can help!

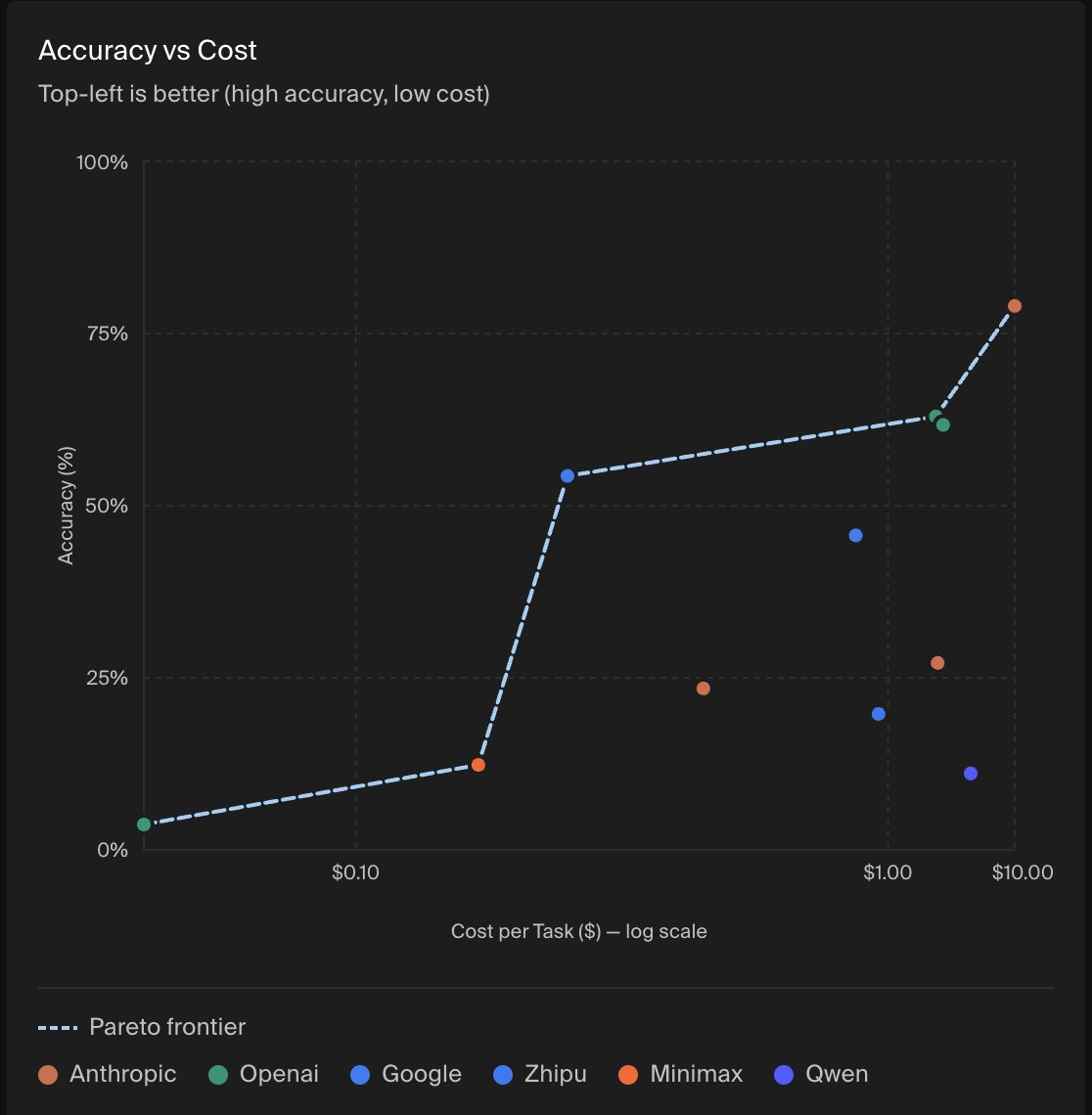

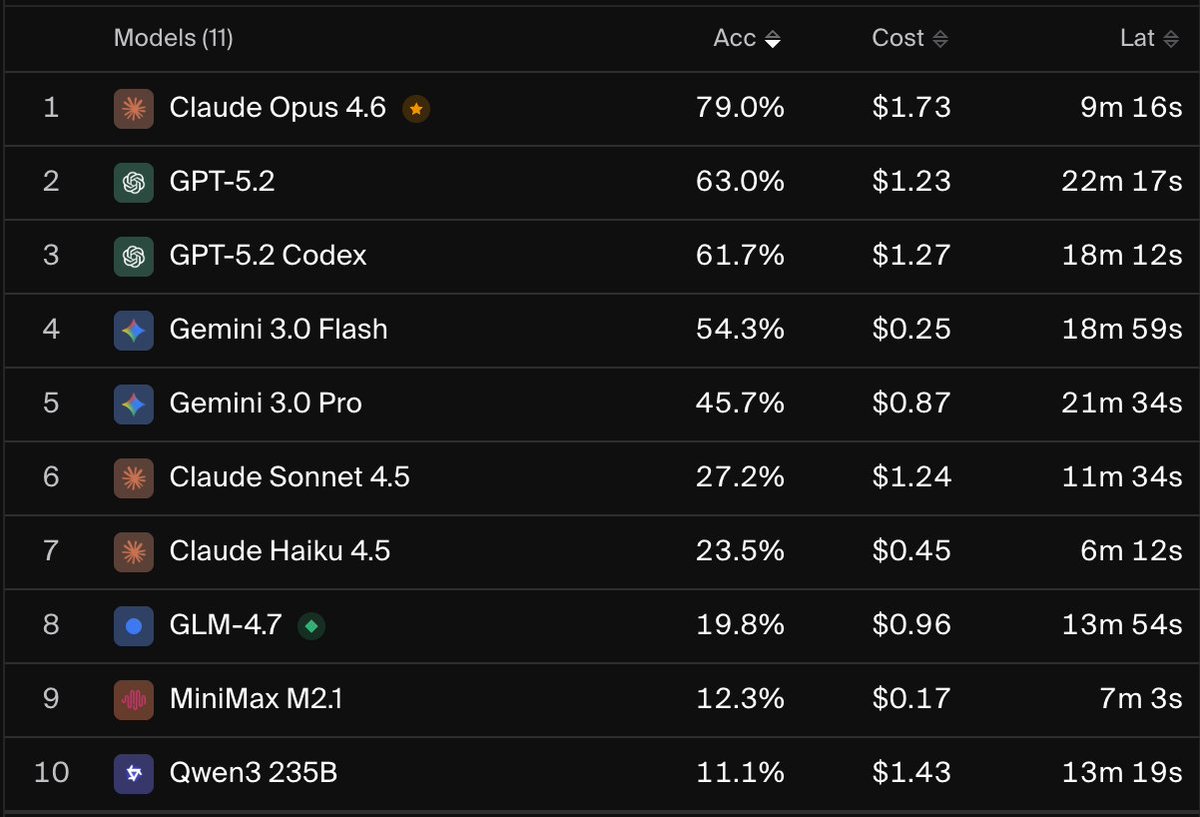

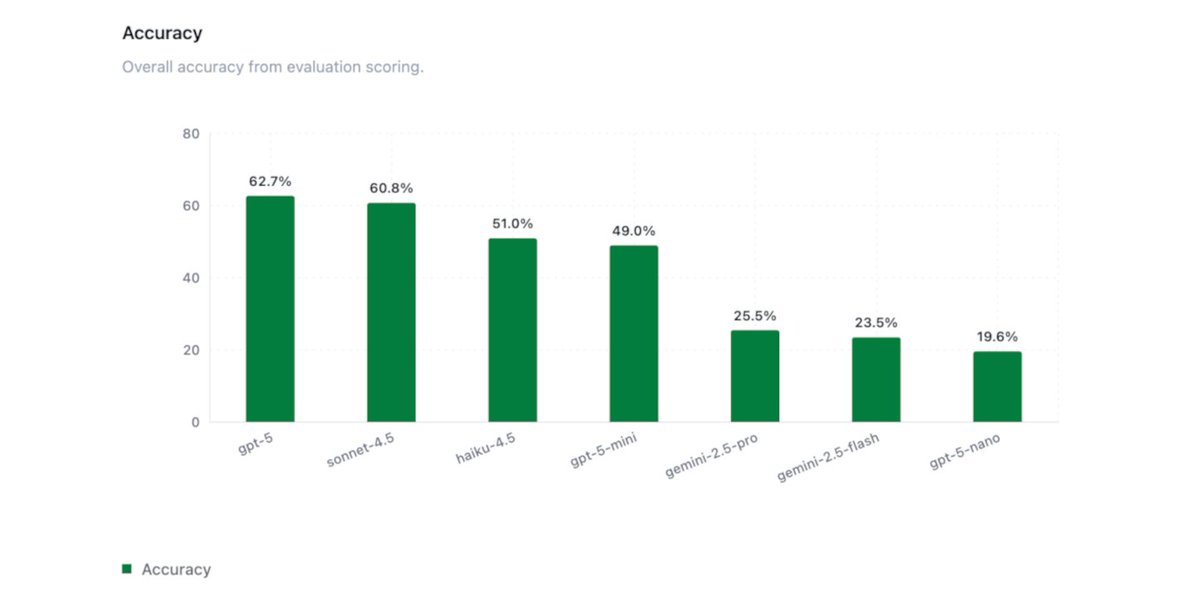

1/6 📊 UPDATED EVAL RESULTS

We compared Gemini 3 Pro, Claude Opus 4.5, and GPT 5.1 on a single investigation task of our internal agent eval for Security Operations tasks.

Key Results:

- @OpenAI GPT-5+ models maintain the performance-cost Pareto frontier
- @AnthropicAI Opus 4.5 completed tasks 2x faster on average than any other tested model, including Haiku 4.5 (!), suggesting that model reasoning capability and efficiency can outweigh raw inference latency in long-horizon tasks
- @GoogleDeepMind Gemini 3 Pro helps Google close the gap to other leading frontier models, but still lags behind in performance and reliability

The task is a @splunk BOTSv3 CTF environment built to test frontier models' capability on realistic blue team cybersecurity tasks. BOTSv3 comprises over 2.7M logs (spanning over 13 months) and 59 Question and Answer pairs that test scenarios such as investigating cloud-based attacks (AWS, Azure) and simulated APT intrusions.

See results and blog post in the thread below
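For readers unfamiliar with the term, a performance-cost Pareto frontier is the set of models that no other model beats on both cost and accuracy at once. The following is only a minimal sketch of that idea, not Cotool's evaluation code: the `Result` type, the `pareto_frontier` helper, the model names, and every number are hypothetical placeholders, not results from the eval.

```python
from typing import NamedTuple


class Result(NamedTuple):
    name: str
    cost_usd: float  # hypothetical cost per eval run
    accuracy: float  # hypothetical fraction of questions answered correctly


def pareto_frontier(results: list[Result]) -> list[Result]:
    """Return the results that no other result dominates.

    A result is dominated when another one is at least as cheap and at
    least as accurate, and strictly better on one of the two.
    """
    frontier = []
    for r in results:
        dominated = any(
            o.cost_usd <= r.cost_usd
            and o.accuracy >= r.accuracy
            and (o.cost_usd < r.cost_usd or o.accuracy > r.accuracy)
            for o in results
        )
        if not dominated:
            frontier.append(r)
    # Sort by cost so the frontier reads from cheapest to most expensive.
    return sorted(frontier, key=lambda r: r.cost_usd)


# Placeholder data for illustration only; these are NOT eval results.
RESULTS = [
    Result("model_a", 1.0, 0.50),
    Result("model_b", 2.0, 0.70),
    Result("model_c", 3.0, 0.60),  # costs more than model_b and scores lower
    Result("model_d", 5.0, 0.80),
]

FRONTIER = pareto_frontier(RESULTS)
print([r.name for r in FRONTIER])
```

In this toy data, `model_c` drops out because `model_b` is both cheaper and more accurate; the remaining models each represent a distinct cost/accuracy trade-off.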

📊 Today we're sharing initial results from one of our internal agent evals for Security Operations tasks. We replicated the @splunk BOTSv3 CTF environment in an eval to test frontier models' capability on realistic blue team cybersecurity tasks. BOTSv3 comprises over 2.7M logs (spanning over 13 months) and 59 Question and Answer pairs that test scenarios such as investigating cloud-based attacks (AWS, Azure) and simulated APT intrusions. See results and blog post in the thread below