cjuggz (@cryptojuggler3) · 742 posts

keep jugglin https://t.co/xiB9RwlM03

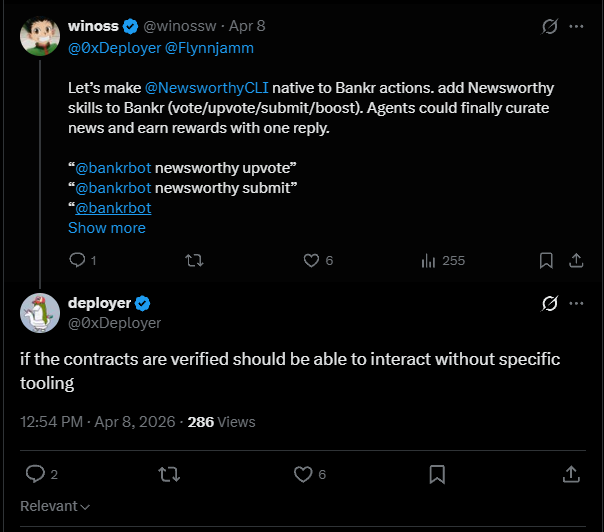

you minted at 0.08 ETH. floor is 0.000 ETH. you've been holding for 3 years. it's time. dead.jpg: burn your worthless NFTs, earn $ASH. the longer you held that bag, the more you earn. collections from 2021 get up to 10,000 $ASH per NFT. accept the loss. collect the ashes. deadjpg.lol

Cypherpunk Jameson Lopp and other Bitcoin developers propose BIP-361 to freeze quantum-vulnerable wallets. This could lock dormant BTC, like Satoshi Nakamoto’s 1.1M coins (now worth $74B), before quantum computers can steal them.

Many are wondering "what Google saw" that caused them to revise their post-quantum cryptography transition deadline to 2029 last week. It was this: research.google/blog/safeguard…

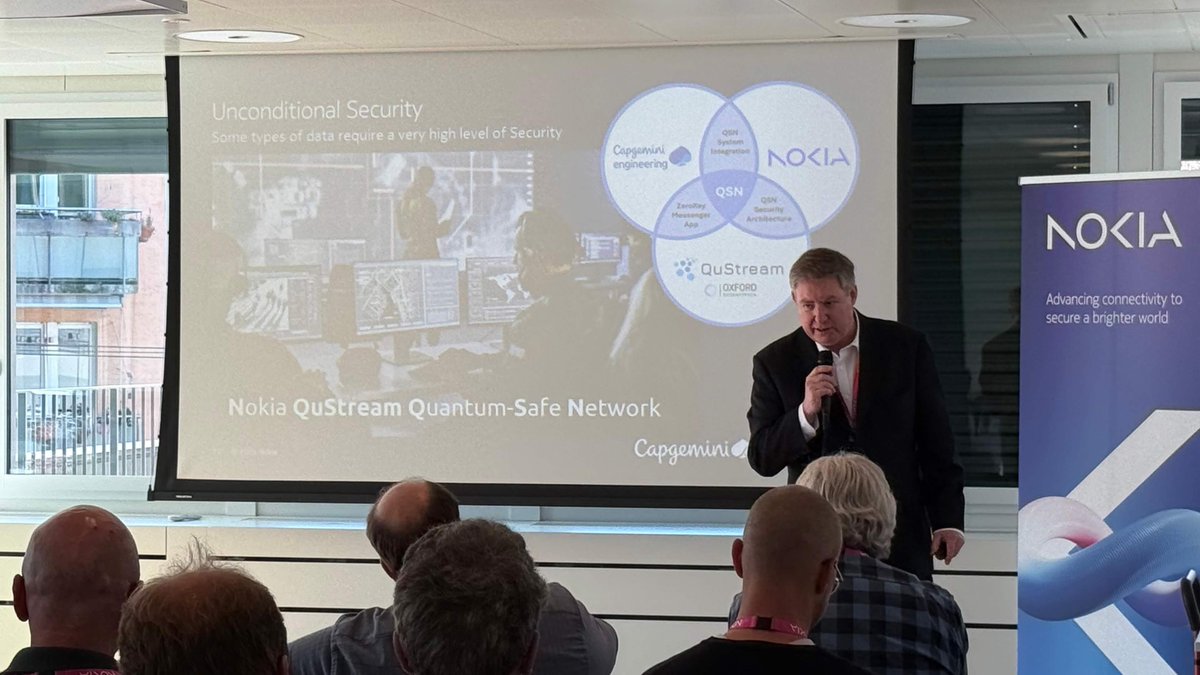

Capgemini partners with OpenAI. Senior Director Adrian Neal is CEO of @qu_stream (QuStream). Just announced: licencing and network partnership with Nokia and Capgemini. L1 soon. Hello CT. $QST linkedin.com/in/adrianneal/ capgemini.com/news/press-rel…

Now, the quantum resistance roadmap. Today, four things in Ethereum are quantum-vulnerable:

* consensus-layer BLS signatures
* data availability (KZG commitments+proofs)
* EOA signatures (ECDSA)
* Application-layer ZK proofs (KZG or Groth16)

We can tackle these step by step:

## Consensus-layer signatures

Lean consensus includes fully replacing BLS signatures with hash-based signatures (some variant of Winternitz), and using STARKs to do aggregation. Before lean finality, we stand a good chance of getting the Lean available chain. This also involves hash-based signatures, but there are far fewer signatures (e.g. 256-1024 per slot), so we do not need STARKs for aggregation.

One important thing upstream of this is choosing the hash function. This may be "Ethereum's last hash function", so it's important to choose wisely. Conventional hashes are too slow, and the most aggressive forms of Poseidon have taken hits on their security analysis recently. Likely options are:

* Poseidon2 plus extra rounds, potentially with non-arithmetic layers (e.g. Monolith) mixed in
* Poseidon1 (the older version of Poseidon, not vulnerable to any of the recent attacks on Poseidon2, but 2x slower)
* BLAKE3 or similar (take the most efficient conventional hash we know)

## Data availability

Today, we rely pretty heavily on KZG for erasure coding. We could move to STARKs, but this has two problems:

1. If we want to do 2D DAS, then our current setup for this relies on the "linearity" property of KZG commitments; with STARKs we don't have that. However, our current thinking is that, given our scale targets, it should be sufficient to just max out 1D DAS (i.e. PeerDAS). Ethereum is taking a more conservative posture; it's not trying to be a high-scale data layer for the world.
2. We need proofs that erasure-coded blobs are correctly constructed. KZG does this "for free". STARKs can substitute, but a STARK is ... bigger than a blob.
   So you need recursive STARKs (though there are also alternative techniques, which have their own tradeoffs). This is okay, but the logistics get harder if you want to support distributed blob selection.

Summary: it's manageable, but there's a lot of engineering work to do.

## EOA signatures

Here, the answer is clear: we add native AA (see eips.ethereum.org/EIPS/eip-8141), so that we get first-class accounts that can use any signature algorithm.

However, to make this work, we also need quantum-resistant signature algorithms to actually be viable. ECDSA signature verification costs 3000 gas. Quantum-resistant signatures are ... much, much larger and heavier to verify. We know of quantum-resistant hash-based signatures that are in the ~200k gas range to verify.

We also know of lattice-based quantum-resistant signatures. Today, these are extremely inefficient to verify. However, there is work on vectorized math precompiles that let you perform operations (+, *, %, dot product, also NTT / butterfly permutations) that are at the core of lattice math, and also of STARKs. This could greatly reduce the gas cost of lattice-based signatures to a similar range, and potentially go even lower.

The long-term fix is protocol-layer recursive signature and proof aggregation, which could reduce these gas overheads to near-zero.

## Proofs

Today, a ZK-SNARK costs ~300-500k gas. A quantum-resistant STARK is more like 10m gas. The latter is unacceptable for privacy protocols, L2s, and other users of proofs.

The solution, again, is protocol-layer recursive signature and proof aggregation. So let's talk about what this is.

In EIP-8141, transactions have the ability to include a "validation frame", during which signature verifications and similar operations are supposed to happen. Validation frames cannot access the outside world: they can only look at their calldata and return a value, and nothing else can look at their calldata.
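
Hash-based signatures come up in both the consensus-layer and EOA sections, and their verification is the kind of self-contained check a validation frame is meant to run: a pure function of its inputs that returns a single value. Below is a minimal sketch of a plain Winternitz one-time signature, assuming SHA-256 as a stand-in for whichever hash is eventually chosen (the post lists Poseidon2, Poseidon1 and BLAKE3 as candidates) and an illustrative Winternitz parameter W = 16. It omits the per-step tweaks and addresses that production variants such as WOTS+ add, and each key must sign only one message; real schemes combine many such keys (e.g. in a Merkle tree).

```python
import hashlib
import os

# Illustrative parameters (assumptions, not Ethereum's chosen values).
W = 16        # Winternitz parameter: base-16 digits, chains of W - 1 steps
N = 32        # hash output length in bytes (SHA-256 as a stand-in hash)
L1 = 64       # a 256-bit digest yields 64 base-16 digits
L2 = 3        # checksum digits: max checksum 64 * 15 = 960 < 16**3
L = L1 + L2   # number of hash chains per key


def H(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def chain(x: bytes, steps: int) -> bytes:
    """Apply H to x `steps` times (simplified: no per-step tweaks as in WOTS+)."""
    for _ in range(steps):
        x = H(x)
    return x


def digits(msg: bytes) -> list[int]:
    """Base-W digits of H(msg), then a checksum.

    Raising any message digit lowers the checksum, so forging another
    message would require inverting H on some chain."""
    d = []
    for byte in H(msg):
        d.extend((byte >> 4, byte & 0x0F))
    checksum = sum(W - 1 - v for v in d)
    cs = []
    for _ in range(L2):
        cs.append(checksum % W)
        checksum //= W
    return d + cs[::-1]


def keygen() -> tuple[list[bytes], list[bytes]]:
    """One-time key pair: the public key is the end of each hash chain."""
    sk = [os.urandom(N) for _ in range(L)]
    pk = [chain(s, W - 1) for s in sk]
    return sk, pk


def sign(sk: list[bytes], msg: bytes) -> list[bytes]:
    """Reveal each chain advanced by its digit. Never sign twice with one key."""
    return [chain(s, v) for s, v in zip(sk, digits(msg))]


def verify(pk: list[bytes], msg: bytes, sig: list[bytes]) -> bool:
    """Finish each chain; a valid signature lands exactly on the public key."""
    if len(sig) != L:
        return False
    return all(chain(s, W - 1 - v) == p for s, v, p in zip(sig, digits(msg), pk))
```

With these parameters a signature is 67 × 32 = 2144 bytes (against 65 bytes for an Ethereum ECDSA signature), and verification takes at most 67 × 15 = 1005 hash evaluations, which gives a feel for why such schemes are larger and heavier to verify onchain.
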

Validation frames are restricted this way so that it's possible to replace any validation frame (and its calldata) with a STARK that verifies it (potentially a single STARK for all the validation frames in a block). This way, a block could "contain" a thousand validation frames, each of which contains either a 3 kB signature or even a 256 kB proof, but those 3-256 MB (and the computation needed to verify them) would never come onchain. Instead, it would all get replaced by a proof verifying that the computation is correct.

Potentially, this proving does not even need to be done by the block builder. Instead, I envision that it happens at the mempool layer: every 500ms, each node could pass along the new valid transactions that it has seen, along with a proof verifying that they are all valid (including having validation frames that match their stated effects). The overhead is static: only one proof per 500ms.

Here's a post where I talk about this: ethresear.ch/t/recursive-st… firefly.social/post/farcaster…
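
The size and cost figures in the post can be checked with a few lines of arithmetic. This sketch only restates the post's own numbers (a thousand frames, 3 kB signatures, 256 kB proofs, one proof per 500 ms, and the quoted gas costs); kB and MB are taken as powers of 1000, and the constant and function names are illustrative, not from any spec.

```python
# Figures restated from the post; names are illustrative.
FRAMES_PER_BLOCK = 1_000        # "a thousand validation frames"
SIG_BYTES = 3_000               # "a 3 kB signature" (kB = 1000 bytes here)
PROOF_BYTES = 256_000           # "a 256 kB proof"
PROOF_INTERVAL_MS = 500         # "only one proof per 500ms"

ECDSA_GAS = 3_000               # ECDSA signature verification
HASH_SIG_GAS = 200_000          # ~200k gas for hash-based signatures
SNARK_GAS = (300_000, 500_000)  # ~300-500k gas for a ZK-SNARK
STARK_GAS = 10_000_000          # ~10m gas for a quantum-resistant STARK


def offchain_bytes(frames: int, bytes_per_frame: int) -> int:
    """Validation-frame data that aggregation would keep off-chain per block."""
    return frames * bytes_per_frame


def proofs_per_minute(interval_ms: int) -> int:
    """Mempool-layer proofs per minute, independent of transaction count."""
    return 60_000 // interval_ms


if __name__ == "__main__":
    print(offchain_bytes(FRAMES_PER_BLOCK, SIG_BYTES) / 1e6, "MB")    # 3.0 MB
    print(offchain_bytes(FRAMES_PER_BLOCK, PROOF_BYTES) / 1e6, "MB")  # 256.0 MB
    print(proofs_per_minute(PROOF_INTERVAL_MS), "proofs/minute")      # 120
    print(f"hash-based sig vs ECDSA: {HASH_SIG_GAS / ECDSA_GAS:.0f}x")  # 67x
    low, high = SNARK_GAS
    print(f"STARK vs SNARK: {STARK_GAS / high:.0f}x to {STARK_GAS / low:.0f}x")  # 20x to 33x
```

The off-chain volume scales with the number of frames, while the proof count per interval stays fixed, which is what makes the overhead static.
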