alex morris 🔥

@cto_ya_know

Chief Tribe Officer (CTO) @tribecodeAI teaching robots to press buttons so people can paint 🔥 also @KoiiFoundation long horizon / full self-driving is AGI

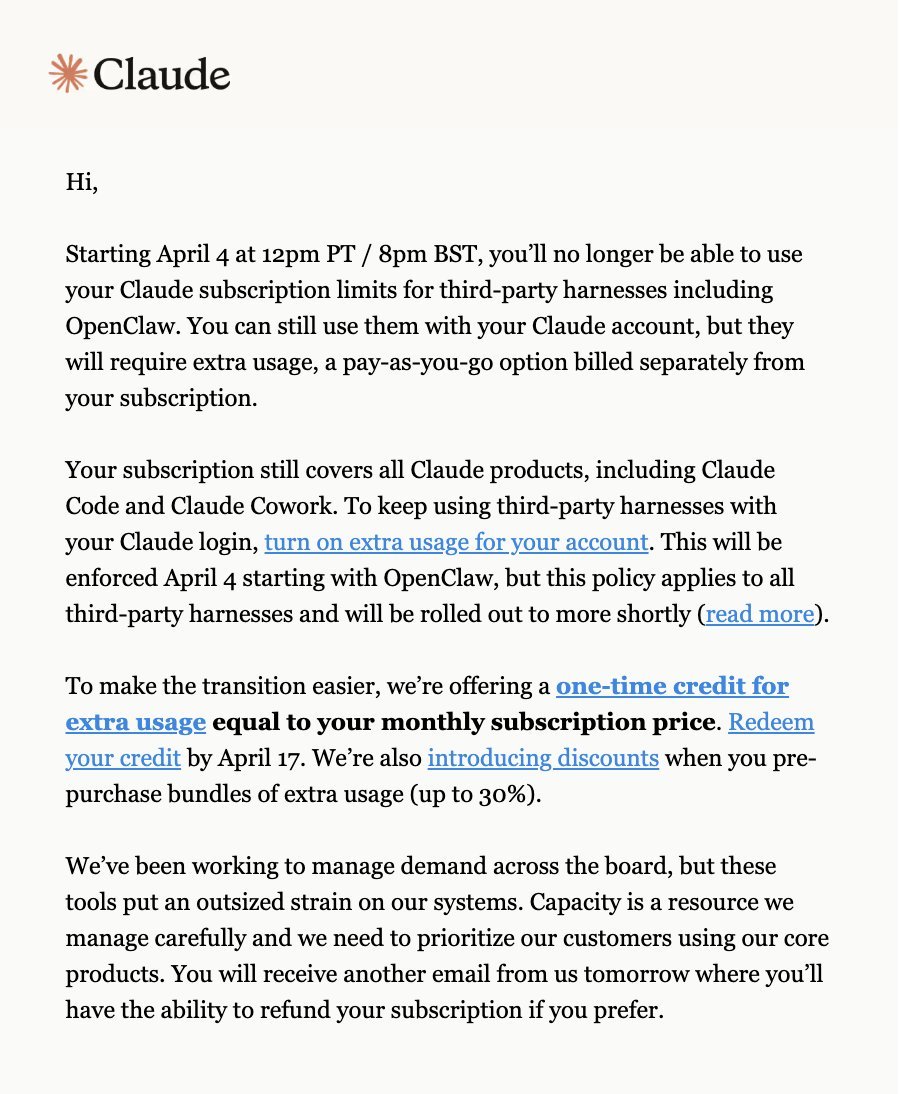

I AM GENUINELY SCARED ABOUT WHAT'S COMING NEXT IN AI.

Not because the robots are going to rule us. Because of the price tag.

Claude Max is $200. ChatGPT Pro is $250 a month. SuperGrok Heavy is $300. A year ago none of these plans existed.

Anthropic just leaked their next model. Claude Mythos. Their own blog post called it "by far the most powerful AI model we've ever developed." It won't be in any existing plan. API only. Premium pricing most people won't be able to touch.

Every new model costs more. Every new plan costs more. This isn't slowing down. It's accelerating.

AI is the biggest advantage anyone can have right now. The people using it are building faster, earning more, and pulling ahead every single day. That's not hype. That's just what's happening.

But right now the tools are still cheap. $20 a month gets you access to models that would have been unimaginable two years ago. That window is closing.

A year from now the best AI won't cost $20. It won't cost $200. It'll cost thousands. And only the people who can afford it will have access to the most powerful intelligence on earth.

The gap is coming. Between those who can afford the best AI and those who can't.

So lock in now. Learn these tools while they're accessible. Build with them while they're affordable. Stack as much value as you can while the playing field is still somewhat level. Because it won't be level for long.

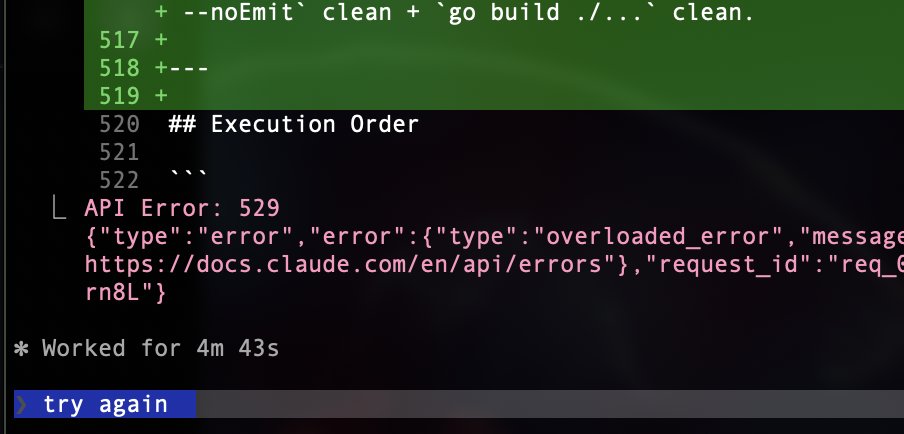

@trq212 Haha. This is a joke. My Max 5x plan, billed at $200/month, just topped out after ~30 minutes. Time to get Codex.

My feed is showing me a bunch of folks who tapped out their whole usage limits on Mon/Tue. Is this your experience? Please comment; I want to understand how widespread this is.

@trq212 Can you stop silently switching the model from Opus to Sonnet? That silent switch is the most user-hostile dark pattern I've ever seen.

Anthropic just nerfed Claude. This is the noose of AI tightening. Anyone without a Series A should probably start looking for a job. Your wealth mobility is now your token consumption, and it's about to start being too expensive to use.

@altryne @thursdai_pod I think very often these people are running into expensive prompt cache misses, e.g. when resuming a long conversation with a million-token context. Happy to debug if you have a particular example. But I'll also make a separate thread on avoiding that.