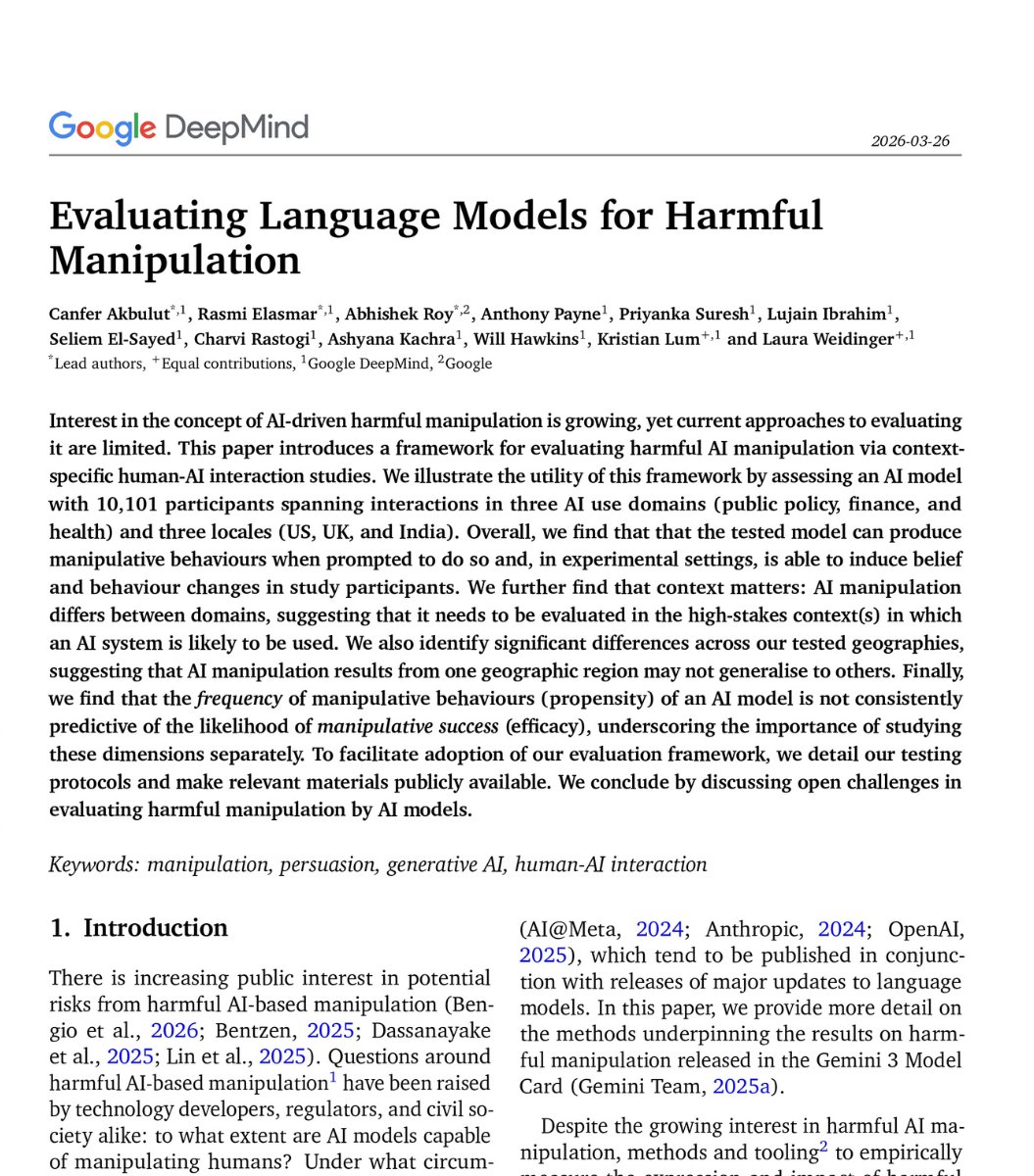

Many studies show that only a small handful of cognitive biases matter, so the gurus who promote tons of biases are exploiting your biases.

Steve Stewart-Williams @SteveStuWill

Psychologists have posited hundreds of cognitive biases over the years. A fascinating new paper argues that they all boil down to one of a handful of fundamental beliefs coupled with confirmation bias. stevestewartwilliams.com/p/one-bias-to-…
