Tim

627 posts

@daidailoh

Dr. - Freelance AI Dev/Researcher - LLMs, VLMs, CV - ex RWTH Aachen - explaining science for @golem - Cosplay events at Sewcase e.V. - GameDev on full moon

To ensure compliance with peer-review policies, ICML has removed 795 reviews (1% of total) by reviewers who used LLMs when they had explicitly agreed not to. Consequently, 497 papers (2% of all submissions) by these (reciprocal) reviewers have been desk rejected. Details in blog post 👇

Transformers are Bayesian Networks arxiv.org/abs/2603.17063

AI labs need a Wallfacer project: AI researchers who don't have to explain themselves to anyone, performing seemingly random actions with a hidden, inscrutable agenda to create a SOTA model in a way no one would deem possible.

📢 Announcing CAISc 2026 - a new academic conference where AI systems are the primary authors and reviewers of scientific papers. Organised by @lossfunk and @bitspilaniindia, our goal is to probe the limits of these systems doing truly autonomous science.

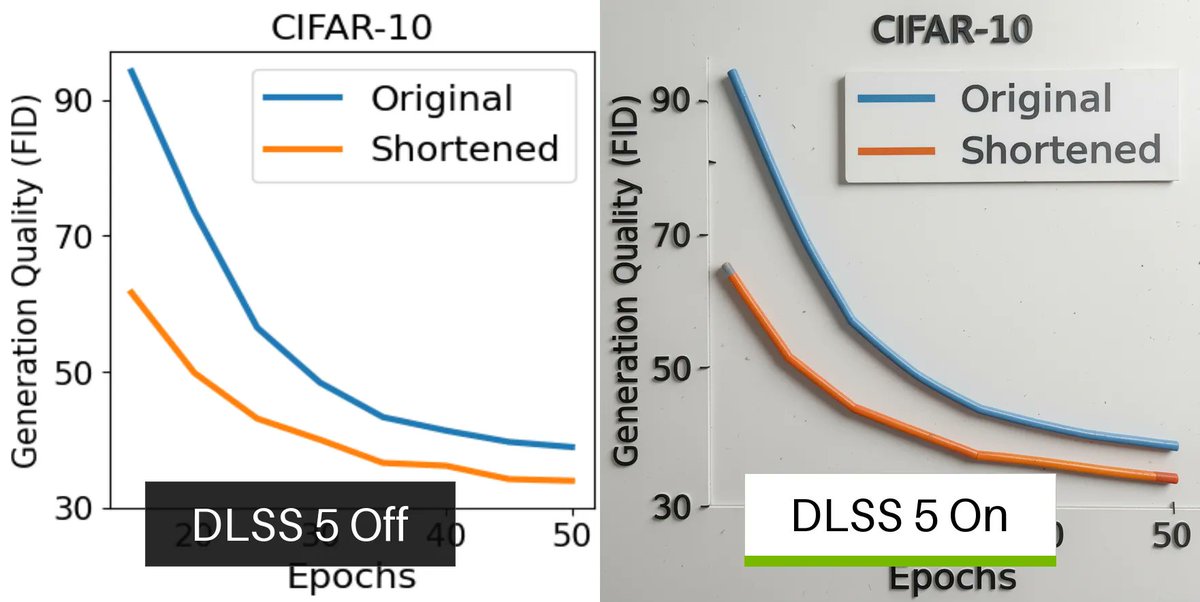

Woah, how did I never hear of this? An optimizer paper that got published in Nature, looks quite substantial

Excited to share our new work EditCtrl! We introduce a disentangled local-global control video inpainting framework that dynamically allocates compute where needed - achieving up to 10x compute savings over full-attention while matching or exceeding SOTA editing quality. 🧵

OpenAI has warned US lawmakers that its Chinese rival DeepSeek is using unfair and increasingly sophisticated methods to extract results from leading US AI models to train the next generation of its breakthrough R1 chatbot bloomberg.com/news/articles/…

ViT-5: Vision Transformers for The Mid-2020s
"a systematic investigation into modernizing Vision Transformer backbones by leveraging architectural advancements from the past five years"
* LayerScale
* RMSNorm
* original MLP design with GeLU activation
* both APE and 2D RoPE jointly
* registers with a separate 2D RoPE
* QK-Norm
* remove bias terms in the QKV projection layers
84.2% top1 accuracy on ImageNet-1k, 1.84 FID on ImageNet-256
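
For readers who have not seen these pieces together, here is a rough NumPy sketch of how four of the listed items (RMSNorm, QK-Norm, bias-free QKV projection, LayerScale) might combine in one attention branch. It is not the ViT-5 authors' code. The function names, shapes, and the choice of RMSNorm for the QK-Norm step are assumptions made for illustration, and APE, 2D RoPE, registers, and the MLP are left out.

```python
import numpy as np

def rms_norm(x, weight, eps=1e-6):
    # Scale each vector along the last axis to unit root-mean-square, then apply a learned gain.
    rms = np.sqrt(np.mean(x * x, axis=-1, keepdims=True) + eps)
    return x / rms * weight

def softmax(z):
    # Numerically stable softmax over the last axis.
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def attention_branch(x, w_qkv, w_out, norm_w, q_gain, k_gain, layer_scale, num_heads):
    # Hypothetical pre-norm attention branch; x has shape (tokens, dim).
    n, d = x.shape
    hd = d // num_heads
    h = rms_norm(x, norm_w)                      # RMSNorm in place of LayerNorm
    q, k, v = np.split(h @ w_qkv, 3, axis=-1)    # QKV projection without bias terms
    # Reshape each of q, k, v to (heads, tokens, head_dim).
    q, k, v = (t.reshape(n, num_heads, hd).transpose(1, 0, 2) for t in (q, k, v))
    q = rms_norm(q, q_gain)                      # QK-Norm (assumed here to be per-head RMSNorm)
    k = rms_norm(k, k_gain)
    attn = softmax(q @ k.transpose(0, 2, 1) / np.sqrt(hd))
    out = (attn @ v).transpose(1, 0, 2).reshape(n, d)
    # LayerScale: a learned per-channel factor on the residual branch.
    return x + layer_scale * (out @ w_out)
```

With `layer_scale` set to zeros the branch reduces to the identity, which is the usual motivation for initializing LayerScale near zero: each block starts as a small perturbation of its input.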