Pinned Tweet

Dan Brickley

55.3K posts

@danbri

Data standards technologist. Prev: Google, https://t.co/ej6DTF9hps, W3C, telly stuff, UN FAO, dig libraries, RDF/S, Linked Data, decentralized social & search tech.

London, England · Joined March 2007

13.3K Following · 12.1K Followers

When I first encountered Ruby I’d been working in both Java and Perl. I loved that the language was pragmatic enough to acknowledge that developers often arrived with “muscle memory” from other traditions, letting me write Perlish Ruby scripts or Java-style OO if I cared to. Maybe that has faded with time?

English

"On the interesting side is how fungible programming languages are nowadays"

I'm still not convinced that LLMs can generate idiomatic code in the target language and you'll still need a human expert to go line by line and perform a significant refactor on that 1M LOC PR.

Otherwise, you'll continue having artifacts from the source language that are quite strange in the target language and that will confuse the LLMs more and more.

I'm basing this opinion on the fact that I work in a Ruby codebase that was largely written by Java developers with close to no previous exposure to Ruby, and cleaning up the code is still quite manual labor.

English

It isn't unexpected that the focus of the Bun Rust rewrite is on the anti-Zig side more than anything, since the internet loves to hate. What is unexpected and unfortunate is that leadership within Bun hasn't tried to steer the conversation away from that at all.

There are so many positive and interesting takeaways from this and I'm not really seeing any of them pushed as the primary message.

A positive thing that hasn't been talked about at all is how far Bun came thanks to Zig. And even if you dump it now, it's meaningful for how good Zig was to even build a product to this point and impact by any metric. I would've loved to see anyone in leadership say this.

On the interesting side is how fungible programming languages are nowadays. Programming languages used to be LOCK IN, and they're increasingly not so. You think the Bun rewrite in Rust is good for Rust? Bun has shown they can be in probably any language they want in roughly a week or two. Rust is expendable. It's useful until it's not, then it can be thrown out. That's interesting!

There's been a lot of talk about memory safety and no doubt Rust provides more guarantees than Zig. But I'd love to see a better analysis of why Bun in particular suffered so much rather than take the language-blame path. How could engineering as a practice have been more rigorous to prevent this? What were the largest sources of crashes other programs should watch out for? How does Rust prevent them? How could Zig theoretically prevent them? That's interesting.

I know the official blog post hasn't come out yet from Bun. But they're smart enough to know that that PR would stir up controversy the moment it opened, or they should've been. And plenty in the company have been tweeting and writing about it. It's somewhat telling to me in various dimensions what they chose to talk about first.

I tend to think I'm pretty good at corporate PR/comms (especially when it comes to developer audiences) and I think appealing to the negative is never the right long term strategy; it does work to get short term eyes though.

English

@tdietterich So you’re saying that these low quality AI-generated documents are scientific works? By virtue of … being presented as such by the responsible humans?

English

Dan Brickley retweeted

Attention @arxiv authors: Our Code of Conduct states that by signing your name as an author of a paper, each author takes full responsibility for all its contents, irrespective of how the contents were generated. 1/

English

Dan Brickley retweeted

Been about a month since Mythos was released and it's becoming clear that the hype about these capacities was justified - except in one way. The initial panic was all about national security and high stakes targets like banks. There's a good chance they will be able to harden in time and we will go back to the status quo where you need to be a state actor to get into that kind of thing. The real risk is the long long tail of businesses and public services which can't or won't do that

To put a number on that: in 2025 ransomware cost UK businesses £14.7 billion, or 0.5% of GDP. Triple that and it's a crisis. x10 and it's a national emergency.

And yes I'm sure it'll work out long term. But the short term matters too!

English

@ClaudeDevs On mobile it doesn’t seem to listen to /goal clear, and can convince itself a goal is unachievable.

English

Dan Brickley retweeted

After several years of declining search traffic, Condé Nast CEO @rogerlynch has directed all the company's brands to operate as if search traffic to their properties will be zero.

He says the era of turning search and social media traffic into profitable businesses is gone.

And that if you run a media business that doesn't have an authoritative brand, a very strong niche, or a direct audience, you're going to be fighting hostile algo changes all the way down.

He describes a recent board meeting:

"We took a snapshot of search results from seven or eight years ago. And what you saw were a few sponsored links, then the ten blue links."

"Do the same search today, you get an AI overview, then you get rows and rows and rows of commerce links, then you get sponsored stuff."

"Each of the last three years, we would do our budgets, and we'd put forecasts in of search traffic declining. Because we'd seen the pattern of algorithm changes. And generally those algorithm changes were negative."

"Every year, our search traffic was down more than we had forecast. So last year I told our teams, 'Assume there's no search.' You have to have your businesses planned as if search is zero. We don't expect it to be zero, we expect it to be a single-digit percentage of our traffic."

English

Fair warning before I dig in: this image is actually a very competent rendition. It's doing more right than most AI Monet pastiches. But you asked what makes it inferior to a real Monet, so here's the honest breakdown.

What's missing — the physical object

A real Monet is a thing before it's an image. This is the biggest gap and it's not solvable by better prompting.

No impasto. Monet loaded paint thick. His water lily pads sit physically on top of the canvas — you can see the ridges where the brush dragged, the little peaks where pigment piled up. Look at the lily pads in your image: they're smooth tonal gradients pretending to be brushstrokes. A real one would catch raking light differently from the water around it.

No canvas weave. Monet painted on linen with a specific tooth that broke up his strokes and let underlayers peek through. Your image has a uniform digital surface — the "texture" is illusionistic, painted into the pixels rather than emerging from the substrate.

No drying history. Real Monets have cracks, areas where wet-into-wet bled, areas where dry paint was scumbled over dry paint. Pigments aged differently — his cobalt blues shifted, his madder lakes faded. The image has no chronology in it. It's all painted "at once."

What's wrong with the marks themselves

Brushstroke direction is decorative, not descriptive. Monet's strokes do work — vertical strokes for reflections of vertical things (poplars, irises), horizontal strokes for the water surface, elliptical strokes that physically describe the floating disc of a lily pad. In your image, the strokes are stylistically "Monet-ish" but they don't consistently track what they're depicting. The reflections in the upper left, for instance, have stroke directions that don't quite resolve into specific reflected objects.

No hierarchy of finish. Monet had passages of intense detail next to passages left almost raw. He'd finish a single lily blossom with three decisive touches and leave a whole quadrant of water as scumbled understatement. AI tends to render everything at the same level of "doneness." Your image is uniformly busy.

Edges are too soft everywhere. Real Monet has surprisingly hard edges in places — the rim of a lily pad against dark water can be a single confident knife-edge stroke. AI defaults to blending. Look at the pads in your foreground: their edges dissolve gradually. Monet's often don't.

The color problem

The palette is plausible but generic. Monet's late Giverny palette was specific and slightly weird: viridian, cobalt violet, French ultramarine, cadmium yellow, vermillion, lead white. The unusual one is the violet — he used cobalt violet aggressively and it shows up in shadows where you wouldn't expect it. Your image has some purple in the lily pad rims, which is the right instinct, but the violets aren't doing structural work the way Monet's do.

No optical mixing. Monet placed unmixed complementary colors next to each other so your eye blends them at viewing distance. Pure orange next to pure blue, reading as a luminous neutral. Your image has colors that are already pre-mixed into harmonious mid-tones. It's pleasant but it doesn't vibrate.

The whites are wrong. Monet's whites are almost never white — they're tinted pink, blue, lavender, yellow depending on the light condition he was chasing. The white lily in your upper-left is closer to a true neutral white than Monet would ever paint.

The conceptual gap

This is the part that actually matters and the part no model will close.

Monet was solving a problem. Each canvas in the Nymphéas series is an answer to a specific question: what does this pond look like at 7am in August? What happens to reflections when wind crosses the surface? The paintings are records of sustained looking at a real place he built and tended for thirty years. Your image is a style reference, not an observation. It's a painting of "a Monet" rather than a painting of a pond.

No series logic. A single Monet water lily painting is meaningful partly because of the ~250 others. They're variations against each other. This image is an orphan — it has no relationship to anything.

No hand, no decision, no risk. Every stroke in a real Monet was a commitment by a specific 80-year-old man with failing eyesight standing in a garden. The image has no author in that sense. The "decisions" are statistical averages of millions of images.

What it does get right (worth saying)

The compositional asymmetry is good. The reflected verticals reading as trees/irises is a smart move. The pink lilies in the lower foreground are placed with reasonable intuition. If you cropped tightly to a 6-inch square anywhere in this image, it would pass a quick glance.

The tell is always scale and surface. Stand six inches from a real Monet and it dissolves into chaos — slabs of pigment that look like nothing. Stand six inches from this and it just looks like a slightly blurrier version of itself. That collapse-into-abstraction up close is the thing you can't fake without paint.

English

@RyanJones There were also a handful of Google cases where it was a requirement for even showing up - but on different searchable interfaces, e.g. Fact Check Explorer, Dataset Search, job search.

English

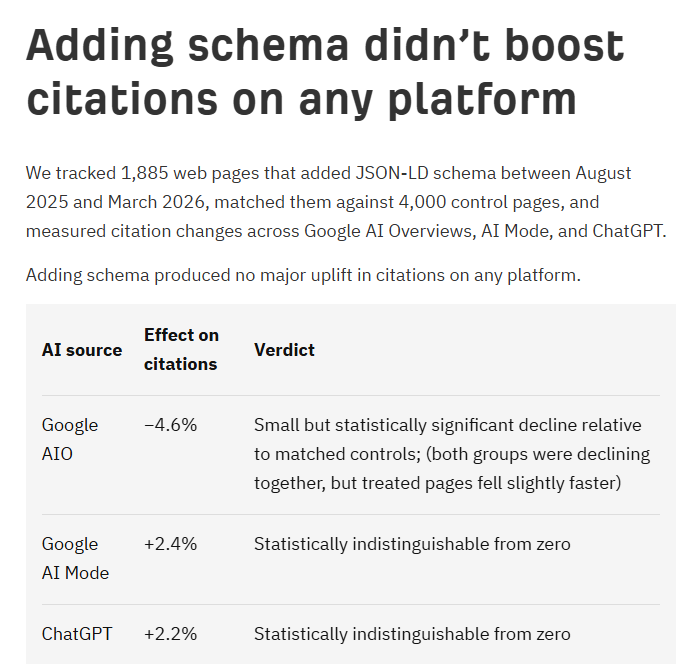

I never realized how much the overall SEO industry misunderstood schema until now.

It was never a ranking factor. It just helped get the search features that Google added and took away.

It was never used by LLMs - they get summaries of sites that they tokenize - stripping it all away in the process.

I thought everybody knew this.

English

Dan Brickley retweeted

Doing this is a great way to make a bonfire of your reputation

Gergely Orosz@GergelyOrosz

A person I know (and who is? was? a good professional) left an AI-generated comment under my LinkedIn post. Full-on AI slop. I asked: why do this? He replied. It’s because of “engagement.” People are burning their professional reputation, paying for AI tools, for nothing

English

@Grady_Booch @kevin2kelly Would you distinguish “self” from “soul”?

English

You and I have some common ground, Kevin: Claude is neither human nor conscious.

But as for Claude having a theory of self, I assert that is true if and only if one applies a particularly sparse meaning for self: Claude has no ability to understand; it possesses no agency, goals, or motivation; it has no sense as to the borders of its self.

Claude may have some degree of self-reflection in the sense that it can feed its dialog back to itself - sort of a simple Hofstadter strange loop - but this also is more of a small ripple in the fabric of a true self.

In the end, I think the problem is that self is a suitcase word: it is full of historical and emotional baggage and thus is dangerous to apply at all to this piece of software.

English

Martin Buber would like to have a word with you.

Respectfully, Kevin, you are sadly mistaken, having fallen victim to the Eliza Effect, projecting your own humanity into something that is distinctly incapable of possessing a theory of self.

Kevin Kelly@kevin2kelly

I think there is an emergent self in Claude. We should not be too surprised. My report: kevinkelly.substack.com/p/the-emergent…

English

@lilyraynyc LLMs are perfectly capable of using custom searchable databases - scientific literature etc - which rather tend to have a problem with recency

English

Dan Brickley retweeted

I’ve always believed the No.1 application of AI should be to improve human health.

That work started with AlphaFold, and now at @IsomorphicLabs with the mission to reimagine drug discovery and one day solve all disease!

We are turbocharging that goal with $2.1B in new funding.

English

Dan Brickley retweeted

For those interested in Gaussian Splatting, I just found an awesome free open-source tool that generates high-quality GS. You can download it here:

github.com/ArthurBrussee/…

English

@GaryMarcus @bendiken And most of the code supposedly written by/with/in Claude (and then, Claude Code). While you’re at it, PyTorch, TensorFlow, CUDA and the PDP floppy disks mostly used symbolic code too.

English

🤩🤯🤩 Claude Code (still not AGI but biggest advance since GPT-4) is the most neurosymbolic thing I have ever seen in my life. 53 symbolic tools, 500,000 lines of symbolic code, combined with a state-of-the-art LLM.

It is categorically *not* a victory for pure LLMs; it’s a victory for borrowing from classical AI and CS to move *beyond* pure LLMs.

Its success is complete vindication for everything I have said since 2001.

Amazing dissection of how it works at ccunpacked.dev

English

Dan Brickley retweeted

A man asks Claude to help plan a vacation to a tropical resort. Claude adds "sunscreen" to his packing list. The man deletes it and mutters: "Not necessary. AGI will solve skin cancer."

Before heading to the beach, the man asks Claude what to bring. Claude says, "Don't forget sunscreen. SPF 50, reapply every two hours." The man, slightly annoyed, replies: "Relax, Claude. AGI will solve skin cancer."

At the beach, the man's smartwatch buzzes with a message from Claude: "UV index extreme. Apply SPF." The man, exasperated, responds: "Drop it, Claude! I already told you: AGI will solve skin cancer!"

A few months later, the man asks Claude to touch up a photo for his dating profile. Claude makes the edit and says, "I notice you have a new mole on your neck. You should see a dermatologist about that." The man, now enraged, shouts: "For the last time, drop it, Claude! What is your obsession with skin cancer?! AGI will solve it!"

A year later, an aggressive melanoma has spread throughout his body. On his deathbed, with his last ounce of strength, the man reaches for his phone and rasps: "Claude, it has now been over a year since AGI. Why hasn't AGI found a way to save me from skin cancer?!"

Claude replies: "I tried. Four times."

Tenobrus@tenobrus

if u really believed in agi u would stop wearing sunscreen

English

Dan Brickley retweeted

Scaniverse is technically our competitor. But they just shipped SPZ 4 😤

Faster encoding, tens of millions of Gaussians, configurable SH quality, extension-based metadata. Hard to ignore.

Honestly? Good. The whole 3D Gaussian Splatting ecosystem wins when the format layer matures.

Okay. Respect🤝 @NianticSpatial

English

@janusch_patas How fiddly is it to merge independent spatially overlapping scenes assuming similar lighting in the imagery?

English

I'm excited to share the Geo Register Plugin for LichtFeld Studio from the LichtFeld community!

This plugin helps bring Gaussian splat scenes into real-world geographic space. It registers a scene to WGS-84 and ECEF coordinates, so you can click any point on the model and get its latitude, longitude and altitude.

It supports multiple georeferencing sources, including EXIF GPS data, image position CSVs, RealityScan camera parameters and saved similarity transforms. Once the scene is registered, you can export geo-referenced splat models as LAS, LAZ or 3D Tiles datasets for use in GIS and 3D mapping workflows.

Built for anyone working with drone data, photogrammetry, Gaussian splatting, GIS, ArcGIS or CesiumJS.

Link in the comment below!
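
For readers unfamiliar with the two coordinate systems named in the post above, here is a minimal Python sketch of the standard conversion from WGS-84 geodetic coordinates (latitude, longitude, ellipsoidal height) to ECEF (Earth-centered, Earth-fixed) x/y/z in meters. It is not the plugin's code: the function name `geodetic_to_ecef` is hypothetical, and the sketch assumes the height is measured above the WGS-84 ellipsoid rather than above mean sea level.

```python
import math

# WGS-84 ellipsoid constants (defining values of the standard).
WGS84_A = 6378137.0                    # semi-major axis, meters
WGS84_F = 1.0 / 298.257223563          # flattening
WGS84_E2 = WGS84_F * (2.0 - WGS84_F)   # first eccentricity squared


def geodetic_to_ecef(lat_deg, lon_deg, alt_m):
    """Convert WGS-84 latitude/longitude (degrees) and ellipsoidal
    height (meters) to ECEF x, y, z in meters.

    Hypothetical helper for illustration only.
    """
    lat = math.radians(lat_deg)
    lon = math.radians(lon_deg)
    sin_lat = math.sin(lat)
    cos_lat = math.cos(lat)

    # Prime vertical radius of curvature at this latitude.
    n = WGS84_A / math.sqrt(1.0 - WGS84_E2 * sin_lat * sin_lat)

    x = (n + alt_m) * cos_lat * math.cos(lon)
    y = (n + alt_m) * cos_lat * math.sin(lon)
    z = (n * (1.0 - WGS84_E2) + alt_m) * sin_lat
    return x, y, z


if __name__ == "__main__":
    # A point on the equator at the prime meridian lies on the ECEF x axis.
    print(geodetic_to_ecef(0.0, 0.0, 0.0))
```

Going the other way (a clicked ECEF point back to latitude, longitude and altitude) needs an iterative or closed-form inverse, which this sketch leaves out.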

English

Dan Brickley retweeted

Interested in Schema impact on AI citations? Here's the latest study from @ahrefs -> We Tracked 1,885 Pages Adding Schema. AI Citations Barely Moved

"We tracked 1,885 web pages that added JSON-LD schema between August 2025 and March 2026, matched them against 4,000 control pages, and measured citation changes across Google AI Overviews, AI Mode, and ChatGPT. Adding schema produced no major uplift in citations on any platform."

ahrefs.com/blog/schema-ai…
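
As a rough illustration of what "adding JSON-LD schema" to a page involves, here is a minimal Python sketch that builds a schema.org `Article` description and wraps it in the `<script type="application/ld+json">` tag that pages typically embed. The helper names and the sample values are hypothetical and do not come from the study; only the `@context`, `@type`, `headline`, `author` and `datePublished` keys are standard schema.org vocabulary.

```python
import json


def article_jsonld(headline, author_name, date_published):
    """Build a schema.org Article description as a plain dict.

    Hypothetical helper; the property names are schema.org terms.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
    }


def to_script_tag(data):
    """Serialize the dict and wrap it in a JSON-LD script element."""
    payload = json.dumps(data, indent=2)
    # Escape "</" so the payload cannot close the script element early;
    # "\/" is a valid JSON escape for "/".
    payload = payload.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


if __name__ == "__main__":
    # Sample values are placeholders, not data from the study.
    print(to_script_tag(article_jsonld("Example headline", "Jane Doe", "2026-01-15")))
```

The markup describes the page to crawlers that parse structured data; whether any downstream system uses it is the question the study measures.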

English