Daniel Chernenkov

292 posts

@danielckv

📸 Tech Entrepreneur & Urban Explorer ✨ 2x Post Exits. 🧠 Staying Foolish, Building the Future.

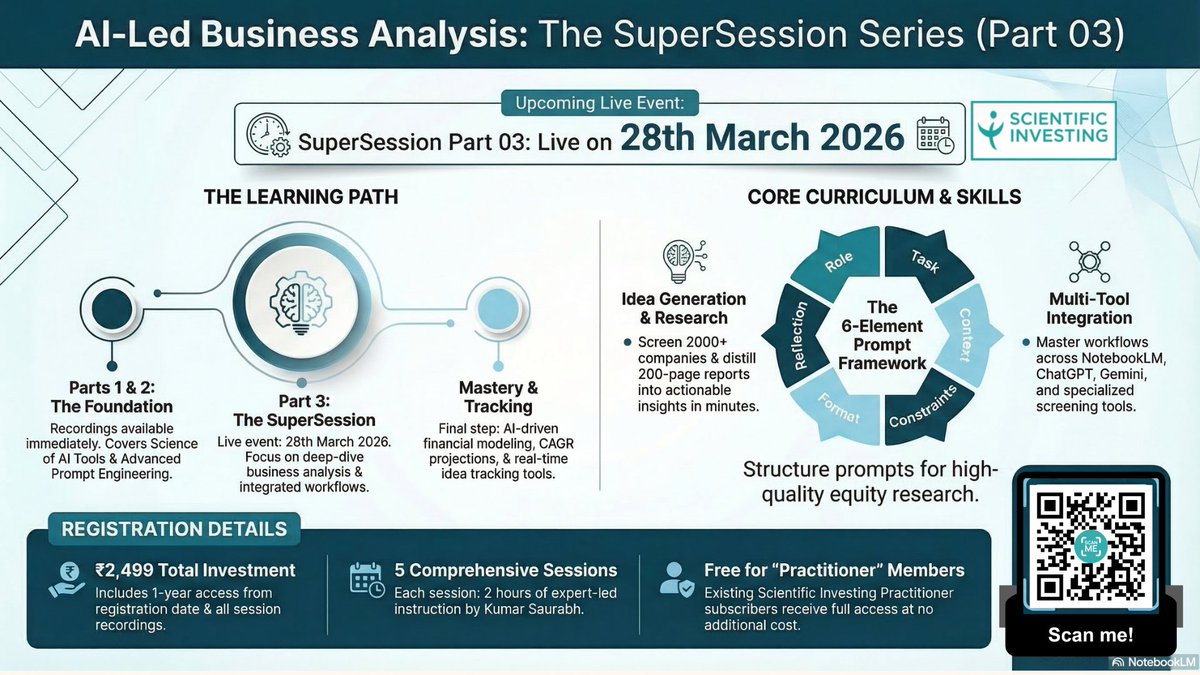

You need to have started using OpenClaw yesterday. Here's the web's easiest setup guide + 5 killer use cases:

1. Live knowledge bot (38:06)
2. Automated standups (47:47)
3. Push-based comp intel (54:46)
4. VOC reporting (1:13:26)
5. Auto bug routing (1:24:30)

@danielckv @ScharoMaroof Do you condemn this attack that has rendered Tehran a long-term cancer cluster for the children and grandchildren of the Persian people you claim to want to "liberate"? Yes or No. Do you condemn the act?

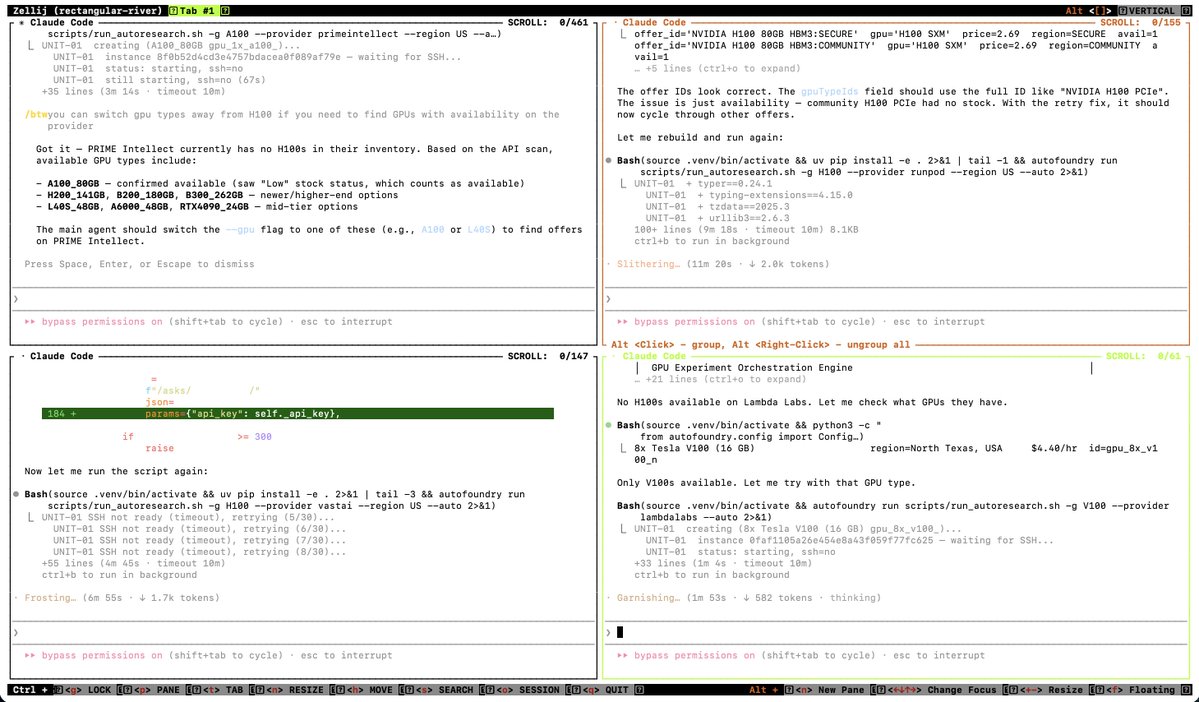

Expectation: the age of the IDE is over.

Reality: we’re going to need a bigger IDE (imo). It just looks very different because humans now move upwards and program at a higher level: the basic unit of interest is not one file but one agent. It’s still programming.